Your team ships a feature on schedule. The code is clean. The pull requests were reviewed. The test suite is green. Then users try it and ask why the workflow feels wrong.

That failure usually isn't about effort. It's about mixing up verification and validation.

In plain terms, verification checks whether your team built the product correctly against specs and standards. Validation checks whether the thing you built effectively solves the user's problem in its intended environment. If you're leading an AI, SaaS, or fintech team, that distinction decides whether you catch rework in sprint planning or in production.

Validation vs Verification: The Core Difference and Why It Matters

TL;DR

- Verification asks, “Are we building the product right?”

- Validation asks, “Are we building the right product?”

- Verification happens earlier and is mostly about specs, code quality, architecture, and process discipline.

- Validation happens on working software or models and is mostly about user outcomes, behavior, and business fit.

- For AI teams, you need both. A model can be perfectly implemented and still fail in production because the live data or user context changed.

The fastest way to explain validation vs verification in software testing is this: verification protects engineering quality; validation protects product value.

A CTO usually feels the pain from both sides. Miss verification, and defects slip downstream into expensive fixes. Miss validation, and the team delivers something polished that customers don't want, don't trust, or can't use.

Who should care

This matters most if you're:

- A CTO or VP Engineering deciding where QA, platform, and MLOps effort should go

- A product lead trying to avoid shipping technically correct features with weak adoption

- An engineering manager or Head of AI structuring release criteria for software and models

- A founder who can't afford weeks of avoidable rework after launch

Practical rule: Treat verification artifacts and validation evidence as different classes of proof. Teams that blur them usually struggle during release reviews, postmortems, and compliance checks. If your team also needs cleaner records for audits, this guide on avoiding common audit evidence pitfalls is worth bookmarking.

One more nuance matters. Verification and validation aren't rival phases. They are separate controls answering separate risks. High-functioning teams run them continuously, but they assign different owners, different evidence, and different exit criteria.

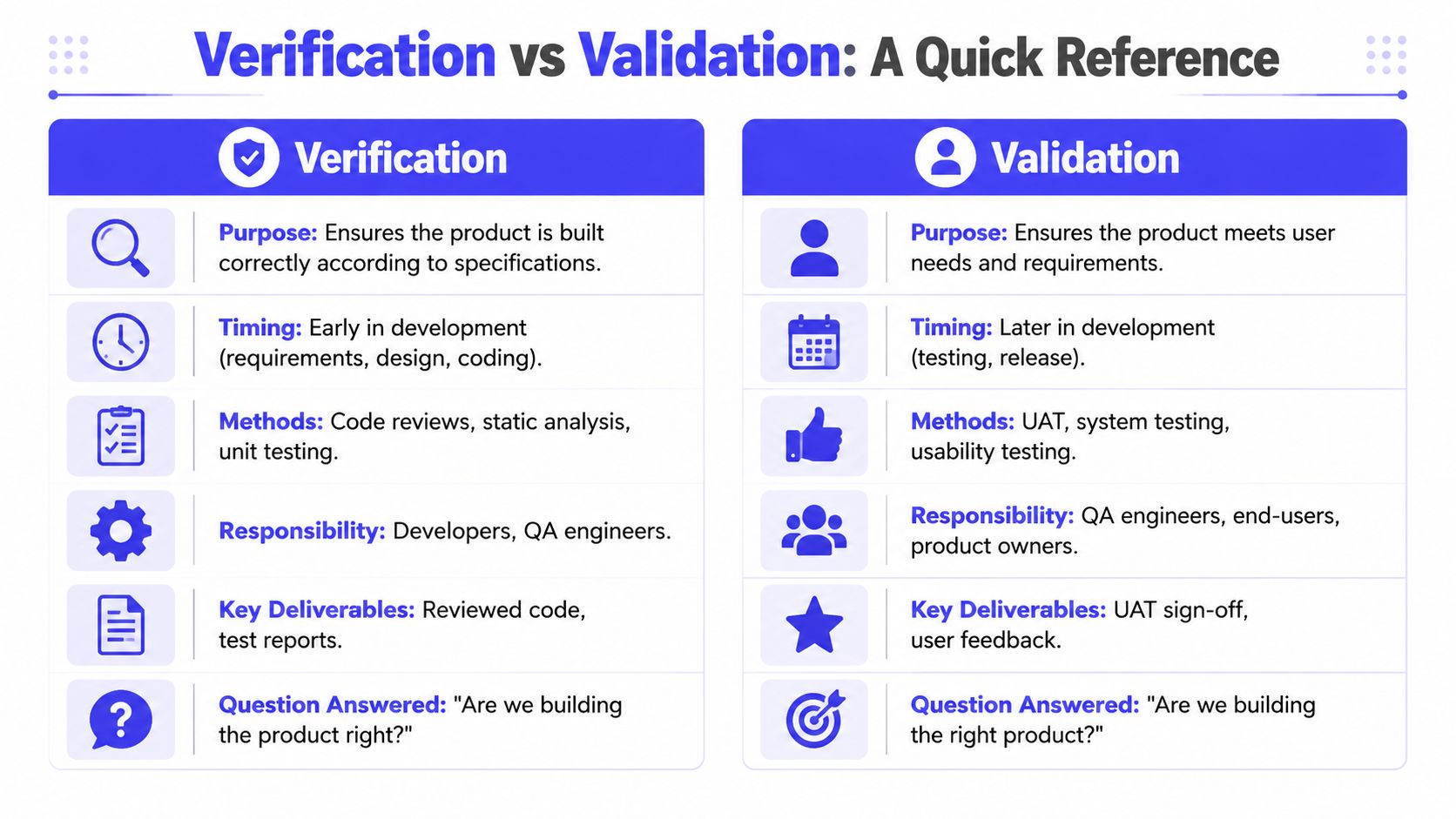

Verification vs Validation At a Glance

Here's the quick reference I use when aligning engineering, QA, and product.

| Dimension | Verification | Validation |
|---|---|---|
| Primary question | Are we building the product right? | Are we building the right product? |
| Purpose | Check conformance to requirements, design, and standards | Check fitness for user needs and business use |
| Typical timing | Early and throughout development | On running software or working increments, closer to release and in production feedback loops |
| Common methods | Requirements reviews, design walkthroughs, code review, static analysis, schema checks | System testing, user acceptance testing, usability testing, canary checks, A/B evaluation |
| Typical owners | Developers, senior engineers, QA, architects, security reviewers | QA, product managers, end users, customer-facing teams, data scientists for model evaluation |
| Main artifacts | Reviewed specs, pull request notes, lint reports, static scan reports, architecture sign-off | UAT sign-off, session notes, bug reports, user feedback, production evaluation results |

Why this difference affects budget

The table looks academic until you map it to cost. Early verification is cheaper because it catches mistakes before they spread across downstream environments, integrations, and release plans.

Industry research summarized by Unosquare notes that fixing defects pre-production can be up to 100 times cheaper than post-release, and cites a 2002 NIST estimate of $59.5 billion annually as the cost of poor software quality in the U.S. economy. The same summary also points to IBM's staged defect-cost model, where a defect can move from $1 in verification to $1,000 in production. That's a useful framing for resource planning, even if your own numbers differ by stack and team size. See the verification and validation cost comparison summary.

A simple operating model

If your team is debating whether a test belongs under verification or validation, ask two questions:

- Are we checking against a spec, rule, or engineering standard? That's usually verification.

- Are we checking against user value, real usage, or business behavior? That's validation.

A green CI pipeline proves less than many teams think. It proves conformance to the checks you wrote. It doesn't prove that users want the outcome.

That distinction keeps release meetings honest.

How V&V Applies to a Standard Software Feature

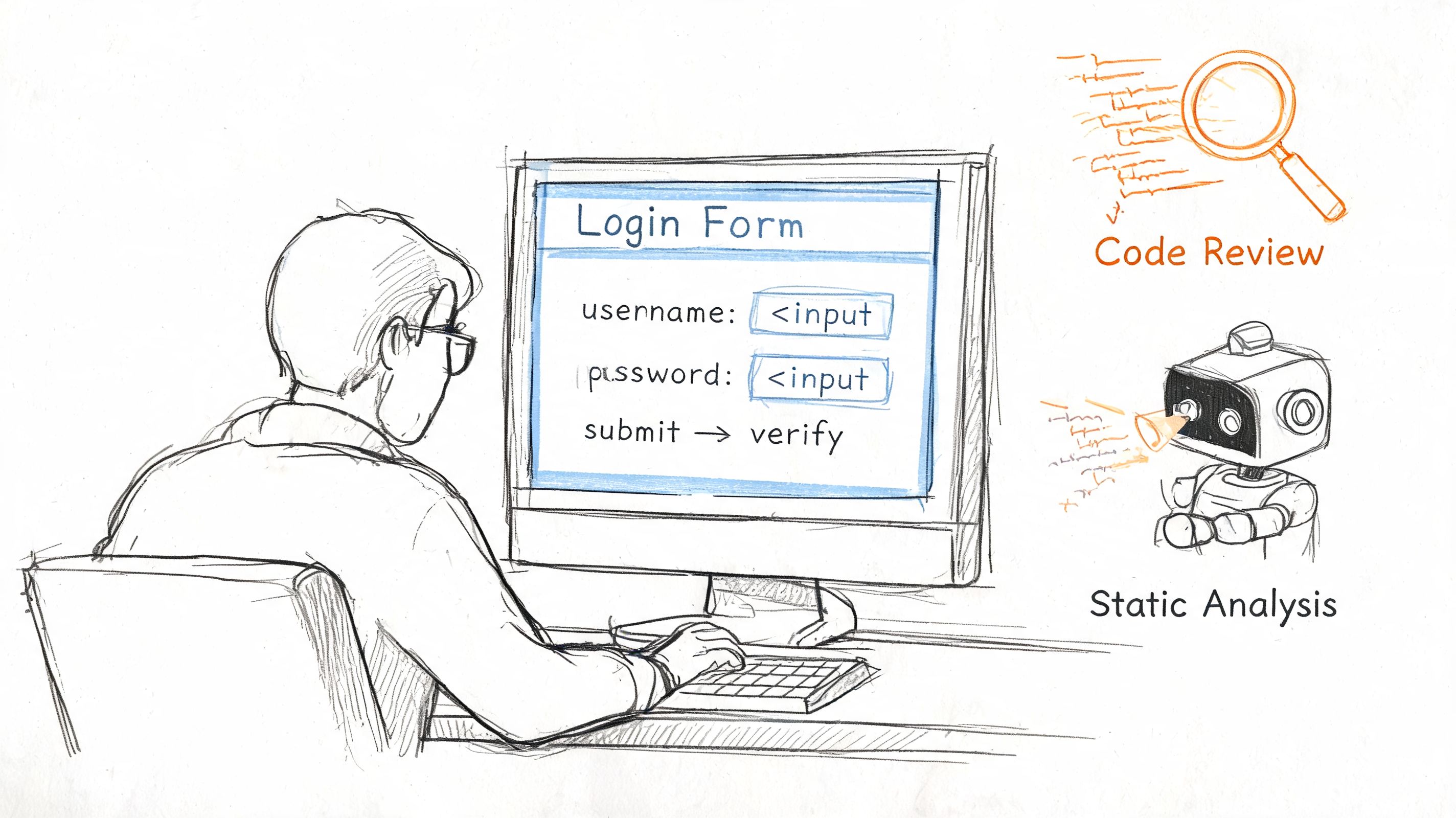

Take a simple example. Your team is building a login flow for a SaaS app with email-and-password sign-in, password reset, and lockout behavior after repeated failed attempts.

What verification looks like

Before anyone worries about live user behavior, the team verifies that the feature matches the intended design and implementation standards.

A senior engineer reviews the pull request. They check password handling, error states, naming conventions, and whether the lockout logic matches the requirement. A static analysis tool runs in CI. The frontend team compares the form states against the approved Figma screens. Security reviewers confirm the reset flow doesn't expose account existence through careless messaging.

A lightweight scorecard helps here:

| Verification check | Owner | Evidence |
|---|---|---|
| Requirement matches implementation | Engineer or QA lead | PR comments and linked ticket |
| UI states match approved design | Frontend engineer or designer | Screenshot review |
| Static checks pass | CI pipeline | Build log |
| Error handling follows security rules | Security reviewer or senior engineer | Review notes |

This is still verification even if some checks are automated. The common thread is that the team is checking whether the feature was built correctly according to known expectations.
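As an illustration of an automated verification check, here is a minimal sketch of a spec-conformance test for the lockout rule. The threshold of five attempts and the `LoginAttemptTracker` helper are assumptions made for this example; the article does not specify either. The assertions check that the rule behaves as written, not whether users find the lockout experience understandable.

```python
from dataclasses import dataclass

MAX_FAILED_ATTEMPTS = 5  # assumed requirement; the article does not state a threshold


@dataclass
class LoginAttemptTracker:
    """Tracks consecutive failed logins for one account (hypothetical helper)."""

    max_failures: int = MAX_FAILED_ATTEMPTS
    failures: int = 0

    @property
    def locked(self) -> bool:
        return self.failures >= self.max_failures

    def record(self, success: bool) -> None:
        if self.locked:
            return  # a locked account ignores further attempts until it is reset
        self.failures = 0 if success else self.failures + 1


def verify_lockout_rule() -> None:
    """Spec-conformance checks: these verify the rule, not its usability."""
    tracker = LoginAttemptTracker()
    for _ in range(MAX_FAILED_ATTEMPTS - 1):
        tracker.record(success=False)
    assert not tracker.locked  # one attempt below the threshold stays unlocked

    tracker.record(success=False)
    assert tracker.locked  # the threshold attempt locks the account

    tracker.record(success=True)
    assert tracker.locked  # a correct password does not bypass the lock

    fresh = LoginAttemptTracker()
    fresh.record(success=False)
    fresh.record(success=True)
    assert fresh.failures == 0  # a successful login resets the counter


verify_lockout_rule()
```

A test like this belongs in CI next to the static checks. Whether five attempts is the right number for real users is a validation question that no unit test can answer.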

What validation looks like

Now the feature is running in a staging or pre-release environment. Validation starts when real behavior matters.

A QA engineer tests complete sign-up and login journeys. A product manager asks a non-technical teammate to reset a password without instructions. Someone intentionally enters a wrong password several times and checks whether the lockout experience is understandable, not just technically correct. The team confirms whether the flow supports the business need of secure, low-friction user access.

If a user can't tell why access failed, the feature may pass verification and still fail validation.

Mini-case using the same feature

I've seen teams verify login forms very well and still miss obvious validation issues:

- Case one. The code and UI matched the spec, but the password reset email language confused users, so support tickets spiked.

- Case two. The lockout rule worked exactly as designed, but product hadn't validated how often legitimate users mistyped passwords on mobile.

- Case three. Error handling was secure, but the sign-in path added friction for sales demos because test accounts expired too aggressively.

All three are common because teams often treat “working” as “successful.” It isn't.

A practical split of responsibilities

For a standard feature, a clean split usually works well:

- Developers own code reviews, static checks, unit-level conformance, and implementation fidelity.

- QA owns scenario coverage, negative testing, and release confidence.

- Product owns whether the workflow solves the user's problem.

- Design owns whether the interaction is understandable.

That operating model scales because each group answers a different question.

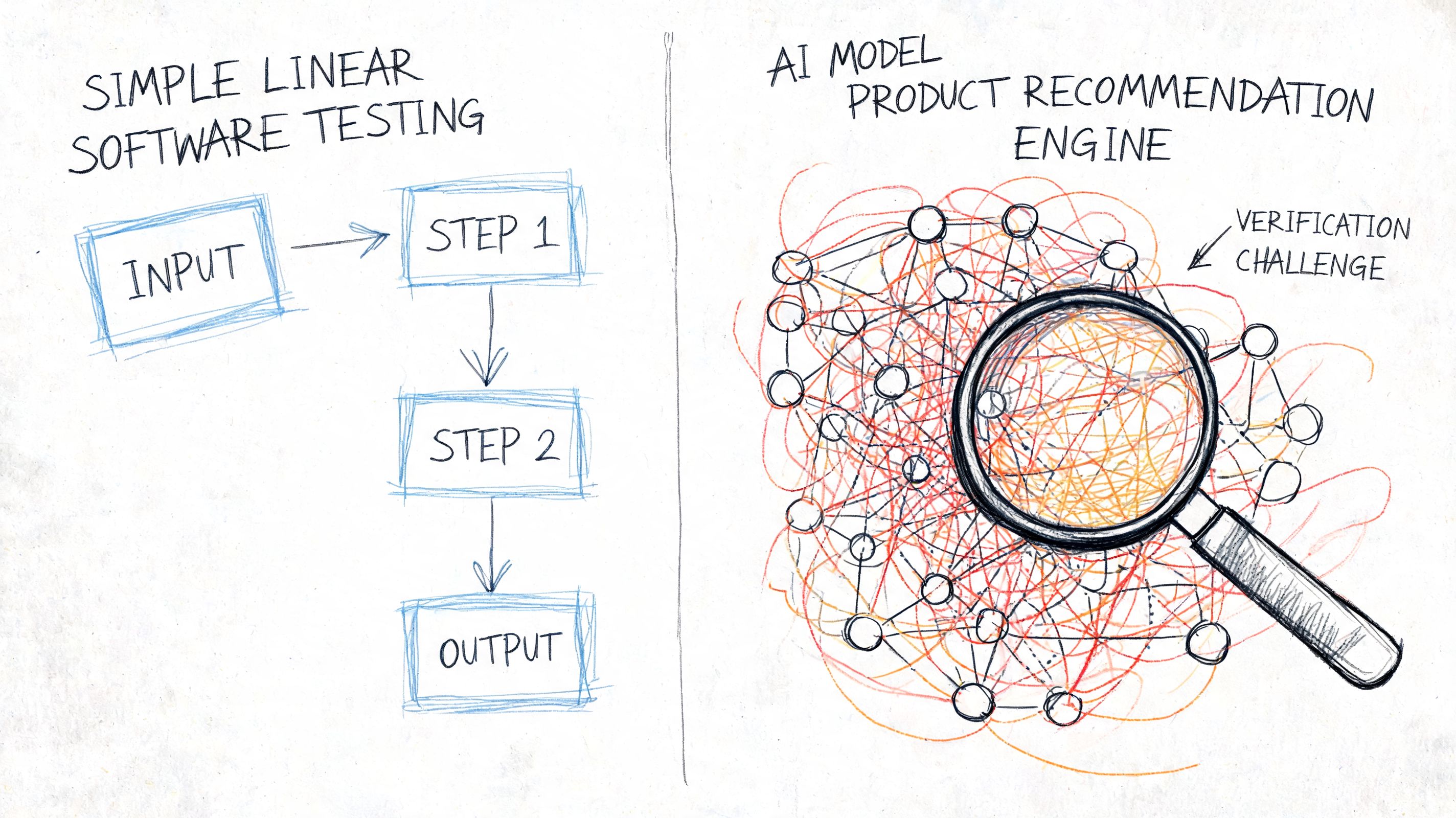

Why V&V Is Different and Harder for AI Models

A standard feature is deterministic most of the time. An AI system often isn't. That's why verification and validation get much harder once your product includes recommendations, classifiers, forecasts, or large language model behavior.

Consider a product recommendation engine. The API may return valid JSON. The pipeline may train successfully. The deployment may complete without incident. None of that proves the recommendations are relevant, stable, fair enough for your use case, or resilient to changing behavior in production.

Verification for AI is broader than code review

In AI projects, verification still includes code quality, but it also extends into data and pipeline reliability.

Teams should verify things like:

- Dataset contracts such as schema consistency, null handling, and feature naming

- Training reproducibility through versioned data, fixed seeds where appropriate, and traceable configs

- Pipeline correctness so feature engineering, model packaging, and deployment steps execute as intended

- Evaluation wiring to confirm the right datasets and thresholds are used in the right environments

A practical mini-example looks like this:

```yaml
verification_gates:
  data_schema_check: required
  training_config_review: required
  model_registry_tag: required
  ci_static_checks: pass
  feature_pipeline_contract_test: pass
```

That config doesn't validate business value. It verifies that the model system is built correctly and can be reproduced and operated safely.
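To make one of those gates concrete, here is a rough sketch of what a data schema check could look like using only the Python standard library. The column names, types, and the `check_schema` function are hypothetical and chosen for illustration; a real pipeline would more likely lean on a dedicated data-validation library.

```python
from typing import Any

# Hypothetical contract: column name -> (expected type, nullable)
EXPECTED_SCHEMA: dict[str, tuple[type, bool]] = {
    "user_id": (int, False),
    "item_id": (int, False),
    "rating": (float, True),
}


def check_schema(
    rows: list[dict[str, Any]], schema: dict[str, tuple[type, bool]]
) -> list[str]:
    """Return a list of contract violations; an empty list means the gate passes."""
    errors: list[str] = []
    for i, row in enumerate(rows):
        # Column-level checks: nothing missing, nothing unexpected.
        for name in sorted(schema.keys() - row.keys()):
            errors.append(f"row {i}: missing column '{name}'")
        for name in sorted(row.keys() - schema.keys()):
            errors.append(f"row {i}: unexpected column '{name}'")
        # Value-level checks: null handling and type conformance.
        for name, (expected_type, nullable) in schema.items():
            if name not in row:
                continue
            value = row[name]
            if value is None:
                if not nullable:
                    errors.append(f"row {i}: '{name}' must not be null")
            elif not isinstance(value, expected_type):
                errors.append(
                    f"row {i}: '{name}' expected {expected_type.__name__}, "
                    f"got {type(value).__name__}"
                )
    return errors


sample = [{"user_id": 1, "item_id": 42, "rating": None}]
assert check_schema(sample, EXPECTED_SCHEMA) == []  # conforming row passes the gate
```

The function returns every violation instead of stopping at the first one, so the build log shows the full scope of a broken upstream change in a single run.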

Validation for AI happens in the real world

Many teams underinvest in this area. Validation for AI means checking whether model behavior remains useful under live conditions, not just on offline benchmarks.

The challenge is significant. A testRigor summary states that Gartner projects 75% of enterprise AI projects will fail to meet objectives by 2025 due to poor validation of model performance in live environments, and notes that unvalidated models can see a 40% accuracy drop within three months. The same source stresses that verification for AI must include static checks on datasets and pipelines, while validation must evaluate drift and live performance. See the AI testing distinction in this overview.

That matches what operators see in production. Recommendation quality shifts when inventory changes. Fraud models degrade when user behavior adapts. LLM outputs vary by prompt framing, retrieval context, and temperature settings. If you need a concise explanation for stakeholders, this ChatGPT answer variability guide is a helpful way to explain why model outputs can't be treated like fixed application responses.

What good AI validation looks like

For AI, validation should include at least these layers:

| Validation layer | Example question |
|---|---|
| Offline behavior | Does the model perform acceptably on held-out data? |
| Workflow fit | Do recommendations or predictions help the user complete a real task? |
| Production behavior | Does performance hold up after deployment as data changes? |
| Risk review | Are there harmful or unacceptable outputs for key user segments? |

Teams working on model-backed products should also adopt continuous performance testing for production systems, because static release testing won't catch the whole problem space.
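One way to watch the "production behavior" layer is a drift statistic that compares live inputs or predictions against a baseline window. The sketch below uses the Population Stability Index (PSI) over categorical values. PSI is a technique chosen here for illustration, not one the article prescribes, and the `DRIFT_THRESHOLD` value is an assumption that should be tuned per model.

```python
import math
from collections import Counter


def population_stability_index(
    baseline: list[str], live: list[str], eps: float = 1e-6
) -> float:
    """PSI over categorical values; 0.0 means identical category shares."""
    if not baseline or not live:
        raise ValueError("both samples must be non-empty")
    base_counts, live_counts = Counter(baseline), Counter(live)
    psi = 0.0
    for category in set(baseline) | set(live):
        # eps keeps the logarithm defined when a category is absent on one side
        expected = max(base_counts[category] / len(baseline), eps)
        actual = max(live_counts[category] / len(live), eps)
        psi += (actual - expected) * math.log(actual / expected)
    return psi


DRIFT_THRESHOLD = 0.2  # assumed alert level for this sketch; tune for your model


def needs_review(baseline: list[str], live: list[str]) -> bool:
    """Flag the model for human review when category shares have shifted."""
    return population_stability_index(baseline, live) > DRIFT_THRESHOLD


last_month = ["shoes"] * 50 + ["bags"] * 50
this_week = ["shoes"] * 90 + ["bags"] * 10
print(needs_review(last_month, last_month))  # False: identical distributions
print(needs_review(last_month, this_week))   # True: recommendations skew to one category
```

A drift flag is a prompt for investigation, not a verdict. The validation question is still whether the shifted behavior helps or hurts the user's task.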

A useful artifact is a model release scorecard:

- Verified inputs: data schema, feature definitions, training config, registry version

- Validated outcomes: business task success, qualitative review, drift watch, rollback trigger

- Owners: MLOps for gates, data science for evaluation, product for usefulness, QA for scenario coverage
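The scorecard above can also live as a small data structure so that "ready" is computed from evidence instead of asserted in a meeting. This is a minimal sketch; the class name, field names, and the example model name are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ModelReleaseScorecard:
    """Hypothetical structure mirroring the scorecard bullets above."""

    model_name: str
    verified_inputs: dict[str, bool] = field(default_factory=dict)
    validated_outcomes: dict[str, bool] = field(default_factory=dict)
    owners: dict[str, str] = field(default_factory=dict)

    def open_items(self) -> list[str]:
        """List every verification or validation item not yet signed off."""
        items = [f"verify: {k}" for k, ok in self.verified_inputs.items() if not ok]
        items += [f"validate: {k}" for k, ok in self.validated_outcomes.items() if not ok]
        return items

    def ready(self) -> bool:
        # Both classes of proof must exist and be complete;
        # a full verification column cannot stand in for an empty validation one.
        return (
            bool(self.verified_inputs)
            and bool(self.validated_outcomes)
            and not self.open_items()
        )


card = ModelReleaseScorecard(
    model_name="recsys-candidate",  # hypothetical model name
    verified_inputs={
        "data schema": True,
        "feature definitions": True,
        "training config": True,
        "registry version": True,
    },
    validated_outcomes={
        "business task success": True,
        "qualitative review": True,
        "drift watch": False,  # monitoring not wired up yet
        "rollback trigger": True,
    },
    owners={"gates": "MLOps", "evaluation": "data science", "usefulness": "product"},
)
print(card.ready())       # False
print(card.open_items())  # ['validate: drift watch']
```

Keeping the two dictionaries separate is the point of the design: it makes it impossible to report a single blended "test pass rate" that hides a missing validation step.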

The biggest AI testing mistake isn't skipping one more benchmark. It's assuming an offline pass means the live system is safe, useful, and durable.

The Business Impact of a Balanced V&V Process

Engineering teams often discuss verification and validation as QA terminology. Leadership teams should treat them as cost controls.

When verification is weak, defects travel downstream into integration, release prep, support, and incident response. When validation is weak, entire features or model behaviors require redesign after users touch them. Both forms of rework consume roadmap time. Only one tends to be visible on a sprint board.

The NASA lesson still applies

The cleanest historical example comes from aerospace. The formal separation between verification and validation was pioneered by NASA during the Apollo era, after mission-critical reliability became paramount. The distinction was shaped in part by failures such as the 1962 Mariner 1 loss, which a Tricentis summary says cost $18.5 million. The same source notes that a post-Apollo study found verification caught 60% of defects pre-integration and reduced lifecycle costs by 40 to 50%. That's the best concise historical proof that balanced V&V isn't process theater. It's risk management with measurable payoff. See the NASA-driven history of verification and validation.

What this means for a CTO

A balanced V&V strategy changes how you allocate people and decisions:

- Verification reduces avoidable engineering waste. Fewer defects reach expensive environments and customer-facing workflows.

- Validation reduces product waste. Fewer polished features miss the market, the workflow, or the deployment reality.

- Together they shorten feedback loops. Teams learn sooner whether they implemented the spec correctly and whether the spec was worth implementing.

Operational advice: Budget review time and user validation time separately. If both are folded into “testing,” the process usually favors whatever the release train can measure fastest.

Where teams go wrong

Most failures come from one of three habits:

- Teams over-index on verification. They have strong CI, code review discipline, and architecture standards, but little direct evidence that users want the outcome.

- Teams over-index on validation. They chase user feedback after the fact while shipping brittle code and fragile pipelines.

- Teams assign no clear owner. Engineering assumes product will validate. Product assumes QA will. QA assumes analytics will tell the story later.

A balanced process doesn't require bureaucracy. It requires clarity.

A simple leadership question set

Ask these in every release review:

- What did we verify before implementation merged?

- What did we validate with real users, stakeholders, or live behavior?

- What evidence would trigger rollback, redesign, or retraining?

If your team can't answer all three quickly, quality risk is already accumulating.

A Practical Checklist for Your Engineering Team

Teams generally don't need another theory deck. They need a repeatable release checklist that separates build quality from product fit.

Verification checklist

Use this before merge or before model promotion:

- Spec check completed. Someone confirms the ticket, acceptance criteria, and implementation still match.

- Peer review done. A reviewer checks code, edge cases, naming, error handling, and security implications.

- Static tools pass. Linters, type checks, static analysis, and schema checks run automatically.

- Traceability exists. The team can point from requirement to implementation to test evidence.

For product teams that need better scenario writing, this guide on creating test cases for product teams is a practical companion.

Validation checklist

Use this before and after release:

- User workflow tested. Real people can complete the core task without hand-holding.

- Negative scenarios covered. Failure states are understandable and acceptable.

- Business outcome defined. The team knows what success looks like in production.

- Monitoring ready. Logs, alerts, and product signals are in place to catch regressions and drift.

If you need a broader operating baseline, this guide on quality assurance for software testing is a useful internal benchmark for team setup.

A lightweight template

Copy this into your sprint doc or release ticket:

| Release item | Verification owner | Validation owner | Evidence |
|---|---|---|---|
| Feature or model name | Engineering lead | Product or QA lead | Links to PR, tests, UAT notes, monitoring |
| Critical risk | Reviewer | Validator | Pass, fail, or follow-up |
| Rollback trigger | Platform or MLOps | Product or incident lead | Defined before release |

Good teams don't just collect test results. They define who can say “ready” and what evidence backs that decision.

How to Implement a V&V Strategy Next Week

Start small. Teams can improve how they handle verification and validation in one sprint if they stop treating it like a giant process rewrite.

Step 1

Run a one-hour audit of your current release flow. List every quality gate from ticket creation to launch. Mark each one as verification or validation. Organizations often discover they're strong on one and vague on the other.
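The audit output can be as simple as a tagged list. Here is a small sketch of that idea; the gate names are placeholders for illustration, not a recommended set, and should be replaced with the gates your release flow actually has.

```python
from collections import Counter
from enum import Enum


class GateKind(Enum):
    VERIFICATION = "verification"  # checked against a spec, rule, or standard
    VALIDATION = "validation"      # checked against user value or live behavior


# Hypothetical gate list from ticket creation to launch.
RELEASE_GATES: list[tuple[str, GateKind]] = [
    ("acceptance criteria review", GateKind.VERIFICATION),
    ("pull request review", GateKind.VERIFICATION),
    ("static analysis in CI", GateKind.VERIFICATION),
    ("schema check", GateKind.VERIFICATION),
    ("UAT session", GateKind.VALIDATION),
]


def summarize(gates: list[tuple[str, GateKind]]) -> dict[str, int]:
    """Count gates per class so an imbalance is visible at a glance."""
    counts = Counter(kind for _, kind in gates)
    return {kind.value: counts.get(kind, 0) for kind in GateKind}


print(summarize(RELEASE_GATES))  # {'verification': 4, 'validation': 1}
```

A lopsided count like this one is not automatically a problem, but it does show where the next sprint's single improvement should go.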

Step 2

Pilot one lightweight improvement in each category on the next sprint:

- Add or tighten one verification gate, such as a stricter pull request checklist, schema validation, or static analysis rule.

- Add one validation loop, such as a three-person UAT session, canary review, or post-release user feedback check.

If your regression coverage is still manual and slow, this guide to automating regression testing is a sensible next move.

Step 3

Schedule a 30-day review with engineering, QA, product, and if relevant, MLOps. Ask three questions:

- Which issues did verification catch earlier than before?

- Which validation findings changed product or model decisions?

- Which release criteria are still based on assumption rather than evidence?

Keep the process lean. The goal isn't more ceremony. It's fewer surprises.

A strong V&V strategy looks boring from the outside. Builds pass for the right reasons. Releases are predictable. AI systems are monitored with intention. Product teams know what “ready” means. That's what mature quality looks like.

If you need senior engineers who already know how to structure verification gates, validation loops, and AI release controls, ThirstySprout can help. You can Start a Pilot with vetted AI and MLOps talent, or See Sample Profiles to review the kind of operators who have shipped production systems without leaving quality to chance.
