TL;DR: Your AI Project Playbook

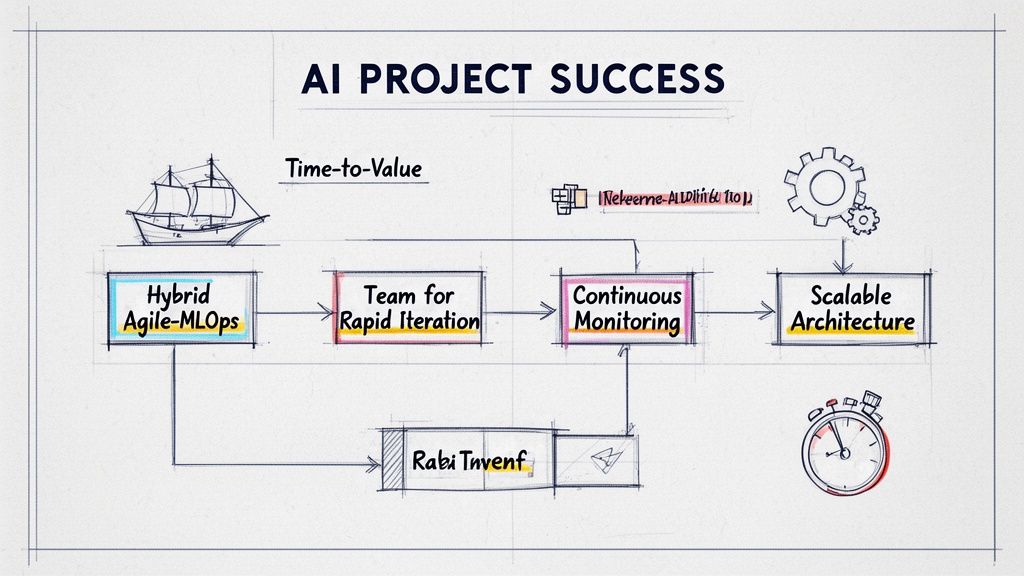

- Use a Hybrid Agile-MLOps Model: Manage software development with Agile/Scrum and ML model experimentation with Kanban. This prevents bottlenecks where a great model can't be deployed.

- Focus on Business Impact Metrics: Ditch vanity metrics like "95% model accuracy" in the lab. Instead, target outcomes like "a 20% reduction in support ticket response time within 60 days."

- Build a Lean, Cross-Functional Team: For an AI feature like RAG search, start with a core team: one ML Engineer (optimizes the model), one MLOps Engineer (automates deployment), and one Backend Engineer (builds the API).

- Adopt a Starter Tech Stack: Get to market faster with a pragmatic stack: Linear or Jira for project management, GitHub for version control, MLflow for experiment tracking, and Prometheus + Grafana for monitoring.

- Mitigate Risks Proactively: Address technical debt with a "20% time" rule for refactoring, manage cloud costs with FinOps principles, and maintain stakeholder trust with simple, automated dashboards.

Who This Guide Is For

This guide is for technical leaders who need to deliver AI features on time and on budget.

- CTO / Head of Engineering: You need a repeatable process to turn AI experiments into scalable, reliable products without getting stuck in R&D purgatory.

- Founder / Product Lead: You're responsible for scoping AI features, defining success, and ensuring the engineering team is building something customers will actually use.

- Engineering Manager: You need to choose the right project management methodologies and team structure to keep your AI and software engineers productive and unblocked.

If you need to ship a production-grade AI product in the next 3–6 months, this framework will help you get there.

The AI Project Software Engineering Framework

Traditional project management tends to fail for AI because it can't handle the inherent uncertainty of research. A project software engineering approach that works for AI needs a hybrid model that blends predictable software delivery with flexible experimentation.

This framework breaks the process into five iterative phases, ensuring that real-world performance data feeds directly back into your development cycle.

Here is the step-by-step process:

- Phase 1: Ideation & Scoping: Define success with a business metric, not a technical one. Anchor the project to a goal like "increase user retention by 5%."

- Phase 2: Prototyping & Experimentation: Run short (1-2 week) feasibility sprints. The only goal is to answer: "Is this technically possible with our data?" Fail fast if the answer is no.

- Phase 3: MVP Development: Build the core feature with production-quality code. Focus on creating a clean, tested, and maintainable foundation for one key user workflow.

- Phase 4: MLOps Integration: Automate everything. Use a CI/CD pipeline (e.g., GitHub Actions) to test and deploy application code and ML models together as a single unit.

- Phase 5: Monitoring & Iteration: Track performance against the business metrics defined in Phase 1. Use data on latency, model drift, and user engagement to decide what to build or fix next.
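To make the link between Phase 1 and Phase 5 concrete, here is a minimal sketch of how a business target could be written down once and then checked against live numbers. The `BusinessTarget` class, its field names, and the baseline value are all hypothetical; the 20% reduction and 60-day window simply reuse the example target from the TL;DR.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class BusinessTarget:
    """A Phase 1 success criterion (all names here are illustrative)."""
    name: str
    baseline: float          # metric value before the feature shipped
    target_reduction: float  # e.g. 0.20 for "a 20% reduction"
    start: date
    window_days: int

    @property
    def deadline(self) -> date:
        return self.start + timedelta(days=self.window_days)

    def reduction(self, current: float) -> float:
        """Fractional improvement relative to the baseline."""
        return (self.baseline - current) / self.baseline

    def status(self, current: float, today: date) -> str:
        """Return 'met', 'in progress', or 'missed' for a Phase 5 review."""
        if self.reduction(current) >= self.target_reduction:
            return "met"
        return "in progress" if today <= self.deadline else "missed"


target = BusinessTarget(
    name="support ticket response time (minutes)",
    baseline=50.0,  # made-up baseline for illustration
    target_reduction=0.20,
    start=date(2025, 1, 1),
    window_days=60,
)

print(target.status(current=39.0, today=date(2025, 2, 1)))  # met
```

Keeping the target in code (or config) rather than in a slide means the monitoring dashboard and the review meeting can read from the same definition.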

Adopting this disciplined loop is how you create a reliable engine for AI innovation. For a more detailed walkthrough, our AI implementation roadmap offers a great next step.

Practical Examples of AI Project Engineering in Action

Theory is good, but execution is better. Here are two practical examples of how to apply this thinking.

Example 1: Methodology Selection Matrix for a Hybrid Team

You can't force an ML research task into a rigid two-week sprint. The solution is a hybrid approach where you match the methodology to the work. Use this matrix to structure the conversation with your team leads.

By using this rubric, you empower your application teams with Scrum's structure while giving your ML engineers Kanban's flexibility. This pragmatic approach is a core tenet of our guide on Agile vs. DevOps.

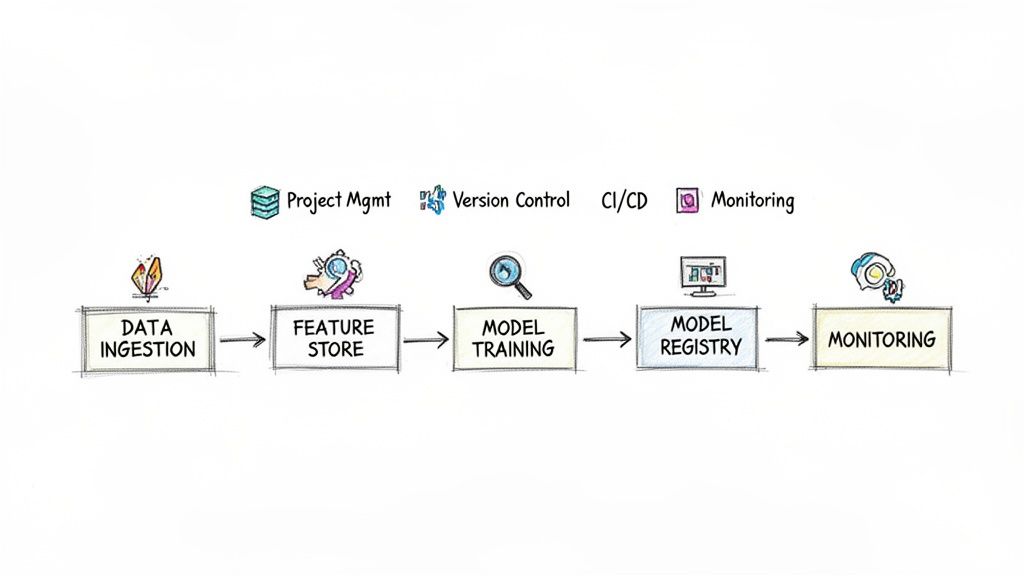

Example 2: Architecture for a Starter MLOps Pipeline

Your tech stack directly impacts your team's speed. This reference architecture shows how to connect open-source tools to create an automated MLOps pipeline that gets you from raw data to a monitored, production-ready model without over-engineering.

The workflow:

- Data Ingestion & Feature Store: Raw data is cleaned and loaded into a feature store to ensure consistency between training and real-time serving.

- Model Training & Validation: A CI/CD pipeline (e.g., GitHub Actions) triggers a training job. The new model is automatically validated against a test dataset.

- Model Registry: The validated model is versioned and stored in a registry like MLflow for auditability.

- Deployment & Serving: The approved model is deployed as a scalable API endpoint.

- Monitoring: Dashboards in Grafana, fed by Prometheus, track model accuracy, latency, and business KPIs in real time, alerting you when it's time to retrain.
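The "automatically validated" step in the workflow above usually comes down to a promotion gate: a small function the CI job runs after training to decide whether the new model may replace the current one. The sketch below assumes accuracy and p95 latency are the metrics that matter; `EvalReport`, `should_promote`, and the default thresholds are hypothetical placeholders, not part of MLflow or GitHub Actions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalReport:
    """Metrics from scoring one model on the held-out test dataset."""
    accuracy: float
    p95_latency_ms: float


def should_promote(
    candidate: EvalReport,
    production: EvalReport,
    min_accuracy_gain: float = 0.0,
    max_p95_latency_ms: float = 300.0,
) -> tuple[bool, str]:
    """Return (decision, reason) for a freshly trained candidate model.

    Thresholds are placeholders; set them from your own requirements.
    """
    if candidate.p95_latency_ms > max_p95_latency_ms:
        return False, "latency budget exceeded"
    if candidate.accuracy - production.accuracy < min_accuracy_gain:
        return False, "accuracy gain below threshold"
    return True, "candidate passes validation"


decision, reason = should_promote(
    candidate=EvalReport(accuracy=0.91, p95_latency_ms=120.0),
    production=EvalReport(accuracy=0.89, p95_latency_ms=110.0),
)
print(decision, reason)  # True candidate passes validation
```

In a pipeline, the CI job would exit non-zero when the decision is `False`, so a failing model never reaches the registry or the serving endpoint.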

The Deep Dive: Why Project Software Engineering Matters for AI

If you've managed an AI project like a standard software build, you've felt the pain. Traditional project management breaks down because it expects a predictable, linear process. AI development is fundamentally different: it's about navigating uncertainty.

Think of it this way: building a typical web app is like constructing a car on an assembly line. The blueprints are set and the process is known. Building an AI product is like designing the entire factory for a car that has never been built before. You're managing messy data, unpredictable models, and vague feedback.

This is where a disciplined project software engineering approach makes the difference. It provides the structure to manage that uncertainty and turn an experimental model into a reliable business asset.

From Research Project to Business Asset

Without a strong engineering foundation, AI initiatives stall. They become fascinating "science projects" that produce impressive demos but never create real business value. A project software engineering mindset shifts your focus from pure exploration to shipping products that solve problems and impact the bottom line.

This shift is critical. It’s how you de-risk your investment and find product-market fit faster. By embedding solid engineering from the start, you create a system that can:

- Validate ideas with quick, measurable experiments.

- Automate deployment to move from prototype to production without friction.

- Monitor performance in the real world to see what’s working.

The Growing Demand for Custom Solutions

The market reflects this need for structured AI development. The custom software development market is projected to grow from $53.02 billion in 2025 to $334.49 billion by 2034, a compound annual growth rate (CAGR) of 22.71%. This trend suggests businesses are moving beyond off-the-shelf solutions to build a competitive edge.
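As a quick sanity check on the quoted growth rate, the standard CAGR formula can be applied to the two market-size figures. This is only arithmetic on the numbers stated above, assuming nine compounding periods between 2025 and 2034.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1 / years) - 1


# Market size in billions of USD, as quoted in the text.
rate = cagr(start_value=53.02, end_value=334.49, years=2034 - 2025)
print(f"{rate:.2%}")
```

The result comes out at roughly 22.7%, which is consistent with the quoted figure.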

For any AI company, this is a clear signal: a strong internal engineering capability is essential. A project software engineering approach provides the framework to build these valuable, custom solutions efficiently.

Your AI Project Engineering Checklist

Use this checklist to ensure your AI projects stay on track and are set up for success from day one.

Phase 1: Scoping & Setup

- Define one primary business metric for success (e.g., "reduce customer churn by 10%").

- Choose a hybrid project methodology (e.g., Scrum for app dev, Kanban for ML research).

- Assemble a lean, cross-functional team with clear roles (ML, MLOps, Backend).

- Select a pragmatic starter stack (Jira/Linear, GitHub, MLflow, Grafana).

- Set up budget alerts for your cloud environment.
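Cloud providers ship their own budget alerting, but the underlying logic is simple enough to sketch. The example below assumes a straight-line projection of month-to-date spend; the function names and the 80% warning ratio are illustrative choices, not a FinOps standard.

```python
import calendar
from datetime import date


def projected_month_spend(spend_to_date: float, today: date) -> float:
    """Linearly extrapolate month-to-date spend to the end of the month."""
    days_in_month = calendar.monthrange(today.year, today.month)[1]
    return spend_to_date / today.day * days_in_month


def budget_alert(
    spend_to_date: float,
    monthly_budget: float,
    today: date,
    warn_ratio: float = 0.8,  # hypothetical early-warning threshold
) -> str:
    """Classify the projected spend as 'ok', 'warning', or 'over'."""
    projected = projected_month_spend(spend_to_date, today)
    if projected > monthly_budget:
        return "over"
    if projected > warn_ratio * monthly_budget:
        return "warning"
    return "ok"


# Halfway through June with $6,000 spent against a $10,000 budget.
print(budget_alert(6000.0, 10000.0, date(2025, 6, 15)))  # over
```

A linear projection will overreact to one-off training runs early in the month, so teams with bursty GPU usage may prefer to alert on a trailing average instead.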

Phase 2: Development & Deployment

- Run time-boxed (1-2 weeks) experiments to validate technical feasibility.

- Develop the MVP with clean, tested, and documented code.

- Build an automated CI/CD pipeline that deploys app code and models together.

- Implement a feature store to ensure consistency between training and inference.

- Document all architecture and deployment decisions in a shared space like Notion.
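The consistency that a feature store is meant to guarantee can be illustrated without one: the key idea is that training and inference call the same feature logic instead of two separately maintained copies. The sketch below is a simplified stand-in, not a feature store implementation, and the record fields (`created_at`, `message`) are hypothetical.

```python
import math
from datetime import datetime


def build_features(raw: dict) -> dict:
    """Single source of truth for feature logic (field names are hypothetical)."""
    created = datetime.fromisoformat(raw["created_at"])
    return {
        "log_message_length": math.log1p(len(raw["message"])),
        "is_weekend": created.weekday() >= 5,  # Saturday=5, Sunday=6
        "hour_of_day": created.hour,
    }


def training_rows(history: list[dict]) -> list[dict]:
    """Offline path: build features for a batch of historical records."""
    return [build_features(record) for record in history]


def serving_features(request: dict) -> dict:
    """Online path: build features for a single live request."""
    return build_features(request)


record = {"created_at": "2025-01-04T09:30:00", "message": "hello"}
# Both paths produce identical features for the same raw input.
print(training_rows([record])[0] == serving_features(record))  # True
```

A real feature store adds storage, versioning, and low-latency lookups on top, but training/serving skew is avoided by the same principle.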

Phase 3: Monitoring & Iteration

- Create a monitoring dashboard to track both system health (latency, errors) and model performance (drift, accuracy).

- Schedule regular (e.g., bi-weekly) reviews to analyze performance against business goals.

- Dedicate a fixed portion of engineering time (e.g., 20%) to paying down technical debt.

- Establish a clear process for prioritizing the next iteration based on performance data.

- Automate stakeholder reporting with a simple, data-driven dashboard.
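For the drift item in the checklist above, one widely used signal is the Population Stability Index (PSI), which compares the distribution of a feature or model score in production against a reference sample from training. The following is a minimal standard-library sketch using equal-width bins; the bin count and the floor for empty bins are assumptions, and a monitoring library would normally handle these details.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a reference and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


reference = [i / 100 for i in range(100)]    # scores seen at training time
live = [0.5 + i / 200 for i in range(100)]   # production scores, shifted upward

print(psi(reference, reference))  # 0.0
print(psi(reference, live) > 0.25)  # True
```

A common rule of thumb treats a PSI above roughly 0.25 as a significant shift worth investigating, though the right threshold depends on the feature and should be tuned against your own data.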

What to Do Next

- Assess Your Current Process: Use the checklist above to audit your current AI project engineering practices. Identify the single biggest bottleneck holding your team back.

- Define a Pilot Project: Choose a small, high-impact AI feature to build using this framework. Define a clear business metric and a 4-week timeline.

- Assemble Your A-Team: Whether you build in-house or augment your team, ensure you have the core roles covered: ML Engineer, MLOps Engineer, and Backend Engineer.

Ready to build a high-performing AI team that can navigate these challenges? ThirstySprout connects you with senior, vetted AI and MLOps engineers who have experience shipping and scaling real-world products.
