The product design and development services market was valued at USD 17.06 billion in 2023 and is projected to reach USD 32.93 billion by 2030, which tells you this is no niche spend. Product development consulting services are a strategic partnership to help you build, validate, and launch products faster, and the core decision isn’t whether to use them. It’s which engagement model fits your stage, whether you’re shipping an MVP or hardening an AI feature for scale.

If you’re reading this, you probably have one of three problems. Your team is good but overloaded. Your roadmap includes AI work your current engineers haven’t productionized before. Or you know hiring full-time will take too long, and the business can’t wait.

My advice is simple. Bring in outside help when the risk of getting it wrong is higher than the cost of expert support. That usually means model deployment, regulated workflows, messy data pipelines, product discovery for a new AI feature, or a launch window that can’t slip.

When to Use Product Development Consulting

A founder asks for an AI feature in one quarter. Your engineers are already tied up on platform work. Product wants a demo for customers, security wants auditability, and nobody agrees on scope. That’s the point where external help stops being “extra” and becomes a sensible operating decision.

The market shift is already clear. The global product design and development services market was USD 17.06 billion in 2023 and is projected to reach USD 32.93 billion by 2030 at a 10.08% CAGR, reflecting rising demand for outside expertise to manage complexity and move faster, according to Grand View Research on product design and development services.

The short version

- Use consultants for high-risk gaps: architecture, AI validation, MLOps, compliance-sensitive workflows, and launch planning.

- Don’t use them to avoid making decisions: if your team can’t name the user, success metric, and deadline, no consultant will save you.

- Pick the engagement to match the bottleneck: strategy advisor for scoping, fractional expert for critical oversight, project team for execution.

- Require knowledge transfer: if the vendor leaves and your team can’t run the system, you bought dependency, not advantage.

Practical rule: Hire external experts to remove uncertainty, not to decorate a roadmap.

Who should use this approach

This is for startup founders, CTOs, Heads of Product, and engineering leaders who need to make delivery decisions in weeks. It fits teams that are:

- Launching an MVP: You need faster validation and tighter scope.

- Adding AI to an existing SaaS product: You need specialist skills without a full reorg.

- Fixing delivery risk: Your models work in notebooks but not in production.

- Planning resourcing: You need to decide between consulting, hiring, or a hybrid model.

If your problem is broader product operating discipline, this companion guide on product management consulting is also worth reviewing.

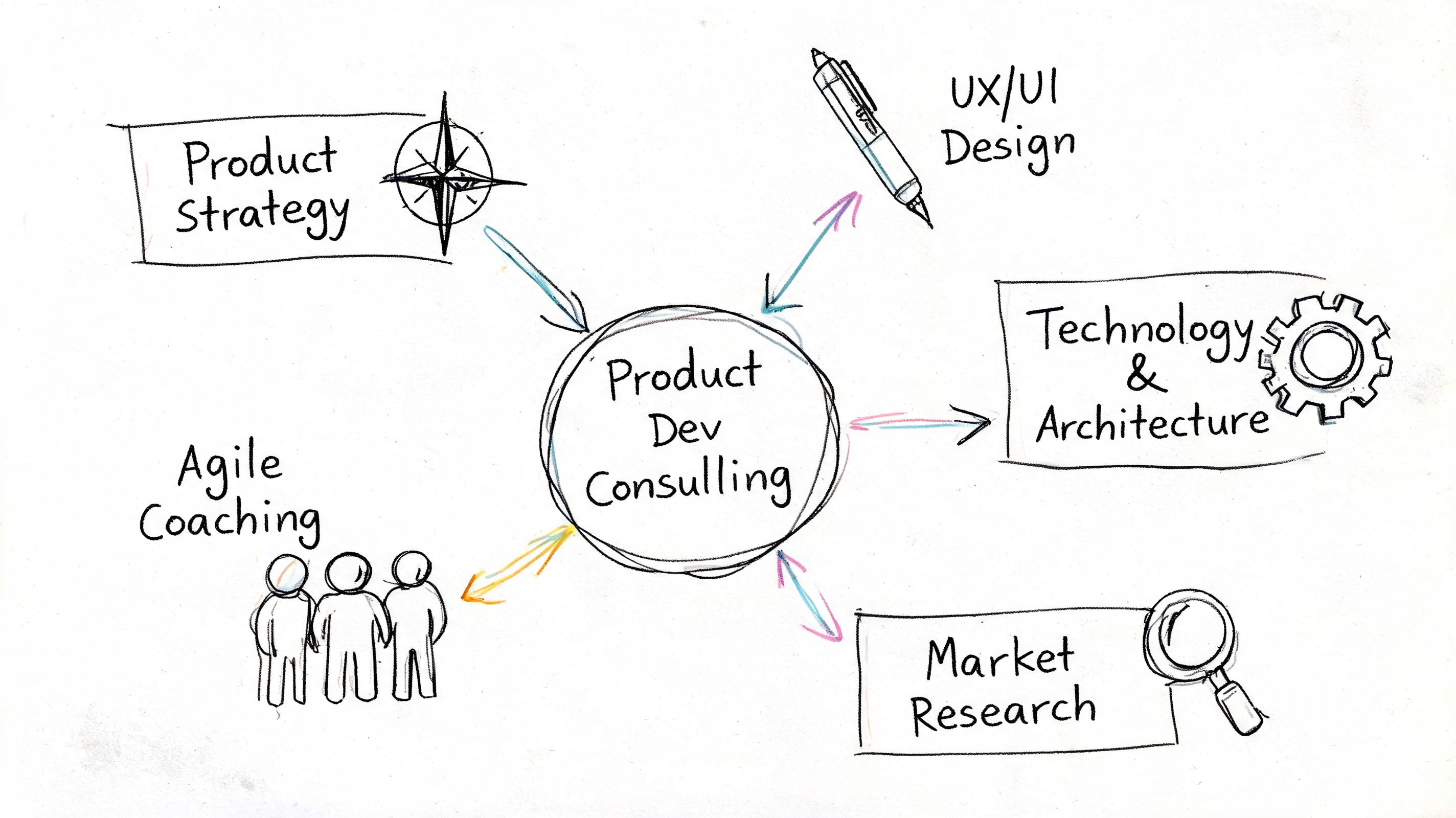

A Breakdown of Consulting Service Types

Not all product development consulting services solve the same problem. Founders often bundle everything into “we need a dev shop.” That’s usually how budgets get wasted.

You should think in terms of lifecycle stages. Early work is about deciding what to build. Middle-stage work is about building it correctly. Late-stage work is about making it reliable, governable, and scalable.

Strategy and discovery consulting

This is the right service when you don’t yet have a crisp product definition. Good consultants in this category pressure-test the user problem, narrow scope, define success criteria, and reduce waste before engineering starts.

Typical deliverables include:

- Problem framing: target user, workflow, pain point, and business constraint

- Requirements shaping: what must exist for launch versus what can wait

- Feasibility review: data availability, model approach, compliance constraints

- Roadmap decisions: build now, defer, or kill

For AI products, discovery should also include whether you need a model at all. In some cases, a rules engine, search workflow, or human-in-the-loop process is the better first release. Teams doing market sizing or user signal validation can also benefit from tools like GoldMine AI's research capabilities to speed up the research side before engineering work begins.

MVP and delivery consulting

This is execution-focused. You already know what you want to build, and you need outside capacity or a team that has shipped something similar.

Use this when:

- your internal team is overloaded

- the feature is adjacent to your core stack but not inside your team’s strongest area

- you need a thin, testable release instead of a full platform build

The deliverables are straightforward: working software, technical design docs, sprint plans, test coverage expectations, and launch readiness criteria.

UX and product design consulting

A lot of AI products fail because the workflow is clumsy, not because the model is weak. Good UX consulting makes model output usable, reviewable, and trustworthy.

For AI features, I’d expect design consultants to handle:

- Confidence presentation: make uncertainty visible without confusing users

- Fallback states: what the product does when the model output is weak

- Human review loops: approval, edit, reject, and audit actions

- Onboarding design: how users learn what the AI can and can’t do
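The confidence, fallback, and review items above can be tied together with a simple routing rule. This is a minimal sketch, not a prescribed design: the `Route` names, the `ModelOutput` type, and both thresholds are hypothetical, and it assumes your model exposes a reasonably calibrated confidence score between 0 and 1.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_SUGGEST = "auto_suggest"  # show the output with a confidence cue
    HUMAN_REVIEW = "human_review"  # queue for approve / edit / reject
    FALLBACK = "fallback"          # hide the output and use the non-AI path


@dataclass(frozen=True)
class ModelOutput:
    text: str
    confidence: float  # assumed to be a calibrated score in [0, 1]


def route_output(output: ModelOutput,
                 review_below: float = 0.85,
                 fallback_below: float = 0.50) -> Route:
    """Pick a UX path from the model's confidence score.

    The default thresholds are illustrative placeholders; tune them
    against reviewed samples from your own workflow.
    """
    if not 0.0 <= output.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if output.confidence < fallback_below:
        return Route.FALLBACK
    if output.confidence < review_below:
        return Route.HUMAN_REVIEW
    return Route.AUTO_SUGGEST


if __name__ == "__main__":
    draft = ModelOutput(text="Suggested next action: escalate to billing", confidence=0.72)
    print(route_output(draft).value)
```

The point of writing it down this way is that design and engineering agree on the states before anyone draws screens. Every route needs a designed surface, including the fallback.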

AI and MLOps consulting

This is where teams usually underestimate the work. Building a model demo is one job. Running a model in production is another.

According to Instinctools on MLOps consulting services, over 80% of machine learning projects fail to reach production, often because teams lack proper MLOps. The same source notes that expert consultants implement automated pipelines that reduce deployment time from months to weeks and cut model drift rates.

If your team has notebooks, manual handoffs, and unclear ownership between data science and engineering, you don’t have an AI product yet. You have an experiment.

A strong MLOps consultant should own or shape:

| Service type | Best used for | Typical deliverables |
|---|---|---|
| Data and model pipeline setup | moving from experiments to repeatable delivery | versioned datasets, training pipelines, deployment workflows |
| Platform integration | fitting ML into your existing stack | API contracts, CI/CD steps, environment strategy |
| Monitoring and governance | keeping models reliable after launch | drift alerts, rollback paths, audit logs, performance dashboards |
| Production hardening | improving resilience and operability | canary deployments, testing strategy, failure handling |
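To make "canary deployments" and "rollback paths" concrete, here is a rough sketch of the kind of promotion gate a consultant might leave behind. The function name, parameters, and default thresholds are all assumptions for illustration; a real gate would usually read these counts from your metrics system and look at latency as well as errors.

```python
def canary_decision(baseline_errors: int, baseline_requests: int,
                    canary_errors: int, canary_requests: int,
                    max_relative_increase: float = 0.10,
                    min_allowed_rate: float = 0.001,
                    min_requests: int = 500) -> str:
    """Return "wait", "promote", or "rollback" for a canary release.

    Compares the canary's error rate with the current production
    (baseline) error rate. All defaults are illustrative placeholders.
    """
    if canary_requests < min_requests:
        return "wait"  # not enough traffic to judge the canary yet

    baseline_rate = baseline_errors / baseline_requests if baseline_requests else 0.0
    canary_rate = canary_errors / canary_requests

    # Allow a small relative increase, with an absolute floor so a
    # near-zero baseline does not make every single error a rollback.
    allowed_rate = max(baseline_rate * (1 + max_relative_increase), min_allowed_rate)
    return "promote" if canary_rate <= allowed_rate else "rollback"


if __name__ == "__main__":
    print(canary_decision(baseline_errors=50, baseline_requests=10_000,
                          canary_errors=6, canary_requests=1_000))
```

What matters in an engagement is less the exact rule and more that the rule is written down, versioned, and owned by someone internal.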

If you’re weighing delivery models, this comparison of managed services vs staff augmentation helps clarify where consulting ends and embedded talent begins.

Choosing Your Engagement Model

The wrong engagement model creates friction even when the people are good. A sharp consultant with the wrong operating setup will still slow you down.

I’d sort the options into four practical models: fractional expert, contract project, full external team, and retained advisor. Each works. Each fails in predictable ways too.

Side-by-side comparison

| Model | Best fit | Main upside | Main risk |
|---|---|---|---|
| Fractional expert | early-stage teams, architecture reviews, AI strategy, MLOps oversight | fast access to senior judgment without full-time cost | weak execution if nobody internal owns follow-through |
| Contract project | well-defined feature, MVP, migration, prototype | clear scope and accountability | bad outcome if scope is vague |
| Full external team | new product line, parallel roadmap, aggressive timeline | highest delivery speed when internal capacity is thin | can drift from internal standards if governance is weak |
| Retained advisor | ongoing product or platform decisions | continuity and executive-level guidance | low leverage if there’s no committed internal execution team |

Fractional works best when the bottleneck is judgment

A startup usually doesn’t need a massive consulting firm. It needs one person who has made the next set of mistakes already and can prevent them.

Use a fractional consultant when:

- you need architecture review before committing engineering time

- you’re choosing between retrieval-augmented generation, fine-tuning, or rules

- your team needs senior oversight on security, infra, or ML operations

- leadership needs someone who can translate technical risk into business trade-offs

This model is especially useful for MLOps, platform, and AI product scoping.

Contract projects work when scope is clean

If you can describe the problem in one page, contract delivery can work well. If your project description still sounds like “build us an AI assistant,” stop and tighten the brief first.

A decent pre-contract brief should include:

- User and workflow

- Inputs and outputs

- Definition of done

- Constraints such as compliance, latency, or toolchain

- Named internal owner
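If you want to enforce that brief before a vendor call, the checklist above can be captured as a small structure with a completeness check. This is only a sketch: the `ProjectBrief` type and its field names are hypothetical and map one-to-one to the five bullets.

```python
from dataclasses import dataclass, fields


@dataclass
class ProjectBrief:
    """One field per item in the pre-contract brief (names are illustrative)."""
    user_and_workflow: str = ""
    inputs_and_outputs: str = ""
    definition_of_done: str = ""
    constraints: str = ""       # compliance, latency, toolchain
    internal_owner: str = ""    # a named person, not a team


def missing_sections(brief: ProjectBrief) -> list[str]:
    """Return the names of sections that are still empty or whitespace-only."""
    return [f.name for f in fields(brief) if not getattr(brief, f.name).strip()]


if __name__ == "__main__":
    draft = ProjectBrief(
        user_and_workflow="Operations staff triaging support tickets",
        definition_of_done="Suggested next action shown on every qualifying ticket",
    )
    print(missing_sections(draft))
```

An empty result does not mean the brief is good. It only means nobody skipped a section.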

Operator note: Consultants can rescue execution. They can’t rescue fuzzy thinking.

For startups tightening infrastructure before product launches, this practical guide to DevOps strategies for startups is useful context when you’re shaping scope.

Full team and retained models suit bigger commitments

A full external squad makes sense when you need a cross-functional unit: product, design, backend, frontend, data, and MLOps. This is common when a company wants to build a net-new capability while the internal team protects the core platform.

A retained advisor is different. This person should be helping you make recurring decisions: vendor evaluation, architecture governance, team design, release readiness, and roadmap sequencing.

If you’re still deciding whether to outsource at all, start with the strategic question in this guide to build vs buy software. That choice should happen before vendor selection.

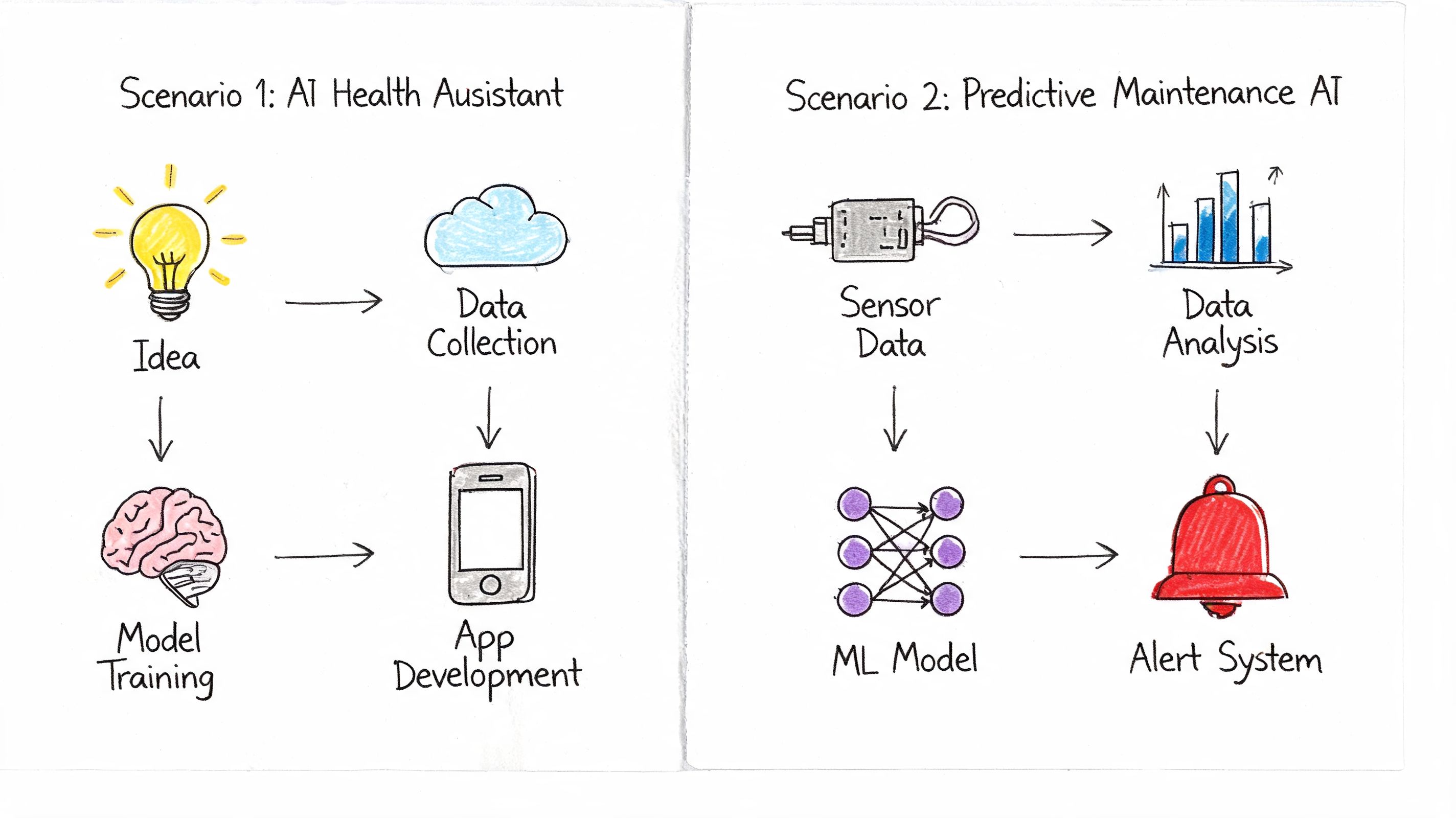

Practical Examples: Two AI Product Scenarios

Theory is cheap. The operating model only matters if it changes how a team ships.

Below are two realistic scenarios I’d expect to see in the field. These aren’t vanity case studies. They’re examples of how to structure consulting support to reduce risk without bloating the org.

Scenario one: Seed-stage fintech shipping an AI workflow MVP

The company has a small engineering team and wants to launch an AI feature that summarizes customer support tickets and suggests next actions for operations staff. The pressure comes from sales. They need a live workflow, not another prototype.

The mistake would be hiring a big full-service firm for six months. The smarter move is narrower.

They bring in:

- A fractional AI product consultant to scope the workflow, define guardrails, and make architecture decisions

- Contract engineers for the application layer and integrations

- A part-time designer to handle review flows, confidence cues, and operator approval states

The first two weeks focus on problem framing: which tickets qualify, what data can be used, what should be suggested, and when a human must approve. By week three, the team has a thin architecture and a prioritized backlog.

A simple working model looks like this:

| Workstream | External role | Internal owner |
|---|---|---|
| Scope and risk | fractional consultant | CTO or Head of Product |
| App integration | contract backend and frontend engineers | engineering manager |
| Prompt and output QA | consultant plus internal SME | operations lead |
| Launch review | consultant | CTO |

This setup works because the company buys judgment where it lacks experience and execution where it lacks bandwidth. It doesn’t outsource product ownership.

Scenario two: Series B SaaS team fixing unreliable ML delivery

This company already has a model in production. The model quality is acceptable, but releases are brittle, rollback decisions are painful, and nobody fully trusts the predictions after deployment. Product wants more AI features, but engineering knows the foundation is shaky.

That’s an MLOps consulting problem, not a generic software staffing problem.

A specialist team comes in to standardize deployment, monitoring, and traceability. They implement GitOps-based release workflows, formalize model versioning, and set up monitoring for drift and service behavior.
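As one example of what "monitoring for drift" can mean in practice, here is a minimal sketch of a Population Stability Index (PSI) check on a single numeric feature. It is one common drift signal, not necessarily the one a given team would choose: it measures shift in an input distribution, not concept drift directly. The function name and binning approach are assumptions, and the 0.25 alert level used in the example is a widely quoted rule of thumb rather than a standard.

```python
import math
from typing import Sequence


def population_stability_index(expected: Sequence[float],
                               actual: Sequence[float],
                               bins: int = 10) -> float:
    """PSI between a baseline sample and a recent sample of one feature.

    Buckets are equal-width over the baseline's range; recent values
    outside that range are clamped into the first or last bucket.
    """
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for a constant baseline

    def bucket_shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) when a bucket is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = bucket_shares(expected), bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]        # training-time sample
    recent = [0.5 + i / 200 for i in range(100)]    # shifted production sample
    psi = population_stability_index(baseline, recent)
    print("drift alert" if psi > 0.25 else "stable")
```

A check like this only earns its keep when it is wired to an alert and a named owner, which is exactly the kind of plumbing the engagement should deliver.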

According to Blackthorn Vision on MLOps consulting services, without formal MLOps, production ML systems suffer from untraceable predictions, causing compliance failures in 70% of regulated sectors. The same source notes that implementing CI/CD via GitOps and monitoring for concept drift can lead to 40-60% improvements in model uptime and ROI.

That matters because the business problem isn’t “our model could be better.” It’s “our delivery system is not dependable enough to support the roadmap.”

A realistic architecture handoff for this kind of engagement might look like:

```yaml
mlops_stack:
  experiment_tracking: MLflow
  orchestration: Kubeflow
  deployment_strategy: GitOps with ArgoCD
  monitoring:
    metrics: Prometheus
    dashboards: Grafana
  governance:
    model_registry: enabled
    audit_trail: enabled
```

The internal team keeps ownership of product logic and customer-facing priorities. The consultants own the pipeline redesign and platform hardening. By the end of the engagement, the key output isn’t just cleaner infrastructure. It’s a repeatable release path the internal team can run.

Your best consulting engagements leave behind a system, a playbook, and a team that’s stronger than before.

Understanding Pricing Models and Calculating ROI

Teams often ask the wrong pricing question first. They ask, “What’s the rate?” The better question is, “What failure or delay are we paying to avoid?”

That matters because product development consulting services aren’t just a labor purchase. They’re a speed, risk, and capability purchase. And in AI, delay is expensive. You keep paying salaries while the product waits, competitors move, and customer learning stalls.

The broader market is large enough to make this obvious. The product engineering services market was valued at USD 1.276 trillion in 2024, and specialized consulting helps companies capture more of that value by optimizing development, reducing risk, and launching products more efficiently, according to NMS Consulting on product development consulting services.

The three pricing models you’ll see most

| Pricing model | Good fit | Watch out for |
|---|---|---|
| Hourly or daily | advisory, short discovery, fractional support | incentives can drift if scope isn’t controlled |
| Fixed price | clear MVP, migration, defined deliverables | change requests get messy fast |
| Retainer or value-based | ongoing strategic support, recurring guidance | vague expectations if outcomes aren’t defined |

Hourly pricing is fine for audits, architecture review, and product scoping. Fixed price is better for contained builds. Retainers work when you expect recurring involvement from someone senior.

A practical ROI frame

Use this four-part test before signing anything:

- Speed gain: Does external help let you ship sooner, validate sooner, or unblock a major dependency?

- Risk reduction: Does the consultant lower the chance of rework, compliance issues, unstable releases, or bad architectural decisions?

- Hiring avoidance: Are you solving a short-term or specialist need that doesn’t justify a full-time hire?

- Knowledge transfer: Will your internal team be more capable after the engagement ends?
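The first three parts of that test can be turned into back-of-the-envelope arithmetic. The sketch below is an assumption-heavy illustration, not a pricing model: the `EngagementCase` fields are hypothetical, every number in the example is made up, and knowledge transfer is left out because it is hard to put a defensible figure on.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EngagementCase:
    consulting_cost: float          # total fees for the engagement
    weeks_saved: float              # speed gain versus doing it alone
    weekly_cost_of_delay: float     # team burn plus deferred revenue per week
    rework_probability_drop: float  # e.g. going from 0.30 to 0.10 is 0.20
    rework_cost: float              # cost of rebuilding if the risk lands
    hiring_cost_avoided: float      # recruiting and ramp-up you skip


def net_benefit(case: EngagementCase) -> float:
    """Estimated benefit minus fees, in the same currency as the inputs."""
    speed_gain = case.weeks_saved * case.weekly_cost_of_delay
    risk_reduction = case.rework_probability_drop * case.rework_cost
    return speed_gain + risk_reduction + case.hiring_cost_avoided - case.consulting_cost


if __name__ == "__main__":
    # Purely illustrative inputs.
    case = EngagementCase(
        consulting_cost=60_000,
        weeks_saved=6,
        weekly_cost_of_delay=15_000,
        rework_probability_drop=0.20,
        rework_cost=100_000,
        hiring_cost_avoided=25_000,
    )
    print(round(net_benefit(case)))
```

With these made-up inputs the estimate comes out at about 75,000 in favor of the engagement. The useful part is not the number. It is being forced to write down what delay and rework would actually cost you.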

Cheap consulting is expensive if your team has to rebuild the work later.

A useful parallel is how leaders think about advisory budgets in adjacent operating areas. This breakdown of budgeting for OKR consulting is helpful because it frames spend around business outcomes, not just service hours. That’s the same mindset you want here.

What I’d approve

I’d approve external consulting spend when the engagement does one of these things clearly:

- Removes a critical bottleneck

- Prevents a known failure mode

- Accelerates customer learning

- Builds internal capability in a specialist area

I would not approve it for vague “innovation support.” If nobody can explain the expected operating change, the spend is premature.

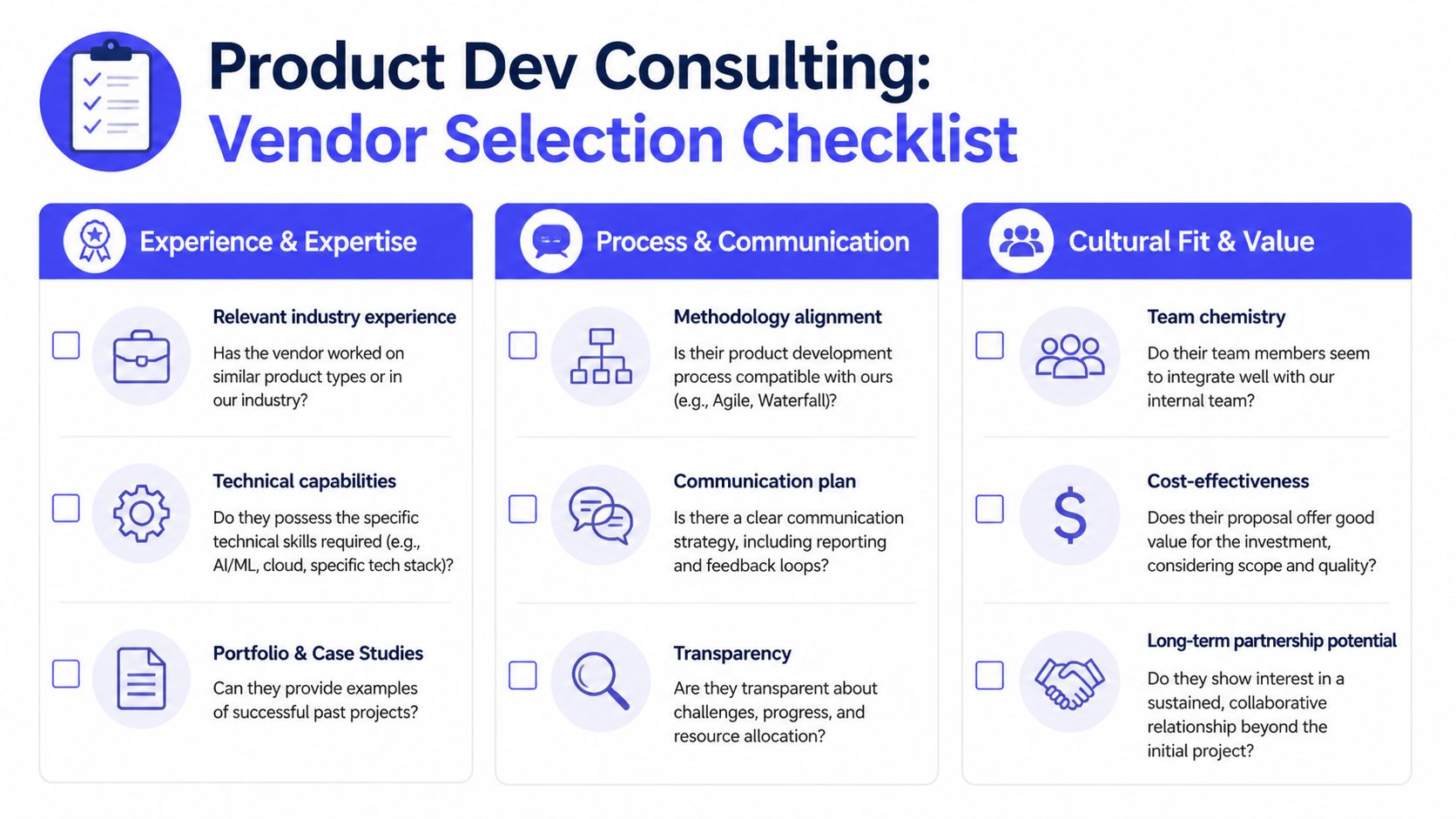

Your Vendor Selection Checklist

Vendor selection goes wrong when teams buy polished decks instead of operating fit. You don’t need the prettiest proposal. You need the partner who can integrate with your team, make decisions under uncertainty, and leave behind better systems than they found.

The hiring environment makes this harder. Delve’s product development consulting page notes that AI hiring demand surged 40% in 2025, and 62% of Series A-D startups reported 3-6 month delays in assembling AI teams. The same source argues that agile talent networks offering fractional or contract AI experts can cut that time to days. That’s a real advantage when your roadmap can’t wait for a full hiring cycle.

The checklist I’d actually use

Experience and expertise

- Relevant product history: Ask whether they’ve built products like yours, not just “worked in AI.” An LLM proof-of-concept team is not automatically a good fit for a regulated fintech workflow.

- Technical depth in your stack: Look for named tools and platform fluency. Kubernetes, MLflow, Kubeflow, ArgoCD, SageMaker, Vertex AI, Azure ML, React, Python, Terraform. Specifics matter.

- Evidence of shipping: Ask for architecture examples, delivery artifacts, and what they handed over. A serious team can describe design trade-offs, not just outcomes.

Process and communication

- Operating cadence: Require weekly decision reviews, clear owners, and a written risk log. If they can’t tell you how they escalate blockers, pass.

- Documentation habit: You want ADRs (architecture decision records), deployment notes, runbooks, and handoff docs. Otherwise knowledge disappears when the engagement ends.

- Transparency under pressure: Ask how they handle a missed milestone or a failed release. The answer tells you more than the pitch.

Cultural fit and value

- Embedded behavior: Can they work in your Jira board, your GitHub flow, and your sprint rituals, or do they force their own process every time?

- Bias toward transfer, not dependence: The best vendors teach while they build.

- Flexible resourcing: You may need one expert now and a small squad later. Traditional firms often struggle here. Talent networks are usually better at giving you targeted expertise without forcing a giant engagement.

Ask one blunt question in every vendor interview: “If this project gets messy in week three, who makes the call and how fast do we hear about it?”

Five interview questions worth asking

- What part of our scope looks underdefined to you right now?

- Which risks would you tackle in the first two weeks?

- What would you refuse to build the way we currently described it?

- How do you transfer ownership back to internal teams?

- What tooling do you use for model versioning, deployment, and monitoring?

Those questions force the vendor to think like an operator, not a salesperson.

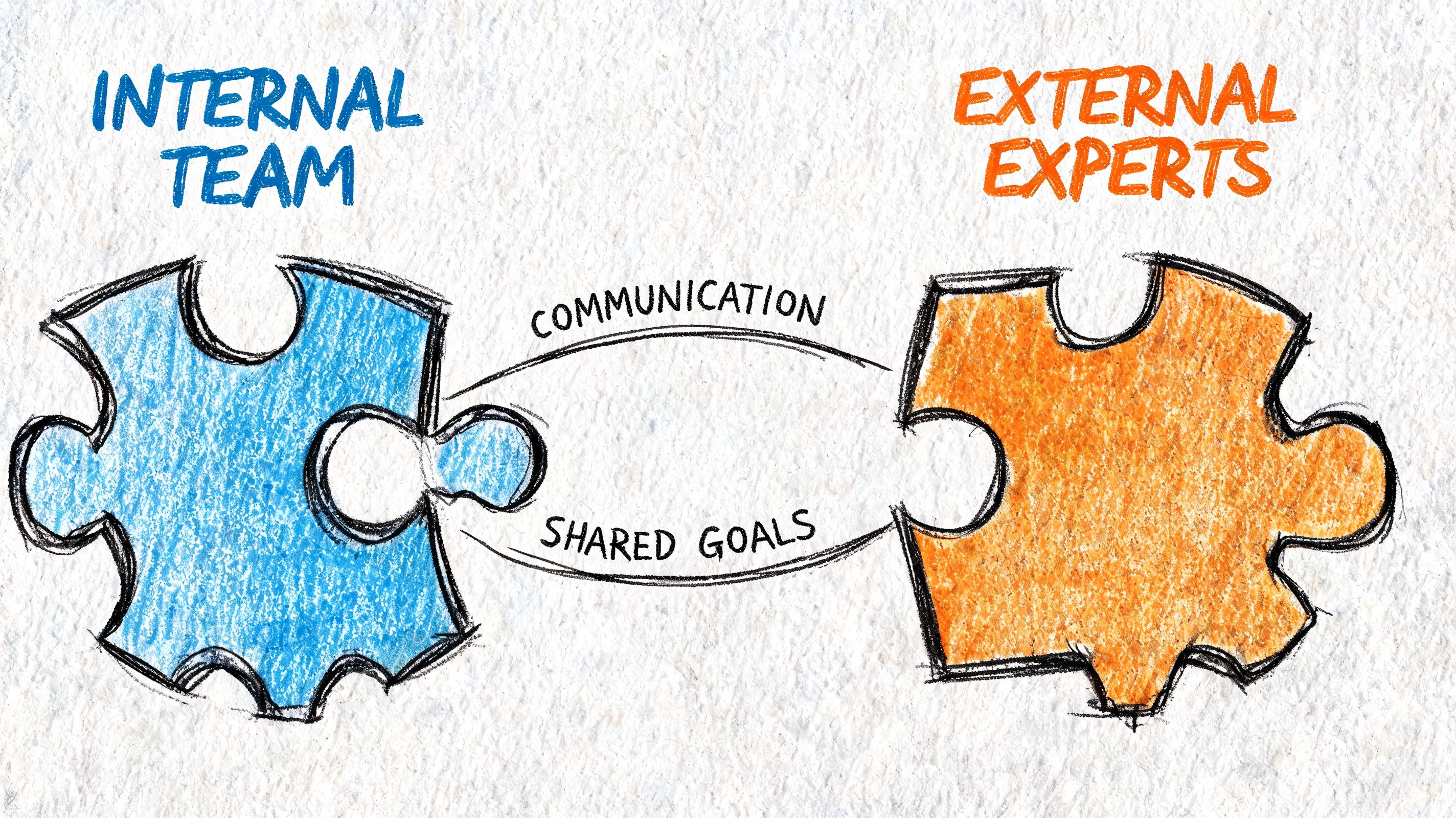

Integrating External Experts with Your Internal Team

Most consulting failures don’t start with technical incompetence. They start with sloppy integration. The vendor has one backlog, your team has another, and nobody owns the seams.

You avoid that by treating external experts like part of the delivery system on day one.

Week one setup

Give them the same operating context your internal leads have. That means access, goals, and decision rights. Not just a kickoff deck.

I’d require this in the first week:

- One shared Slack or Teams channel for daily communication

- Access to Jira, GitHub, docs, dashboards, and staging

- A named internal owner for product and another for technical review

- A written definition of done for the engagement

- A standing review cadence for roadmap, blockers, and risks

Here’s a lightweight onboarding scorecard you can use:

| Area | Must have by end of week one |
|---|---|
| Access | repo, ticketing, environments, documentation |
| Context | product goals, user workflow, constraints, current architecture |
| Ownership | single decision-maker, escalation path, approval flow |
| Delivery | backlog, sprint cadence, reporting format |
| Handoff | documentation expectations, code review rules, training plan |

Shared rituals beat status updates

Don’t run separate meetings for internal and external people unless there’s a legal reason. Separate meetings create politics and lag.

Instead, run shared rituals:

- Joint sprint planning with one backlog

- Midweek blocker review with the actual decision-makers

- End-of-sprint demo tied to user value, not just ticket completion

- Retro focused on friction between teams, tools, and ownership


Avoid the two common failure modes

The first failure mode is treating consultants like isolated implementers. They ship code, but they never fully understand user context or platform constraints.

The second is the opposite. You pull them into every meeting, create too many reviewers, and slow them to a crawl.

The right balance is simple:

- Include them in product-critical conversations

- Exclude them from low-value internal churn

- Review decisions fast

- Keep one shared source of truth

External experts should feel embedded in the work, not trapped in your org chart.

A simple operating charter

Use this for any consulting engagement longer than a few weeks:

- Mission: one sentence on what must change by the end of the engagement

- Scope guardrails: what is explicitly out

- Decision owners: product, engineering, security, data

- Communication rhythm: daily async, weekly sync, sprint demo

- Success evidence: the artifact or system behavior that proves the work is done

If you don’t write this down, both sides will invent their own version.

What to Do Next: A 3-Step Plan

You don’t need a huge procurement exercise to get started. You need a tighter decision loop.

Step 1: Scope the real bottleneck

Use the checklist from this article and identify the constraint that’s slowing you down. Be honest about whether the problem is product definition, engineering capacity, model operations, UX, or hiring lag.

Write the brief in plain English. User, workflow, business outcome, technical constraints, and what success looks like. If you can’t do that in one page, your scope still isn’t ready.

Step 2: Align internally before talking to vendors

Pull the CTO, product lead, and whoever owns budget into one short meeting. Decide three things:

- What you need help with right now

- Which engagement model fits

- Who will own the vendor internally

This prevents the classic mess where leadership wants strategy, engineering wants hands-on delivery, and procurement buys something in the middle that satisfies nobody.

Step 3: Start small and force clarity fast

Don’t begin with a giant statement of work unless the need is already obvious and stable. Start with a scoped discovery sprint, architecture audit, or narrow execution milestone.

That gives you signal quickly. You’ll see how the external team communicates, how they reason about trade-offs, and whether they can work inside your operating model. If the fit is strong, expand from there.

The companies that get the most from product development consulting services do one thing consistently. They use outside experts to remove a specific risk or accelerate a specific decision. They don’t outsource ownership. They gain an advantage.

If you need senior AI talent without waiting through a long hiring cycle, ThirstySprout helps startups and enterprises hire vetted AI engineers, MLOps specialists, and fractional experts in days. You can start with one specialist or a full remote AI team. If you’re ready to move, start a pilot or see sample profiles and scope the engagement around your roadmap, stack, and delivery timeline.
