TL;DR: How to Build Production-Ready AI

- Define a measurable business problem first. Don't "build an AI"; instead, aim to "reduce ticket resolution time by 30%." A specific metric is non-negotiable.

- Secure high-quality data before writing code. Your AI is only as good as its data. For a pilot, you need at least 1,000 clean, relevant examples (e.g., support tickets, documents).

- Start with the simplest model. Use a pre-trained API like GPT-4 for general tasks. Only fine-tune an open-source model (like Llama 3) if you have a niche task and 10,000+ labeled examples.

- Launch a 2–4 week pilot. The goal is a fast proof-of-concept to test your riskiest assumptions, not a polished product. This de-risks the project before committing a large budget.

- Recommended Action: Start a 2-week pilot with one senior AI engineer to validate your use case and data readiness.

Who This Guide Is For

- CTO / Head of Engineering: You need to decide on the architecture and team structure for a new AI feature.

- Founder / Product Lead: You're scoping an AI initiative and need to define the budget, timeline, and business case.

- Technical Lead / Staff Engineer: You're responsible for the technical execution and need a practical framework to avoid common pitfalls.

This guide is for operators who need to deliver business value within weeks, not months. It skips the hype and gives you a step-by-step framework for building AI that actually works in production.

A 5-Step Framework for Building an AI System

Building AI is a disciplined engineering process, not a research experiment. Forget vague goals and focus on a single, high-impact use case. This five-step framework connects every technical choice directly to a measurable business outcome.

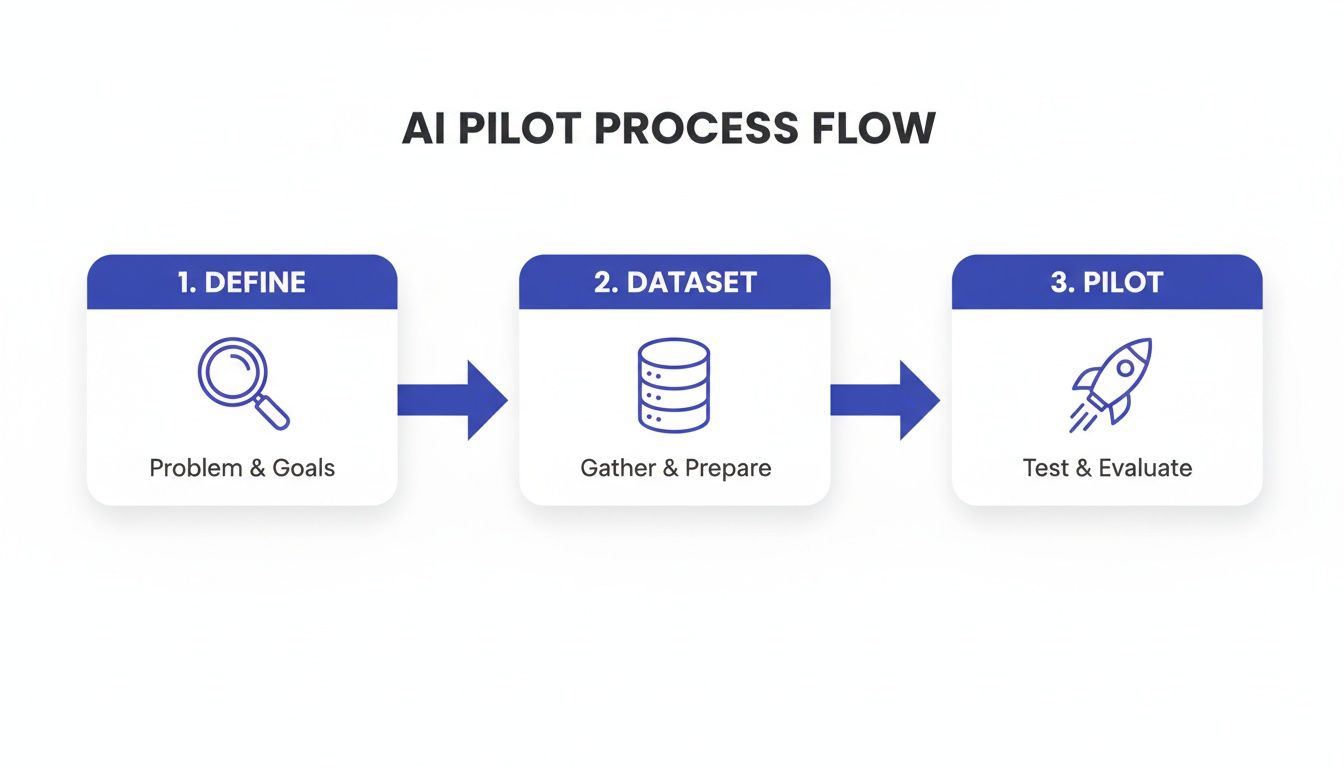

Alt text: A three-step AI pilot process flow diagram showing define, dataset, and pilot phases.

- Define the Business Problem: Translate a business need into a specific, machine-readable task.

- Source & Prepare Data: Identify, collect, and clean the dataset required for your pilot.

- Choose the Model & Architecture: Select the right model (API vs. open-source) and system design.

- Build the MLOps Pipeline: Create the infrastructure to deploy, monitor, and maintain your model.

- Run a 2-Week Pilot: Test your core assumptions with a small-scale experiment to get a clear go/no-go signal.

Following this sequence is your best defense against wasting time and money. You only commit to the next phase after validating the previous one.

Practical Examples: From Goal to Architecture

Abstract frameworks are useful, but real-world examples make them actionable. Here are two common scenarios that show how to apply the process.

Example 1: AI for Customer Support Ticket Classification

A B2B SaaS company wants to reduce response times by automatically routing support tickets.

- Business Goal: Classify 5,000 inbound support tickets per day with 95% accuracy to route them to the correct team (e.g., 'Billing', 'Technical', 'Sales').

- ML Task: This is a multi-class classification problem.

- Data Requirement: At least 5,000 historical Zendesk tickets, each with a clear, human-assigned category label.

- Model Choice: Start with a fine-tuned, smaller open-source model like DistilBERT. It's fast, cheap, and excellent for classification tasks. A large language model (LLM) would be overkill.

- Pilot Success Metric: The pilot model correctly classifies a test set of 500 tickets with ≥95% precision and recall for the top 3 categories.

- Business Impact: A 95% accuracy rate will reduce mis-routed tickets, directly cutting resolution time by an estimated 20–30% and improving customer satisfaction.
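The pilot success metric in this example can be checked with a few lines of code. The following is a minimal sketch using only the Python standard library; the function names, the tiny label lists, and the choice to define "top 3 categories" as the three most frequent true labels are assumptions made for illustration, not part of the original example.

```python
from collections import Counter


def per_class_precision_recall(y_true, y_pred):
    """Return {label: (precision, recall)} for every label seen in either list."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for truth, pred in zip(y_true, y_pred):
        if truth == pred:
            tp[truth] += 1
        else:
            fp[pred] += 1   # predicted this label, but it was wrong
            fn[truth] += 1  # missed the true label
    scores = {}
    for label in set(y_true) | set(y_pred):
        predicted = tp[label] + fp[label]
        actual = tp[label] + fn[label]
        precision = tp[label] / predicted if predicted else 0.0
        recall = tp[label] / actual if actual else 0.0
        scores[label] = (precision, recall)
    return scores


def pilot_passes(y_true, y_pred, top_n=3, threshold=0.95):
    """True if the top_n most frequent true labels all meet the threshold
    on both precision and recall."""
    scores = per_class_precision_recall(y_true, y_pred)
    top_labels = [label for label, _ in Counter(y_true).most_common(top_n)]
    return all(min(scores[label]) >= threshold for label in top_labels)


# Tiny hypothetical test set (a real pilot would use the 500 held-out tickets).
y_true = ["Billing"] * 4 + ["Technical"] * 4 + ["Sales"] * 2
y_pred = ["Billing"] * 4 + ["Technical"] * 3 + ["Sales"] * 3

scores = per_class_precision_recall(y_true, y_pred)
passed = pilot_passes(y_true, y_pred)
```

Reporting precision and recall per category, rather than a single accuracy number, shows which team's queue would receive the mis-routed tickets.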

Example 2: Architecture for an Internal Knowledge Base Q&A Bot

A company wants to build an internal bot to answer employee questions using its documentation.

- Business Goal: Reduce time spent by engineers searching for internal documentation by 50%.

- ML Task: This requires Retrieval-Augmented Generation (RAG). The system must find relevant documents and then use an LLM to synthesize an answer.

- Data Requirement: Access to the company’s Confluence space and Google Drive, containing at least 1,000 documents (guides, policies, post-mortems).

- Ingestion: A Python script periodically scans Confluence/Drive for new/updated docs.

- Chunking & Embedding: Docs are split into 512-token chunks. An embedding model (like text-embedding-3-small) converts them to vectors.

- Vector Store: Vectors are stored in a database like Pinecone or an open-source alternative like Weaviate.

- Retrieval & Generation: When a user asks a question, the system retrieves the top 5 most relevant chunks and passes them to an LLM (GPT-4 from OpenAI or a self-hosted Llama 3) to generate the final answer.

- Business Impact: Faster access to information directly translates to increased developer productivity and faster onboarding for new hires.
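The chunk, embed, retrieve, and generate flow described above can be sketched end to end without any external services. This is a toy illustration under stated assumptions: `toy_embed` is a bag-of-words stand-in for a real embedding model, whitespace-separated words stand in for tokens, a plain Python list stands in for the vector store, and the sample documents and all function names are hypothetical. The final prompt would be sent to whichever LLM you choose; that call is left out.

```python
import math
import re
from collections import Counter


def chunk_words(text, chunk_size=512):
    """Split text into chunks of at most chunk_size whitespace-separated words
    (a rough stand-in for token-based chunking)."""
    words = text.split()
    return [" ".join(words[i:i + chunk_size]) for i in range(0, len(words), chunk_size)]


def toy_embed(text):
    """Stand-in for a real embedding model: a sparse word-count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question, index, top_k=5):
    """Return the top_k chunks most similar to the question."""
    q = toy_embed(question)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:top_k]]


def build_prompt(question, chunks):
    """Assemble the text that would be passed to the LLM."""
    context = "\n---\n".join(chunks)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# Hypothetical documents; a real system would ingest them from Confluence/Drive.
docs = [
    "To rotate API keys open the admin console and select Security",
    "Expense reports are due on the fifth business day of each month",
    "The on-call engineer restarts the payments service after a failed deploy",
]
# In-memory "vector store": a list of (chunk, vector) pairs.
index = [(chunk, toy_embed(chunk)) for doc in docs for chunk in chunk_words(doc, chunk_size=8)]

question = "How do I rotate API keys?"
top = retrieve(question, index, top_k=2)
prompt = build_prompt(question, top)
```

Swapping `toy_embed` for a real embedding model and the list for a vector database changes the quality of retrieval, not the shape of the pipeline.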

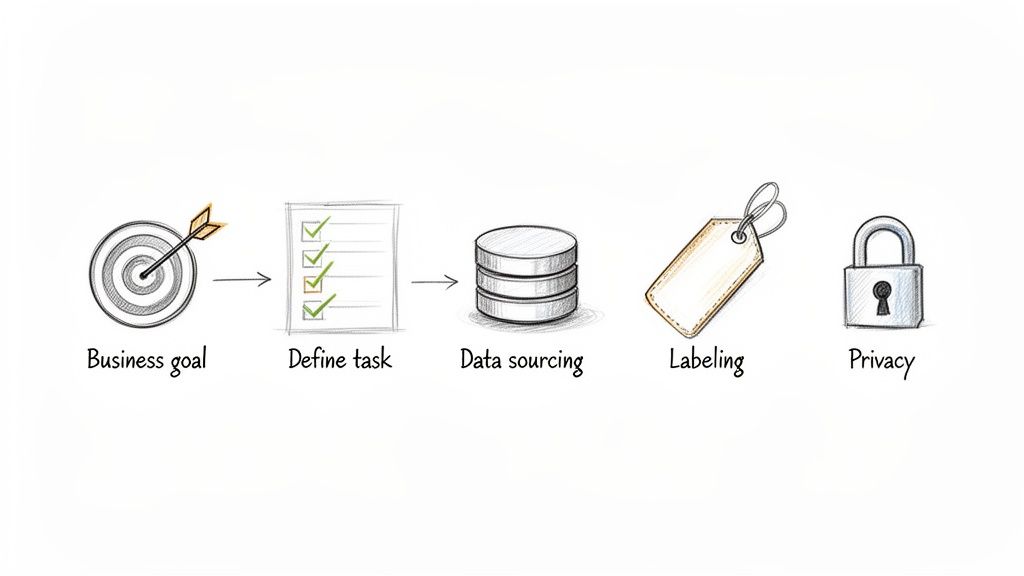

- Sourcing: Where will we get the data? (e.g., our CRM, logs, public datasets).

- Quantity & Quality: Do we have enough for a pilot (at least 1,000 examples)? Is it clean?

- Labeling: Is the data labeled? If not, who will label it, and what are the guidelines?

- Privacy & Compliance: Does the data contain Personally Identifiable Information (PII)? What are our duties under GDPR or CCPA?
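A quick first pass at the privacy question above is to scan a sample of records for obvious PII patterns before anything leaves your environment. The sketch below is an assumption-heavy illustration: the two regular expressions only catch simple email addresses and US-style phone numbers, the function names and sample records are hypothetical, and a pattern scan like this is a screening aid, not a compliance review.

```python
import re

# Deliberately simple patterns; real PII detection needs far broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


def scan_for_pii(records):
    """Return {record_index: [kinds of PII found]} for records with a match."""
    findings = {}
    for i, text in enumerate(records):
        kinds = []
        if EMAIL_RE.search(text):
            kinds.append("email")
        if PHONE_RE.search(text):
            kinds.append("phone")
        if kinds:
            findings[i] = kinds
    return findings


def redact(text):
    """Replace matched emails and phone numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


# Hypothetical support-ticket snippets.
sample = [
    "Invoice total looks wrong, please check.",
    "Reach me at jane.doe@example.com or 555-123-4567.",
]
findings = scan_for_pii(sample)
cleaned = redact(sample[1])
```

If a scan like this flags a meaningful share of records, that is a signal to involve legal before the pilot starts rather than after.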

- Proprietary Foundation Models (e.g., OpenAI's GPT-4, Anthropic's Claude 3): Access via API. Best for general tasks and rapid prototyping. You get top-tier performance instantly but pay per use and have limited control.

- Open-Source Models (e.g., Llama 3, Mistral): Self-host for full control over data, cost, and customization. Ideal for fine-tuning on specific tasks. Our guide on how to fine-tune LLMs covers this.

- Custom-Trained Models: Build from scratch. Offers ultimate control but requires deep expertise, massive data, and significant time and cost. Rarely the right choice for a first project.

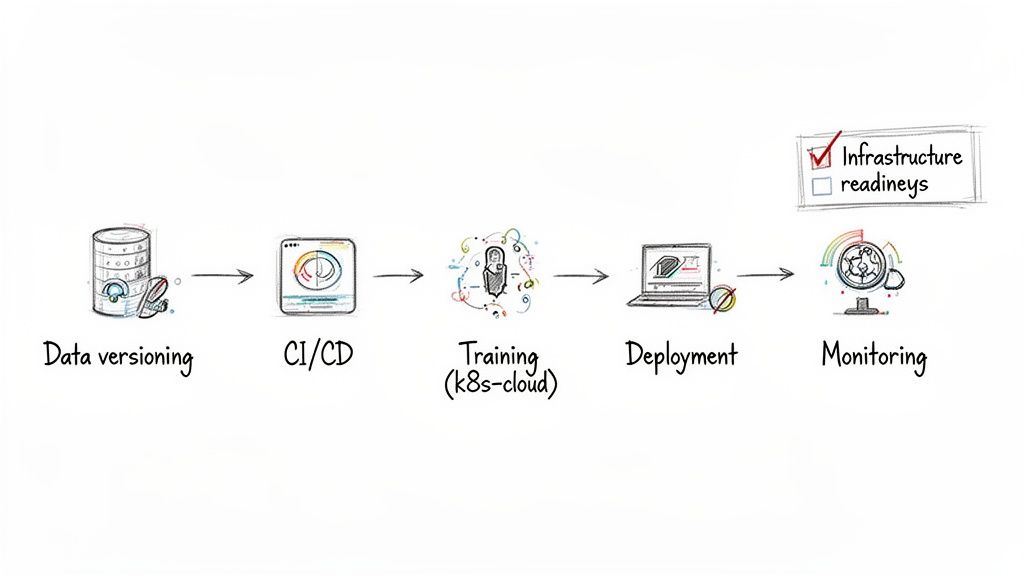

- Version Control: For code (Git), data (DVC), and models (Git LFS).

- CI/CD Pipelines: To automatically test and deploy new model versions.

- Scalable Infrastructure: Using managed services like Amazon SageMaker or container platforms like Kubernetes to handle compute demands.

- AI/ML Engineer: The model builder. They experiment with frameworks like PyTorch and TensorFlow to create a functioning, predictive model.

- MLOps Engineer: The production expert. They build the automated pipelines using tools like Docker and Kubernetes to ensure the model runs reliably at scale.

- Data Engineer: The data pipeline builder. They ensure a steady flow of clean, high-quality data to fuel the entire system.

- AI Product Manager: The strategist. They own the "why," defining the business problem and success metrics, and ensuring the final product delivers value.

- Book a 20-Minute Scoping Call. Pressure-test your use case with an expert who has seen hundreds of AI projects succeed and fail. This can save you months of wasted effort.

- Review Sample Profiles of Vetted Talent. Understand what "good" looks like. Seeing the profiles of proven AI engineers and MLOps experts helps you calibrate your expectations and write a better job description.

- Launch a Risk-Free 2-Week Pilot. The fastest way to get a go/no-go decision is to build a small proof-of-concept. With one or two senior engineers, you can validate your data, test your core assumptions, and get a realistic estimate for the full project.

- Talent: Salaries for your core AI team.

- Infrastructure: Cloud compute costs for training and inference, often $5k–$50k+ per month.

- API Costs: If using proprietary models, your costs scale directly with usage.

- Maintenance: The ongoing work to fight model drift and keep the system healthy.

- Technical Metric: The model generates email drafts with a 90% acceptance rate.

- Business Metric: Each accepted draft saves a sales rep 10 minutes.

- ROI Calculation: If your team sends 500 emails a week from accepted drafts, you save about 83 hours of work per week (500 × 10 minutes ÷ 60). Multiply that by your sales team's average hourly cost to get a clear dollar value.
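The ROI arithmetic above is simple enough to keep in a small script so the inputs can be changed as the pilot produces real numbers. In this sketch the function names and the $60 hourly cost are hypothetical; the 500 emails, 10 minutes, and 90% acceptance rate come from the example.

```python
def weekly_hours_saved(accepted_drafts_per_week, minutes_saved_per_draft):
    """Hours saved per week from drafts that reps actually accept."""
    return accepted_drafts_per_week * minutes_saved_per_draft / 60


def weekly_dollar_value(hours_saved, hourly_cost):
    """Convert saved hours into a dollar figure."""
    return hours_saved * hourly_cost


# 500 accepted drafts a week, 10 minutes saved each: 5,000 minutes, about 83.3 hours.
hours = weekly_hours_saved(500, 10)

# Hypothetical average hourly cost of a sales rep.
value = weekly_dollar_value(hours, 60.0)

# If 500 is the number of drafts generated and only 90% are accepted,
# the saving is based on 450 drafts instead.
hours_if_generated = weekly_hours_saved(500 * 0.90, 10)
```

Being explicit about whether the 500 counts generated drafts or accepted drafts matters: the two readings differ by roughly eight hours a week.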

- Software Build vs. Buy Analysis: ThirstySprout Guide

- How to Fine-Tune LLMs: ThirstySprout Guide

- MLOps Best Practices: ThirstySprout Guide

- Timeline of AI History: Wikipedia


Alt text: Diagram illustrating the sequential steps for building an AI system: business goal, define task, data sourcing, labeling, and privacy.

The Deep Dive: Trade-Offs, Pitfalls, and Best Practices

Step 1: Defining the Problem and Assembling Your Data

I've seen more AI projects die from a fuzzy business goal than from any technical hiccup. Before you write a single line of code, you must translate a high-level goal into a specific, measurable machine learning task.

An objective like "improve customer satisfaction" is a recipe for wandering in the dark. A sharp goal like "auto-classify 80% of inbound support tickets with 95% accuracy" gives your team a clear target.

The hard truth: A project without a specific, machine-readable success metric is a research project, not a product initiative. The goal is to get from a vague idea to a clear, testable hypothesis that a pilot can prove or disprove in weeks.

Your data strategy is your AI strategy. The modern AI era arguably kicked off when AlexNet won the 2012 ImageNet challenge, fueled by a massive, high-quality dataset. That moment proved that large-scale, relevant data is the real unlock for AI performance. You can read more in this history of artificial intelligence developments.

Before your team goes any further, answer these questions:

Step 2: Choosing Your Model and Architecture

With your problem framed and data in hand, it's time to pick the engine. The right choice is the simplest one that gets the job done. This decision has a huge ripple effect on your budget, timeline, and capabilities.

Alt text: Diagram comparing AI model development approaches: pre-trained (GPT), fine-tune (Llama), and train from scratch, with cost, data, and time factors.

Your options fall into three main buckets:

This choice is a key part of your initial software build vs. buy analysis and determines whether you can get a prototype running in days or months. Before committing, survey a few comparable models as well; the comparison often suggests new ideas for your architecture.

Step 3: Building Your AI Factory with MLOps

An AI model on a laptop is worthless. To turn it into a reliable product, you need Machine Learning Operations (MLOps). MLOps is the engineering discipline of deploying, monitoring, and maintaining ML models in production.

Without MLOps, you're signing up for slow updates, inconsistent deployments, and models that silently degrade. The guiding principle of MLOps is simple: automate everything you can.

Your MLOps foundation must include:

Defining your infrastructure in code (Infrastructure as Code best practices) is key to achieving the consistency that MLOps promises. For a deeper look, see our guide on MLOps best practices.
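One concrete form of "automate everything you can" is a promotion gate that a CI/CD pipeline runs before deploying a new model version. The sketch below is an illustration under assumptions, not a prescribed MLOps design: the function name, the metric names, the 0.95 accuracy floor, and the 0.01 regression tolerance are all hypothetical values you would set for your own project.

```python
def should_promote(candidate, baseline, max_regression=0.01, min_accuracy=0.95):
    """Decide whether a candidate model may replace the current one.

    candidate and baseline are dicts of metric name -> score in [0, 1].
    Returns (ok, reasons), where reasons lists every failed check.
    """
    reasons = []
    if candidate.get("accuracy", 0.0) < min_accuracy:
        reasons.append("accuracy below floor")
    for name, base_score in baseline.items():
        cand_score = candidate.get(name)
        if cand_score is None:
            reasons.append(f"missing metric: {name}")
        elif cand_score < base_score - max_regression:
            reasons.append(f"{name} regressed")
    return (not reasons, reasons)


# Hypothetical evaluation results on the same held-out test set.
baseline = {"accuracy": 0.95, "recall": 0.93}
good_candidate = {"accuracy": 0.96, "recall": 0.93}
bad_candidate = {"accuracy": 0.96, "recall": 0.90}

ok_good, reasons_good = should_promote(good_candidate, baseline)
ok_bad, reasons_bad = should_promote(bad_candidate, baseline)
```

In a pipeline, a failed gate would stop the deploy step and surface the reasons, so a model that quietly trades recall for accuracy never reaches production unnoticed.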

Step 4: Assembling Your High-Impact AI Team

Technology is only half the battle. Without the right people, your AI project is dead in the water. For most initiatives, you need a small, cross-functional "pod" of four key specialists.

Alt text: A hand-drawn diagram illustrating an MLOps workflow from data versioning to monitoring.

The core team includes:

The massive scale of modern models has created a huge talent bottleneck. You're not just looking for keywords on a resume; you're looking for operators who have shipped and maintained production AI systems. You can learn more about how we got here by exploring the history of artificial intelligence.

Checklist: AI Project Scoping Template

Use this checklist to assess the feasibility of any new AI initiative before committing significant resources. A "No" on any of these is a major red flag.

| Stage | Key Question | Yes/No | Required Role |
|---|---|---|---|
| Problem Definition | Is the business problem specific and measurable (e.g., "reduce X by Y%")? | | Product Lead, Domain Expert |
| Data Strategy | Do we have access to 1,000+ clean, labeled data points for a pilot? | | Data Scientist, Data Engineer |
| Pilot Scoping | Is there a minimum viable experiment we can run in 2–4 weeks? | | Tech Lead, ML Engineer |
| Metric Alignment | Does our technical success metric (e.g., accuracy) directly map to the business goal? | | All Stakeholders |
| Risk Assessment | Have we identified and planned for data privacy and compliance risks? | | Legal, Product Lead |

What To Do Next: Your 3-Step Action Plan

Reading is good, but doing is better. Here are three concrete steps you can take this week to move your AI project from idea to reality.

Ready to start building? We help you launch a pilot with world-class remote AI engineers in under two weeks.

Start a Pilot or See Sample Profiles to take the next step.

Frequently Asked Questions

Here are answers to the most common questions I get from founders and engineering leaders.

What is the most common mistake when building a first AI product?

Building a solution in search of a problem. A team gets captivated by a new model, builds a technically impressive demo, and then discovers it has zero business impact.

Always start with a genuine, painful operational bottleneck. From day one, anchor your project to a concrete metric, like "reduce customer onboarding time by 40%." The goal isn't to "use AI"; it's to solve a business problem. If a simpler method works, do that first.

How much does it realistically cost to build and maintain an AI system?

It varies, but here are some realistic ranges. A 2–4 week pilot to prove the concept typically costs $15,000 to $40,000.

Taking that pilot to a full production system and maintaining it for a year can range from $250,000 to over $1 million. This covers:

Your choice of model architecture is the biggest cost driver. Self-hosting an open-source model has a higher setup cost but can be cheaper at scale than pay-per-call APIs.
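The API versus self-hosting trade-off can be framed as a break-even volume. The following sketch assumes a simple linear cost model, and every number in it is hypothetical: a fixed monthly self-hosting cost (infrastructure plus amortized setup), a per-request API price, and a small per-request marginal cost when self-hosting. Plug in your own quotes before drawing conclusions.

```python
def monthly_api_cost(requests_per_month, cost_per_request):
    """Pay-per-call cost: scales directly with usage."""
    return requests_per_month * cost_per_request


def monthly_self_host_cost(fixed_monthly_cost, requests_per_month, marginal_cost_per_request=0.0):
    """Self-hosting cost: a fixed base plus a smaller per-request cost."""
    return fixed_monthly_cost + requests_per_month * marginal_cost_per_request


def break_even_requests(fixed_monthly_cost, api_cost_per_request, marginal_cost_per_request=0.0):
    """Monthly request volume above which self-hosting is cheaper,
    or None if the API is always cheaper per request."""
    saving_per_request = api_cost_per_request - marginal_cost_per_request
    if saving_per_request <= 0:
        return None
    return fixed_monthly_cost / saving_per_request


# Hypothetical inputs: $8,000/month fixed, $0.02 per API call, $0.004 per self-hosted call.
volume = break_even_requests(
    fixed_monthly_cost=8000.0,
    api_cost_per_request=0.02,
    marginal_cost_per_request=0.004,
)
# About 500,000 requests per month under these assumptions.
```

Below the break-even volume the API is the cheaper option, which is one reason a pilot usually starts there.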

How do I measure the ROI of an AI project effectively?

You measure Return on Investment (ROI) by connecting the AI's technical performance directly to the business metric you defined at the start.

For example, you build a tool to help your sales team draft emails:

This direct link makes it easy to justify the project's value to the rest of the company.

References & Further Reading
