Before you hire your next AI engineer, ask a simpler question than “Can they train a model?” Ask whether they can manage memory under pressure, debug a bad pointer at 2 a.m., and explain why a tiny C mistake can take down an inference path. That's still where strong systems engineers separate themselves from framework users.

C remains a staple interview topic for systems and embedded roles, and interview prep resources document 100+ actively circulating C interview questions across foundational and advanced topics. That matters for AI teams more than many CTOs expect. The engineers who own tokenizers, inference runtimes, data plane services, device-side integrations, or performance-critical extensions still need to reason about memory layout, lifetime, and code execution cost at a low level.

That's also why generic C programming interview questions often miss the point. Most lists test syntax recall. Strong hiring loops test whether a candidate can reason about pointers, dynamic allocation, structs, unions, arrays, and ownership. Interview guides consistently converge on those topics, especially memory-related concepts that expose real engineering depth rather than surface familiarity (InterviewBit's C interview guide highlights pointers, direct processor interfacing, and dynamic memory management as core areas).

If you're hiring for AI infrastructure, model serving, or MLOps-adjacent systems work, use C questions as a proxy for speed, reliability, and operational risk. A candidate who can explain undefined behavior, allocation failure handling, and data representation usually ramps faster on performance-sensitive code. If you're also budgeting the broader platform team around these hires, this DataEngineeringCompanies.com guide to MLOps pricing helps frame the delivery trade-offs around build versus buy.

1. Pointers and Memory Management

The fastest way to tell whether a C candidate can handle production-grade systems work is to ask about pointers, then keep pushing until they move past textbook definitions.

A weak answer sounds like “a pointer stores an address.” A strong answer explains ownership, aliasing, dereferencing risk, and what happens when multiple parts of a system believe they own the same allocation. That's the difference between someone who can write a benchmark and someone who can keep an inference server alive.

A practical question set looks like this:

- Swap correctly: Ask them to write a function that swaps two integers using pointers, then explain why pass-by-value won't work in C.

- Handle failure paths: Ask what happens when code dereferences NULL, and what defensive checks belong in library code versus hot paths.

- Reason about leaks: Ask for a concrete example of a memory leak and how they'd catch it before production.
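For interviewer calibration, here is a minimal sketch of what a passing answer to the swap prompt might look like. The function names `swap_ints` and `swap_by_value` are illustrative, and returning -1 on a NULL argument is just one possible defensive policy, not the only reasonable one.

```c
#include <stddef.h>

/* Swaps the two integers that a and b point to.
 * C passes arguments by value, so the function needs the addresses
 * of the caller's variables in order to modify them.
 * Returns 0 on success, -1 if either pointer is NULL (illustrative policy). */
int swap_ints(int *a, int *b)
{
    if (a == NULL || b == NULL) {
        return -1; /* refuse to dereference NULL */
    }
    int tmp = *a;
    *a = *b;
    *b = tmp;
    return 0;
}

/* Broken on purpose: x and y are copies of the caller's values,
 * so the swap is invisible outside this function. */
void swap_by_value(int x, int y)
{
    int tmp = x;
    x = y;
    y = tmp;
    (void)x; /* silence unused-value warnings */
    (void)y;
}
```

A candidate who can explain why `swap_by_value` leaves the caller's variables untouched has understood the point of the question, not just the syntax.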

What good answers sound like

Good candidates initialize pointers, check assumptions before dereferencing, and describe a consistent ownership model. Better ones will volunteer that “allocate, use, free” is not enough if ownership isn't documented.

Practical rule: If a candidate can't describe who frees memory in a simple API, don't trust them with high-throughput model-serving code.

For AI teams, this topic maps directly to custom kernels, native extensions, tokenizer paths, and low-latency services. Teams that care about raw speed often compare language choices, but the primary bottleneck is usually whether the engineer understands the trade-off between control and safety. That's the same trade-off discussed in this breakdown of the fastest computer language.

One more hiring signal matters here. Broad interview resources repeatedly emphasize pointers and memory behavior because they expose real technical depth, not just syntax recall. If your recruiters need help spotting that depth before a final panel, this guide offers practical advice for tech recruiters.

A useful follow-up in the interview is: “You free a pointer, then another part of the code still uses it. What class of bug is this, and how would you narrow it down?” Candidates who think in terms of lifetime and ownership boundaries are usually the ones you want.

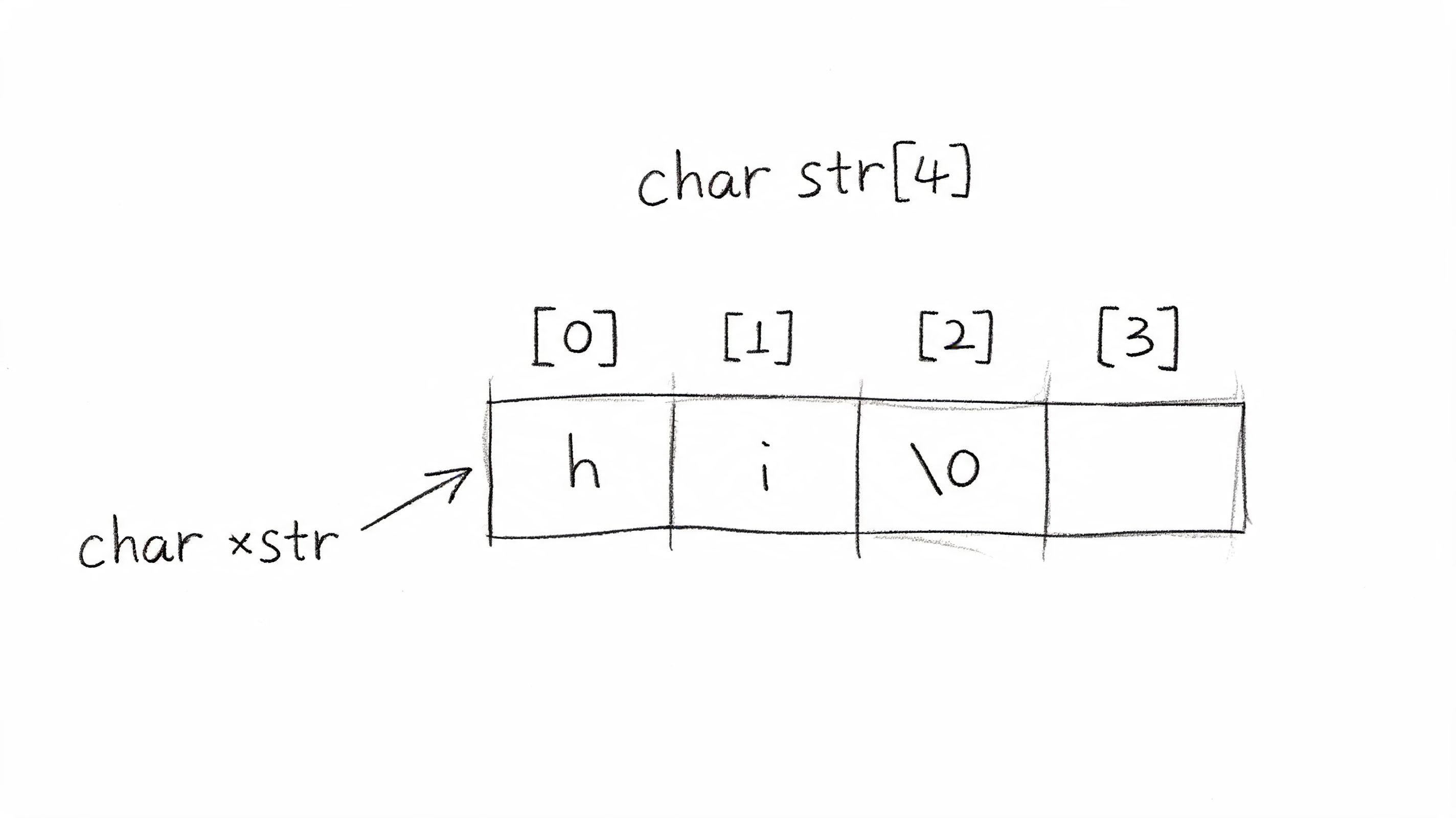


2. Arrays and Strings

Arrays and strings look basic. They aren't basic when your team processes token streams, binary payloads, prompts, and serialized metadata at scale.

A candidate who understands contiguous memory usually writes cleaner parsing and preprocessing code. A candidate who doesn't will treat strings as harmless, then ship unsafe copies, off-by-one bugs, and brittle buffer handling.

Start with a question that sounds easy: “What's the difference between char str[10] and char *str?” The answer tells you whether they understand storage, mutability constraints, array decay, and memory ownership. Then ask them to reverse a string in place and explain where buffer overflow risk enters the implementation.
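As a reference point for that prompt, a sketch of an in-place reversal might look like the following. The name `reverse_in_place` is hypothetical, and the function assumes the caller passes writable, NUL-terminated storage.

```c
#include <stddef.h>
#include <string.h>

/* Reverses a NUL-terminated string in place.
 * The caller must pass writable storage (an array or heap buffer),
 * never a string literal, which must not be modified. */
void reverse_in_place(char *s)
{
    if (s == NULL) {
        return;
    }
    size_t len = strlen(s);
    if (len < 2) {
        return; /* empty and one-character strings are already reversed */
    }
    size_t i = 0;
    size_t j = len - 1; /* last character before the terminator */
    while (i < j) {
        char tmp = s[i];
        s[i] = s[j];
        s[j] = tmp;
        i++;
        j--;
    }
}
```

With `char buf[10] = "token";` the call `reverse_in_place(buf)` is fine, and `sizeof buf` is 10 because `buf` is an array. With `const char *p = "token";` the storage belongs to a string literal, `sizeof p` is the size of a pointer, and passing `p` to this function would be a bug. The overflow risk the interviewer is probing for usually appears when a candidate trusts a length that did not come from the buffer itself.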

A realistic AI-team scenario

Suppose your team owns a tokenizer preprocessor written in C for throughput. It receives variable-length input, normalizes text, and writes into a destination buffer. Ask the candidate how they'd validate lengths, preserve the null terminator, and avoid writing past the destination.

Good candidates usually mention:

- Array length discipline: Use sizeof where it applies, and don't confuse compile-time array size with pointer size after decay.

- Safe writes: Prefer bounded operations such as snprintf and explain their limits instead of blindly trusting “safe” names.

- Null terminator awareness: Reserve space for the terminator and verify assumptions on every write path.
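The points above can be sketched as a small bounded-copy helper. `copy_bounded` is a made-up name for illustration, and the three-way return code is an assumed convention rather than anything from a standard library.

```c
#include <stddef.h>
#include <stdio.h>

/* Copies src into dst without writing past dst_size bytes.
 * dst is always NUL-terminated on a 0 or 1 return.
 * Returns 0 if the whole string fit, 1 if it was truncated,
 * and -1 on invalid arguments or an snprintf error. */
int copy_bounded(char *dst, size_t dst_size, const char *src)
{
    if (dst == NULL || src == NULL || dst_size == 0) {
        return -1;
    }
    /* snprintf returns the length the full output would have had,
     * not counting the terminator, so truncation is detectable. */
    int needed = snprintf(dst, dst_size, "%s", src);
    if (needed < 0) {
        return -1;
    }
    return ((size_t)needed >= dst_size) ? 1 : 0;
}
```

The detail worth listening for is the return-value check. A bounded function that silently truncates still produces wrong data if nobody looks at the result.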

Teams often discover too late that “just string handling” was actually input validation, memory safety, and data quality all mixed together.

This category also aligns with how mainstream interview resources organize C prep. W3Schools groups its interview content around basics, loops, arrays and strings, pointers and memory, then functions and structures. That convergence is useful because it reflects a common baseline interview pattern rather than one company's trivia set (see the topic breakdown in the W3Schools C interview questions guide).

One interview pattern works well here. Give a short parsing task with one intentional trap, such as a destination buffer that's almost large enough. Then ask the candidate to narrate the edge cases before they code. You'll learn more from that than from a polished implementation copied from memory.

3. Structures and Unions

If pointers reveal whether a candidate can handle memory, structs and unions reveal whether they can model data cleanly without wasting space or creating ambiguity.

That matters in AI systems more than it seems. Native model metadata, device buffers, token records, feature blobs, and checkpoint headers all depend on predictable layout. An engineer who can't reason about padding, alignment, and shared storage will eventually create serialization bugs that are painful to diagnose.

Use a design-heavy prompt instead of a quiz. Ask them to define a LayerConfig for a neural network runtime. Include fields like type, dimensions, activation mode, and optional parameters. Then ask how they'd model variants without bloating every instance.

What to probe

A strong candidate will distinguish a struct from a union in practical terms. Struct members each get their own storage. Union members share storage, so only one interpretation is valid at a time. The best answers include the trade-off: unions save space, but they increase correctness risk unless you pair them with a clear discriminator.

A good follow-up set:

- Padding awareness: Ask how member ordering affects size.

- Serialization caution: Ask when packed layouts are worth the portability cost.

- Variant safety: Ask how they'd document which union field is currently valid.
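To make the padding question concrete, here is a small sketch with two hypothetical record layouts that hold the same fields in a different order. Exact sizes are implementation-defined, so the numbers mentioned afterward are an assumption about typical 64-bit ABIs, not a guarantee.

```c
#include <stddef.h>
#include <stdint.h>

/* Fields ordered carelessly: small members sit between wide ones,
 * so the compiler typically inserts padding to satisfy alignment. */
struct token_record_loose {
    uint8_t  kind;
    uint64_t count;
    uint8_t  flags;
    uint32_t dim;
};

/* Same fields, ordered from widest to narrowest.
 * This usually leaves only a little tail padding. */
struct token_record_tight {
    uint64_t count;
    uint32_t dim;
    uint8_t  kind;
    uint8_t  flags;
};
```

On common 64-bit targets the first layout usually occupies 24 bytes and the second 16. A strong candidate will suggest verifying that with `sizeof` and `offsetof` on the actual target rather than assuming it.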

Here's a mini-case worth using in interviews. Your inference service sends a compact binary request descriptor from one process to another. The candidate uses a union to represent either integer quantization parameters or floating-point scaling parameters. Ask what can go wrong if another engineer reads the wrong field after deserialization. The strongest candidates talk about protocol versioning, explicit tags, and layout documentation.
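One way a candidate might answer that mini-case is a tagged union, sketched below. All type, field, and function names here are hypothetical, and the sketch covers only the in-memory representation, not the wire format or versioning.

```c
#include <stddef.h>
#include <stdint.h>

/* Discriminator values: which union member is currently valid. */
enum quant_kind {
    QUANT_INT = 1,
    QUANT_FLOAT = 2
};

struct quant_params {
    enum quant_kind kind; /* must be set before any union member is read */
    union {
        struct { int32_t zero_point; int32_t multiplier; } i;
        struct { float scale; float offset; } f;
    } u;
};

/* Reads the float scale only when the tag says the float member is valid.
 * Returns 0 on success, -1 if the arguments are NULL or the tag disagrees. */
int quant_get_float_scale(const struct quant_params *p, float *out)
{
    if (p == NULL || out == NULL || p->kind != QUANT_FLOAT) {
        return -1;
    }
    *out = p->u.f.scale;
    return 0;
}
```

Routing every read through an accessor that checks the tag is one way to stop the next engineer from interpreting integer parameters as floats after deserialization.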

Clean data representation lowers two costs at once: runtime overhead and debugging overhead.

This is one of those C programming interview questions where candidates often expose their maturity indirectly. Juniors talk about syntax. Seniors talk about ABI stability, field ordering, and how to prevent misuse by the next engineer who touches the code.

4. Dynamic Memory Allocation and Deallocation

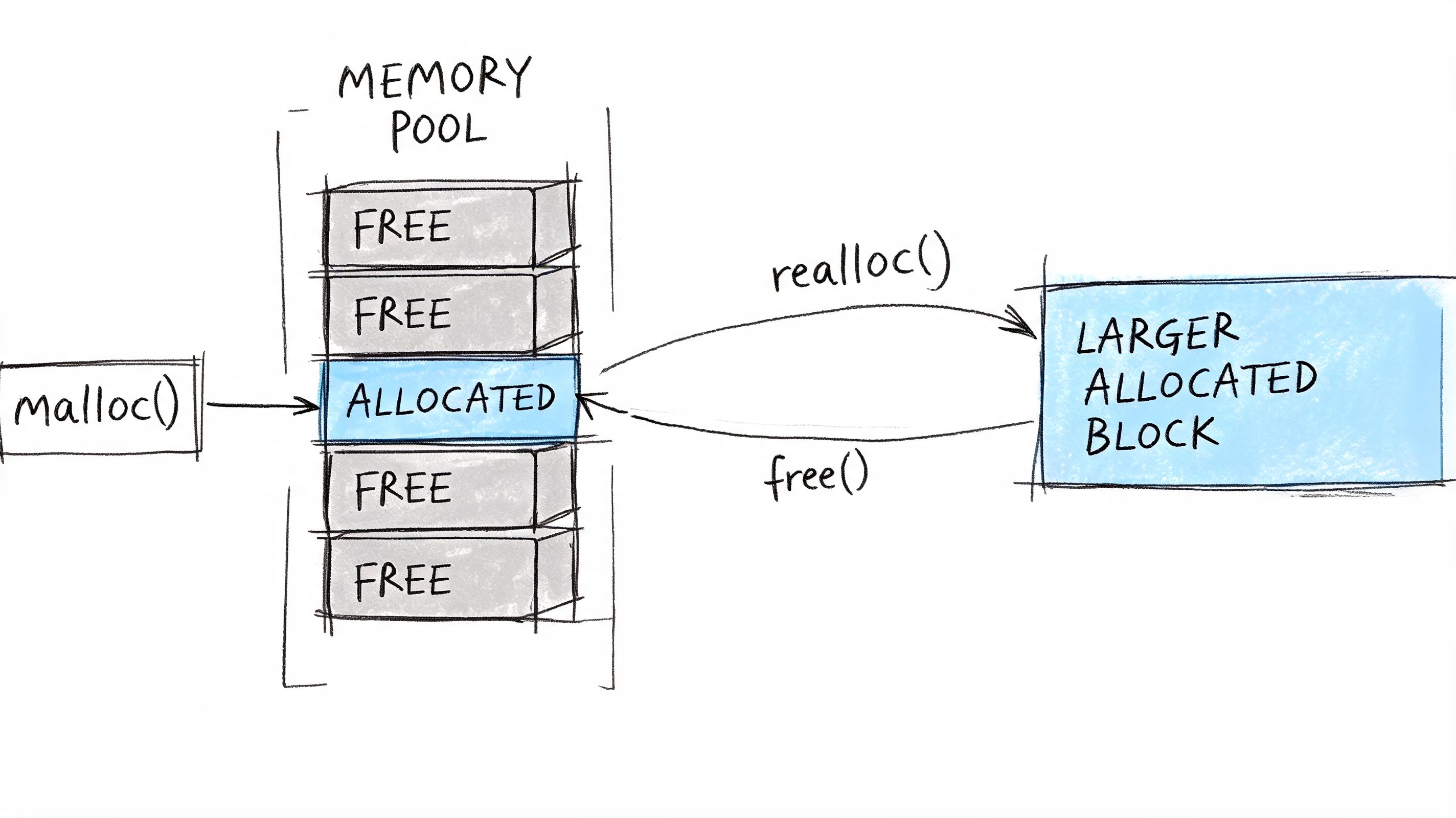

Most C interview loops ask about malloc, calloc, realloc, and free. That's necessary, but it isn't sufficient. In hiring, the useful signal is whether the candidate can make allocation decisions under uncertain input sizes without turning the codebase into a leak farm.

This matters for AI systems because tensor shapes change, request sizes vary, and preprocessing pipelines rarely know all dimensions at compile time. If a candidate has only memorized function signatures, they won't handle production pressure well.

Use a task like: “Allocate a 2D array for a variable-size batch, then clean up correctly if allocation fails halfway through.” That immediately tests error handling, cleanup order, and whether they understand partial initialization.
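A reference answer to that task could look like the sketch below. `alloc_batch` and `free_batch` are illustrative names, and the row-pointer layout is only one option; a single contiguous block indexed manually is often a better fit for hot paths.

```c
#include <stdlib.h>

/* Frees the first n_rows rows and then the row-pointer array itself.
 * Safe to call with rows == NULL. */
void free_batch(float **rows, size_t n_rows)
{
    if (rows == NULL) {
        return;
    }
    for (size_t i = 0; i < n_rows; i++) {
        free(rows[i]);
    }
    free(rows);
}

/* Allocates an n_rows x n_cols zero-initialized batch.
 * Returns NULL on invalid sizes or if any allocation fails,
 * in which case nothing allocated here is leaked. */
float **alloc_batch(size_t n_rows, size_t n_cols)
{
    if (n_rows == 0 || n_cols == 0) {
        return NULL;
    }
    float **rows = calloc(n_rows, sizeof *rows);
    if (rows == NULL) {
        return NULL;
    }
    for (size_t i = 0; i < n_rows; i++) {
        rows[i] = calloc(n_cols, sizeof **rows);
        if (rows[i] == NULL) {
            /* Partial failure: unwind only the i rows that succeeded. */
            free_batch(rows, i);
            return NULL;
        }
    }
    return rows;
}
```

The line to watch for is the unwind on partial failure. Candidates who free only the pointer array, or who free all `n_rows` entries including ones never assigned, have not thought through partial initialization.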

The answers that reduce risk

Good candidates check the return value of every allocation before use. Better candidates explain what happens to the original pointer on realloc failure and avoid patterns that lose the old address before the resize succeeds.

A compact interviewer rubric:

- Failure handling: They never assume allocation succeeds.

- Cleanup discipline: They can unwind partially completed work without leaking.

- Allocation strategy: They can explain when a pool allocator or preallocation makes more sense than repeated small allocations.

For a high-performance team, this topic maps cleanly to service stability and cloud cost. Repeated small allocations in a hot path create avoidable overhead. Sloppy deallocation creates leaks that force earlier restarts or more expensive overprovisioning. If you want adjacent interview prompts for evaluating this level of engineering discipline, this set of technical interview questions for engineers is a useful companion.

One practical mini-case. A candidate builds an embedding ingestion service in C that resizes a buffer every time a new record arrives. Ask how they'd redesign it for steadier throughput. Strong answers mention growth strategy, reuse, and fragmentation concerns instead of just switching to realloc everywhere.
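As a rough illustration of what "growth strategy" can mean in that answer, here is a sketch of an append buffer that doubles its capacity and never loses the old pointer when realloc fails. The `byte_buf` type, the function names, and the initial capacity of 64 bytes are all assumptions made for this example.

```c
#include <stdint.h>
#include <stdlib.h>
#include <string.h>

struct byte_buf {
    unsigned char *data; /* owned by the buffer; release with buf_free */
    size_t len;          /* bytes in use */
    size_t cap;          /* bytes allocated */
};

/* Appends n bytes, growing capacity geometrically when needed.
 * Returns 0 on success, -1 on bad arguments, overflow, or allocation
 * failure. On failure the existing contents stay valid and owned. */
int buf_append(struct byte_buf *b, const void *src, size_t n)
{
    if (b == NULL || (src == NULL && n > 0)) {
        return -1;
    }
    if (n > SIZE_MAX - b->len) {
        return -1; /* len + n would overflow */
    }
    size_t needed = b->len + n;
    if (needed > b->cap) {
        size_t new_cap = b->cap ? b->cap : 64;
        while (new_cap < needed) {
            if (new_cap > SIZE_MAX / 2) {
                new_cap = needed; /* cannot double without overflow */
                break;
            }
            new_cap *= 2;
        }
        /* Assign to a temporary first: if realloc fails it returns NULL
         * and the original block is still allocated. */
        unsigned char *tmp = realloc(b->data, new_cap);
        if (tmp == NULL) {
            return -1;
        }
        b->data = tmp;
        b->cap = new_cap;
    }
    if (n > 0) {
        memcpy(b->data + b->len, src, n);
    }
    b->len = needed;
    return 0;
}

/* Releases the storage and resets the buffer to an empty state. */
void buf_free(struct byte_buf *b)
{
    if (b != NULL) {
        free(b->data);
        b->data = NULL;
        b->len = 0;
        b->cap = 0;
    }
}
```

Doubling means the number of reallocations grows with the logarithm of the final size instead of with the number of records. Reusing one buffer across requests, rather than freeing and rebuilding it, is the other half of the answer.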

5. Function Pointers and Callbacks

Function pointers are where many candidates who looked solid on basics start to wobble. That's useful, because real systems work often depends on pluggable behavior.

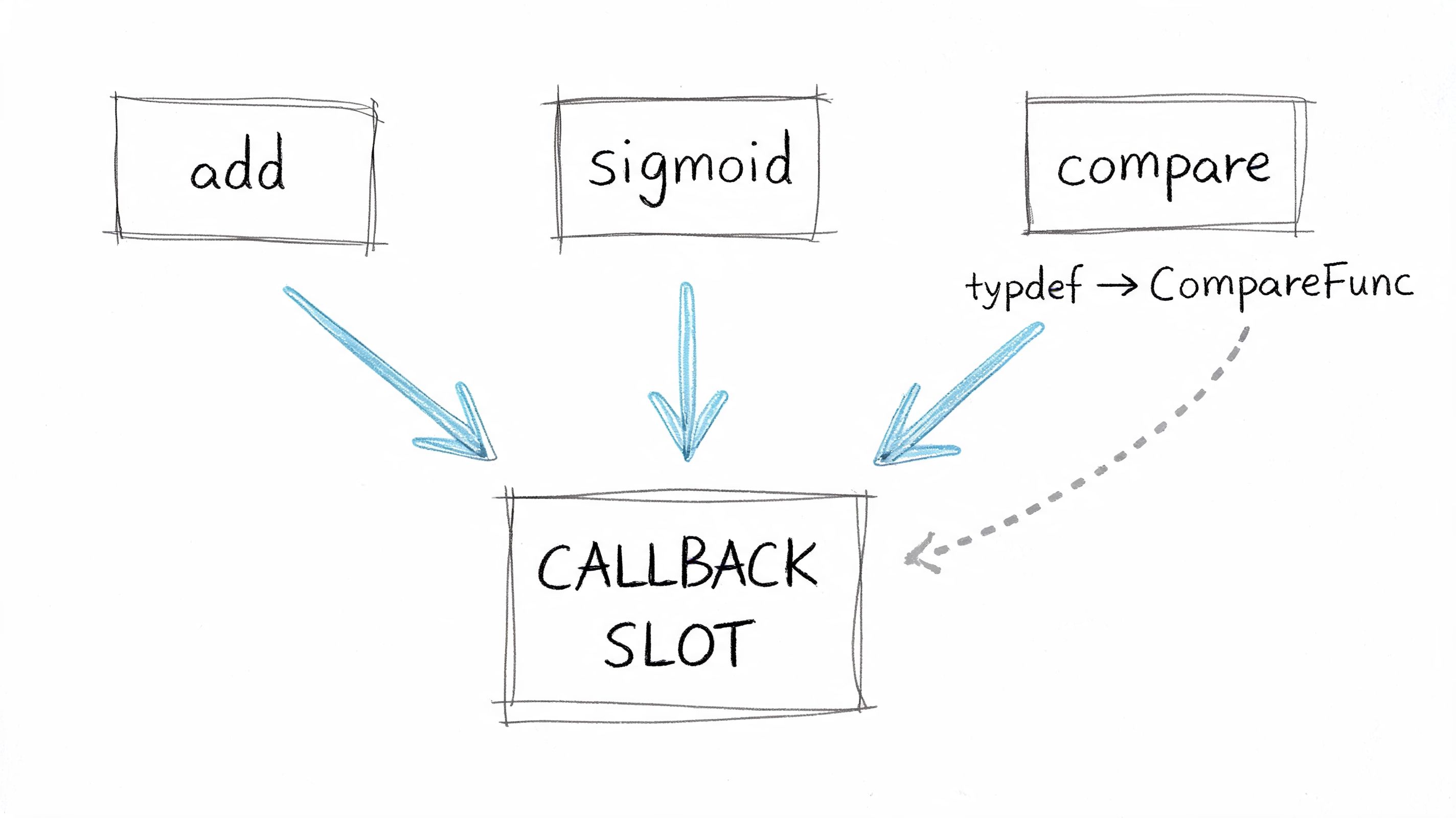

In AI infrastructure, callbacks show up in dispatch layers, metric hooks, plugin architectures, custom comparators, and runtime-selected operations. If someone can't declare and use a function pointer confidently, they'll struggle with extensible low-level code.

A good interview prompt is to ask for a generic sort wrapper with a comparison callback. Then change the requirement. Ask how they'd build a small plugin interface for selectable activation functions or scoring functions. You're testing whether they understand signatures, indirection, and maintainability, not just syntax.

Where this shows up in real teams

Consider an evaluation runner that needs to swap between different scoring behaviors at runtime. A clean C implementation might use function pointers to route to BLEU-style scoring, custom ranking logic, or a binary pass/fail evaluator without changing the caller.

Look for candidates who simplify declarations with typedef, keep signatures explicit, and warn about callback overhead in tight loops. Weak candidates can sometimes write the declaration but can't explain when the indirection is worth it and when a simpler static path is better.
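A stripped-down version of such an evaluation runner might look like this. The `score_fn` typedef and the two scorers are placeholders invented for the example; they stand in for whatever scoring logic a real team would plug in.

```c
#include <stddef.h>
#include <string.h>

/* One readable name for the callback signature. */
typedef double (*score_fn)(const char *expected, const char *actual);

/* Binary pass/fail scorer: 1.0 on exact match, 0.0 otherwise. */
double score_exact(const char *expected, const char *actual)
{
    return strcmp(expected, actual) == 0 ? 1.0 : 0.0;
}

/* Partial-credit scorer: fraction of expected matched as a prefix. */
double score_prefix(const char *expected, const char *actual)
{
    size_t e_len = strlen(expected);
    if (e_len == 0) {
        return 1.0;
    }
    size_t match = 0;
    while (match < e_len && actual[match] != '\0' &&
           expected[match] == actual[match]) {
        match++;
    }
    return (double)match / (double)e_len;
}

/* Averages the chosen scorer over n pairs. The caller picks the
 * behavior at runtime; this function never needs to change. */
double run_eval(score_fn score, const char *const *expected,
                const char *const *actual, size_t n)
{
    if (score == NULL || expected == NULL || actual == NULL || n == 0) {
        return 0.0;
    }
    double total = 0.0;
    for (size_t i = 0; i < n; i++) {
        total += score(expected[i], actual[i]);
    }
    return total / (double)n;
}
```

Calling `run_eval(score_exact, ...)` or `run_eval(score_prefix, ...)` switches behavior without touching the loop. The design question to ask next is whether that indirection is still acceptable when `n` is in the millions and the scorer is trivial.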

A practical scoring note:

- Clear declaration: They make the signature readable.

- Correct use: They pass and invoke the callback correctly.

- Design judgment: They know when flexibility is worth the complexity.

Don't give full credit because someone survived the syntax. Give credit when they can defend the design.

One strong follow-up is: “How would you debug a crash caused by the wrong callback signature being passed through several layers?” The answer tells you whether they think in terms of interface contracts or just local code snippets.

6. File I/O and Stream Operations

A lot of AI systems still fail in boring ways. Corrupt checkpoints. Partial writes. Misread binary files. Silent truncation. That's why file I/O belongs in C programming interview questions for infrastructure hires.

Ask something operational, not academic: “Read a large binary file in chunks and handle errors without corrupting state.” Then ask what changes if the file stores model weights instead of plain text.

Buffering, file modes, and failure handling move from theory to business risk at this stage. A weak engineer treats persistence as an afterthought. A strong one treats it as a reliability boundary.

A production-shaped prompt

Suppose a service periodically writes model artifacts or intermediate embeddings to disk. Ask the candidate how they'd open files, choose text versus binary mode, buffer reads, and guarantee the file gets closed even on failure.

Good answers often include:

- Mode correctness: Use binary mode for binary artifacts to avoid unwanted translation behavior.

- Chunked reads: Read in predictable blocks rather than trying to slurp large files blindly.

- Atomic mindset: Write in a way that reduces the chance of leaving corrupted output behind.
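For the chunked-read part, a minimal sketch could look like the following. The function name `checksum_file`, the 4096-byte chunk size, and the simple byte-sum are assumptions chosen to keep the example short; the byte-sum stands in for real processing.

```c
#include <stdint.h>
#include <stdio.h>

/* Reads a file in fixed-size chunks and sums its bytes.
 * Returns 0 and stores the sum on success, -1 on any failure.
 * *out_sum is written only after the whole read succeeded, so a
 * failed call never leaves the caller with a half-computed value. */
int checksum_file(const char *path, uint32_t *out_sum)
{
    if (path == NULL || out_sum == NULL) {
        return -1;
    }
    FILE *fp = fopen(path, "rb"); /* binary mode: no newline translation */
    if (fp == NULL) {
        return -1;
    }
    unsigned char chunk[4096];
    uint32_t sum = 0;
    size_t got;
    while ((got = fread(chunk, 1, sizeof chunk, fp)) > 0) {
        for (size_t i = 0; i < got; i++) {
            sum += chunk[i];
        }
    }
    /* fread returns 0 on both end-of-file and error; ferror tells them apart. */
    int failed = ferror(fp);
    if (fclose(fp) != 0) {
        failed = 1;
    }
    if (failed) {
        return -1;
    }
    *out_sum = sum;
    return 0;
}
```

Two details separate careful answers from casual ones: distinguishing end-of-file from a read error, and closing the file on every path.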

A practical example is checkpoint writing. If an update process crashes midway through a write, your team may lose the last good artifact if it wrote directly to the target path. Candidates who think operationally will mention temporary files, verification, and careful rename strategies.
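The temporary-file approach can be sketched with standard C I/O as shown below. `write_file_atomic` is a hypothetical helper, and the sketch assumes a POSIX-like system where `rename` replaces an existing target atomically on the same filesystem; ISO C leaves that case implementation-defined. Durability across power loss would also need something like `fsync`, which is outside standard C and is not shown.

```c
#include <stddef.h>
#include <stdio.h>

/* Writes data to tmp_path, then renames it over final_path.
 * Readers of final_path see either the old file or the complete new
 * one (assuming POSIX rename semantics; see the note above).
 * Returns 0 on success, -1 on failure. On failure the temp file is
 * removed and final_path is left as it was. */
int write_file_atomic(const char *final_path, const char *tmp_path,
                      const void *data, size_t len)
{
    if (final_path == NULL || tmp_path == NULL ||
        (data == NULL && len > 0)) {
        return -1;
    }
    FILE *fp = fopen(tmp_path, "wb");
    if (fp == NULL) {
        return -1;
    }
    int ok = (len == 0 || fwrite(data, 1, len, fp) == len);
    if (ok && fflush(fp) != 0) {
        ok = 0; /* buffered data did not reach the OS */
    }
    if (fclose(fp) != 0) {
        ok = 0;
    }
    if (!ok) {
        remove(tmp_path);
        return -1;
    }
    if (rename(tmp_path, final_path) != 0) {
        remove(tmp_path);
        return -1;
    }
    return 0;
}
```

A candidate does not need to produce this exact shape. What matters is that the last good artifact is never the file being written to.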

Another useful follow-up is buffering. Ask what setvbuf changes and when they'd tune buffering for large sequential reads. You don't need a perfect API recital. You want to hear whether they understand that I/O behavior can dominate throughput just as much as CPU code.

7. Preprocessor Directives and Macros

Preprocessor questions are useful because they expose whether a candidate writes maintainable C or clever C.

Macros can reduce repetition and enable portability. They can also hide bugs, duplicate side effects, and make debugging miserable. A lot of candidates know how to write #define MAX(a,b), but very few can explain why it's dangerous in the wrong hands.

Use a simple prompt first. “Write a max macro.” Then ask what breaks when one argument has side effects. Candidates who understand macro expansion will immediately discuss multiple evaluation and the value of static inline as a safer alternative in many cases.
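A compact way to show the difference is to put both versions side by side. The names `MAX_MACRO` and `max_int` are illustrative.

```c
/* Fully parenthesized, but each argument can still be evaluated twice. */
#define MAX_MACRO(a, b) ((a) > (b) ? (a) : (b))

/* Each argument is evaluated exactly once, and the types are checked. */
static inline int max_int(int a, int b)
{
    return a > b ? a : b;
}
```

With `int i = 5;`, the expression `MAX_MACRO(i++, 3)` expands to `((i++) > (3) ? (i++) : (3))`. The increment runs twice, so the result is 6 and `i` ends at 7. With `int j = 5;`, `max_int(j++, 3)` returns 5 and `j` ends at 6. Parentheses fix precedence bugs; they do nothing about double evaluation.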

What this tells you about engineering judgment

For AI and systems teams, macros often appear in platform detection, compile-time feature flags, logging gates, and optimized code paths. You want engineers who use them sparingly and document the intent.

A good answer usually includes:

- Parentheses discipline: Wrap parameters and the full expression.

- Header hygiene: Use header guards and explain why duplicate inclusion hurts builds.

- Better alternatives: Prefer static inline functions when type and semantics allow it.

One realistic mini-case is CPU feature specialization. A team wants one code path for a generic build and another for hardware-specific acceleration. Ask how they'd use conditional compilation without scattering unreadable macro logic across the codebase. Strong candidates talk about containment, build-time flags, and keeping the interface stable.

Clever macro tricks usually age badly. Clear compile-time boundaries don't.

This section is less about “can they use the preprocessor” and more about “will they leave your codebase better than they found it.”

8. Type Casting and Type Conversions

Casting questions can sound dry. In practice, they sit right in the middle of numerical correctness, interoperability, and subtle undefined behavior.

That's highly relevant for AI systems. Data moves between int, float, compact numeric formats, and raw byte buffers all the time. A candidate who treats casting as harmless syntax often introduces silent precision loss or invalid pointer assumptions.

Ask a numerical question first: “What happens when you cast a float to an int?” Then move to systems behavior: “When is casting a void * back to another pointer type safe?” The second question usually separates people who know examples from people who understand representation and alignment.

A scenario worth using

Suppose your team ingests quantized tensors from one component and passes them to another that expects a different type layout. Ask the candidate how they'd document and validate conversions so the receiving code doesn't misinterpret the bytes.

Good answers mention precision loss, promotion rules, and alignment before dereference. Better answers also warn against casually casting away const, because that's often a code smell and sometimes a bug factory.
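Both halves of that answer can be sketched in a few lines. `read_f32` and `quantize_i8` are hypothetical helpers, and the saturate-and-truncate policy (with NaN mapped to 0) is an assumption for illustration; a real quantizer would follow whatever scheme the model format specifies.

```c
#include <stdint.h>
#include <string.h>

/* Reads a float from an arbitrary byte offset in a raw buffer.
 * memcpy avoids the alignment and aliasing problems of writing
 * *(const float *)(bytes + offset). Assumes the bytes were produced
 * with the same float representation and byte order. */
float read_f32(const unsigned char *bytes, size_t offset)
{
    float value;
    memcpy(&value, bytes + offset, sizeof value);
    return value;
}

/* Converts a float to int8_t with saturation.
 * A plain cast of an out-of-range float to an integer type is
 * undefined behavior, so the range is checked first. */
int8_t quantize_i8(float x)
{
    if (x != x) {
        return 0; /* NaN: explicit policy chosen for this sketch */
    }
    if (x >= 127.0f) {
        return INT8_MAX;
    }
    if (x <= -128.0f) {
        return INT8_MIN;
    }
    return (int8_t)x; /* in range: the fractional part is discarded */
}
```

For the warm-up question, `(int)3.9f` is 3 and `(int)-3.9f` is -3, because the conversion truncates toward zero rather than rounding.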

A tight evaluator checklist:

- Numeric awareness: They know some conversions lose information.

- Pointer caution: They don't treat all pointer casts as interchangeable.

- API discipline: They'd document assumptions instead of burying them in code.

One practical observation from interviews. Engineers with strong ML backgrounds sometimes understand precision trade-offs but underweight pointer correctness. Traditional systems engineers often have the opposite profile. This question helps you spot that gap early.

9. Variable Scope, Storage Classes, and Lifetime

This category sounds academic until you've had to debug stale state in a shared service.

Scope and lifetime govern whether data is local, shared, persistent, or accidentally global. That has direct consequences for multithreaded inference services, feature flags, module boundaries, and testability. Engineers who misuse static or lean on global state often create systems that are fast to prototype and expensive to maintain.

A strong interview prompt is: “What's the difference between static and extern, and when would you use each?” Then ask for a failure mode caused by bad scope decisions.

What you want to hear

Candidates should explain that scope controls where a name is visible, while lifetime controls how long storage exists. Then they should connect that to code organization. File-private state can be useful. Hidden global mutation usually isn't.

A realistic mini-case is an inference worker with a file-level static cache used by multiple request paths. Ask what could go wrong once concurrency or testing enters the picture. Strong answers mention hidden coupling, state contamination, and the need for explicit ownership.

Useful interview signals include:

- Restraint with globals: They prefer passing state where practical.

- Intentional static use: They use file-private functions and data to narrow visibility.

- Shared-state awareness: They can explain why hidden mutable state becomes a risk under load.

One simple extension makes this a better systems question. Ask how they'd redesign a module that depends on extern globals so it becomes easier to test. Good candidates usually move toward explicit dependency injection, even in plain C.
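In plain C, that redesign often means moving state out of globals into a struct the caller owns. The sketch below uses a hypothetical `hit_counter` module to show the shape; the names and the limit semantics are made up for the example.

```c
#include <stddef.h>

/* All state lives in a struct the caller owns, instead of in an
 * extern global shared by every request path and every test. */
struct hit_counter {
    unsigned long hits;
    unsigned long limit;
};

/* File-private helper: static gives it internal linkage, so no other
 * translation unit can call it or collide with its name. */
static int within_limit(const struct hit_counter *c)
{
    return c->hits < c->limit;
}

/* Initializes a counter. Each caller, thread, or test gets its own. */
void counter_init(struct hit_counter *c, unsigned long limit)
{
    c->hits = 0;
    c->limit = limit;
}

/* Records one hit. Returns 0 on success, -1 once the limit is reached. */
int counter_record(struct hit_counter *c)
{
    if (c == NULL || !within_limit(c)) {
        return -1;
    }
    c->hits++;
    return 0;
}
```

Because nothing is shared implicitly, two tests can each create a counter without contaminating each other, and the concurrency question becomes explicit: whoever shares a `struct hit_counter` across threads owns the locking decision.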

10. Error Handling and Assertions

Strong C engineers don't just write the happy path. They define the failure path early.

That's especially important for AI platforms where one bad allocation, one failed file open, or one malformed request can cascade into dropped jobs or broken serving. Production systems need predictable behavior under stress, not perfect benchmark code.

This is also where modern interview content is often too shallow. Available interview lists still lean heavily on basic memory and syntax prompts, while more senior concerns such as buffer overflows, lifetime bugs, integer overflow, strict aliasing issues, safer APIs, sanitizers, and fuzzing are underrepresented. That gap is worth fixing if you care about production risk (this analysis of common C interview content highlights the gap between typical question lists and modern memory-safety concerns).

A better senior-level prompt

Ask the candidate to write a small function that allocates memory, opens a file, and returns a status code. Then inject failures at each step. You'll quickly see whether they can preserve errno, unwind cleanly, and use assertions appropriately.

A practical scorecard for this section:

- Return-value discipline: They check malloc, fopen, and system-call results.

- Assertion judgment: They use assert for invariants during development, not as a substitute for runtime error handling.

- Recovery thinking: They describe what the caller can do next after an error.
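One possible reference answer for the prompt above uses a single cleanup label. The `load_status` codes and the `load_header` function are invented for this sketch, and it assumes a POSIX-like platform where `fopen` sets `errno` on failure, which ISO C does not require.

```c
#include <errno.h>
#include <stdio.h>
#include <stdlib.h>

enum load_status {
    LOAD_OK = 0,
    LOAD_BAD_ARG,
    LOAD_NO_MEMORY,
    LOAD_OPEN_FAILED,
    LOAD_READ_FAILED
};

/* Reads exactly n bytes from path into a newly allocated buffer.
 * On LOAD_OK, *out owns the buffer and the caller must free it.
 * On any failure, *out is left untouched and nothing is leaked.
 * If saved_errno is non-NULL it receives the errno captured at the
 * point of failure, before cleanup calls can overwrite it. */
enum load_status load_header(const char *path, size_t n,
                             unsigned char **out, int *saved_errno)
{
    enum load_status status = LOAD_OK;
    unsigned char *buf = NULL;
    FILE *fp = NULL;
    int err = 0;

    if (path == NULL || out == NULL || n == 0) {
        return LOAD_BAD_ARG;
    }
    buf = malloc(n);
    if (buf == NULL) {
        err = errno;
        status = LOAD_NO_MEMORY;
        goto cleanup;
    }
    fp = fopen(path, "rb");
    if (fp == NULL) {
        err = errno;
        status = LOAD_OPEN_FAILED;
        goto cleanup;
    }
    if (fread(buf, 1, n, fp) != n) {
        /* A short read at end-of-file is not an errno-style error. */
        err = ferror(fp) ? errno : 0;
        status = LOAD_READ_FAILED;
        goto cleanup;
    }

cleanup:
    if (fp != NULL) {
        fclose(fp);
    }
    if (status == LOAD_OK) {
        *out = buf; /* ownership transfers to the caller */
    } else {
        free(buf);  /* free(NULL) is a no-op */
    }
    if (saved_errno != NULL) {
        *saved_errno = err;
    }
    return status;
}
```

Injecting a failure at each step should leave the same observable result: a specific status code, no leaked buffer, no open file handle, and an output pointer the caller can still trust.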

Production code needs explicit failure contracts. “This should never happen” isn't a contract.

A useful AI-team mini-case is a distributed inference worker that loses access to a dependency. Ask whether the process should crash, retry, degrade, or fail fast for one request. The best candidates think in service-level terms, not just line-level terms. Adjacent disciplines matter too, especially if your org treats reliability and testing as separate concerns. For broader process support, this guide on quality assurance for software testing is a good complement.

10-Point Comparison of C Interview Topics

| Topic | Implementation Complexity 🔄 | Resource Requirements ⚡ | Expected Outcomes ⭐📊 | Ideal Use Cases 📊 | Key Advantages & Tips ⭐💡 |
|---|---|---|---|---|---|
| Pointers and Memory Management | High, low-level, error-prone; requires careful discipline | High, tooling (Valgrind), skilled engineers, careful testing | ⭐ High performance and fine-grained memory control | Systems programming, inference servers, embedded ML | ⭐ Enables optimizations; 💡 init pointers, document ownership, use memory pools |
| Arrays and Strings | Medium, conceptually simple but bounds-risky | Low, contiguous memory, must size buffers correctly | ⭐ Fast O(1) access; efficient text handling | Tokenization, preprocessing, fixed-size buffers in pipelines | ⭐ Efficient access; 💡 use safe string functions, account for null terminator |
| Structures and Unions | Medium, requires layout and alignment thinking | Moderate, watch padding, cross-platform consistency | ⭐ Organized data; unions give compact variants | Serialization, model configs, compact parameter layouts | ⭐ Improves readability; 💡 order members to reduce padding, document layouts |
| Dynamic Memory Allocation and Deallocation | Medium–High, runtime failures and fragmentation risks | High, heap usage, may need custom allocators or pools | ⭐ Flexible, scalable data structures; allocation overhead possible | Variable batch sizes, dynamic tensors, edge deployments | ⭐ Enables scalability; 💡 check returns, use pools, profile fragmentation |
| Function Pointers and Callbacks | Medium, tricky syntax and type contracts | Low, minimal runtime cost but needs clear function signatures | ⭐ High modularity and runtime flexibility | Pluggable activations, optimizers, plugin systems in ML frameworks | ⭐ Supports extensibility; 💡 typedefs, document signatures, profile hot paths |
| File I/O and Stream Operations | Low–Medium, API simple, error handling verbose | Moderate, I/O bandwidth, buffering, disk space | ⭐ Persistence and efficient dataset/checkpoint handling | Dataset loaders, checkpointing, logging systems | ⭐ Enables persistence; 💡 use binary mode, atomic writes, tune buffers |
| Preprocessor Directives and Macros | Medium, easy for simple uses, risky when complex | Low runtime cost; impacts compile-time and readability | ⭐ Zero-overhead compile-time configuration and specialization | Platform-specific builds, feature flags, performance tuning | ⭐ Powerful at compile time; 💡 prefer inline funcs, parenthesize params, document macros |
| Type Casting and Type Conversions | Low–Medium, common but may hide subtle bugs | Low, affects precision and alignment, minimal CPU cost | ⭐ Enables interoperability; beware precision loss | Quantization, mixed-precision inference, API interfacing | ⭐ Flexible typing; 💡 minimize casts, track precision, verify alignment |
| Variable Scope, Storage Classes, and Lifetime | Medium, conceptual but critical for correctness | Low, affects memory lifetime and thread safety | ⭐ Controlled visibility and lifetime, better modularity | Large codebases, multithreaded inference servers, shared libs | ⭐ Prevents leakage; 💡 minimize globals, use static for file-local, document assumptions |
| Error Handling and Assertions | Low–Medium, patterns required for robust systems | Moderate, logging, monitoring, error-tracking systems | ⭐ Improved resilience and debuggability in production | Production ML systems, distributed inference, fault-tolerant services | ⭐ Improves reliability; 💡 check returns, use asserts in dev, save errno, log context |

Your Next Steps for Hiring C-Level Talent

Hiring engineers with deep C knowledge is still a strategic advantage when you're building fast, efficient AI systems. Not every AI engineer needs to write low-level C every week. But the people who own inference performance, native integrations, edge deployments, data-plane reliability, or performance-sensitive tooling need to reason at that level with confidence.

The hiring mistake I see most often is using generic C programming interview questions as a memorization filter. That gets you candidates who can define a pointer, list storage classes, and recite malloc versus calloc. It doesn't tell you whether they can debug a use-after-free, design safe ownership boundaries, or choose a data representation that won't create long-term maintenance risk.

A better interview loop maps C fundamentals to the actual work your team does. If the role touches inference servers, spend more time on memory ownership, fragmentation, and error handling. If the role touches tokenization or preprocessing, focus on arrays, strings, bounds safety, and file I/O. If the role sits near runtime internals or plugin systems, function pointers, structs, unions, and compile-time configuration matter more.

You should also separate baseline competence from senior judgment. Baseline competence means the candidate can explain core constructs and write correct small programs. Senior judgment means they can explain trade-offs under production constraints. They know when a macro is too clever, when a callback path adds unnecessary complexity, when a packed struct becomes a portability hazard, and when a hot path should avoid repeated allocation entirely.

A simple scorecard works well in practice:

- Correctness under pressure: Do they produce safe, compilable logic for small tasks?

- Memory reasoning: Can they explain ownership, lifetime, aliasing, and cleanup?

- Operational awareness: Do they think about corruption, partial failure, and recovery?

- Design judgment: Can they choose the simpler interface when flexibility isn't worth the cost?

- AI-systems relevance: Can they connect low-level choices to throughput, reliability, and debugging cost?

If you use that rubric, your interview loop becomes more predictive and less noisy. You'll make fewer expensive hiring mistakes. You'll also identify the engineers who can contribute to core infrastructure quickly, which matters when shipping timelines are tight and AI platform work is already competing with product deadlines.

One final point. There's a difference between someone who can answer C questions and someone who can help your AI team move faster with less risk. The second person usually talks in concrete trade-offs. They explain what fails, how it fails, and what they'd simplify. That's who you want in the room when latency spikes, memory usage climbs, or a native module starts corrupting data.

If you want a broader companion set for evaluating AI specialists beyond low-level systems depth, this guide on interviewing pre-vetted machine learning specialists is a useful next read.

If you're hiring for AI infrastructure, MLOps, or performance-critical backend work, ThirstySprout can help you meet vetted senior engineers who've shipped production AI systems and can contribute quickly. Start a Pilot or see sample profiles if you need systems-minded talent without a long hiring cycle.
