You're probably dealing with this already. One team says “we need an API,” another says “make it REST,” and your ML engineers are asking whether they should skip both and use gRPC for model serving.

That confusion matters more than it sounds. In an AI or SaaS company, the API style you choose affects onboarding speed, observability, cache behavior, partner integrations, and the kind of engineers you need to hire. It also shapes where your architecture stays simple and where it starts fighting your workload.

API vs REST API: The Essential Guide for Tech Leaders

If you want the short answer, here it is.

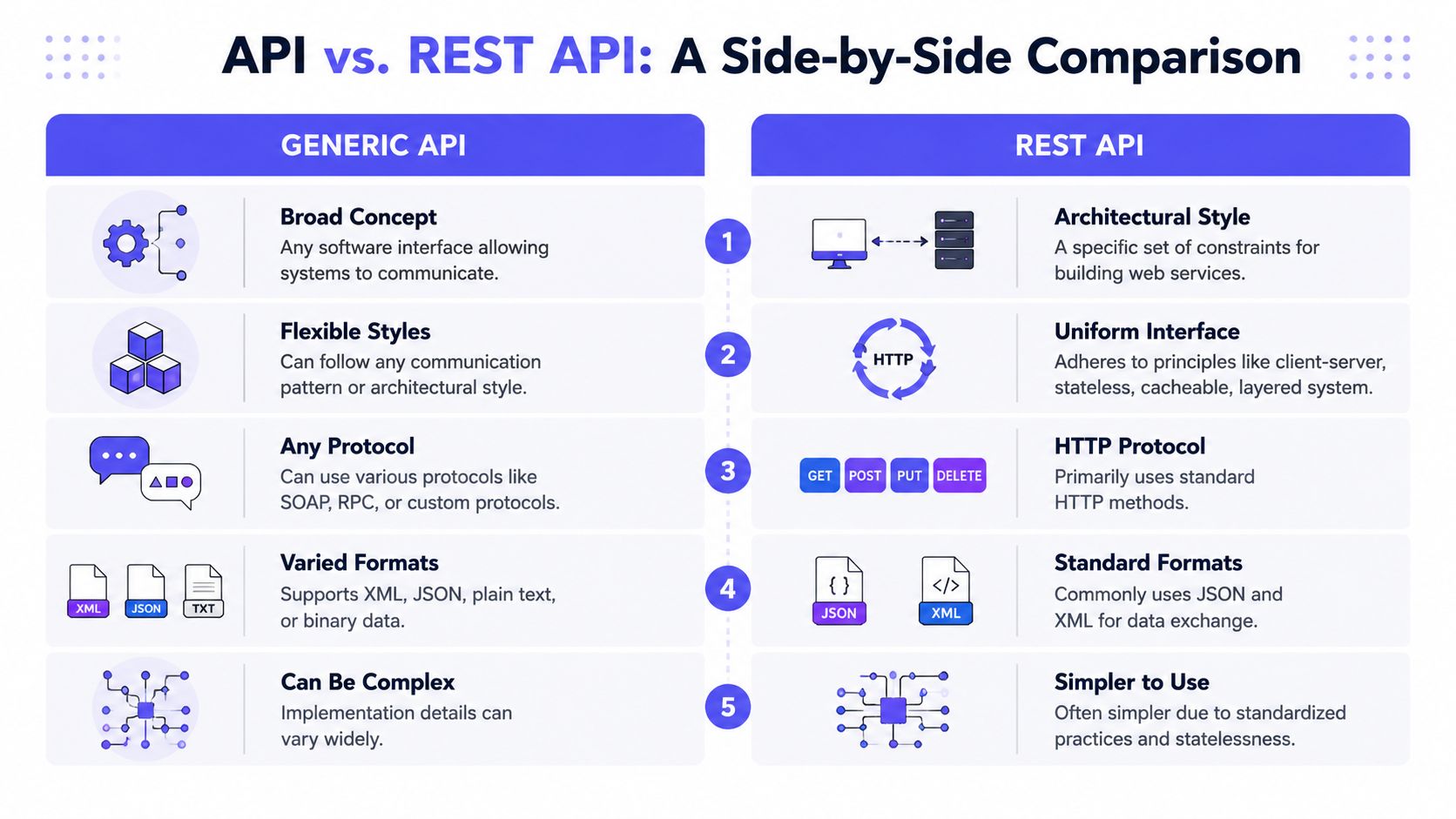

- An API is the broad category. A REST API is one specific architectural style for building APIs over HTTP.

- Choose REST when predictability matters more than maximum flexibility. That usually means public APIs, partner integrations, and cross-team platform boundaries.

- Choose another style when workload shape matters more than REST purity. Internal inference services, streaming systems, and tightly coupled service-to-service calls often need different trade-offs.

- Treat this as a hiring decision too. If your architecture leans on REST, hire engineers who understand resource modeling, OpenAPI, caching, and operational maturity. If it leans on high-performance internal APIs, hire for protobuf, schema discipline, and low-latency systems design.

This guide is for CTOs, founders, heads of engineering, and platform leads deciding how their systems should communicate. It's especially relevant if you're building AI products where one API may serve external customers while another moves features, embeddings, or inference requests inside your stack.

Early in a company's life, teams often default to whatever ships fastest. That works until the same interface has to support customer integrations, internal orchestration, and ML workloads with very different latency profiles. Then “API vs REST API” stops being a wording issue and becomes an architecture issue.

Here's the practical framing I use with leadership teams:

| Decision area | Generic API | REST API |
|---|---|---|
| Scope | Any interface between software systems | A constrained HTTP-based API style |
| Best fit | Specialized internal workflows | Public, partner, and cross-team interfaces |
| Main strength | Flexibility | Predictability |
| Main risk | Inconsistent patterns | Misfit for stateful or ultra-low-latency paths |
| Hiring signal | Need engineers who can reason from first principles | Need engineers who can design stable contracts and operate them at scale |

Bottom line: if outside developers or multiple internal teams must consume the interface, REST is usually the safer default. If a narrow internal workload has hard latency or state constraints, don't force REST where it doesn't fit.

Understanding an API and a REST API

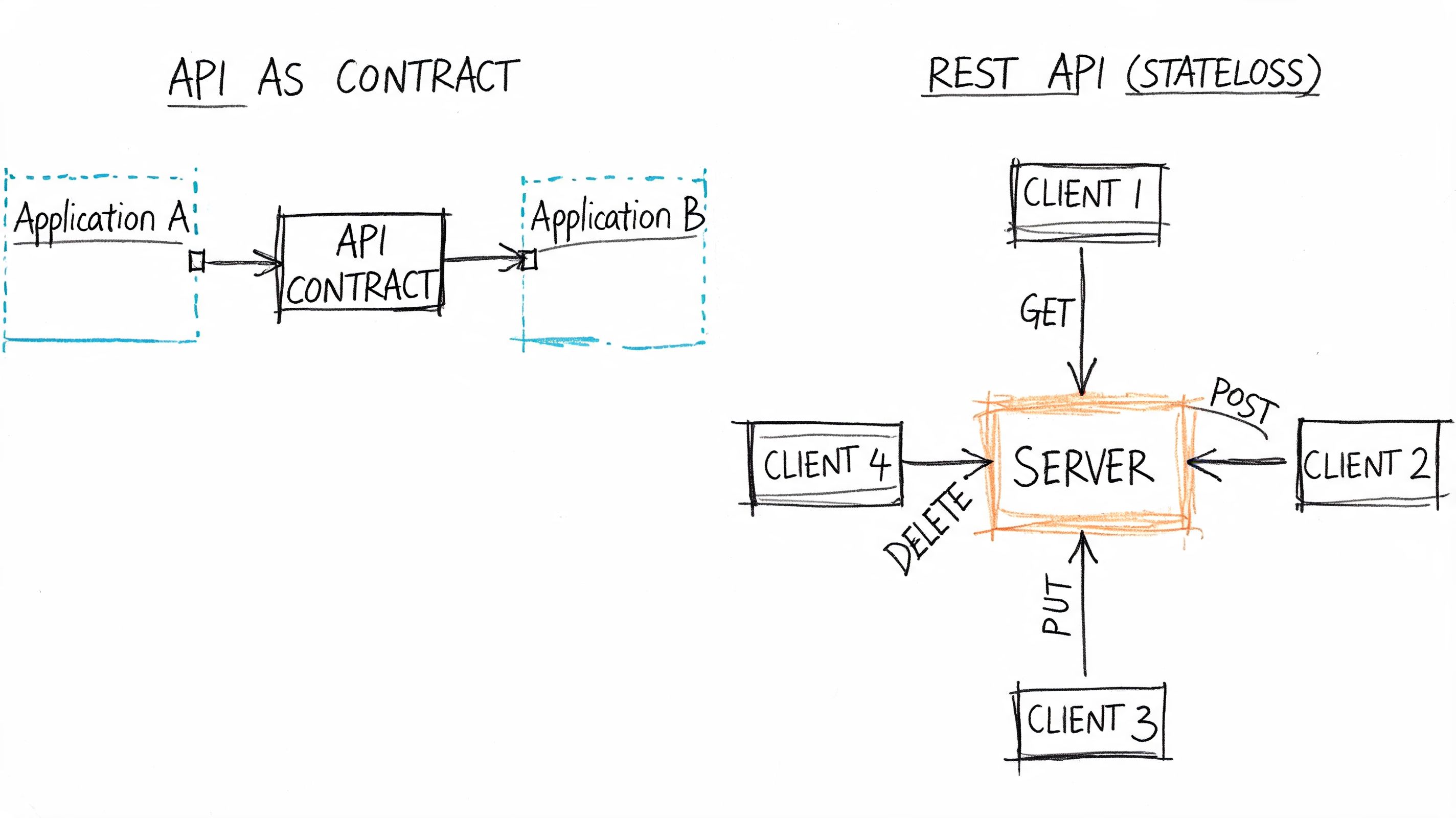

An Application Programming Interface, or API, is a contract that lets one piece of software talk to another. That contract could be HTTP endpoints, a library function, a message bus schema, or a database driver. API is the umbrella term.

REST, short for Representational State Transfer, is a narrower idea. It's a set of constraints for designing networked APIs, usually over HTTP. A REST API isn't a different thing from an API. It's a specific way to build one.

A simple analogy helps. API is the idea of a language. REST is one grammar for using that language. You can communicate without that grammar, but once many people need to read, write, and maintain the conversation, shared rules become valuable.

The broad concept versus the constrained style

A generic API can look like almost anything:

- RPC-style endpoints such as `/predict` or `/search`

- SOAP services with strict XML contracts

- GraphQL with a single query endpoint

- Message-based APIs over queues or event streams

REST narrows the design. It pushes you toward resource-oriented URLs, stateless requests, standard HTTP verbs like GET, POST, PATCH, and DELETE, and a uniform interface. That consistency is why teams can document, monitor, and onboard around REST faster than around a pile of custom endpoint conventions.
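To make the contrast concrete, here is a minimal sketch in plain Python with no web framework. The `customers` store, the `ROUTES` table, and the `handle` function are hypothetical names chosen for illustration, not part of any library. It shows one resource URL answering several standard verbs instead of one action-named endpoint per operation.

```python
# Hypothetical in-memory store; a real service would use a database.
customers = {"42": {"id": "42", "name": "Acme"}}

def get_customer(cid, body=None):
    if cid not in customers:
        return 404, {"error": "not found"}
    return 200, customers[cid]

def patch_customer(cid, body=None):
    if cid not in customers:
        return 404, {"error": "not found"}
    customers[cid].update(body or {})  # partial update of the resource
    return 200, customers[cid]

def delete_customer(cid, body=None):
    if customers.pop(cid, None) is None:
        return 404, {"error": "not found"}
    return 204, None

# One resource, several standard verbs.
ROUTES = {"GET": get_customer, "PATCH": patch_customer, "DELETE": delete_customer}

def handle(method, path, body=None):
    """Dispatch /customers/{id} by HTTP method; returns (status, payload)."""
    parts = path.strip("/").split("/")
    if len(parts) != 2 or parts[0] != "customers":
        return 404, {"error": "not found"}
    handler = ROUTES.get(method)
    if handler is None:
        return 405, {"error": "method not allowed"}
    return handler(parts[1], body)
```

A consumer who has seen `GET /customers/42` can reasonably guess what `PATCH` and `DELETE` on the same URL do, which is the onboarding benefit described above.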

Industry analysis notes that REST APIs have achieved near-universal dominance as the standard integration protocol for modern software systems, with REST becoming the default architecture for companies offering API-based integrations. That's one reason this distinction matters in hiring and platform planning, not just in theory, as described in this payments-focused view of modern API integration patterns.

Why this matters for tech leaders

REST's dominance has a second-order effect. The toolchain is mature. Engineers expect it. Vendors optimize for it. Documentation platforms, gateways, test tools, and SDK generators all work more smoothly when the contract follows standard HTTP semantics.

If you want a concise companion read on naming and standards, John Pratt's overview of API standards is a useful reference because it explains the standards mindset without overcomplicating the topic.

For teams designing their first external interface, a practical starting point is to create an API with a stable contract and clear ownership. That discipline matters more than whether your first version is elegant.

REST API vs Generic API: A Side-by-Side Comparison

The most useful way to evaluate API vs REST API is to stop asking which one is “better” and start asking what the constraints buy you.

Comparison table

| Criteria | Generic API | REST API |
|---|---|---|
| Design model | Anything from RPC to custom HTTP patterns | Resource-oriented HTTP design |
| State handling | Can be stateful or stateless | Stateless by design |
| HTTP usage | May use HTTP loosely | Uses standard verbs and status semantics |
| Documentation | Often custom and uneven | Fits OpenAPI and common tooling well |
| Caching | Depends on custom behavior | Aligns with standard HTTP caching patterns |
| Observability | Often needs custom rules | Easier to monitor with standard API tooling |
| Scaling pattern | Depends on implementation | Works well with horizontal scaling patterns |
| Best fit | Internal specialized systems | Shared platforms and external integrations |

A technical distinction worth emphasizing is that REST constrains APIs to a resource-oriented, stateless HTTP design using standard verbs and a uniform interface. That directly affects caching, observability, and horizontal scaling. For AI and data-intensive SaaS platforms, REST APIs are typically easier to document, cache, and monitor at scale, as explained in this HTTP API versus REST API breakdown.

What statelessness gives you

In practice, statelessness means each request carries what the server needs to process it. The server doesn't rely on stored client session context to understand the call.

That has operational value:

- Load balancers can route requests freely

- Autoscaling works more cleanly

- Retries are easier to reason about

- Incident debugging is simpler because request context is explicit

Statelessness isn't just a design principle. It reduces operational coupling between nodes, which is why platform teams like it.
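As a rough illustration of why load balancers can route freely, the sketch below signs a small set of claims so that any server instance can verify a request without a shared session store. The `SECRET`, `sign`, `verify`, and `Node` names are assumptions made for this example, and the token format is deliberately simplified (no expiry, for instance). A production system would more likely use an established format such as JWT.

```python
import base64
import hashlib
import hmac
import json

SECRET = b"demo-secret"  # assumption: injected via configuration in practice

def sign(claims: dict) -> str:
    """Encode claims and append an HMAC so any node can verify them."""
    body = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    mac = hmac.new(SECRET, body, hashlib.sha256).hexdigest()
    return body.decode() + "." + mac

def verify(token: str):
    """Return the claims if the signature is valid, else None."""
    body, _, mac = token.rpartition(".")
    expected = hmac.new(SECRET, body.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(mac, expected):
        return None
    return json.loads(base64.urlsafe_b64decode(body.encode()))

class Node:
    """A server instance that keeps no per-client session state."""
    def __init__(self, name):
        self.name = name

    def handle(self, token, resource):
        claims = verify(token)
        if claims is None:
            return 401, None
        return 200, {"user": claims["user"], "resource": resource}

# The request carries everything needed, so either node can serve it.
token = sign({"user": "alice"})
node_a, node_b = Node("a"), Node("b")
```

Because neither node stores anything about the caller, `node_a.handle(token, "/orders")` and `node_b.handle(token, "/orders")` return the same result, and a retry can land on a different instance without special handling.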

The trade-off is that not every workload is naturally stateless. Some ML-serving and conversational systems need session context, warm state, or sticky routing. REST doesn't prohibit those patterns, but it doesn't make them especially elegant either.

A related decision often comes up when teams compare resource-based APIs with more query-driven patterns. If your product team is debating flexibility versus standardization in frontend-heavy applications, this guide on GraphQL vs REST for product and platform teams is a useful companion.

What a uniform interface buys your team

Uniformity sounds abstract until you're operating a platform used by five internal teams and ten partners.

With REST, engineers can infer behavior:

- GET retrieves

- POST creates or triggers work

- PATCH updates part of a resource

- DELETE removes

That predictability lowers the cognitive load for every new consumer. It also helps your gateway, CDN, and monitoring stack make smarter assumptions without custom instrumentation for every endpoint.
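One way to picture those "smarter assumptions" is a small policy table keyed on the HTTP method. This is a sketch, not a gateway configuration: the function names and the set of transient status codes are assumptions for illustration. The safe and idempotent method sets follow standard HTTP semantics.

```python
# Safe methods do not change server state; idempotent methods can be
# repeated without changing the outcome beyond the first call.
SAFE_METHODS = {"GET", "HEAD", "OPTIONS", "TRACE"}
IDEMPOTENT_METHODS = SAFE_METHODS | {"PUT", "DELETE"}

def can_cache(method: str) -> bool:
    """Treat only GET and HEAD responses as cacheable in this sketch."""
    return method.upper() in {"GET", "HEAD"}

def can_retry(method: str, status: int) -> bool:
    """Retry only idempotent methods on assumed-transient statuses."""
    transient = {502, 503, 504}  # assumption: tune per platform
    return method.upper() in IDEMPOTENT_METHODS and status in transient
```

With RPC-style endpoints that send everything through `POST`, none of these decisions can be made from the method alone, so each endpoint needs its own rule.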

Generic APIs can be perfectly valid. But once every service invents its own nouns, verbs, and response model, the cost moves from coding into maintenance.

How API Choices Impact Real-World Systems

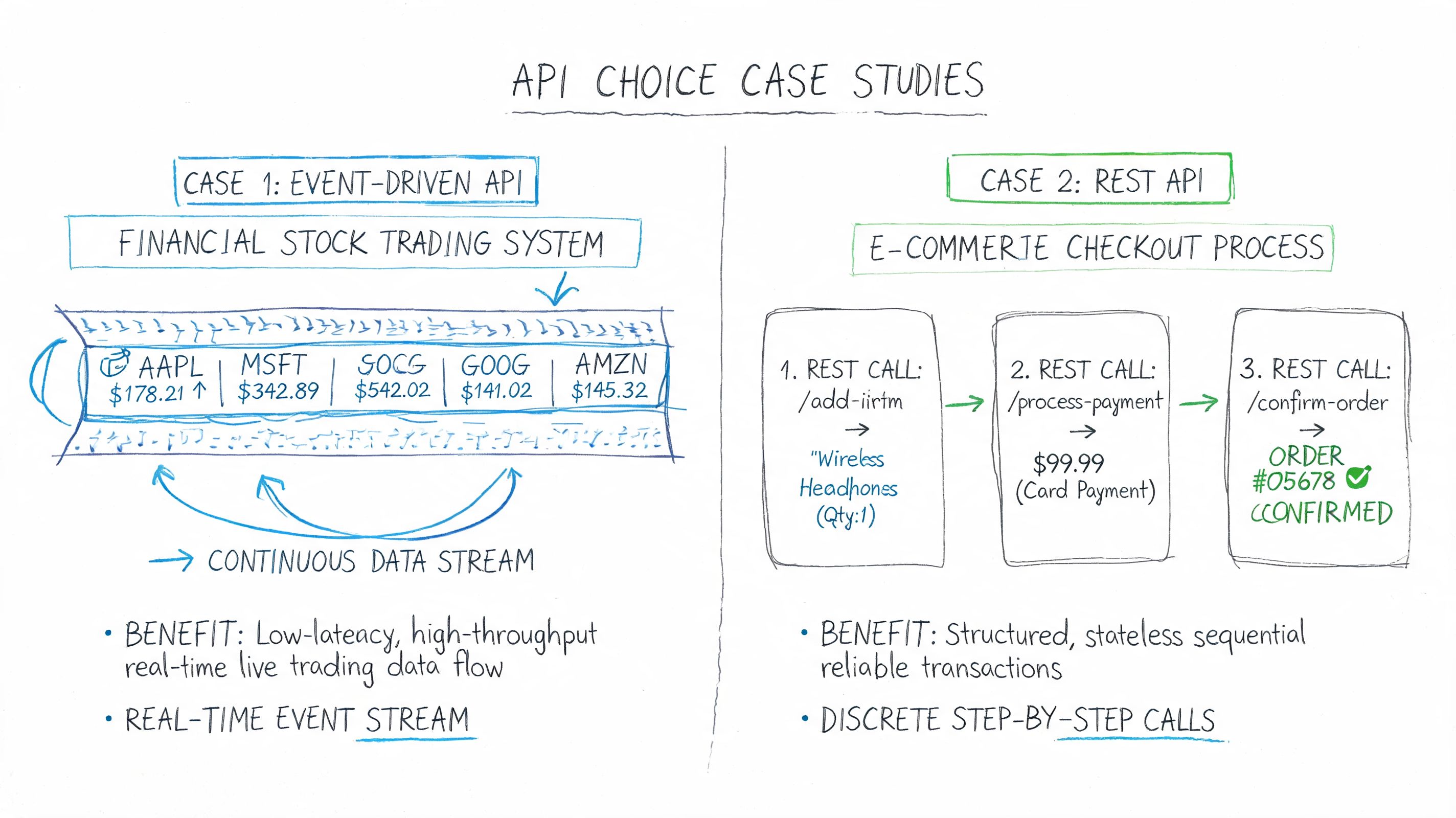

Architecture choices become clearer when you map them to actual workloads.

Example one: internal ML inference service

An internal fraud-scoring service often starts with a simple endpoint like this:

```python
from flask import Flask, request

app = Flask(__name__)

# build_features and model are assumed to be defined elsewhere in the service.
@app.post("/predict")
def predict():
    payload = request.get_json()
    features = build_features(payload)
    score = model.predict(features)
    return {"score": score}
```

That endpoint is not especially RESTful. It's RPC-style. The URL describes an action, not a resource.

For an internal model-serving path, that may be the right choice. The consumer is another trusted service, not an external developer. The contract is narrow. The team cares more about low overhead, strict schemas, and operational control than about resource purity.

Many generic web articles fall short in their explanations. They celebrate REST's statelessness but skip the reality that statelessness can create friction in ML workflows requiring session persistence or model state management. For low-latency inference, teams often need to weigh REST against gRPC or message queues, especially in latency-sensitive fintech and personalization systems, as noted in this analysis of web API versus REST trade-offs.

If the endpoint exists only for service-to-service inference, optimize for serving behavior first and REST semantics second.
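"Strict schemas" can be enforced even on a plain JSON endpoint. The sketch below validates an inference payload before it reaches the model. The field names (`amount`, `merchant_id`, `account_age_days`) and the `parse_predict_request` helper are hypothetical and stand in for whatever features the real service expects.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PredictRequest:
    # Hypothetical feature fields for a fraud-scoring call.
    amount: float
    merchant_id: str
    account_age_days: int

def parse_predict_request(payload: dict) -> PredictRequest:
    """Reject unknown, missing, or wrongly typed fields before inference."""
    expected = {"amount": (int, float), "merchant_id": str, "account_age_days": int}
    unknown = set(payload) - set(expected)
    missing = set(expected) - set(payload)
    if unknown or missing:
        raise ValueError(f"unknown={sorted(unknown)} missing={sorted(missing)}")
    for name, types in expected.items():
        value = payload[name]
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(value, bool) or not isinstance(value, types):
            raise ValueError(f"bad type for {name}")
    return PredictRequest(float(payload["amount"]), payload["merchant_id"],
                          payload["account_age_days"])
```

Teams that move this path to gRPC get a similar guarantee from protobuf definitions. The point of the sketch is only that the contract stays narrow and explicit either way.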

Example two: public B2B SaaS integration API

Now compare that with a public billing or customer data API. Here a resource-oriented structure wins:

```
GET /v1/customers/{id}
GET /v1/customers/{id}/orders
POST /v1/orders
PATCH /v1/orders/{id}
```

That shape is easier for partners to learn because the model mirrors business entities. It also works cleanly with OpenAPI, SDK generation, and support workflows.

A representative OpenAPI fragment looks like this:

```yaml
paths:
  /v1/customers/{id}/orders:
    get:
      summary: List orders for a customer
      parameters:
        - name: id
          in: path
          required: true
          schema:
            type: string
      responses:
        "200":
          description: Successful response
```

What works and what doesn't

The anti-pattern is forcing one style everywhere.

What usually works:

- REST for external and shared contracts

- RPC or gRPC for tightly scoped internal compute paths

- Events or queues for asynchronous workloads

- OpenAPI for anything many engineers must consume

What usually fails:

- Public APIs built like private RPC calls

- Model-serving endpoints exposed to partners without stable versioning

- Internal platforms with dozens of custom endpoint conventions

- A “REST-first” rule that ignores workload shape

Performance, Security, and Scalability Implications

Senior leaders usually care less about style labels and more about whether the interface will hold up under production pressure.

Performance is mostly an implementation problem

The common mistake is blaming REST for performance problems that really come from framework choices, serialization overhead, or poor batching.

A benchmark comparing REST-style APIs and GraphQL implementations found that implementation choices often mattered more than the REST versus generic API distinction. In that benchmark, Fastify with REST achieved roughly three times the throughput of Express with REST when fetching many entries, which is a strong reminder that stack choice is critical for performance. The benchmark is available in this framework comparison thesis on REST and GraphQL performance.

That doesn't mean transport doesn't matter. It does. JSON payloads are verbose compared with binary formats, and repeated request-response patterns can hurt high-frequency internal traffic. But before replacing REST, fix the basics:

- Pick a high-throughput framework

- Keep payloads lean

- Batch where it makes sense

- Cache aggressively on safe reads

- Separate public API needs from internal serving needs
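Batching is the easiest of those basics to show in code. In the sketch below, `score_batch` is a hypothetical stand-in for a single network call that accepts many inputs, and the `calls` list exists only to count round trips. The batch size of 4 is arbitrary.

```python
def chunked(items, size):
    """Yield successive lists of at most `size` items."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(items), size):
        yield items[start:start + size]

calls = []  # records the size of each simulated round trip

def score_batch(batch):
    """Hypothetical stand-in for one network call that scores many inputs."""
    calls.append(len(batch))
    return [x * 2 for x in batch]

def score_all(items, batch_size=4):
    """Score every item using as few round trips as the batch size allows."""
    results = []
    for batch in chunked(items, batch_size):
        results.extend(score_batch(batch))
    return results
```

Scoring ten items with a batch size of 4 takes three calls (sizes 4, 4, and 2) instead of ten, regardless of whether the transport underneath is REST or something else.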

Security depends on discipline, not style

REST works well with established web security patterns such as HTTPS, token-based auth, and gateway enforcement. That's one reason security teams often prefer it for external interfaces. The conventions are familiar, and the ecosystem around them is mature.

Still, REST is not automatically secure. Teams still need to validate inputs, lock down authorization, control secrets, and test their exposed surface regularly. If your security lead wants a concise operational checklist for external applications, this guide on how to secure your SaaS application is a practical supplement.

Scalability is where REST earns its keep

REST's biggest infrastructure advantage is how well it aligns with horizontal scaling. Stateless requests are easier to distribute across pods or instances. Standard HTTP semantics work naturally with gateways and CDNs. Monitoring systems can infer useful signals from status codes, routes, and methods with less custom setup.
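A small example of those standard semantics at work is the conditional GET. The sketch below derives an `ETag` from the response body and answers `304 Not Modified` when the client's `If-None-Match` value still matches. The helper names and the `max-age=60` value are assumptions for illustration, not recommendations.

```python
import hashlib
import json

def make_etag(payload: dict) -> str:
    """Derive a validator from the serialized representation."""
    raw = json.dumps(payload, sort_keys=True).encode()
    return '"' + hashlib.sha256(raw).hexdigest() + '"'

def conditional_get(payload: dict, if_none_match=None):
    """Return (status, headers, body) for a GET with optional If-None-Match."""
    etag = make_etag(payload)
    headers = {"ETag": etag, "Cache-Control": "max-age=60"}  # assumed TTL
    if if_none_match == etag:
        return 304, headers, None  # client copy still matches; skip the body
    return 200, headers, payload
```

Because the behavior rides on standard headers, CDNs and gateways can apply it without knowing anything about the resource itself.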

For Node.js teams, response caching often becomes the fastest practical win. If you're tuning an API layer rather than redesigning the protocol, this guide on caching in Node.js for production services is worth reviewing with your backend lead.

Practical rule: if an API has to be understandable, monitorable, and supportable across many teams, REST usually lowers operating cost even when it isn't the absolute fastest option.

Checklist: When to Choose REST vs Another API Style

Most organizations don't have one API problem. They have several. Public integrations, internal service calls, event-driven pipelines, and frontend aggregation all impose different constraints.

One market reality matters here. APIs are everywhere, but only 15% of all APIs are publicly available, while 85% remain private or partner-restricted, including 58% private and 27% partner-restricted, according to this analysis of public versus private API availability. That's why internal versus external consumers should drive the style decision.

API style decision matrix for AI and SaaS teams

| Use case | Recommended style | Rationale | Hiring implication for AI teams |
|---|---|---|---|
| Public API for customers or partners | REST | Familiar patterns, stable contracts, broad tooling support | Hire engineers strong in OpenAPI, versioning, auth, and developer experience |
| Internal high-frequency service-to-service calls | gRPC or RPC-style API | Better fit for strict schemas and low-overhead calls | Hire backend or ML platform engineers who understand protobuf, service contracts, and latency tuning |
| Frontend data aggregation across many resources | GraphQL | Flexible query shape for changing UI needs | Hire engineers who can model schemas, control query cost, and design resolvers carefully |
| Async model pipeline or event processing | Event-driven API or queue | Better for decoupling and retries in background workflows | Hire data and MLOps engineers with streaming and workflow orchestration experience |
| Admin or platform CRUD interface | REST | Resource model is clear and operationally simple | Hire full-stack or platform engineers who know HTTP semantics and governance |

The shortlist I'd use in an architecture review

- Who consumes the API. If the answer is “many teams” or “external developers,” default toward REST.

- What the latency path looks like. If the workload is inference-heavy or chatty between services, test whether a non-REST pattern fits better.

- How much governance you need. REST helps when documentation, discoverability, and consistency matter more than local optimization.

- What skills you can hire for. The best architecture on paper still fails if your team can't operate it.
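For teams that like to write their review heuristics down, the matrix and shortlist above can be condensed into a small function. This is a sketch of a starting point, not a rule engine. The `recommend_style` name, its parameters, and the consumer labels are all hypothetical.

```python
def recommend_style(consumer: str, latency_sensitive: bool = False,
                    asynchronous: bool = False) -> str:
    """Map an API surface to a starting-point style; not a hard rule."""
    # Shared or external contracts favor predictability.
    if consumer in {"public", "partner", "cross-team"}:
        return "REST"
    # UI-driven aggregation across many resources.
    if consumer == "frontend":
        return "GraphQL"
    # Background pipelines benefit from decoupling and retries.
    if asynchronous:
        return "events or queue"
    # Chatty or inference-heavy internal calls.
    if latency_sensitive:
        return "gRPC or RPC-style"
    # Default for internal CRUD-like surfaces.
    return "REST"
```

Note that consumer type is checked first: a partner-facing surface stays on REST in this sketch even when it is latency sensitive, which mirrors the consumer-first order of the shortlist.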

Building Your AI Team with the Right API Skills

A candidate who says “REST uses HTTP verbs” knows the vocabulary. A candidate who can explain when not to use REST understands systems.

For hiring, I'd screen for judgment, not certification language.

Interview questions that reveal real depth

- Design judgment: “Describe a case where you chose an RPC-style endpoint like `/predict` instead of a resource-oriented REST design. What did you gain and what did you give up?”

- Scalability thinking: “How does statelessness affect load balancing, retries, and horizontal scaling?”

- ML systems awareness: “If your model-serving path has strict latency requirements, when would you keep REST and when would you move to gRPC or async messaging?”

- Operational maturity: “How would you document, version, and observe an API used by both internal teams and external partners?”

- Security fundamentals: “What security controls belong in the API gateway versus in the application code?”

What strong answers sound like

Strong engineers talk about trade-offs in plain language. They mention OpenAPI, idempotency, auth boundaries, schema discipline, caching, and the difference between external developer experience and internal performance paths. They don't treat REST as dogma.

If you're building hiring channels, it helps to benchmark against firms that specialize in technical staffing for AI-heavy environments. For example, this overview of an AI talent placement agency is useful for comparing how specialist recruiters frame engineering depth versus generic staffing.

The immediate next steps are simple:

- List your API surfaces by consumer type. Public, partner, internal sync, internal async.

- Pick the style per surface, not per company. One standard everywhere sounds clean but often creates unnecessary friction.

- Hire for the architecture you need to run. That means platform-minded backend engineers for shared REST contracts and ML infrastructure engineers for low-latency serving paths.

If you're hiring for API-heavy AI products, ThirstySprout can help you find senior AI engineers, MLOps specialists, and backend platform talent who've already shipped production systems. Start a Pilot, review vetted profiles, and build the team that matches your architecture instead of forcing your architecture to fit whoever is available.
