TL;DR

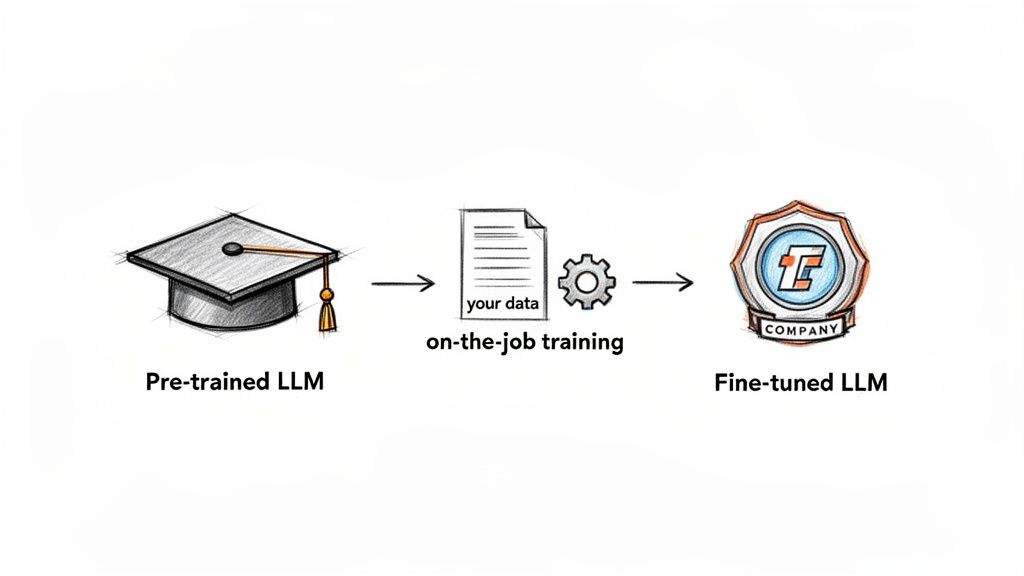

- What is it? LLM fine-tuning is like giving a brilliant, generalist AI model specialized on-the-job training with your company’s private data. This adapts its core logic to master a specific skill, style, or format.

- When to use it? Only fine-tune when you need to teach the model a new behavior (e.g., generating perfect JSON or mimicking your brand voice). For simply providing new knowledge, Retrieval-Augmented Generation (RAG) is faster and cheaper.

- How it works: Use Parameter-Efficient Fine-Tuning (PEFT) methods like LoRA to update a small fraction of the model's parameters. This delivers most of the benefit of full fine-tuning at a fraction of the compute cost.

- Key to success: Your project's success depends on a small, high-quality dataset (500-5,000 examples) of clean, representative data. Quality over quantity is non-negotiable.

Who this is for

This guide is for technical leaders who need to ship meaningful AI features in weeks, not months. You are likely a:

- CTO, Head of Engineering, or Founder making the call on architecture and budget.

- Product Lead scoping the timeline and business impact of a new AI feature.

- Staff Engineer or AI Architect responsible for the technical decision: fine-tune, RAG, or just better prompts?

This is a pragmatic playbook for operators accountable for connecting technical choices to business outcomes like reduced time-to-market, lower costs, or a better customer experience.


Quick Framework: When to Fine-Tune vs. RAG vs. Prompt Engineering

Choosing the right customization path is your first critical decision. Over-engineering is expensive, but under-engineering won't solve the problem. Use this decision tree.

- Start with Prompt Engineering: Can you get the desired output by improving your instructions? For many style and formatting tasks, mastering prompt engineering is the fastest and cheapest solution.

- Move to RAG for Knowledge Gaps: Does the model need access to recent or proprietary information to answer questions? RAG is the best choice for querying knowledge bases (docs, wikis, support tickets). It "grounds" the model in facts, reducing hallucinations.

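- Fine-Tune to Teach a New Behavior: Fine-tune only when prompts and RAG fall short and the model must learn a new behavior, such as:

  - Adopting a complex style or brand voice consistently.

  - Mastering a highly specific, structured format (e.g., generating valid JSON for an API).

  - Learning a nuanced skill like domain-specific sentiment analysis.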
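The decision tree above reduces to a few ordered checks. This is a minimal sketch: the three yes/no inputs are hypothetical scoping answers, and returning both RAG and fine-tuning when a task has a knowledge gap and a behavior gap is an assumption of this sketch rather than a rule stated in this guide.

```python
def choose_customization(prompting_suffices: bool,
                         needs_new_knowledge: bool,
                         needs_new_behavior: bool) -> list[str]:
    """Map hypothetical scoping answers to the approaches in the decision tree."""
    # Step 1: if better instructions work, stop here; it is the cheapest option.
    if prompting_suffices:
        return ["prompt engineering"]
    approaches = []
    # Step 2: missing facts (recent or proprietary data) point to RAG.
    if needs_new_knowledge:
        approaches.append("RAG")
    # Step 3: a new behavior (voice, strict format, nuanced skill) points to fine-tuning.
    if needs_new_behavior:
        approaches.append("fine-tuning")
    # Assumption: with no clear gap identified, keep iterating on prompts.
    return approaches or ["prompt engineering"]
```

For example, a task that needs strictly valid JSON that prompting alone cannot produce maps to `choose_customization(False, False, True)`, which returns `["fine-tuning"]`.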

How the fintech startup in Example 1 did it:

- Data Curation: A financial expert labeled 10,000 sentences from market news and earnings calls as `positive`, `negative`, or `neutral`. This high-quality dataset was the key.

- Efficient Fine-Tuning: They used LoRA (Low-Rank Adaptation) to keep costs down. Training took less than 3 hours on a single A100 GPU, costing under $500 total.

- Business Impact: The fine-tuned model achieved 94% accuracy on their financial test set, crushing the generic model's 72%. This became a core, sellable feature of their analytics platform, creating a clear market differentiator.
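The Data Curation step ends with labeled sentences that still have to become training records. A minimal sketch of that conversion, assuming a hypothetical prompt template and JSON Lines output with `prompt`/`completion` keys; the fintech team's actual format is not described in this guide.

```python
import json

# The three labels used in the fintech example.
ALLOWED_LABELS = ("positive", "negative", "neutral")


def to_record(sentence: str, label: str) -> dict:
    """Turn one expert-labeled sentence into a prompt/completion pair.

    The instruction wording is a hypothetical template; keep it identical at
    training and inference time.
    """
    label = label.strip().lower()
    if label not in ALLOWED_LABELS:
        # Reject typos and off-schema labels instead of training on them.
        raise ValueError(f"unexpected label {label!r} for sentence {sentence!r}")
    prompt = (
        "Classify the sentiment of this financial sentence as "
        "positive, negative, or neutral.\n"
        f"Sentence: {sentence.strip()}\n"
        "Sentiment:"
    )
    return {"prompt": prompt, "completion": label}


def to_jsonl(labeled_pairs) -> str:
    """Serialize (sentence, label) pairs as JSON Lines, one record per line."""
    lines = [json.dumps(to_record(sentence, label)) for sentence, label in labeled_pairs]
    return "\n".join(lines) + "\n"
```

Validating labels at this stage is cheap insurance: a single misspelled label class silently becomes a fourth "sentiment" the model learns to emit.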

How the e-commerce company in Example 2 built its MLOps pipeline:

- Data Prep: They created a "gold standard" dataset of 5,000 examples, pairing structured product data (material, fit, color) with descriptions written by their best copywriters.

- Training & Evaluation: A Mistral 7B model was fine-tuned on this data. A human review panel scored outputs for brand alignment alongside automated metrics.

- Deployment & Monitoring: The model was deployed as a microservice integrated into their Product Information Management (PIM) system. A feedback loop allowed copywriters (now editors) to flag poor outputs, which were then used to improve the dataset for the next retraining cycle.
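The Data Prep step and the feedback loop both produce the same kind of record: structured attributes in, approved copy out. A minimal sketch, assuming a hypothetical `Product` type with the three attributes named above and a hypothetical prompt template; the retailer's real schema and prompts are not described in this guide.

```python
from dataclasses import dataclass


@dataclass
class Product:
    """Hypothetical structured record from the product catalog."""
    material: str
    fit: str
    color: str


def build_prompt(product: Product) -> str:
    # A fixed attribute order keeps training prompts and production prompts identical.
    return (
        "Write an on-brand product description.\n"
        f"Material: {product.material}\n"
        f"Fit: {product.fit}\n"
        f"Color: {product.color}\n"
        "Description:"
    )


def make_training_pair(product: Product, description: str) -> dict:
    """Pair the attribute prompt with a gold-standard, copywriter-approved description."""
    return {"prompt": build_prompt(product), "completion": description.strip()}


def pairs_from_feedback(flags: list[tuple[Product, str]]) -> list[dict]:
    """Turn editor-flagged outputs into new pairs for the next retraining cycle.

    Each flag is a (product, corrected_description) tuple; flags the editor
    did not correct are skipped.
    """
    return [make_training_pair(p, fixed) for p, fixed in flags if fixed.strip()]
```

Reusing `build_prompt` for both the initial dataset and feedback-derived pairs keeps every retraining cycle on the same input format.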

Hidden costs beyond GPU hours:

- Data Preparation: High-quality data annotation requires subject matter experts, who can cost $50–$150/hour. A 5,000-example dataset can easily cost $5,000–$15,000.

- Expertise: You need senior AI engineers to run experiments and a solid MLOps pipeline to manage the model in production.
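The data preparation estimate above is expert-hours times hourly rate. The guide does not state a labeling speed; the 72 seconds per example used below is an assumption chosen because, at 5,000 examples, it gives 100 expert-hours and reproduces the quoted $5,000–$15,000 range.

```python
def annotation_cost(num_examples: int, seconds_per_example: float,
                    hourly_rate: float) -> float:
    """Estimated expert labeling cost in dollars: hours of work times the hourly rate."""
    hours = num_examples * seconds_per_example / 3600
    return hours * hourly_rate


# Assumed pace: 72 s per example, so 5,000 examples take 100 expert-hours.
for rate in (50, 150):
    print(f"${rate}/hour -> ${annotation_cost(5_000, 72, rate):,.0f}")
```

Cost scales linearly with time per example, so harder items (long earnings-call passages, multi-paragraph copy) move the budget quickly. Timing a small sample batch before committing gives a far better estimate than a guessed pace.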

Key risks:

- Catastrophic Forgetting: The model can become so specialized it "forgets" general reasoning skills.

- Model Drift: The model's performance degrades as real-world data patterns change over time, requiring continuous monitoring and retraining.

- Data Privacy: Fine-tuning on proprietary data creates a risk of exposing sensitive information. Rigorous data scrubbing and strong AI governance best practices are mandatory.

Phase 1 checklist (Strategy & Scoping):

- Define a specific business KPI to improve (e.g., reduce ticket resolution time by 20%).

- Confirm that prompt engineering and RAG are insufficient.

- Select a base model (e.g., Llama 3 8B) based on cost, performance, and license.

- Set a budget for data prep, compute, and MLOps.

Phase 2 checklist (Data Preparation):

- Collect 500-5,000 raw data examples that mirror the production environment.

- Scrub all Personally Identifiable Information (PII) and de-duplicate records.

- Have a subject matter expert label and format data into prompt/completion pairs. Research shows data curation impacts fine-tuning efficiency more than data volume.

- Split data into an 80% training set and a 20% validation set. Automate the split in your data pipeline so it is reproducible.
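The Phase 2 steps (scrub PII, de-duplicate, split 80/20) chain naturally. A minimal sketch, assuming records are dicts whose values are strings under hypothetical `prompt` and `completion` keys; the regular expressions catch only common email and phone formats and are no substitute for a dedicated PII-detection tool plus human review.

```python
import random
import re

# Simple patterns for illustration only: they miss many PII types (names,
# addresses, account numbers), and the phone pattern can also match other
# long digit runs such as dates.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def scrub(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    return PHONE_RE.sub("[PHONE]", EMAIL_RE.sub("[EMAIL]", text))


def dedupe(records: list[dict]) -> list[dict]:
    """Drop records that repeat after case and whitespace normalization."""
    seen, unique = set(), []
    for rec in records:
        key = tuple(" ".join(rec[k].lower().split()) for k in ("prompt", "completion"))
        if key not in seen:
            seen.add(key)
            unique.append(rec)
    return unique


def train_val_split(records: list[dict], val_fraction: float = 0.2, seed: int = 42):
    """Shuffle with a fixed seed, then return (train, validation)."""
    shuffled = records[:]
    random.Random(seed).shuffle(shuffled)
    n_val = round(len(shuffled) * val_fraction)
    return shuffled[n_val:], shuffled[:n_val]


def prepare(records: list[dict]):
    """Scrub, de-duplicate, then split into 80% train / 20% validation."""
    cleaned = [{k: scrub(v) for k, v in rec.items()} for rec in records]
    return train_val_split(dedupe(cleaned))
```

De-duplicating before the split matters: a record that appears in both sets inflates validation scores.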

Phase 3 checklist (Training & Experimentation):

- Choose a PEFT method (LoRA is a safe default).

- Configure the training environment (e.g., using Hugging Face's TRL library).

- Run at least three training experiments, tracking hyperparameters and results.

- Log all artifacts (model weights, data version, metrics) for reproducibility.
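Phase 3 asks for tracked experiments and logged artifacts. A minimal sketch of the bookkeeping using only the standard library; the record fields and function names are hypothetical, a dedicated experiment tracker would normally handle this, and the model weights themselves would be saved by your training framework alongside the record.

```python
import hashlib
import json
import time
from pathlib import Path


def make_run_record(run_name: str, data_bytes: bytes,
                    hyperparams: dict, metrics: dict) -> dict:
    """Capture what is needed to compare and reproduce one training run."""
    return {
        "run": run_name,
        "timestamp": time.strftime("%Y-%m-%dT%H:%M:%S"),
        # A content hash of the exact training file serves as its data version.
        "data_version": hashlib.sha256(data_bytes).hexdigest()[:12],
        "hyperparams": hyperparams,
        "metrics": metrics,
    }


def save_run(record: dict, log_dir: str) -> Path:
    """Write the record as JSON next to the run's other artifacts."""
    out = Path(log_dir) / f"{record['run']}.json"
    out.parent.mkdir(parents=True, exist_ok=True)
    out.write_text(json.dumps(record, indent=2))
    return out


def best_run(records: list[dict], metric: str) -> dict:
    """Pick the run with the highest value for the given validation metric."""
    return max(records, key=lambda r: r["metrics"][metric])
```

Hashing the data file means two runs with different results can be traced to either a hyperparameter change or a data change, which is the point of logging the data version.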

Phase 4 checklist (Evaluation & Safety):

- Evaluate the model on the held-out validation set.

- Conduct qualitative human review for brand voice, safety, and bias.

- Perform adversarial testing ("red teaming") to identify failure modes.

- Compare performance against the original base model and business KPIs.
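The quantitative part of Phase 4 is the same comparison the fintech team reported: fine-tuned versus generic accuracy on held-out data. A minimal sketch, assuming both models are wrapped as hypothetical `predict(text) -> label` callables; the human review and red-teaming items above still need people.

```python
from typing import Callable, Sequence

Example = tuple[str, str]  # (input text, gold label)


def accuracy(predict: Callable[[str], str], examples: Sequence[Example]) -> float:
    """Fraction of held-out examples where the normalized prediction matches the label."""
    if not examples:
        raise ValueError("validation set is empty")
    correct = sum(
        predict(text).strip().lower() == label.strip().lower()
        for text, label in examples
    )
    return correct / len(examples)


def compare_models(base_predict, tuned_predict, examples, min_gain=0.0) -> dict:
    """Score both models on the same validation set and check a KPI-style gain threshold."""
    base = accuracy(base_predict, examples)
    tuned = accuracy(tuned_predict, examples)
    return {"base": base, "tuned": tuned, "gain": tuned - base,
            "passes": tuned - base > min_gain}
```

Scoring both models on the identical validation set is what makes the gain meaningful; `min_gain` is where a business KPI threshold would plug in.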

Phase 5 checklist (Deployment & MLOps):

- Deploy the best model as a versioned API endpoint.

- Set up monitoring to track performance and detect data drift.

- Build a feedback loop for users to flag bad outputs.

- Schedule periodic retraining (e.g., quarterly) to maintain performance.
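For a classifier like the fintech sentiment model, one lightweight drift signal is a shift in the distribution of predicted labels between a baseline window and a recent window. The sketch below computes the Population Stability Index (PSI), a standard drift statistic; the function names and the smoothing floor `eps` are choices of this sketch, and label drift is only one signal alongside the user flags described above.

```python
import math
from collections import Counter


def _shares(labels, categories, eps=1e-4):
    """Share of each category, floored at eps so the log term stays finite."""
    if not labels:
        raise ValueError("need at least one label")
    counts = Counter(labels)
    return [max(counts[c] / len(labels), eps) for c in categories]


def population_stability_index(baseline, recent, categories) -> float:
    """PSI = sum over categories of (recent - baseline) * ln(recent / baseline)."""
    p = _shares(baseline, categories)
    q = _shares(recent, categories)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))
```

A commonly quoted rule of thumb reads PSI below 0.1 as stable and above 0.25 as a significant shift worth investigating, possibly ahead of the scheduled retraining.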

Next steps:

- Scope your use case: Use the framework above to decide if fine-tuning is truly necessary for your business goal.

- Estimate your data prep cost: Identify who will label your data and how long it will take. This is your biggest upfront investment.

- Plan a small pilot: Start with a 2-week pilot project to fine-tune a model on just 500 high-quality examples to prove the value before committing more resources.

Fine-tuning embeds a new skill into the model's core logic. If you just need the model to know new facts, RAG is almost always the better, more scalable option.

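Decision Matrix

| Approach | Best for | Trade-offs |
|---|---|---|
| Prompt engineering | Tasks where clearer instructions produce the desired output, including many style and formatting tasks | Fastest and cheapest; the first thing to try |
| RAG | Questions that need recent or proprietary knowledge (docs, wikis, support tickets) | Grounds answers in facts and reduces hallucinations; for new knowledge, faster, cheaper, and more scalable than fine-tuning |
| Fine-tuning | Teaching a new behavior: a consistent brand voice, a strict output format such as valid JSON, or a nuanced skill | Requires a curated dataset (500-5,000 examples), senior AI engineers, and ongoing MLOps |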

Practical Examples: Fine-Tuning in Production

Theory is great, but real-world results are what matter. Here are two examples of how focused teams use fine-tuning to create a competitive advantage.

Example 1: Fintech Startup Builds a Niche Sentiment Analyzer

A small fintech startup needed to outperform generic sentiment analysis tools, which often misunderstand financial news. A general model might see "volatile market" as negative, missing the context crucial for traders.

Their goal was a sentiment model that understood financial jargon. They chose Llama 3 8B, a powerful yet manageable base model.

How They Did It:

LoRA Configuration Snippet:

This sample config using Hugging Face's PEFT library shows how LoRA is set up. Key parameters like `r` control the adapter's capacity, while `target_modules` applies the changes to specific parts of the model architecture.

```python
from peft import LoraConfig

# Configuration for LoRA fine-tuning
lora_config = LoraConfig(
    r=16,  # Rank of the update matrices
    lora_alpha=32,  # Alpha parameter for scaling
    target_modules=["q_proj", "v_proj"],  # Apply LoRA to query and value projections
    lora_dropout=0.05,  # Dropout applied to the LoRA path during training
    bias="none",  # Keep all bias terms frozen
    task_type="CAUSAL_LM",  # Decoder-only language model
)
```
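The config above also determines how small the trainable fraction is. Each adapted linear layer with input size d_in and output size d_out gains an A matrix (r × d_in) and a B matrix (d_out × r), so r × (d_in + d_out) new parameters. A sketch that counts them, assuming Llama 3 8B's published shapes (hidden size 4096, 32 layers, grouped-query attention with 8 key/value heads of size 128, so `v_proj` outputs 1024) and a total of roughly 8.03 billion parameters; verify these against your checkpoint's config before relying on the numbers.

```python
def lora_param_count(num_layers: int, rank: int,
                     module_shapes: dict[str, tuple[int, int]]) -> int:
    """Trainable LoRA parameters: each adapted Linear(d_in, d_out) gains
    A (rank x d_in) and B (d_out x rank), i.e. rank * (d_in + d_out)."""
    per_layer = sum(rank * (d_in + d_out) for d_in, d_out in module_shapes.values())
    return num_layers * per_layer


# Assumed Llama 3 8B attention shapes as (d_in, d_out) for the targeted modules.
LLAMA3_8B_SHAPES = {"q_proj": (4096, 4096), "v_proj": (4096, 1024)}

trainable = lora_param_count(num_layers=32, rank=16, module_shapes=LLAMA3_8B_SHAPES)
base_params = 8.03e9  # approximate total for Llama 3 8B (assumption)
print(f"{trainable:,} trainable params ({trainable / base_params:.3%} of the base model)")
```

With `r=16` on `q_proj` and `v_proj`, this comes to roughly 6.8 million trainable parameters, under 0.1% of the base model. Raising `r` grows the count linearly, and each extra entry in `target_modules` adds its own share.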

Example 2: E-commerce Company Automates On-Brand Product Descriptions

An online fashion retailer was spending too much time writing product descriptions manually, leading to an inconsistent brand voice. They needed to generate descriptions that were not only accurate but also captured their chic, playful personality.

Their MLOps Pipeline:

Business Impact: The time to get a product description live dropped from 20 minutes to under 2 minutes, enabling them to scale their product catalog 10x faster while maintaining brand consistency.

Deep Dive: Methods, Trade-offs, and Risks

Fine-tuning isn't a single technique but a spectrum of methods, each with different implications for cost, time, and performance. Choosing the right one is a key technical leadership decision.

Full Fine-Tuning vs. Parameter-Efficient Fine-Tuning (PEFT)

Full Fine-Tuning updates every single parameter in the base model (e.g., an open-weight model such as Llama 3 or Mistral). It offers the highest potential performance but is extremely expensive and risks "catastrophic forgetting," where the model loses general capabilities. It's overkill for most use cases.

Parameter-Efficient Fine-Tuning (PEFT) is the modern, practical alternative. PEFT methods freeze the base model's weights and train a small number of new parameters. You get most of the customization benefits at a fraction of the cost.

One of the most popular PEFT methods is Low-Rank Adaptation (LoRA). It freezes the original weights and trains small low-rank update matrices (the "adapters") attached to selected layers, cutting compute costs by up to 90%. Today, over 65% of enterprise AI deployments rely on PEFT methods like LoRA. You can dig deeper into these LLM fine-tuning techniques on lakera.ai.
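The mechanism behind those adapters fits in a few lines. This is a toy, pure-Python sketch of the formulation from the original LoRA paper, y = W x + (alpha / r) * B A x, with B initialized to zero so training starts from the unchanged base model. `LoRALinear` and `matvec` are hypothetical names; real libraries such as PEFT implement this with tensors and their own initialization defaults.

```python
import random


def matvec(matrix, vector):
    """Multiply a matrix (a list of rows) by a vector (a list)."""
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


class LoRALinear:
    """Toy LoRA layer: y = W x + (alpha / r) * B (A x).

    W is frozen; only A (r x d_in) and B (d_out x r) would be trained.
    """

    def __init__(self, weight, rank, alpha, seed=0):
        d_out, d_in = len(weight), len(weight[0])
        rng = random.Random(seed)
        self.weight = weight       # frozen pretrained weights, d_out x d_in
        self.scale = alpha / rank  # the lora_alpha / r scaling factor
        # A gets small random values; B starts at zero, so the adapter
        # contributes nothing until training updates B.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]

    def trainable_params(self) -> int:
        return sum(len(row) for row in self.A) + sum(len(row) for row in self.B)

    def forward(self, x):
        base = matvec(self.weight, x)
        update = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, update)]


W = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]]
layer = LoRALinear(W, rank=1, alpha=2)
print(layer.forward([1.0, 0.0, -1.0]))  # [-2.0, -2.0], identical to W x because B is zero
```

In this tiny example the savings are invisible (5 trainable values versus 6 frozen ones). At real sizes they dominate: a 4096 × 4096 projection has about 16.8 million weights, while its r=16 adapter has 131,072.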

The Hidden Costs and Risks

The real cost of fine-tuning goes far beyond GPU hours.

Checklist: Your 5-Phase Fine-Tuning Project Plan

Use this checklist to run a disciplined fine-tuning project from start to finish. This structured process helps de-risk your investment and accelerates time-to-value.


Phase 1: Strategy & Scoping (1 week)

Phase 2: Data Preparation (2-3 weeks)

Phase 3: Training & Experimentation (1-2 weeks)

Phase 4: Evaluation & Safety (1 week)

Phase 5: Deployment & MLOps (Ongoing)

What to do next

Ready to get the right expertise on your team? At ThirstySprout, we connect you with elite AI/ML engineers who can run this entire playbook for you.

