It’s Monday morning. Sales wants an AI copilot in the product this quarter. Your engineers are already overloaded. A vendor says they can ship everything fast. A candidate with “LLM” on their resume wants a top-tier salary. If you make the wrong call now, you burn six figures and lose a quarter.

Founders get trapped here because they ask broad questions about AI and get polished, useless answers. One advisor pushes data scientists. Another pushes ML engineers. Your team wants to stitch together APIs and prompt templates and hope it holds up in production. Six weeks later, you have demos, not a system the business can trust.

The standard has changed. Competitors are already putting AI into workflows, support, search, forecasting, and internal tools. You do not need a research team. You need clear hiring criteria, the right operating model for your stage, and a realistic budget tied to shipped outcomes.

This guide is built for operators. It answers the 10 questions about AI that decide whether your AI function produces value or turns into expensive confusion. You’ll get direct recommendations on who to hire, how to interview them, when to use contractors or fractional leaders, when to buy instead of build, and how to avoid creating cloud spend, security risk, and maintenance burden you did not plan for.

You’ll also get concrete interview questions, scorecard examples, and budget ranges you can use today.

The goal is simple. Help you make sound hiring and strategy decisions in weeks, avoid the wrong early hires, and build an AI team that ships production systems instead of collecting experiments.

1. What skills and experience should I look for when hiring AI engineers?

Organizations often hire the wrong AI engineer because they optimize for buzzwords instead of shipped systems. You don’t need someone who has taken a few model courses and can demo a notebook. You need someone who can move from messy data to production behavior you can trust.

Start with three filters. Can they build models or AI workflows? Can they write solid software? Can they operate what they ship after launch? If one of those is missing, you’re hiring a partial contributor, not an AI engineer.

What good looks like

A strong AI engineer should be able to explain a real production project with specifics. For example, a recommendation system engineer from a product team like Mailchimp should be able to discuss feature pipelines, offline evaluation, rollout guardrails, and how they handled cold start users. An engineer from a fintech environment like Intuit should be able to explain model risk, data quality controls, and how they shipped under compliance constraints.

Ask for shipped work, not certificates.

- Look for production depth: Ask what happened after deployment. Did latency spike, did data drift, did users misuse the feature, did costs rise?

- Check software quality: Review GitHub repos or internal code samples for tests, structure, documentation, and deployment readiness.

- Match domain exposure: A fintech AI engineer and a marketing AI engineer may both be talented, but domain context changes failure modes.

- Test communication: In remote teams, clear writing matters almost as much as technical depth.

Practical rule: Hire people who can explain trade-offs, not just architecture diagrams.

Mini scorecard you can use

Use a simple hiring rubric with four categories scored red, yellow, or green.

- Modeling judgment: Can they choose between RAG, fine-tuning, classification, ranking, or rules?

- Engineering maturity: Can they package, test, deploy, and monitor systems?

- Business understanding: Can they connect technical choices to user value and risk?

- Ownership: Have they handled incidents, poor outputs, or model regressions themselves?

A junior candidate might impress you with model terminology. A senior one will tell you where the system failed and how they fixed it. That’s who you want.

2. How do I build an AI team quickly without months of recruitment?

Your founder wants an AI feature live this quarter. Product is pushing. Sales already mentioned it in deals. If you open five full-time roles at once, you will burn 8 to 12 weeks on recruiting and still lack the one person who can make the system work.

Build around the immediate constraint.

Early-stage teams rarely need an "AI department." They need one senior operator who can define the use case, choose the stack, set evaluation criteria, and stop the team from building an expensive demo that fails in production. After that, hire around the actual blocker.

Fastest team shape for speed

Use a staged build, not a hiring spree.

Start with one senior hire on a fractional or contract basis. This person should own architecture, scope, risk, vendor decisions, and a hiring plan. In many companies, that is a senior ML engineer with production experience. In others, it is an AI architect or MLOps lead. Titles matter less than shipped systems.

Then add the smallest team that can ship.

- Week 1: Fractional lead. Defines use case, evaluation plan, stack, security constraints, and a 90-day roadmap.

- Week 2 to 4: Execution hire. Usually an ML engineer or LLM engineer focused on the main bottleneck.

- After initial prototype: Reliability hire. Add MLOps or data engineering support when usage, data pipelines, or model monitoring start breaking.

- Cross-functional coordination: Product owner. Use a strong PM or engineering manager if legal, support, design, and backend all need to move together.

That sequence saves money because each hire has a job to do on day one.

Hiring sequence I recommend for Seed to Series A

If you are adding AI to an existing SaaS product, use this order:

- Bring in a fractional senior expert for 4 to 8 weeks.

- Have them produce a system brief, risk list, interview loop, and scorecard.

- Hire one builder tied to the launch blocker.

- Keep specialists off the org chart until the problem proves they are needed.

Example. A support platform wants an assistant over internal docs and tickets. The first hire is not a research scientist. It is a senior person who can define retrieval quality, fallback logic, human handoff, prompt versioning, and cost controls. The second hire is the engineer who wires that system into the product. A third hire only appears if traffic grows, latency gets ugly, or evaluation starts failing.

What to ask the fractional lead to deliver

Do not hire an advisor for vague "AI strategy." Pay for specific outputs.

Ask for these four deliverables in the first month:

- A one-page system architecture with clear build vs. buy decisions

- An evaluation plan with target metrics, test cases, and failure thresholds

- A hiring scorecard for the first full-time AI role

- A 90-day staffing plan with budget ranges by role type

If they cannot produce that quickly, they are not the right lead.

For founders still sorting out role boundaries, this breakdown of machine learning engineer vs data scientist responsibilities will help you avoid hiring the wrong first person.

Budget ranges you can use today

Use market-tested bands and move fast.

- Fractional senior AI lead: $8,000 to $25,000 per month

- Contract ML or LLM engineer: $100 to $200 per hour

- Full-time senior ML engineer: $180,000 to $300,000 total annual cash compensation in major US markets

- MLOps or platform support, part-time or contract: $6,000 to $15,000 per month

You do not need all of them at once. In many cases, one strong fractional lead plus one execution engineer is enough to get from concept to production pilot.

Simple scorecard for the first 60 days

Judge this team on operating outcomes, not resume prestige.

- Week 2: Clear use case, architecture choice, evaluation plan

- Week 4: Working prototype on real company data

- Week 6: Cost, latency, and failure modes documented

- Week 8: Pilot live with monitoring and rollback path

If you miss these checkpoints, your issue is usually poor scoping, not lack of headcount.

Build the smallest team that can ship, measure, and fix problems. Add specialists only after the product earns them.

3. What's the difference between machine learning engineers and data scientists?

A lot of AI hiring mistakes come from using these titles like they’re interchangeable. They’re not. If you confuse them, you’ll hire someone strong at analysis when you needed someone strong at shipping.

A data scientist usually starts with questions. What patterns exist in the data? Which variables matter? How should we test a hypothesis? A machine learning engineer starts with systems. How does this model run in production? How will we serve it, monitor it, version it, and keep it working?

Who to hire first

If you’re an early-stage company trying to launch AI features, hire the machine learning engineer first. Shipping beats analysis at that stage. You need someone who can turn an idea into a reliable workflow that plugs into your product.

Use this simple distinction:

- Hire a data scientist when you need experimentation, statistical analysis, forecasting logic, or decision support.

- Hire a machine learning engineer when you need model-backed product features, inference pipelines, monitoring, and deployment reliability.

For a deeper role breakdown, see this guide on machine learning engineer vs data scientist.

Real-world split in practice

In a recommendation team, the data scientist may test ranking approaches and analyze engagement behavior. The ML engineer builds the feature store, model service, deployment pipeline, and rollback logic.

In fintech, the data scientist may design a fraud model and tune thresholds. The ML engineer gets that model into production, wires up data pipelines, and sets alerts for drift and failure.

If the output has to live inside your product with uptime expectations, you probably need an ML engineer.

A lot of founders ask questions about AI as if “smart model work” is the same as “reliable product work.” It isn’t. One role finds patterns. The other turns patterns into a system customers can use without your team babysitting it.

4. How do I hire LLM specialists when demand far exceeds supply?

Your product lead wants an AI assistant in six weeks. Your CTO candidate says you need an “LLM engineer.” A consultant says you need prompt engineers. All three may be wrong.

Hire for the system you need to ship. “LLM specialist” is not a useful job definition by itself. One candidate may be strong at retrieval pipelines and evaluation. Another may be good at model serving and latency control. Another may know how to call an API and write polished demos. Those are very different hires, with very different value.

Your first screen is simple. Ask what production LLM system they owned, what broke, and what they changed. If they cannot explain failure modes, eval design, cost tradeoffs, and rollout decisions in detail, keep looking.

What to test in interviews

Skip vague questions about “AI passion” or model buzzwords. Test judgment under real product constraints.

Use interview questions like these:

- RAG vs fine-tuning: What problem would make you choose retrieval over fine-tuning, and what would make you change your mind?

- Model selection: How would you choose between a frontier API model and an open-weight model for a regulated workflow?

- Cost control: What are the first three ways you would cut token spend in a production assistant?

- Evaluation: How would you measure answer quality, citation quality, refusal quality, and hallucination rate before launch?

- Failure handling: What should the product do when retrieval returns weak context or no context at all?

- Security: How would you prevent sensitive documents from appearing in answers for users without permission?

Strong candidates answer with tradeoffs, metrics, and system design choices. Weak candidates stay at the prompt level.

Mini-case and scorecard

Give them a case that looks like your business, not a whiteboard puzzle.

Example: you are building a finance copilot that answers internal policy questions. Answers must cite company documents. Wrong answers create compliance risk. Ask the candidate to design the stack, define the eval plan, and explain how they would launch it safely.

A strong answer usually includes permission-aware indexing, chunking strategy, retrieval testing, document freshness rules, citation checks, fallback behavior, human review for high-risk queries, prompt versioning, and offline eval sets before production traffic. A weak answer usually defaults to “use a better model” or “tune the prompt.”
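To make two items in that strong answer concrete, here is a minimal sketch of permission filtering plus fallback behavior. It is an illustration under stated assumptions, not a reference design: `Hit`, `select_context`, the `min_score` threshold, and the group names are all hypothetical, and a real system would usually enforce permissions inside the index or query rather than after retrieval.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hit:
    doc_id: str
    score: float               # retrieval similarity, higher is better
    allowed_groups: frozenset  # groups permitted to read the source document


def select_context(hits, user_groups, min_score=0.5, top_k=3):
    """Return (doc_ids, action) for a single query.

    Hits the user may not read are dropped before ranking, so a restricted
    document can never be cited. If nothing clears min_score, the caller
    should hand off to a human instead of letting the model guess.
    """
    visible = [h for h in hits if h.allowed_groups & set(user_groups)]
    strong = sorted(
        (h for h in visible if h.score >= min_score),
        key=lambda h: h.score,
        reverse=True,
    )[:top_k]
    if not strong:
        return [], "handoff_to_human"
    return [h.doc_id for h in strong], "answer_with_citations"


# Hypothetical retrieval results for one policy question.
hits = [
    Hit("expense-policy", 0.82, frozenset({"finance", "all-staff"})),
    Hit("board-minutes", 0.91, frozenset({"executives"})),
    Hit("travel-faq", 0.31, frozenset({"all-staff"})),
]
print(select_context(hits, {"all-staff"}))
print(select_context(hits, {"contractors"}))
```

A candidate who describes something like this without prompting, and then explains how they would choose the threshold from an offline eval set, is showing the judgment you are paying for.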

Use a scorecard with four categories and rate each one from 1 to 5:

- Application design: Can they design the workflow end to end, including retrieval, context assembly, user permissions, and fallback paths?

- Model and vendor judgment: Can they explain when to use hosted models, open models, or a hybrid stack?

- Evaluation rigor: Can they define test sets, failure classes, acceptance thresholds, and review loops?

- Production reliability: Can they handle latency, logging, rollback, monitoring, and abuse controls?

Where to find them, and what to pay

Do not insist on candidates with “LLM” in their last job title. That shrinks your pool and lowers quality.

The best candidates often come from adjacent backgrounds: ML engineers who built search or ranking systems, backend engineers who owned inference services, applied scientists who shipped NLP products, and MLOps engineers who supported model deployment at scale. Teach product context. Do not try to teach engineering judgment from scratch.

For early-stage teams, the fastest path is usually one senior builder who has shipped a real LLM workflow, plus contract support for data prep, eval ops, or infrastructure. Expect senior full-time hires to be expensive. Fractional or contract specialists often make more sense for the first 90 days if your scope is still changing.

The hiring market also rewards flexibility. Good LLM specialists are rarely loyal to one vendor or one model family. You want someone who can compare proprietary APIs, open-weight models, and retrieval-first architectures based on cost, privacy, latency, and control. Vendor tribalism is a red flag.

If you remember one rule, use this one. Hire people who can explain how they made an LLM product safer, cheaper, and more reliable after the first launch. That is the difference between a demo builder and an operator.

5. What is MLOps and why is it critical for scaling AI?

Your team ships an AI feature on Friday. By Tuesday, support tickets spike, output quality drifts, latency doubles, and nobody can answer three basic questions: what changed, which users are affected, and how to roll it back safely.

That is the problem MLOps solves.

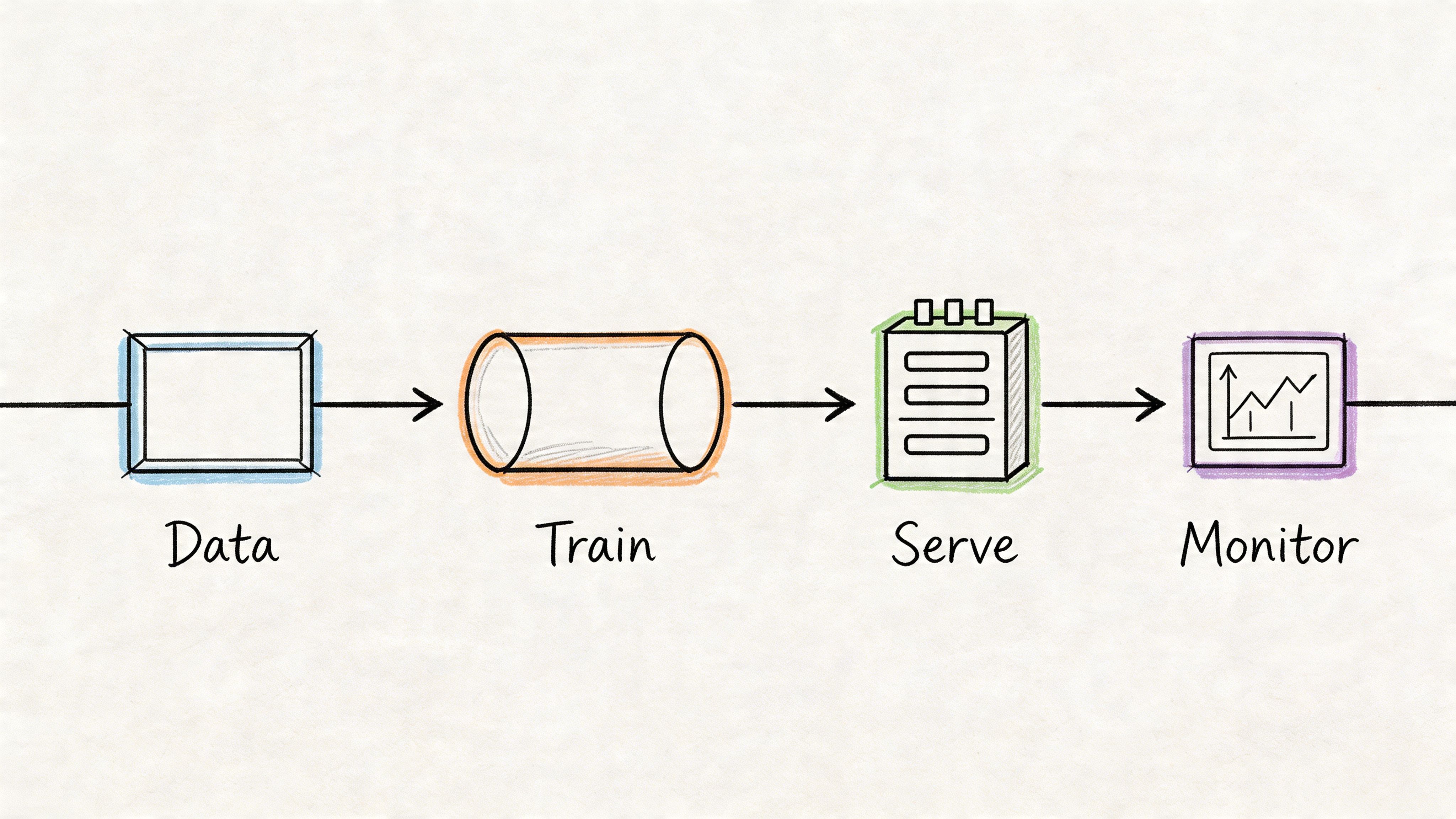

MLOps is the operating system for production AI. It covers the workflows and controls that keep models, prompts, and data pipelines reliable after launch. That means versioning, testing, deployment, monitoring, rollback, data checks, access controls, and retraining rules.

If you cannot reproduce a model release, trace a bad output to a version, or catch quality decay before customers do, your AI function will stall. Engineering time gets burned on incidents instead of shipping.

What MLOps actually covers

Founders often hear "MLOps" and assume it is a platform purchase or a late-stage concern. Wrong. It starts the moment model output affects a customer, a workflow, or a decision.

For practical hiring and budgeting, break it into five responsibilities:

- Data control: Check schema, freshness, null rates, and training-serving consistency.

- Release management: Track model versions, prompts, datasets, configs, approvals, and rollback paths.

- Production serving: Handle latency, autoscaling, secrets, failover, and traffic routing.

- Observability: Monitor uptime, cost, output quality, drift, abuse, and failure patterns.

- Response loops: Trigger human review, disable risky releases, retrain, or revert based on clear thresholds.

That is why MLOps matters. It gives you control over quality, cost, and risk after the demo.

What a lean setup looks like in the first 90 days

Do not build a giant platform team too early. Start with a thin layer of discipline that your existing engineering team can maintain.

A lean MLOps setup for one or two AI features should include a staging environment, basic data validation, a model and prompt registry, structured logs, quality dashboards, alerting, and one rollback procedure that has been tested at least once. If your team cannot describe those pieces in plain English, stop adding new AI features until they can.
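The registry and rollback pieces are smaller than they sound. The sketch below is one possible shape, assuming an in-memory store and hypothetical names (`PromptRegistry`, `model-a`, `model-b`); a real setup would persist versions in a database or in Git and record who approved each release.

```python
class PromptRegistry:
    """Append-only history of releases; rollback re-points the live version."""

    def __init__(self):
        self._versions = []  # list of (version, prompt_text, model_name)
        self._live = None    # index into _versions, or None before first release

    def release(self, prompt_text, model_name):
        """Record a new release and make it live. Returns the version number."""
        version = len(self._versions) + 1
        self._versions.append((version, prompt_text, model_name))
        self._live = version - 1
        return version

    def live(self):
        """Return the (version, prompt_text, model_name) currently serving."""
        if self._live is None:
            raise LookupError("nothing released yet")
        return self._versions[self._live]

    def rollback(self):
        """Point live at the previous release and return it."""
        if self._live is None or self._live == 0:
            raise LookupError("no earlier version to roll back to")
        self._live -= 1
        return self.live()


registry = PromptRegistry()
registry.release("Answer using only the cited documents.", "model-a")
registry.release("Answer briefly and cite sources.", "model-b")
print(registry.live())      # the second release is serving
print(registry.rollback())  # back to the first release
```

Because history is never overwritten, the team can always say which prompt and model produced a given output, which is the point of the scorecard questions that follow.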

Use a simple operator scorecard:

- Can we reproduce the current model or prompt in production?

- Can we compare the current release against the last stable version?

- Can we detect bad outputs before customers escalate them?

- Can we shut off or route around a failing component fast?

- Do we know the unit economics per request, workflow, or customer?
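For the unit economics question, the arithmetic is simple enough to keep in a shared script. This is a rough sketch assuming token-based vendor pricing; every number below is a placeholder rather than a real price, and the function names are hypothetical.

```python
def cost_per_request(input_tokens, output_tokens,
                     price_in_per_1k, price_out_per_1k, fixed_cost=0.0):
    """Dollar cost of one request: token charges plus any fixed per-call cost."""
    return (input_tokens / 1000 * price_in_per_1k
            + output_tokens / 1000 * price_out_per_1k
            + fixed_cost)


def monthly_cost_per_customer(requests_per_month, **request_kwargs):
    """Scale the per-request cost by how often one customer calls the feature."""
    return requests_per_month * cost_per_request(**request_kwargs)


# Placeholder inputs only: substitute your vendor's prices and logged token counts.
example = monthly_cost_per_customer(
    requests_per_month=400,
    input_tokens=2000,
    output_tokens=500,
    price_in_per_1k=0.001,
    price_out_per_1k=0.002,
)
print(f"${example:.2f} per customer per month")
```

Compare that figure with what the customer pays you. If the team cannot fill in the inputs from production logs, that gap is the first thing to fix.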

If you want to pressure-test candidates who claim MLOps experience, use a few of these technical interview questions for engineers adapted for production AI systems.

A concrete example

A fraud model performs well in offline testing, then starts missing obvious cases in production. The issue is not the model architecture. An upstream event field changed format, feature values shifted, and nobody had an alert on data quality. Support sees the problem first. Risk sees it later. Engineering spends a week reconstructing the release history.

A basic MLOps layer catches the schema change, flags the drift, routes low-confidence outputs for review, and gives the team a rollback option. That is the difference between a contained incident and a trust problem.
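A check like the one that would have caught this incident fits in a few dozen lines. The sketch below assumes hypothetical field names (`amount`, `country`, `event_time`) and illustrative thresholds; it shows the kind of schema, null-rate, and freshness checks described above, not a full validation framework.

```python
from datetime import datetime, timedelta, timezone

# Assumed schema for the example; replace with your own feature definitions.
EXPECTED_TYPES = {"amount": float, "country": str, "event_time": datetime}


def check_batch(rows, now, max_null_rate=0.05, max_age=timedelta(hours=1)):
    """Return a list of human-readable alerts for one batch of feature rows."""
    if not rows:
        return ["empty batch"]
    alerts = []
    for field, expected in EXPECTED_TYPES.items():
        values = [r.get(field) for r in rows]
        null_rate = sum(v is None for v in values) / len(rows)
        if null_rate > max_null_rate:
            alerts.append(f"{field}: null rate {null_rate:.0%}")
        if any(v is not None and not isinstance(v, expected) for v in values):
            alerts.append(f"{field}: unexpected type (schema change?)")
    times = [r["event_time"] for r in rows
             if isinstance(r.get("event_time"), datetime)]
    if times and now - max(times) > max_age:
        alerts.append("event_time: data is stale")
    return alerts


# Example: an upstream change starts sending amounts as strings.
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
rows = [
    {"amount": "12.50", "country": "US", "event_time": now - timedelta(minutes=5)},
    {"amount": "99.00", "country": None, "event_time": now - timedelta(minutes=3)},
]
print(check_batch(rows, now))
```

Run a check like this before features reach the model and send any non-empty result to the owning engineer, so engineering hears about the problem before support does.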

Hiring and budget guidance

Early-stage companies usually do not need a full MLOps department. They need ownership.

For one production AI workflow, assign MLOps responsibility to a strong ML engineer, platform engineer, or senior backend engineer who has operated distributed systems before. Add contract help if you need cloud infrastructure, CI/CD, observability, or data pipeline support. Once you are running multiple models or high-volume LLM workflows, a dedicated MLOps or platform hire starts paying for itself because reliability work stops being part-time cleanup.

Budget for tools, not just people. Logging, monitoring, feature storage, eval pipelines, and cloud costs will show up before you expect them. Founders who ignore that line item end up blaming the model for what is really an operations failure.

MLOps is how you turn AI from a promising prototype into a repeatable product capability. Treat it as a required operating discipline, not a cleanup task for later.

6. How do I evaluate AI candidates' technical skills without years of experience with them?

A founder hires the candidate with the best AI resume, gets a polished demo in week two, and learns in month three that nothing can survive real traffic, messy data, or product constraints. That mistake is expensive and common.

You do not need deep personal expertise in every AI stack. You need a hiring process that forces candidates to show judgment under constraints. Evaluate how they reason, what they shipped, and whether they can explain trade-offs in plain English.

Use a three-part process and keep it consistent across candidates. One, run a shipped-project walkthrough. Two, do a role-specific technical interview. Three, assign a short take-home based on an actual problem from your roadmap. This gives you signal on execution, communication, and product sense instead of resume theater.

If you need a baseline interview bank, start with these technical interview questions for engineers and adapt them to the role you are hiring for.

What to ask in the live interview

Start with one project the candidate claims mattered. Then press until you know whether they owned the hard parts or just worked near them.

Ask questions like these:

- For LLM engineers: How would you reduce hallucinations in a support assistant while keeping answers useful and fast?

- For data scientists: How would you prove that better model output improved a business metric, not just an offline benchmark?

- For MLOps engineers: A model looks good in offline evaluation and fails in production. What do you check first, and in what order?

- For data engineers supporting AI: How would you keep training data, feature logic, and inference inputs consistent across environments?

Then ask about failure. Ask what broke after launch, how they detected it, what they changed, and what they would do differently now. Strong operators answer with specifics. Weak candidates drift into abstractions.

A scorecard you can use today

Do not end interviews with vague reactions like “seems sharp.” Score five areas on a simple 1 to 5 scale:

- System design: Can they design an AI workflow that fits your product and constraints?

- Evaluation: Do they know how to measure quality, business impact, and failure modes?

- Data judgment: Can they spot data quality issues, leakage, weak labels, and distribution problems?

- Production thinking: Do they account for latency, monitoring, fallbacks, and cost?

- Communication: Can they explain decisions clearly to product, engineering, and leadership?

A candidate who scores 4 or 5 in one area and 2 in everything else is a specialist. Hire that person only if the gap matches your immediate need.
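If several interviewers fill in the same scorecard, a small helper keeps the specialist rule consistent across candidates. This sketch reads "2 in everything else" as "2 or below", which is an assumption, and the area keys are hypothetical names for the five categories above.

```python
AREAS = ("system_design", "evaluation", "data_judgment",
         "production_thinking", "communication")


def specialist_area(scores):
    """Return the single strong area if the card fits the specialist pattern.

    Pattern (assumed reading of the rule): exactly one area scored 4 or 5
    and every other area scored 2 or below. Returns None otherwise.
    """
    missing = [a for a in AREAS if a not in scores]
    if missing:
        raise ValueError(f"missing scores: {missing}")
    if any(not 1 <= scores[a] <= 5 for a in AREAS):
        raise ValueError("scores must be between 1 and 5")
    strong = [a for a in AREAS if scores[a] >= 4]
    weak = [a for a in AREAS if scores[a] <= 2]
    if len(strong) == 1 and len(weak) == len(AREAS) - 1:
        return strong[0]
    return None


candidate = {"system_design": 2, "evaluation": 5, "data_judgment": 2,
             "production_thinking": 2, "communication": 2}
print(specialist_area(candidate))
```

A non-None result is a prompt for the debrief, not a verdict: ask whether that one area is the gap you need filled right now.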

A practical take-home

Keep the assignment short enough that good candidates will do it. Ninety minutes is a reasonable ceiling.

Example prompt: “Design an internal support assistant for company docs. Show your retrieval approach, evaluation plan, fallback behavior, and the dashboard you would want after launch.”

Review it against this checklist:

- Clear assumptions

- Trade-off discussion

- Realistic evaluation plan

- Sensible failure handling

- Cost and latency awareness

For early teams, this process also helps you decide whether to test someone in a contract-to-hire position before committing to a full-time role. That is often the right move when the candidate looks strong but your scope is still changing.

The rule is simple. Hire for demonstrated judgment, not AI vocabulary. Anyone can talk about models. You need people who can turn messy requirements into a system that works.

7. Hiring models and costs: full-time, contract, fractional. What should I choose and what will it cost?

Choose the hiring model based on continuity and uncertainty. That’s the right frame. Not prestige. Not what other startups are doing. Ask two questions. Is this capability core to our product? Are we still learning what we need?

If the work is core and ongoing, hire full-time. If the work is specialized or time-bound, use contract help. If the direction is unclear and the potential impact is substantial, start with fractional leadership.

The practical decision rule

Use full-time for roles that own systems you’ll keep operating. Use contract talent for narrow builds, audits, migrations, or specialist implementation. Use fractional leaders when you need senior judgment before you can write the right full-time job description.

Mini-case one. A Series A startup wants to embed an AI workflow into its core product. The CTO brings in a fractional AI lead to define architecture and vendor choices, then hires one full-time ML engineer and one backend engineer to build the first release.

Mini-case two. A larger SaaS company already has data and platform teams but lacks retrieval and evaluation expertise. It uses a contractor to build the first RAG system, documents the stack, then decides whether the capability is strategic enough to internalize.

When each model works best

- Full-time: Best for product ownership, long-term iteration, institutional knowledge, and cross-team trust.

- Contract: Best for audits, migration work, one-off builds, and proving a use case fast.

- Fractional: Best for architecture, hiring calibration, technical due diligence, and mentoring early hires.

If you’re considering a trial path before making a permanent hire, this explanation of a contract-to-hire position is a useful baseline.

One caution. Fractional talent is great at direction and review. It’s weaker as the sole execution engine if your team lacks strong internal operators. Use it to reduce expensive mistakes, then convert proven needs into durable roles.

8. How do I assess whether we need to build vs. buy AI capabilities?

A founder approves a six-month AI build, hires expensive specialists, and ends up with a weaker version of what an API vendor already shipped. I see this constantly. The right default is simple. Buy first. Build only where owning the capability changes revenue, margin, or defensibility.

Treat this as an operating decision, not an engineering preference. Your team is not choosing between “technical” and “non-technical” paths. You are choosing where to spend scarce time, who owns risk, and how fast you can get to production.

The build vs. buy scorecard

Use a one-page scorecard and force a decision. Score each category from 1 to 5.

- Differentiation. Does this capability directly improve the product in a way customers will notice and pay for?

- Data advantage. Do you have proprietary data, feedback loops, or workflows a vendor cannot easily replicate?

- Performance requirements. Do accuracy, latency, privacy, or control requirements exceed what off-the-shelf tools can handle?

- Economics at scale. Will vendor pricing become unacceptable once usage grows?

- Operational readiness. Do you have people who can evaluate, deploy, monitor, and maintain what you build?

- Time to value. Do you need a result this quarter, or can you wait for a custom system to mature?

Here’s the rule. If differentiation and data advantage score low, buy. If differentiation is high but operational readiness is low, buy now and plan a staged internal build later. If all six scores are high, build.
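Written as code, the rule looks like the sketch below. It rests on assumptions the rule itself does not state: "low" is taken as 2 or below, "high" as 4 or above, a higher score in every category is taken to favor building, and any scorecard the rule does not cover goes back to the decision meeting.

```python
CATEGORIES = ("differentiation", "data_advantage", "performance_requirements",
              "economics_at_scale", "operational_readiness", "time_to_value")


def build_or_buy(scores, low=2, high=4):
    """Apply the three-part rule to a 1-to-5 scorecard (thresholds are assumptions)."""
    for category in CATEGORIES:
        if category not in scores or not 1 <= scores[category] <= 5:
            raise ValueError(f"need a 1-5 score for {category}")
    if scores["differentiation"] <= low and scores["data_advantage"] <= low:
        return "buy"
    if scores["differentiation"] >= high and scores["operational_readiness"] <= low:
        return "buy now, plan a staged internal build"
    if all(scores[c] >= high for c in CATEGORIES):
        return "build"
    return "no rule applies: decide in the meeting"


# Hypothetical scorecard: high differentiation, but nobody to run the system yet.
support_copilot = {"differentiation": 4, "data_advantage": 4,
                   "performance_requirements": 3, "economics_at_scale": 3,
                   "operational_readiness": 2, "time_to_value": 2}
print(build_or_buy(support_copilot))
```

The value is not the function. It is that writing the thresholds down forces the team to agree on what "low" and "high" mean before anyone argues about the answer.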

What to ask in the decision meeting

Don’t let this become a vague strategy debate. Ask direct questions.

- What business metric improves if we own this capability?

- What breaks if the vendor changes pricing, terms, or model behavior?

- What would a good enough vendor version get us in 30 days?

- What would an internal version realistically take in headcount, budget, and maintenance?

- Who will own evaluation, incident response, and ongoing model updates after launch?

If nobody can answer those questions clearly, you are not ready to build.

Budget ranges you can use

Buying is usually the cheaper path for copilots, search, extraction, summarization, and internal productivity tools. Expect software spend plus one engineering owner. Building starts to make sense when usage is heavy, workflows are unique, or mistakes are expensive.

A practical benchmark:

- Buy-first pilot. Low five figures to get a production trial live with vendor tools, integration work, and evaluation.

- Hybrid approach. Mid five figures to low six figures once you add retrieval, routing, guardrails, and custom evaluation.

- Built in-house capability. Six figures and up once you factor in hiring, infrastructure, testing, observability, and ongoing support.

Those ranges are broad on purpose. The important point is this. Founders routinely underestimate operating cost and overestimate the value of owning the stack early.

Three common decisions

For a support copilot, buy the model layer and build the workflow layer. Your advantage comes from your knowledge base, routing logic, QA process, and product integration.

For fraud detection, start with a vendor if speed matters. Build once your transaction patterns, false positive costs, and compliance requirements justify owning the decision logic.

For content generation, buy the model. Build the evaluation harness, templates, approval flow, and product controls. That is where reliability usually comes from.

Buy the commodity. Build the differentiator.

If you’re running a distributed team, your choice also affects how hard the system will be to operate day to day. A bought solution is often easier to document, hand off, and support across time zones. This guide on managing a remote team effectively is a useful reference if remote execution is part of the decision.

The same logic applies if you want to launch a remote business with AI. Start with proven tools, get customer value fast, and internalize only the pieces that create real advantage.

9. How do I integrate remote AI engineers into my company culture and workflows?

Remote AI hires fail for predictable reasons. They aren’t given enough product context. They work in a separate lane from the app team. Nobody documents decisions. Reviews focus on code but ignore model behavior. The engineer looks productive for a month, then the rest of the company realizes they’ve built an isolated system nobody else can operate.

Fix that with process, not slogans.

The operating model that works

Remote AI engineers need the same things every strong remote technical hire needs, plus a little more structure around data, evaluation, and product behavior.

Start with onboarding that explains the business problem, not just the stack. Pair them with one product partner and one engineering partner. Put architecture decisions in writing. Require short design docs before implementation and release notes after deployment.

If your managers need a broader remote operating baseline, this guide on how to manage a remote team covers the fundamentals.

A practical remote integration checklist

- Create overlap hours: Make sure they can sync with product and engineering regularly.

- Document decisions: Use Notion, GitHub, or your wiki for prompts, model versions, trade-offs, and incident notes.

- Review outputs, not just code: AI work needs product and quality review, not only engineering review.

- Use async video: Loom works well for model walkthroughs, architecture updates, and incident explanations.

- Assign ownership clearly: Every AI workflow should have a named owner for quality and operations.

A founder building a distributed company can also pick up useful operating ideas from this guide on how to launch a remote business with AI.

Mini-case

A remote ML engineer joins a SaaS company to build lead scoring. In the weak version, they get a Jira board and access to data. In the strong version, they get customer segments, sales definitions, known data gaps, current scoring complaints, and examples of bad downstream outcomes. Only one of those setups produces a useful system.

Remote AI work succeeds when context travels well. Your process has to make that happen.

10. What emerging AI roles and specializations should I be hiring for?

You do not need a team full of trendy AI titles. You need the few specialists tied to the bottlenecks already slowing product delivery, model quality, or unit economics.

A common founder mistake is hiring an "AI expert" for a problem that is really an eval gap, a retrieval problem, or an inference cost problem. That creates expensive overlap and vague ownership. Hire specialists only after the pain is visible in missed releases, unreliable outputs, rising cloud spend, or compliance risk.

The short list worth paying attention to is practical.

- LLM evaluator. Owns eval sets, red-team scenarios, reviewer rubrics, pass-fail thresholds, and release gates. Hire this role when your team keeps debating whether output quality is "good enough" with no shared standard.

- RAG specialist. Owns retrieval design, chunking strategy, indexing, ranking, citation quality, and grounding. Hire this role when your assistant answers confidently but pulls the wrong context or misses key documents.

- Inference engineer. Owns latency, routing, batching, caching, fallbacks, and serving cost. Hire this role when model performance is acceptable but response times and margins are getting worse.

- AI product manager. Owns workflow design, user adoption, guardrails, rollout plans, and success metrics. Hire this role when your models work in demos but fail to fit real customer behavior.

- AI governance lead. Owns audit trails, review policies, approval flows, vendor controls, and risk documentation. Hire this role in regulated products or any workflow where a bad answer creates legal or brand exposure.

Do not hire these roles by title alone. Use a scorecard.

Ask three questions first. What is breaking repeatedly? What does that failure cost you each month? Can a strong generalist solve it within one quarter? If the answer to the third question is no, hire the specialist.
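The three questions can be written down as a small decision rule so every request for a specialist gets judged the same way. This is a minimal sketch, not a prescribed method: the `Bottleneck` fields, the function name, and the `min_monthly_cost_usd` cutoff are all hypothetical, and the 10,000 default is a placeholder you would replace with your own number.

```python
from dataclasses import dataclass


@dataclass
class Bottleneck:
    """One recurring failure in an AI feature (all fields are illustrative)."""
    name: str
    breaks_repeatedly: bool              # Question 1: is it breaking repeatedly?
    monthly_cost_usd: float              # Question 2: what does it cost each month?
    generalist_can_fix_in_quarter: bool  # Question 3: can a generalist fix it in a quarter?


def should_hire_specialist(b: Bottleneck, min_monthly_cost_usd: float = 10_000.0) -> bool:
    """Return True only when the pain is repeated, costly, and beyond a generalist.

    The cost cutoff is an assumed placeholder, not a figure from this guide.
    """
    if not b.breaks_repeatedly:
        return False  # A one-off failure does not justify a dedicated role.
    if b.monthly_cost_usd < min_monthly_cost_usd:
        return False  # The pain is real but too cheap to specialize for.
    return not b.generalist_can_fix_in_quarter


# Example: retrieval keeps failing, it is expensive, and generalists are stuck.
rag_gap = Bottleneck("assistant cites the wrong documents", True, 25_000.0, False)
decision = should_hire_specialist(rag_gap)
```

The point of the sketch is the order of the checks: visible, repeated pain first, cost second, and the generalist question last.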

Here is the operator's version of that decision:

| Bottleneck | First hire | What good looks like in 90 days |
|---|---|---|
| Output quality is inconsistent | LLM evaluator | Eval suite in place, release criteria defined, regression checks running |
| Answers are ungrounded or miss source material | RAG specialist | Retrieval quality improves, citation coverage rises, bad-answer patterns shrink |
| AI features are slow or too expensive | Inference engineer | Lower latency, lower cost per request, clear routing rules by use case |
| Customers do not adopt the feature | AI product manager | Better workflow fit, cleaner UX, measurable usage tied to business outcomes |
| Compliance or trust risk is growing | AI governance lead | Review controls, auditability, incident process, policy ownership |

You can cover a lot with generalists at the start. A senior ML engineer can usually handle prompt design, retrieval, and basic evals. A strong backend engineer can often take the first pass at model serving and caching. Do that early. Specialize only where the bottleneck is repeated, expensive, and clearly owned.
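For a sense of what "basic evals" can look like before you hire an evaluator, here is a minimal sketch of a release gate a generalist could own. It assumes each eval case has already been graded pass or fail. The function names and both thresholds (`min_pass_rate`, `max_regression`) are hypothetical placeholders, not recommended values.

```python
from __future__ import annotations


def pass_rate(results: list[bool]) -> float:
    """Share of eval cases that passed; an empty run counts as 0.0."""
    return sum(results) / len(results) if results else 0.0


def release_gate(
    candidate: list[bool],
    baseline: list[bool],
    min_pass_rate: float = 0.90,   # assumed absolute quality bar
    max_regression: float = 0.02,  # assumed tolerated drop vs. the current release
) -> tuple[bool, str]:
    """Block a release that misses the bar or regresses against the baseline."""
    cand = pass_rate(candidate)
    base = pass_rate(baseline)
    if cand < min_pass_rate:
        return False, f"pass rate {cand:.2f} is below the bar of {min_pass_rate:.2f}"
    if base - cand > max_regression:
        return False, f"regression of {base - cand:.2f} against the baseline"
    return True, "ok"


# Example: the new prompt passes 9 of 10 cases, the current release passes all 10.
ok, reason = release_gate([True] * 9 + [False], [True] * 10)
```

In this example the candidate clears the absolute bar but is still blocked for regressing against the current release, which is exactly the argument a shared standard is meant to settle.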

One area founders underfund is bias and validation coverage. If your product touches hiring, healthcare, finance, education, insurance, or global user bases, assign ownership for dataset coverage, failure analysis, and review policy. If nobody owns that work, it does not happen.

My recommendation is simple. Add emerging AI roles in this order: an evaluator first, a RAG specialist or inference engineer second depending on your product, an AI product manager next if adoption is weak, and a governance lead once trust and compliance risk justify dedicated ownership. That sequence keeps hiring aligned with real operating pain instead of hype.

10-Point AI Hiring & Strategy Comparison

| Item | 🔄 Implementation complexity | ⚡ Resource requirements | ⭐ Expected outcomes | 📊 Ideal use cases | 💡 Key tips |
|---|---|---|---|---|---|
| What skills & experience to look for when hiring AI engineers? | High, varied technical + production experience | Senior ML engineers, MLOps, data engineering; costly recruitment | ⭐⭐⭐⭐ Reduced time-to-production and fewer costly mistakes | Building and scaling production ML systems, mentoring teams | Prioritize shipped projects/GitHub; consider fractional seniors |
| How to build an AI team quickly without months of recruitment? | Medium, process-driven but repeatable | Access to vetted talent pools, fractional/contract hires, remote ops | ⭐⭐⭐ Fast team assembly; shorter time-to-market | Startups needing rapid product launch or gap fills | Use fractional experts first; define clear scopes and onboarding |
| What's the difference between ML engineers and data scientists? | Low–Medium, role clarity required | Distinct hires or multi-skilled engineers depending on stage | ⭐⭐⭐ Clearer role fit, better hiring outcomes | Teams needing both research/insight and production reliability | Define job specs (research vs production); hire ML engineers early |
| How do I hire LLM specialists when demand > supply? | Very high, scarce and fast-evolving skillset | Premium compensation, niche tooling (LangChain, vector DBs) | ⭐⭐⭐⭐ Strong product differentiation for LLM products | LLM-native apps, copilots, retrieval-augmented systems | Test for production LLM experience; consider fractional architects |
| What is MLOps and why is it critical for scaling AI? | Very high, infrastructure + processes | Platform engineers, CI/CD, monitoring, infra (K8s, orchestrators) | ⭐⭐⭐⭐⭐ Scalable, reliable production models and lower ops risk | High-volume predictions, regulated fintech, production LLM serving | Start simple; invest when you have multiple production models |
| How to evaluate AI candidates' technical skills without deep internal expertise? | Medium, requires structured assessment design | Take-homes, expert interviews, portfolio/GitHub review | ⭐⭐⭐ Better hiring signal; fewer false positives | Hiring LLM/ML/MLOps talent when hiring managers lack domain depth | Use real-world take-homes; involve external experts or vetted partners |
| Hiring models and costs: full-time, contract, fractional, which to choose? | Medium, financial and strategic trade-offs | Varies: full-time payroll, contract rates, fractional fees | ⭐⭐⭐ Cost vs continuity optimizations per stage | Early-stage validation, scaling teams, bridging expertise gaps | Early-stage: fractional seniors; core roles should be full-time |
| How to assess whether to build vs. buy AI capabilities? | Medium–High, strategic & technical trade-off analysis | Build: engineering + infra; Buy: API/subscription costs | ⭐⭐⭐ Trade-off between differentiation and speed-to-market | MVPs via APIs; build when data advantage or latency matters | Buy to validate; build when you have unique data or long-term needs |
| How to integrate remote AI engineers into company culture & workflows? | Medium, process and communication-heavy | Async tooling, documentation, overlap hours, onboarding | ⭐⭐⭐ Remote-first talent productivity and retention | Distributed teams, global hiring, 24-hour dev cycles | Document everything, ensure 2–3 hours overlap, pair mentoring |
| What emerging AI roles & specializations should I hire for? | Medium, roles evolving and sometimes ambiguous | Niche specialists (prompt, RAG, inference, safety) or multi-role hires | ⭐⭐⭐ Future-readiness and faster capability building | Scaling LLM products, safety/compliance, inference optimization | Start with multi-skilled engineers; hire specialists when roles mature |

Your Next Steps: From Questions to an Action Plan

Your head of product wants an AI feature this quarter. Your engineering lead is unsure whether you need an LLM engineer, an MLOps hire, or a contractor for 90 days. Your recruiter is getting flooded with candidates who can talk about AI but have never shipped anything useful. That is the moment this article is for.

The 10 questions above are not theory. They are an operator’s playbook for making hiring and strategy decisions with real constraints: timeline, budget, risk, and expected business impact. Use them to decide who to hire, what to test, what to buy, and what to postpone.

Treat AI like a function, not a side project.

The companies getting results are not the ones with the most prototypes. They are the ones with clear ownership, a hiring plan tied to product goals, and a review process that filters out weak candidates before they waste interview time. AI adoption is rising fast, but adoption alone means very little. Plenty of teams can get a demo running. Far fewer can turn that demo into a system that is reliable, monitored, cost-controlled, and tied to a KPI that matters.

Start with three actions.

Name the business problem and assign an owner.

Pick one use case with a clear economic goal: reduce support cost, improve conversion, speed up internal operations, or increase retention. Assign one accountable owner across product, engineering, and operations. If ownership is split across five people, the project will stall.

Turn the 10 questions into a hiring and delivery scorecard.

Review your gaps role by role. Do you need model experimentation, production integration, data pipelines, evals, security review, or cost optimization? Write down the exact capability missing today, the level of seniority required, and whether the work is permanent or temporary. This is how you avoid hiring a generalist for a specialist problem, or a full-time employee for a six-week build.

Run a scoped pilot with a budget and success metric.

Keep the first project narrow. One use case. One delivery owner. One release path. One metric that determines whether to expand, revise, or stop. Good examples include resolution time, manual hours removed, lead qualification accuracy, or revenue influenced. If you cannot define the metric before the project starts, you are funding exploration, not delivery.
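Writing the expand, revise, or stop rule down before the pilot starts keeps the review honest. The sketch below is one possible rule, stated as an assumption rather than a standard: expand if the metric hits its target, revise if it beat the baseline but missed the target, and stop otherwise. The function name and parameters are hypothetical.

```python
def pilot_decision(
    baseline: float,
    observed: float,
    target: float,
    lower_is_better: bool = False,
) -> str:
    """Map one pilot metric to 'expand', 'revise', or 'stop' (assumed rule)."""
    if lower_is_better:
        # Flip signs so that "bigger is better" holds for the comparisons below.
        baseline, observed, target = -baseline, -observed, -target
    if observed >= target:
        return "expand"  # The pilot hit the metric agreed before launch.
    if observed > baseline:
        return "revise"  # Real improvement, but short of the target.
    return "stop"        # No improvement over how things worked before.


# Example: resolution time fell from 40 to 30 minutes against a 25-minute target.
outcome = pilot_decision(baseline=40.0, observed=30.0, target=25.0, lower_is_better=True)
```

Here the pilot improved on the baseline without reaching the target, so the rule says revise rather than expand.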

Use a simple 30-60-90 day plan.

Days 1 to 30: define the use case, decide build versus buy, choose the hiring model, and create the interview scorecard.

Days 31 to 60: hire or contract the needed talent, ship a pilot, and instrument evaluation, cost, and reliability tracking.

Days 61 to 90: review business impact, fix weak points in data and workflow design, and decide whether the capability belongs in your long-term roadmap.

Be strict about what you fund. Do not approve AI work because the market is noisy or your competitors are making announcements. Approve it because you can explain the workflow, the system owner, the expected return, and the staffing model in plain English.

If you need to hire senior AI talent fast, ThirstySprout can help you scope the work, choose the right hiring model, and match with vetted engineers in LLMs, machine learning, MLOps, data engineering, and AI product. Start a pilot, see sample profiles, and build your AI team without wasting months on the wrong search.
