Your dashboards are late, your analysts don’t trust half the tables, and your data scientist keeps asking why feature values change between training and production. That’s usually the moment a CTO starts searching for jobs etl developer and realizes most listings still describe a 2018 role.

The first ETL hire in a scaling company isn’t there to babysit CSV imports. They’re there to make data usable, reliable, and ready for product analytics, finance reporting, and AI systems. If you hire for the old version of the role, you get someone who can move data. If you hire for the current version, you get someone who can build a pipeline foundation your team can ship on.

Your Guide to Hiring ETL Developers

A scaling company usually hits the same wall. Product ships faster than the data layer, finance disputes core numbers, and the ML team starts building workarounds because training data and production data no longer match. At that point, hiring an ETL developer stops being a back-office data decision. It becomes an execution decision.

TLDR

- Hire for ownership of business-critical pipelines. The right ETL developer can stabilize ingestion, enforce data quality, and keep downstream reporting and AI workflows reliable.

- Treat the role as part data engineering, part platform reliability. Strong candidates usually bring SQL, Python, cloud warehouse experience, orchestration, testing, and a habit of designing for failures, backfills, and schema drift.

- Demand for this kind of talent is real. The U.S. Bureau of Labor Statistics projects 15% growth in software developer jobs from 2024 to 2034, with about 287,900 openings each year on average (BLS software developers outlook). That does not measure ETL jobs directly, but it reflects the broader hiring pressure for engineering talent that can build and maintain production systems.

- Your first ETL hire should fix one expensive problem quickly. Good targets include broken source syncs, mistrusted executive dashboards, or slow and inconsistent data delivery into ML pipelines.

- Tie the hire to headcount planning. In regulated environments, role design, succession risk, and skills coverage matter as much as filling the seat. A practical reference point is this guide to strategic workforce planning for banks, especially for leaders balancing near-term delivery against longer-term capability gaps.

Who this is for

This guide is for CTOs, founders, heads of engineering, and data leaders making an early data-platform hire under pressure. The stack usually grew in pieces. Backend engineers wrote a few connectors, analysts patched reporting logic in SQL, and every new integration added one more fragile dependency.

The hiring mistake I see most often is scoping this as a maintenance role. A strong ETL developer should reduce operational drag now and build a data foundation your analytics and AI teams can use six months from now.

That usually changes where you hire, too.

If your local market is thin, expensive, or overloaded with candidates from legacy BI environments, widen the search. Strong ETL talent is often easier to find in distributed global markets if you screen for cloud-native pipeline work, production support habits, and communication discipline instead of filtering by office location or brand-name employers alone.

The Modern ETL Developer Role Explained

The old description of ETL was simple. Pull data from one place, clean it, put it somewhere else.

That’s still true, but it’s not enough. A modern ETL developer sits much closer to production systems, cloud infrastructure, and machine learning workflows than most job descriptions admit. Their job is to make sure operational data arrives where it needs to go, in a form that people and systems can trust.

What the role actually owns

In a healthy stack, the ETL developer usually handles work like:

- Source integration: pulling from PostgreSQL, Salesforce, Stripe, HubSpot, product event streams, vendor APIs, or flat files

- Data transformation: validating schema changes, cleaning nulls, deduplicating records, standardizing business logic

- Pipeline operations: scheduling, retry logic, alerting, backfills, and failure triage

- Target delivery: loading data into Snowflake, BigQuery, Redshift, S3, or downstream feature stores

- Reliability controls: tests, lineage awareness, documentation, and collaboration with analytics and ML teams

If that sounds broader than classic ETL, that’s because the role has stretched. In many startups, the first ETL developer is effectively a focused data engineer with a mandate to fix movement and quality first.

Practical rule: If your biggest pain is trusted movement of data between systems, hire an ETL developer. If your biggest pain is platform-wide architecture across storage, compute, governance, and developer tooling, hire a broader data engineer.

ETL developer versus adjacent roles

A lot of teams mis-hire because they collapse three jobs into one title.

| Role | Primary focus | Best fit |

|---|---|---|

| ETL developer | Moving, transforming, validating, and operationalizing data pipelines | You have specific data flow bottlenecks and need reliability fast |

| Data engineer | Broader data platform design, storage, compute, ingestion patterns, infrastructure | You need architecture and platform ownership across the stack |

| Analytics engineer | Business-facing transformation layer, semantic modeling, warehouse modeling, dbt workflows | Your raw data lands fine, but reporting logic is messy |

The overlap is real, especially in smaller companies. Still, the distinction matters when you write the job description and set expectations.

ETL versus ELT in practice

The ETL versus ELT debate gets framed as ideology. It isn’t. It’s a sequencing decision.

Picture kitchen prep. ETL means you clean and portion ingredients before they enter the fridge your team cooks from. ELT means you bring the raw ingredients in first, then prepare them inside a more powerful kitchen.

Use ETL when upstream cleanup, masking, validation, or transformation needs to happen before the destination system should ever see the data. Use ELT when your warehouse is strong enough to absorb raw data and your team benefits from transforming later for flexibility.

A sensible default for scaling SaaS teams is hybrid. Ingest broadly, transform where it makes operational sense, and keep quality gates where bad data would create product or compliance risk.

A modern ETL developer isn’t valuable because they know the acronym. They’re valuable because they know where transformation should happen, where it shouldn’t, and what breaks when you guess wrong.

Core Skills for High-Impact ETL Developers

The fastest way to waste a hiring cycle is to ask for every data tool you’ve ever heard of. Most high-impact ETL hires are strong in a narrower set of skills that directly affect reliability, speed, and maintainability.

The technical screen should mirror that reality.

What matters most

The core stack usually starts with SQL and Python. SQL tells you whether a candidate can think in sets, joins, grain, and data correctness. Python tells you whether they can build reusable transforms, handle API ingestion, write tests, and manage edge cases that GUI tools often hide.

Cloud-native orchestration now matters just as much. Modern ETL developers need to work with tools like AWS Glue and Azure Data Factory, usually orchestrated with Apache Airflow. Benchmarks cited in this ETL orchestration analysis show that poor orchestration can lead to latency spikes of up to 10x during peak loads, and stronger pipeline design can help reduce downstream ML model drift by 25% to 40% (cloud ETL orchestration benchmarks).

That’s the business case. This isn’t about fashionable tooling. It’s about whether your pipelines survive peak traffic and whether downstream models keep learning from stable features.

The skill categories to assess

Foundational engineering skills

These are essential:

- SQL depth: window functions, joins at the right grain, incremental logic, idempotent loads

- Python fluency: API work, transformation code, packaging, testing, error handling

- Linux and shell basics: file movement, scheduling awareness, debugging job environments

- Data modeling: understanding fact tables, dimensions, slowly changing logic, and business entity definitions

A candidate who is weak here will struggle no matter how many tools they list on LinkedIn.

Pipeline and orchestration skills

This category separates hobbyists from operators.

- Airflow or equivalent orchestration: DAG design, retries, dependencies, secrets handling

- Batch and streaming awareness: knowing when Kafka or similar event pipelines are justified

- Monitoring mindset: alerts that catch failures early, not after finance closes the month

- Backfill safety: reprocessing without duplicating or corrupting data

For a useful technical baseline, this walkthrough of ETL with Python is close to what a good candidate should already be comfortable discussing.

Cloud and platform skills

You don’t need a cloud architect. You do need someone who can work inside your actual stack.

Look for familiarity with:

- AWS: Glue, S3, Lambda, Redshift, IAM basics

- Azure: Data Factory, Blob Storage, Synapse

- GCP: Dataflow, BigQuery, Cloud Storage

- Warehouse targets: Snowflake, BigQuery, Redshift, or Databricks

The best candidates can explain trade-offs. For example, when to use a managed connector and when custom ingestion is worth the maintenance burden.

ETL Developer Skill Matrix

| Skill Category | Junior Developer | Mid-Level Developer | Senior Developer |

|---|---|---|---|

| SQL | Writes solid queries and simple transforms | Handles complex joins, incremental models, performance tuning | Designs robust data logic, reviews query strategy, prevents grain errors |

| Python | Scripts basic ingestion and cleanup | Builds reusable modules, handles APIs, writes tests | Structures maintainable pipeline code, enforces standards, debugs hard failures |

| Orchestration | Understands scheduled jobs | Builds and monitors DAGs with retries and dependencies | Designs resilient workflows and failure recovery patterns |

| Cloud tools | Can work inside one platform with guidance | Operates managed ETL services independently | Chooses service patterns based on cost, scale, and reliability |

| Data modeling | Understands tables and relationships | Builds sound warehouse models for team use | Sets modeling standards that support analytics and ML |

| Stakeholder work | Takes scoped tasks | Clarifies requirements with analysts and engineers | Translates business needs into durable pipeline design |

What doesn’t move the needle

Be careful with resumes that lead with long tool inventories but show little ownership. “Used Informatica, Talend, SSIS, dbt, Snowflake, Kafka, Spark” sounds impressive until you ask what they designed, what failed, and how they fixed it.

A better signal is specific operational judgment. Ask what they do when an API schema changes unexpectedly on a Friday, or how they’d stop duplicate loads after a partial backfill.

How to Source and Vet ETL Developer Talent

Teams source badly before they vet badly. They post a generic role, screen for keyword matches, then wonder why every finalist feels either too junior or too narrow.

The fix is straightforward. Define the job around one business outcome, source in channels where operators show up, and test real judgment instead of memorized trivia.

Start with the role brief, not the requisition

A weak ETL job post looks like this: “Need 5+ years of ETL, SQL, Python, AWS, Spark, Snowflake, Talend, SSIS, Kafka, dbt, and strong communication skills.”

A useful one sounds more like this:

We need one engineer to stabilize product-event ingestion, reduce reporting breakage, and build reliable loads from application databases and third-party APIs into our warehouse. The first 90 days include taking ownership of our Airflow jobs, improving test coverage on transformation code, and partnering with analytics on trusted source tables.

That version attracts people who’ve solved the problem you have.

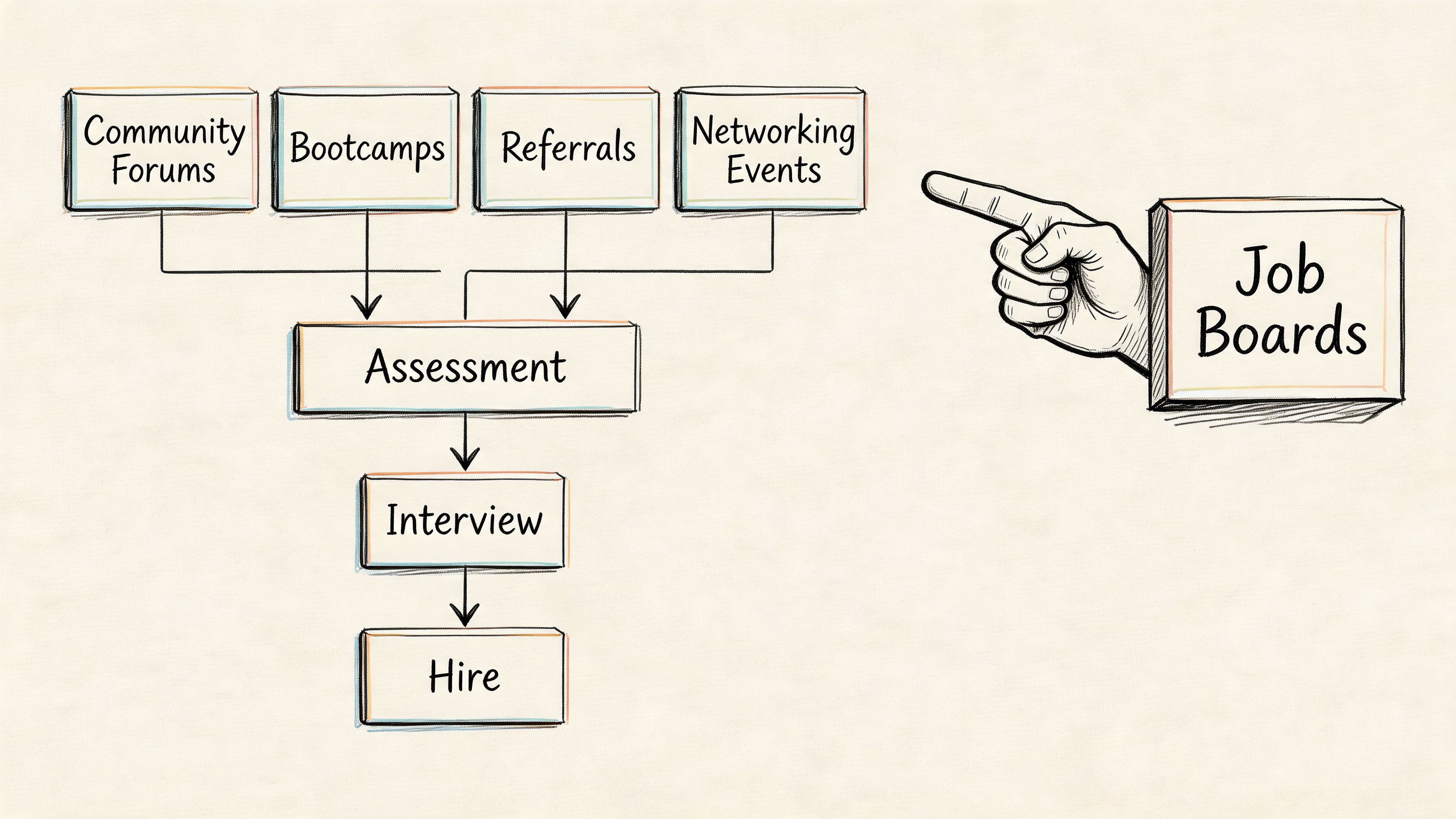

Where to source candidates now

If you only post on local job boards, you’ll limit yourself to whoever happens to be searching that week. That’s especially painful if you need someone with production experience in cloud pipelines.

Remote sourcing is often the better move. Visa friction is real. US H1B backlogs average 18 to 24 months, while 75% of Silicon Valley ETL hires now happen remotely through talent networks, pulling experienced developers from LATAM, India, and Eastern Europe (remote ETL hiring and visa data).

That changes the practical answer to jobs etl developer. You’re no longer hiring only within driving distance of your office. You’re hiring for overlap, communication, and production readiness.

A sourcing mix that works

Use more than one channel:

- Targeted outbound: search for candidates who mention Airflow, Glue, Data Factory, warehouse migrations, or API ingestion ownership

- Specialist talent networks: useful when you need pre-vetted remote candidates quickly

- Engineer referrals: especially from backend, platform, and analytics teams

- Selective public listings: still useful, but write them around outcomes

For interview prep on analytical reasoning, this list of top 10 data analyst interview questions is a decent companion resource. ETL interviews shouldn’t copy analyst interviews, but the best prompts still reveal how candidates reason about messy data.

Interview questions that reveal actual capability

Technical questions

- A vendor API starts returning a new nested field and occasionally omits an older one. How do you prevent pipeline breakage and protect downstream tables?

- How would you design an incremental load for an orders table that receives late-arriving updates?

- What’s the difference between an idempotent load and a naive append?

- An Airflow DAG succeeds, but analysts say the numbers are wrong. Where do you check first?

Architecture questions

- When would you use Airflow plus warehouse SQL instead of Spark?

- Where should transformation happen in our stack, before load or after load?

- How would you structure staging, intermediate, and trusted tables for finance and product analytics?

- If volume grows and nightly jobs start missing the reporting window, what do you change first?

For distributed processing depth, this set of Hadoop and Spark interview questions is useful when the role includes heavier data processing.

Behavioral questions

- Tell me about a pipeline failure that hit a business deadline. What did you do in the first hour?

- Describe a time you pushed back on a stakeholder request because the data model was wrong.

- How do you document assumptions so analysts and ML engineers don’t reinvent the same logic differently?

Hire for calm debugging. Plenty of candidates can build a clean demo. Fewer can explain what they do when a production data source starts lying.

Mini case example one

A B2B SaaS team had three separate ingestion paths for billing data: direct database pulls, Stripe exports, and a spreadsheet finance updated manually. The ETL hire they needed wasn’t a “data visionary.” They needed someone who could normalize source precedence, build one trusted billing mart, and stop monthly reconciliation chaos.

In interview, the strongest candidate didn’t start with tools. They started with ownership boundaries, source trust ranking, and late-arriving correction logic.

Mini case example two

A product team wanted feature tables for a recommendation model, but event data arrived inconsistently and user identifiers changed between systems. The right ETL candidate asked about identity resolution, event-time versus load-time semantics, and backfill strategy before saying anything about Kafka or Spark.

That’s what strong looks like.

A take-home that respects candidate time

Keep it to a realistic slice of work. Two to four hours is enough.

Brief:

You receive order data from a REST API and customer data from a CSV export. Build a small pipeline that:

- Extracts both sources

- Validates required fields and handles missing values

- Joins them into a trusted orders dataset

- Loads the output to a local warehouse table or structured file

- Includes basic tests and a short README

Score for clarity, correctness, and operational thinking. Don’t score for flashy frameworks.

ETL Developer Salary and Seniority Levels in 2026

A startup hires a "mid-level ETL developer" at a local market rate. Six months later, that person is owning warehouse modeling, Airflow failures at 2 a.m., finance reconciliation, and the data contracts feeding an ML feature pipeline. The title said mid-level. The job was senior. That mismatch is where hiring budgets break.

The salary conversation only makes sense after you define what the person will own in production. In 2026, ETL hiring is no longer just about batch loads into a warehouse. The better candidates are building cloud-native pipelines that feed reporting, reverse ETL, and AI workloads from the same governed data layer. If you need someone to support model training data, feature freshness, or event-time correctness, pay for that scope explicitly.

What each level should own

Junior

Juniors work best on bounded tasks inside an existing system. They can write SQL transforms, build straightforward connectors, fix routine pipeline issues, and add tests when the architecture is already set. They should not be the only owner of revenue, finance, or ML-critical pipelines.

Mid-level

A good mid-level ETL developer owns one business domain end to end. That might be product analytics ingestion, customer lifecycle data, or finance reporting. They can translate business requirements into tables, jobs, schedules, and monitors without needing daily design help.

This is often the right first hire for a company that already has a sensible stack and needs faster delivery.

Senior

Senior ETL developers make system choices that hold up under growth. They decide where managed services are enough and where custom code is justified. They handle schema drift, backfills, cost control, data quality, observability, and incident response with good judgment.

They also tend to influence adjacent systems. The best ones improve warehouse conventions, orchestration standards, and the handoff between analytics engineering and ML teams. If your stack is still taking shape, review your data pipeline tooling options before you lock this role definition, because tooling complexity changes the level you need.

Lead or architect

This role matters when multiple teams are producing and consuming data in different ways. A lead sets shared patterns for ingestion, transformation, security, governance, and reuse across analytics and AI systems. They are usually less valuable if you still need someone to personally clear a backlog of broken pipelines every week.

Salary benchmarks to use carefully

Local salary tables are a starting point, not a hiring plan. The actual question is whether you are paying for ticket execution, domain ownership, platform design, or cross-team data architecture.

A junior hire is cheaper and slower. A senior hire costs more and often removes expensive failure modes that do not show up on a salary spreadsheet, such as bad revenue reporting, brittle backfills, or late model inputs. For a scaling company, the cost of one wrong level can exceed the cost of hiring a stronger developer in the first place.

| Seniority | Expected scope | Typical hiring signal |

|---|---|---|

| Junior | Executes assigned tasks, learns stack, supports maintenance | Good SQL and coachability |

| Mid-level | Owns pipelines within one business domain | Reliable delivery, clear debugging process |

| Senior | Designs pipeline patterns and raises team standards | Strong trade-off judgment and stakeholder trust |

| Lead or architect | Sets cross-team data integration direction | Platform thinking and strong communication |

A short video can also help non-data leaders calibrate what they’re really hiring for.

When global remote hiring makes sense

Remote hiring becomes attractive when your local market cannot give you the right mix of pipeline engineering, cloud operations, and business context fast enough. That is increasingly common for ETL roles tied to AI and ML work, because the best candidates can support analytics engineering, data platform reliability, and production-grade feature pipelines in the same role.

The mistake is using remote hiring to chase the lowest rate. Use it to widen access to proven operators. Strong global candidates are often a better fit than local generalists if you need someone who has already worked with dbt, Airflow, Fivetran alternatives, warehouse cost controls, and production incident ownership.

Remote hiring works when the operating model is clear. Define ownership boundaries, review windows, escalation paths, and expected overlap hours before the search starts. Teams that care about documentation, manager access, and optimizing new hire experience usually integrate remote ETL hires faster and with fewer handoff issues.

Use global hiring to get the right capability level, not to underprice a hard job.

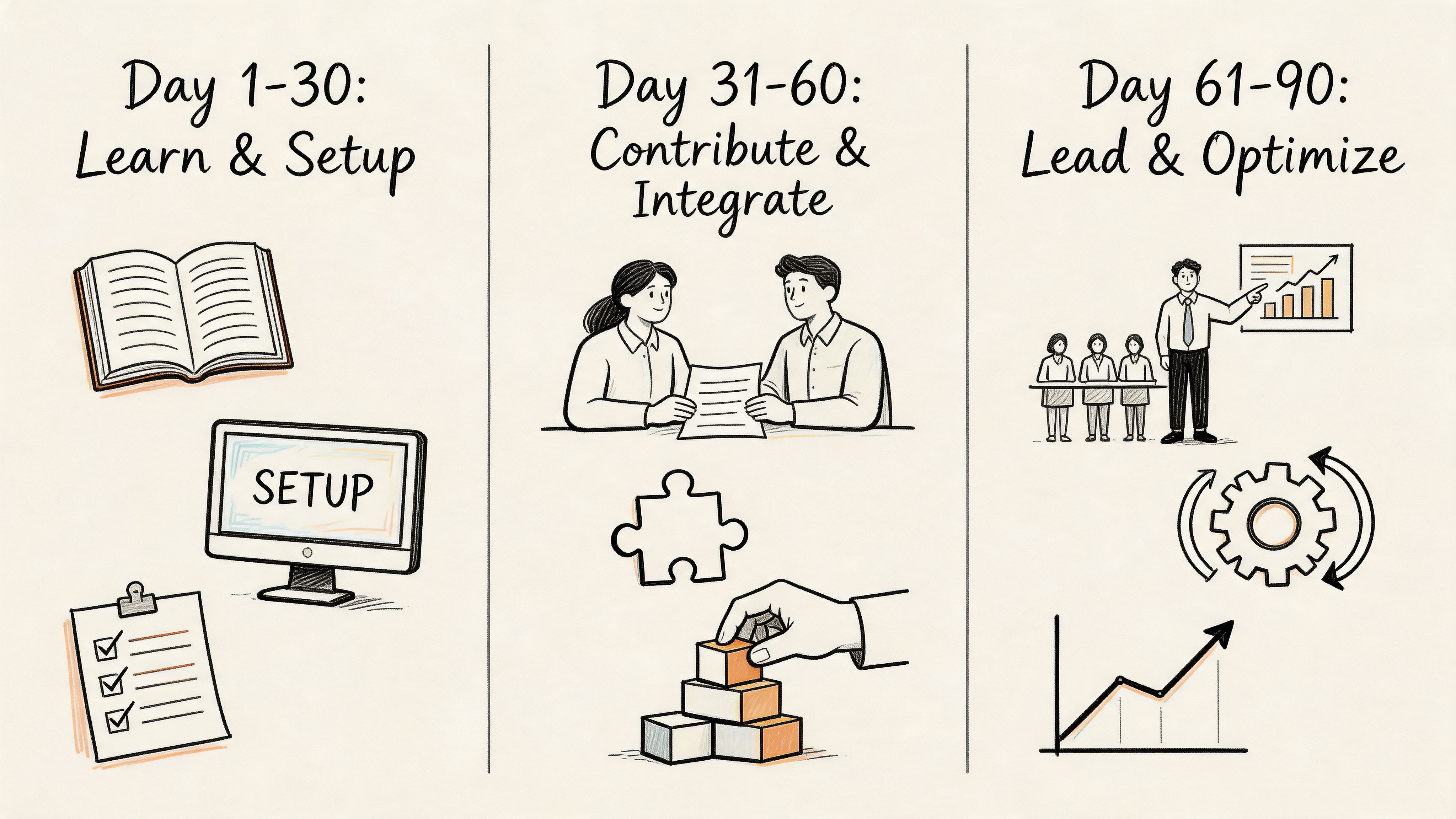

Your 90-Day Onboarding Plan for a New ETL Developer

A good ETL hire can still fail if onboarding is loose. Data systems have hidden context, undocumented dependencies, and tribal knowledge in three different teams. If you don’t structure the first 90 days, your new hire spends too long reverse-engineering the business instead of improving it.

Leaders often treat onboarding as admin plus access requests. That’s too shallow for pipeline work. In production ETL systems, habits formed early matter. Modular Python with unit test coverage above 80% can reduce ETL failure rates by 60%, and CI/CD practices can reduce deployment failures by 30%, according to this review of MLOps-oriented engineering skills (Python testing and CI/CD for ETL reliability).

Days 1 to 30

Focus on orientation and one contained win.

- Map the environment: review source systems, warehouse layers, orchestrators, alerting, and known failure points

- Meet key stakeholders: analytics, finance, product, and platform teams

- Ship one low-risk improvement: fix a brittle transform, add missing tests, or improve one alert

A helpful non-technical companion for managers is this guide to optimizing new hire experience. The principle carries over well. Early clarity reduces confusion later.

Days 31 to 60

Ownership should start here.

- Take over one business-critical pipeline

- Add tests and deployment discipline

- Document data contracts and failure handling

- Propose one improvement in tooling or architecture

If your stack is still evolving, this overview of the best data pipeline tools is useful for deciding what to standardize before the team grows.

Days 61 to 90

Now the hire should influence the system, not just operate within it.

| Time window | Main goal | Deliverable |

|---|---|---|

| 1 to 30 days | Learn systems and remove one obvious pain point | Access, system map, first small fix |

| 31 to 60 days | Own one pipeline end to end | Tests, monitoring, docs, stakeholder handoff |

| 61 to 90 days | Improve the operating model | Proposal or delivery of a broader optimization |

Strong onboarding gives you leverage twice. It gets the new hire productive sooner, and it reveals whether your data stack is mature enough to support scale.

Checklist and Next Steps

If you’re hiring for jobs etl developer right now, don’t start with a long list of tools. Start with the data flow that hurts most and hire for ownership of that problem.

Use this working checklist as your internal template:

- Define the first pipeline mission: reporting reliability, API ingestion, warehouse cleanup, or ML feature readiness

- List source systems and targets: databases, SaaS apps, event streams, warehouse, feature store

- Set role scope: junior support, domain ownership, or architecture-level responsibility

- Write an outcome-based job description: focus on what they must stabilize or build

- Prepare an interview kit: SQL, debugging, orchestration, architecture, stakeholder judgment

- Use a short take-home: one realistic integration task with tests and documentation

- Plan the first 90 days before the offer goes out

Three next steps usually work best:

- Audit your current pipeline pain points. Pick one domain where failure is visible and costly.

- Calibrate the level you need. Don’t try to buy senior judgment at junior scope.

- Run a structured hiring process. One scorecard, one realistic take-home, one hiring manager who knows what “good” looks like.

If you want to move quickly, the two practical calls to action are simple: Start a Pilot and See Sample Profiles.

Frequently Asked Questions About ETL Developer Jobs

Is ETL a dying field because ELT is more common now

No. The tooling pattern has changed, but the need hasn’t. Companies still need people who can extract, validate, reshape, and deliver trustworthy data. The label may shift toward data engineering in some teams, but the work remains critical.

What has died off is the narrow version of the role that only manages legacy batch jobs and never touches cloud workflows, testing, or downstream ML needs.

Should I hire a contractor or a full-time ETL developer

Hire a contractor when the problem is bounded. Good examples are a warehouse migration, a connector build, or a backlog of broken jobs that needs cleanup. Hire full-time when the data domain is strategic and ongoing, such as product telemetry, finance pipelines, or ML feature generation.

A useful rule is this: if the person will own long-term pipeline reliability and collaborate with several teams every week, full-time usually wins.

What’s the difference between an ETL developer and a data warehouse developer

A data warehouse developer is usually more focused on warehouse schemas, storage structures, and reporting-layer design. An ETL developer is more focused on movement, transformation, and operational reliability between systems.

In smaller teams, one person may do both. The distinction matters when deciding whether your pain is upstream ingestion or downstream warehouse modeling.

What should I look for in the first interview

Look for operational reasoning. Ask how the candidate handles schema drift, duplicate loads, failed backfills, and conflicting business definitions. You want someone who can explain trade-offs, not just name tools.

The best early interview signal is usually whether they ask clarifying questions about source trust, load frequency, downstream users, and failure tolerance.

Do I need someone with Spark and Kafka on day one

Not always. If your immediate need is reliable warehouse ingestion from application databases and SaaS tools, strong SQL, Python, orchestration, and cloud ETL experience may matter more.

Bring in Spark or Kafka depth when you have high-volume processing or real-time requirements. A lot of teams over-spec the role and end up filtering out good operators.

Can one ETL developer support AI features

Yes, if the AI work depends on better data movement and quality rather than advanced model engineering. A strong ETL developer can make feature pipelines more consistent, reduce training-serving mismatch, and improve trust in source data.

If you also need model deployment, experiment tracking, or feature serving architecture, that’s where you may need MLOps or a broader data engineering hire alongside them.

If you need vetted ETL and AI data talent fast, ThirstySprout can help you hire senior remote engineers who’ve built production pipelines, ML-ready data systems, and cloud-native workflows. You can start a pilot, review sample profiles, and find a fit without dragging the search out for months.

Hire from the Top 1% Talent Network

Ready to accelerate your hiring or scale your company with our top-tier technical talent? Let's chat.