A senior Java interview often looks solid for the first 20 minutes. The candidate names Spring Boot patterns, talks through REST conventions, and says the right things about clean code. Then you shift to a production incident that reflects AI and ML work: inference latency spikes, heap usage keeps climbing, Kafka consumers fall behind, and a retry storm starts hammering a shared service. At that point, the useful signal is no longer Java trivia. It is whether the engineer can reason about concurrency, memory, failure isolation, and operational trade-offs under pressure.

That is the hiring lens this guide uses.

For CTOs building remote AI and ML teams, Java interviews should predict how someone will behave in production, in code review, and during incident response. A candidate working on feature pipelines, model gateways, streaming services, or platform APIs needs more than syntax recall. They need judgment about thread safety, garbage collection behavior, API boundaries, backward compatibility, and observability. They also need to explain those decisions clearly to a distributed team that will challenge assumptions asynchronously.

This is not another generic list of Java interview questions. It is a hiring framework.

Each question in this guide is filtered through one standard: does this answer predict success on a modern AI and ML team shipping systems that have to stay up, stay fast, and stay maintainable? That means interviewer notes matter as much as the sample answer. Business impact matters too. If a candidate cannot connect a Java concept to reliability, cost, latency, deployment, or team velocity, I would not treat that as senior-level strength.

The language choice itself is part of the hiring context. Teams still choose Java for services that need predictable performance, mature tooling, and long-lived maintainability. If you are weighing runtime and hiring trade-offs across backend stacks, this comparison of Go vs Java for backend systems gives useful context for the decision around team shape and service profile.

Use this guide to score engineers on production judgment, debugging depth, and architectural clarity.

TL;DR:

- Ask questions that expose how the candidate thinks about concurrency, memory, exceptions, and design under changing requirements.

- Push past the first correct answer. The strongest signal comes from follow-up questions about trade-offs in containers, distributed systems, and remote team workflows.

- Evaluate whether the engineer can tie Java decisions to business outcomes such as latency, reliability, cloud cost, and incident frequency.

- Use the answers as a hiring filter for production-ready Java engineers in AI and ML environments, not as a quiz on textbook definitions.

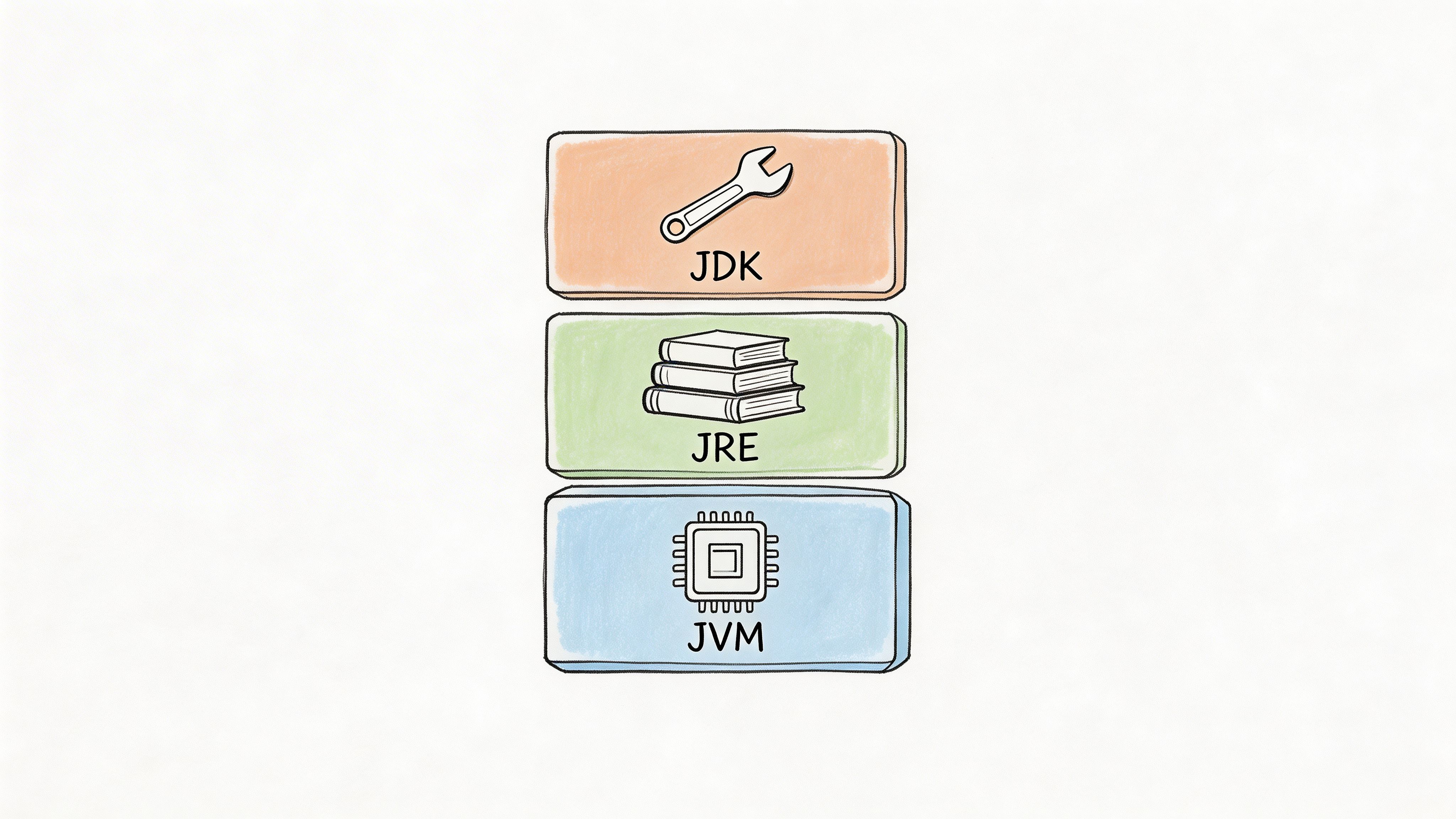

1. What is the difference between JDK, JRE, and JVM

Most candidates can answer this in one sentence. The better ones explain why the distinction matters in build pipelines, containers, and runtime debugging.

The short answer is simple. The JVM executes Java bytecode. The JRE includes the JVM plus the libraries needed to run Java applications. The JDK includes the JRE plus development tools such as the compiler and debugger.

What a strong answer sounds like

A strong candidate won't stop at definitions. They'll connect the layers to deployment choices.

For example, in a containerized inference service, you usually need a runtime environment for execution and a full development kit in build stages. If they mention multi-stage Docker builds, slim runtime images, or runtime selection trade-offs, that's a good signal. If they only say "JDK is for development, JRE is for running," that's entry-level knowledge.

One useful follow-up is to ask what changes when you use an alternative runtime such as GraalVM or OpenJ9. You aren't looking for vendor trivia. You're looking for awareness that runtime choice affects startup behavior, image size, observability, and sometimes compatibility.

Practical rule: Ask the candidate to describe how they'd package a Java model-serving service for Kubernetes. If they can discuss build stage versus runtime stage, toolchain requirements, and what they'd inspect when the container crashes, they're thinking like an owner.

A mini-case you can use:

- Scenario: Your team compiles a Spring-based API that wraps an LLM routing service. CI needs compilation, tests, and static analysis. Production only needs the runnable artifact.

- Good signal: The candidate separates build and runtime concerns cleanly.

- Weak signal: They install the full JDK everywhere because "it's simpler."

If you're comparing stack choices for backend AI systems, this comes up often in Go vs Java for production backend trade-offs.

Interviewer tip

Ask, "What breaks if the build image and runtime image use different Java versions?" You want to hear about compatibility, dependency behavior, and environment drift. The business impact is straightforward. Teams that understand this reduce deployment surprises and spend less time chasing environment-specific failures.

2. Explain the concept of Generics in Java and why they are important

Generics are a strong hiring filter because they reveal whether a candidate can design APIs that stay safe and maintainable after six months of team growth.

A solid answer starts with the basics. Generics let Java classes, interfaces, and methods work with parameterized types instead of hard-coded ones. The practical value is compile-time type checking, fewer casts, and clearer contracts between components.

That matters in production AI systems because data moves through many hands. In one service, a preprocessing stage may accept raw events and return normalized feature objects. In another, an inference client may wrap responses with typed metadata, error details, and tracing context. Teams that skip generics often fall back to Object, unchecked casts, and late failures that only show up under real traffic.

Good candidates usually make that concrete fast:

- Typed transformation pipeline:

Transformer<InputT, OutputT>for converting inbound records into model-ready payloads - Reusable ingestion layer:

DataLoader<T>for text, image, or tabular sources without duplicating interface design - Safer service contracts: generic result wrappers for paged queries, async jobs, or batch scoring responses

For mid-level roles, that is a good start. For senior hires, push further.

Ask about type erasure and see if they can explain the trade-off. Java generics improve source-level safety, but the runtime does not retain all generic type information in a way people often assume. That affects reflection, JSON serialization, dependency injection, and framework integration. A candidate who has wrestled with Jackson, Spring, or message deserialization in a real system usually has a sharper answer than someone repeating textbook definitions.

A useful interview prompt is: "Design a generic preprocessing component for records entering a model-serving platform used by multiple teams."

Strong candidates discuss more than syntax. They talk about API clarity, bounded wildcards, and whether the abstraction helps or hurts the people who have to use it remotely across services. They often know the PECS rule, producer extends, consumer super, but the better signal is whether they apply it to a real interface instead of reciting it.

For example, if a shared library needs to read subclasses of FeatureRecord, ? extends FeatureRecord may make sense on the input side. If it writes processed records into a sink, ? super ProcessedRecord may be the safer contract. That level of precision predicts fewer broken integrations.

Weak candidates tend to over-generalize. They build APIs so abstract that nobody can tell what the valid types are, or they avoid the problem and use raw collections. Both create maintenance cost. In a remote AI/ML team, unclear type boundaries slow code review, complicate onboarding, and increase the odds of schema-related bugs reaching production.

Use a mini-case if you want a cleaner read:

A platform team owns a Java library used by three services. One consumes JSON events, one ingests Avro messages, and one stores embedding metadata for retrieval workflows. Ask the candidate how they would model shared interfaces without forcing every service into the same concrete type. If they can define tight generic contracts, explain where they would stop abstracting, and name the runtime edges they would test, they are thinking like an engineer who can own a multi-team system.

Interviewer tip

Ask, "Where would you avoid generics even if you could use them?" Good candidates often say that overly generic APIs can hide business meaning, make stack traces harder to interpret, and increase cognitive load for the team. The business impact is direct. Engineers who use generics with restraint tend to ship libraries that are easier to extend, review, and operate.

3. What are Collections in Java and explain the Collections Framework hierarchy

A candidate who treats collections as a memorization question is telling you something useful. They probably know Java syntax, but you still do not know whether they can make sound decisions in a production system.

The baseline answer is simple. The Java Collections Framework defines standard interfaces and implementations for storing and working with groups of objects. Collection is the root interface for structures such as List, Set, and Queue. Map is part of the framework but does not extend Collection, because it models key value associations rather than a group of standalone elements.

That definition is table stakes. Hiring value comes from the next layer. Can the candidate connect the hierarchy to access patterns, memory behavior, ordering guarantees, duplicate handling, and concurrency risk?

For AI and ML systems, those choices show up everywhere.

ArrayListfits append-heavy pipelines and indexed reads, such as building a batch of feature rows before serializationLinkedListis rarely the right answer in modern services, and strong candidates usually say so unless they can defend a specific insertion patternHashSethelps with deduplication, such as rejecting repeated event IDs before they poison downstream training dataTreeSettrades speed for sorted order, which matters if ranking or deterministic iteration is part of the requirementHashMapis the default for fast key-based lookup, such as resolving feature names to values during inferenceLinkedHashMapmatters when insertion order must stay stable, for example in debugging output, export jobs, or deterministic test fixturesPriorityQueueis useful for top K selection, scheduling, and bounded ranking workloads

A good interview answer includes the hierarchy and then gets specific about trade-offs. ArrayList usually wins on cache friendliness and random access. HashMap gives average constant-time lookup but can consume more memory than candidates expect. TreeMap keeps keys sorted, but every insert and read pays for that ordering. Those are the kinds of choices that affect latency, cost, and incident rate.

One question I like is: "You have a feature enrichment service that receives requests concurrently, reads reference data often, and updates it occasionally. What collection choices would you make, and why?" This forces the candidate to move past textbook definitions.

Look for signals like these:

- They ask about read to write ratio before naming a data structure

- They distinguish ordering requirements from lookup requirements

- They mention null handling and duplicate semantics without prompting

- They know standard collections are not automatically safe for concurrent access

- They consider whether a concurrent collection is the right fix, or whether the flow should be redesigned to reduce shared mutable state

That last point matters in remote teams. Engineers who reach for synchronization first often create systems that pass tests and still fail under real traffic. Engineers who reduce sharing, isolate mutation, or snapshot data for readers usually build services that are easier to reason about and easier to operate.

Push further with HashMap. Ask what happens as it grows. Strong candidates should explain resizing in plain language, including that capacity growth can cause rehashing work and temporary latency spikes. They should also know that defaults are usually fine until the workload proves otherwise, and that premature tuning can waste memory across many service instances.

Here is a practical mini-case:

A Java service consumes Kafka events, attaches model metadata, and places enriched payloads onto a scoring queue. The service must preserve request order for one tenant, deduplicate retries for another, and keep lookup latency low for shared feature definitions. A senior candidate should separate those concerns instead of forcing one collection to solve all of them. That usually means one structure for ordered processing, another for deduplication, and another for lookup. If they can explain where data copies happen and where contention might appear, they are thinking like someone who can own a production path.

Interviewer tip

Ask, "Tell me about a time the wrong collection choice caused a real problem." Candidates with hands-on experience usually talk about memory growth from oversized maps, hidden contention from synchronized wrappers, duplicate data slipping past a list-based design, or unstable ordering breaking tests and downstream consumers. The business value is direct. Collection choices shape throughput, latency, correctness, and how quickly a distributed team can diagnose failures.

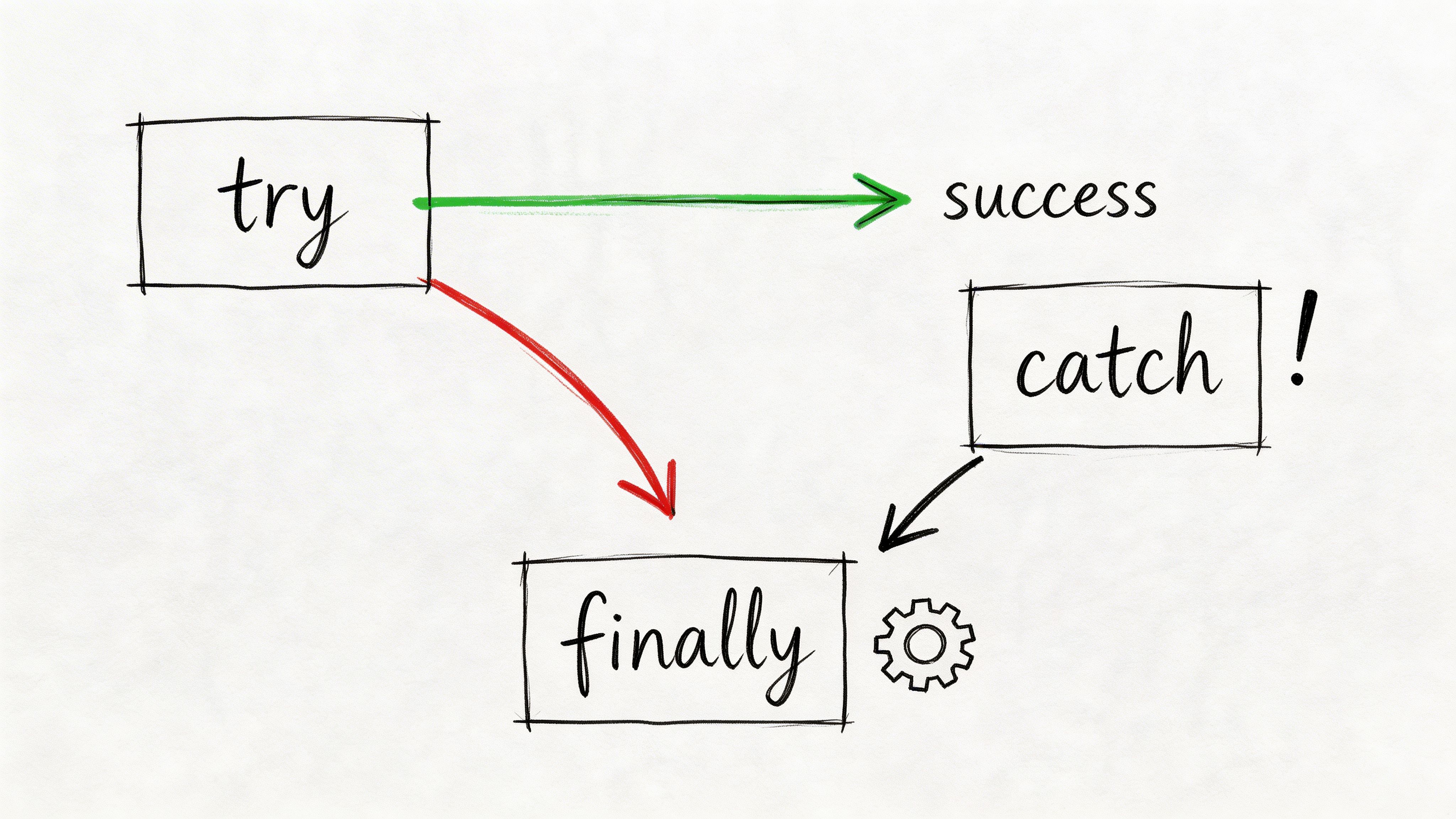

4. Explain Exception Handling in Java with try-catch-finally-throw

A remote AI team ships a model update on Friday. By Monday, inference latency is up, one region is returning 500s, and the logs show three different stack traces for what turns out to be the same root cause. That is why this interview question matters. Exception handling tells you whether a candidate will contain failure or spread confusion across services, dashboards, and on-call rotations.

try, catch, finally, and throw are the syntax. Hiring value comes from how the candidate uses them under production pressure. Strong Java engineers know that exception handling is part of service design, not just control flow. They decide which failures should stop the request, which should trigger fallback behavior, and which should fail the whole service at startup.

In AI and ML systems, those choices have clear business impact. A malformed request should produce a clean client error. A temporary network timeout to a feature store may justify a bounded retry. A corrupted model artifact should usually stop startup and fail readiness checks. Candidates who flatten all of that into catch (Exception e) are telling you they will blur signal, hide root causes, and make incident response slower.

What strong production thinking sounds like:

- Specific exception types:

ModelLoadException,FeatureStoreTimeoutException, orInvalidInferenceRequestExceptioncommunicate intent better than genericRuntimeException - Clear boundaries: translate low-level exceptions at service edges, but preserve the cause so logs and traces still explain what failed

- Resource cleanup: use try-with-resources for files, sockets, streams, and JDBC work instead of relying on

finallyfor every close path - Selective recovery: retry transient failures with limits and context, not every exception blindly

- Actionable logging: log request IDs, model version, tenant, and dependency name. Avoid duplicate logging at every layer

One detail separates mid-level from senior answers. Senior candidates know finally is not the default cleanup tool anymore for many resource cases. They should mention try-with-resources because it closes resources reliably and reduces the risk of hiding the original exception with cleanup errors.

Use a scenario that exposes judgment:

"Your inference service loads a model on startup. The file exists, but deserialization fails because the artifact version is incompatible with the running code. What do you log, what do you throw, and should the service start?"

Weak answers focus on syntax. Better answers explain a startup failure path: log structured context once, throw a service-specific startup exception, fail readiness, and stop the process if the model is required for correct results. If they mention a degraded mode, push further. Ask what functionality remains safe, how clients are told about reduced capability, and who owns the rollback decision.

I also listen for one habit that causes real damage in distributed systems. Candidates often catch an exception, log it, wrap it, and throw it again. Then an upstream layer logs it again. Now one failure creates five alerts and a noisy incident thread. Good engineers decide where an exception should be recorded and where it should propagate.

Hiring lens: Exception handling predicts operational maturity. The right hire preserves root cause, sets failure boundaries deliberately, and keeps bad data or broken dependencies from silently poisoning downstream decisions.

This question is useful because it maps directly to outcomes a CTO cares about: lower MTTR, clearer ownership during incidents, safer startup behavior, and fewer hidden defects in model-serving paths. In a remote team, those traits matter even more. The code has to explain the failure when the engineer who wrote it is asleep in another time zone.

5. What are Multithreading and Concurrency? Explain Thread lifecycle and synchronization mechanisms

A remote AI team ships a model-serving service that passes load tests, then starts timing out in production because one shared cache blocks request threads and another object publishes stale state. That is why this question matters. It predicts whether a candidate can keep a Java service correct under pressure, not whether they memorized thread state names.

Multithreading is the use of multiple threads within one process. Concurrency is the broader problem of coordinating overlapping work safely and efficiently, whether tasks run at the same instant or interleave. In Java, a thread moves through NEW, RUNNABLE, BLOCKED, WAITING, TIMED_WAITING, and TERMINATED. A capable candidate knows those states, but a hireable senior engineer connects them to real failure modes such as starvation, lock contention, missed signals, and slow shutdown.

For AI and ML systems, concurrency bugs rarely stay local. They show up as duplicate inference work, stale feature flags, broken request tracing, or latency spikes that only appear during batch retraining windows. In remote teams, those bugs are expensive because the engineer debugging them is often reading logs and thread dumps hours after the incident started.

What to listen for

Strong candidates usually move quickly from definitions to design choices:

ExecutorServiceand bounded pools: to control task submission, isolate workloads, and avoid turning traffic spikes into memory pressuresynchronized: a simple option for small critical sections when the lock scope is clearReentrantLock, read-write locks, and concurrent collections: when they need timed lock attempts, better observability, or lower contentionvolatile: for visibility of single-variable state changes, not as a substitute for atomic updates- Immutability and reduced shared state: because the best synchronization strategy is often to share less

That last point matters. Engineers who reach for locking before they question the object model often create systems that work in tests and stall under real traffic.

Use a production scenario, not a quiz

Ask something like this:

Mini-case: A Java scoring service handles concurrent requests, caches model metadata in memory, and refreshes configuration in the background. Under load, some requests read stale configuration. Others pile up behind a synchronized block. CPU is low, latency is high, and the issue appears only during config refreshes.

Then stop talking.

A strong candidate will inspect how configuration is published to worker threads, whether the cache object is mutable, whether refresh and read paths contend on the same lock, and whether the thread pool is hiding backpressure. The best answers often suggest replacing mutable shared maps with immutable snapshots, using atomic reference swaps for config publication, or separating refresh work from request-serving executors.

That is a better signal than asking for the difference between concurrency and parallelism.

Interviewer tips that expose real depth

Ask for a race condition they have fixed. Listen for specifics: what shared state was involved, how they reproduced it, and how they proved the fix held under load.

Ask how they detect deadlocks. Good answers include thread dumps, lock ordering rules, timeout-based lock acquisition where appropriate, and code review habits that avoid nested locks.

Ask what happens when task submission outpaces consumption. Weak candidates say "increase the thread pool." Strong candidates talk about bounded queues, rejection policies, upstream backpressure, and workload isolation so batch jobs do not starve latency-sensitive inference requests.

Ask where virtual threads fit. Senior candidates should know that newer Java versions make thread-per-task designs more practical for blocking I/O, but they should also say that virtual threads do not remove contention on shared state, fix poor locking, or make CPU-bound model scoring free.

A practical media refresher helps if your hiring team wants a visual baseline before running interviews:

Business impact of this question

This question maps directly to production readiness. A candidate who understands Java concurrency can prevent outages caused by thread starvation, reduce p95 latency by removing unnecessary contention, and design services that degrade predictably during traffic bursts or dependency slowdowns.

For a CTO hiring into AI and ML work, that matters because model quality alone does not keep systems healthy. If the serving layer deadlocks, publishes stale config, or burns all request threads waiting on one shared resource, the business still misses SLAs. Concurrency knowledge is one of the clearest signals that a Java engineer can build software that survives real demand, especially on a distributed team where clean architecture and safe defaults matter more than heroics.

6. Explain the concepts of Inheritance, Polymorphism, and Method Overriding in Java

A CTO hires a Java engineer for an AI product, then six months later the team needs to add a new model provider, route some requests through a safety layer, and support tenant-specific inference policies. This question helps you find out whether the candidate will extend that system cleanly or turn every change into a risky rewrite.

Inheritance means one class derives state or behavior from another. Polymorphism means the code can call different implementations through a shared type. Method overriding means a subclass provides its own version of a parent method at runtime.

The definitions matter less than the design judgment behind them.

A good interview prompt is a small serving architecture. Ask the candidate to model a Java layer that supports multiple model types and providers. For example, a shared Model interface might expose predict(), while LlmModel, EmbeddingModel, and ClassificationModel implement it differently. That tests whether they understand polymorphism as a tool for keeping calling code stable while implementations change.

Then push harder.

If the candidate immediately proposes a large base class such as BaseModelServer with inherited hooks for retries, tracing, guardrails, caching, and fallback logic, ask how they would change one concern without changing the hierarchy. Senior engineers usually recognize the problem quickly. In ML systems, these concerns evolve at different speeds. Provider routing changes for cost. Safety policies change for compliance. Caching changes for latency. Inheritance couples those decisions too early.

The stronger answer is usually narrow inheritance and heavy use of composition. A candidate might use interfaces for behavior contracts, then inject collaborators for logging, rate limiting, prompt filtering, or provider selection. That makes method overriding a targeted tool instead of the default extension mechanism.

Watch for whether they understand the risks of overriding. Poor overrides can break parent class assumptions, create inconsistent behavior across subclasses, and make debugging harder in distributed systems. In remote teams, that cost gets worse because developers need designs that are easy to reason about during code review and incident response.

A useful follow-up is simple: ask for one case where inheritance is the right choice in a production Java codebase. Good candidates usually give a constrained example, such as a stable framework abstraction with shared lifecycle behavior, and then explain why they would avoid deep class trees elsewhere.

Interviewer guidance

Listen for trade-offs, not slogans.

Strong candidates usually say all of the following in some form:

- inheritance works best for a true is-a relationship with stable shared behavior

- polymorphism reduces branching in calling code and makes new implementations easier to add

- overriding should preserve the parent contract and avoid surprising behavior

- composition is often safer when features change independently

- deep hierarchies make testing, refactoring, and onboarding harder

Weak candidates stay at the textbook level or treat inheritance as the default way to reuse code.

Business impact of this question

This question predicts maintainability more than syntax knowledge. Engineers who make good abstraction choices build services that can accept new model types, vendors, and control layers without destabilizing existing paths. That affects delivery speed, incident rate, and how well a distributed team can keep shipping under changing product requirements.

For AI and ML hiring, that signal matters. The best candidate is rarely the person who recites object-oriented terms fastest. It is the one who knows where inheritance helps, where it causes rigidity, and how to design Java systems that survive product change.

7. Explain the SOLID principles and how they apply to Java design

If a candidate rattles off Single Responsibility, Open/Closed, Liskov Substitution, Interface Segregation, and Dependency Inversion in perfect order, don't give them points yet. Lots of people memorize SOLID. Fewer can use it without overengineering the codebase.

What you want in a real answer

The best responses stay grounded in shipping systems.

A candidate might explain Single Responsibility by splitting a model-serving workflow into separate classes for loading artifacts, handling inference, and collecting metrics. They might discuss Dependency Inversion by injecting a model provider interface so the service can support different backends cleanly. That's useful because it ties a design principle to a business need: easier testing, safer change, and fewer risky edits in critical paths.

Use a code-review style prompt here. Ask them to identify what feels wrong in a class that does all of these things:

- fetches feature data

- transforms inputs

- calls a model

- logs request outcomes

- writes audit events

A strong candidate sees too many responsibilities immediately. An even stronger one proposes a refactor that is practical, not doctrinaire.

Where candidates often go wrong

Two failure modes show up a lot.

The first is dogmatic SOLID. The candidate creates so many tiny abstractions that nobody can trace a request through the system.

The second is hand-wavy pragmatism. They say "it depends" and never commit to a cleaner boundary.

The right middle ground is what matters in AI and ML teams. Systems change fast. New model providers get added. Feature extraction logic evolves. Teams need enough abstraction to adapt, but not so much that onboarding becomes archaeology.

A good mini-case:

Your platform team supports internal batch scoring and real-time inference. The same Java service now includes vendor-specific prompt formatting, feature lookup, model fallback logic, and API serialization. Ask the candidate where they'd draw seams first. Their answer tells you whether they'll improve maintainability or make the architecture more abstract without making it better.

Senior hiring content increasingly emphasizes design patterns and production architecture, but many prep lists still underserve actual system design and decision communication, especially for distributed systems and AI-related workloads, as noted in Built In's discussion of senior Java interview gaps.

8. What is the Java Memory Model and how does Garbage Collection work

A remote engineer ships a Java inference service that passes load tests, then production starts missing latency targets after a few hours of steady traffic. CPU looks fine. The problem is memory behavior. This question helps you tell apart candidates who know Java syntax from candidates who can keep an AI service stable under real load.

The Java Memory Model, or JMM, defines how threads read and write shared data. It governs visibility, ordering, and what counts as a safe publication of state between threads. Candidates should be able to explain volatile, synchronized, and the happens-before relationship at a practical level. If they cannot connect those rules to bugs like stale reads, broken double-checked locking, or inconsistent cache state, they are not ready for concurrent production systems.

A solid baseline answer also covers runtime memory areas without turning into a textbook recital. Heap holds shared objects. Each thread has its own stack with frames and local variables. Metaspace stores class metadata. The point of asking is not to test memorization. It is to see whether the candidate can reason about where pressure builds, what is shared, and what can go wrong under concurrency.

Garbage collection is the second half of the signal. Java garbage collectors reclaim objects that are no longer reachable, but "automatic memory management" does not mean "memory is solved." In production AI services, allocation rate, object lifetime distribution, cache design, serialization overhead, and collector choice all affect latency, throughput, and cloud cost.

A stronger candidate usually goes beyond naming collectors. They explain the trade-off. G1 is the default on modern Java and is a reasonable general-purpose choice. Low-latency services may need a closer look at ZGC or Shenandoah, depending on the JDK version, workload, and operational tolerance for tuning. Batch jobs may accept longer pauses in exchange for throughput. That trade-off matters more than collector trivia.

For teams building model-serving platforms, the memory profile gets ugly fast. JSON serialization, protobuf conversion, token buffers, vector payloads, caches, and async task queues all create object churn. This shows up clearly in production patterns common to Java AI engineering workloads, where memory behavior often drives p99 latency before business metrics make the issue obvious.

A practical interview test

Use a scenario with failure symptoms instead of asking for definitions alone:

"Your Java inference service is stable in staging. In production, p99 latency climbs during sustained traffic, GC activity increases, and Kubernetes restarts pods for memory pressure. How do you investigate?"

Strong answers usually cover several layers:

- Allocation pressure: too many short-lived objects from parsing, mapping, logging, or request fan-out

- Retention problems: caches without bounds, listener references, thread locals, queues that never drain

- Concurrency correctness: shared mutable state causing retries, duplicate work, or hidden memory growth

- Operational evidence: GC logs, heap dumps, allocation profiling, container memory limits, and JFR

- Collector fit: whether the current GC matches latency goals and object lifetime patterns

The interviewer should press on sequence, not just tools. What would they check first? How would they separate a true leak from normal heap growth? Would they tune the JVM immediately, or fix the allocation pattern in code first? Good engineers know that JVM tuning can buy time, but bad object design usually comes back.

What this predicts in an AI and ML team

This question has real hiring value because modern AI systems create memory stress in boring places. Request shaping, feature enrichment, embeddings transport, retry queues, and model client wrappers all add pressure long before the model itself is the bottleneck.

Candidates who answer well tend to think in production terms. They ask about request size distribution, warm-up behavior, pod limits, off-heap buffers, and whether the service is optimized for throughput or tail latency. That is the mindset you want on a remote team, where engineers need to diagnose incidents from partial signals and make safe changes without a senior lead sitting beside them.

A weak answer says the garbage collector "cleans unused memory automatically" and stops there. A strong answer ties memory semantics to reliability, cost, and incident response. That is the business signal CTOs should hire for.

9. Explain the difference between Abstract Classes and Interfaces, and when to use each

A candidate's answer here tells you how they design systems that need to survive real change.

Abstract classes and interfaces both define abstractions, but they solve different design problems. An abstract class lets you share state, constructor logic, protected helpers, and partial implementation across closely related types. An interface defines a contract. It tells the rest of the system what a component can do without forcing that component into a single inheritance path. Since modern Java supports default methods, interfaces can carry some shared behavior, but they still work best as contracts first.

For hiring, the useful signal is not whether the candidate can recite syntax. It is whether they choose the right abstraction for a codebase that will keep evolving under product pressure, team growth, and changing model infrastructure.

How to test judgment, not memorization

Use a design prompt from your own stack.

Suppose your platform accepts training or inference input from Kafka, S3, and direct API uploads. An interface such as DataLoader<T> is usually the cleaner starting point. Each loader can implement the same contract while keeping transport-specific concerns isolated. That makes testing easier, supports plugin-style expansion, and avoids forcing unrelated components into a brittle inheritance tree.

Now change the scenario. Your evaluation jobs all need common metric registration, shared audit fields, retry guards, and standardized lifecycle hooks. An abstract base class can be a good fit if those behaviors are common and unlikely to diverge. In that case, shared state and protected utility methods reduce duplication and keep the workflow consistent.

Good candidates will usually say something close to this: use interfaces to define capabilities and preserve flexibility. Use abstract classes when types share meaningful implementation and lifecycle behavior.

What strong answers include

The best answers go beyond "interfaces are for contracts, abstract classes are for partial implementation." They talk about the cost of each choice.

- Use interfaces for pluggability, test doubles, adapter patterns, and boundaries between services or modules

- Use abstract classes when subclasses have real shared behavior, shared state, or a common template flow

- Prefer composition if a base class starts collecting optional hooks, feature flags, or methods that only some subclasses need

- Treat default methods carefully because they help evolve APIs, but too much behavior in interfaces can blur responsibilities

That last point matters in remote teams. Once an interface becomes a dumping ground for convenience logic, every implementation inherits hidden coupling. Changes get riskier. Reviews get slower. Ownership gets fuzzy.

Interviewer tip: push on trade-offs

Ask the candidate to redesign a small subsystem twice.

For example, your team supports three inference backends: a local ONNX runtime, a hosted model API, and an asynchronous batch scorer. If the candidate reaches for a large abstract superclass with many empty or optional methods, that is a warning sign. It often leads to rigid hierarchies where every new backend has to inherit behavior it does not want.

A stronger design usually starts with an interface such as InferenceEngine, then adds composed helpers for retries, metrics, request shaping, or authentication. That structure is easier to extend when vendors change, latency targets shift, or one backend needs a different scaling model.

This question predicts production readiness because AI and ML platforms change underneath the application code. New providers appear. Data contracts drift. Online and batch paths split. Engineers who understand abstraction boundaries make those changes cheaper and safer.

A weak answer stays at the language-feature level. A strong one connects the choice to coupling, testability, extensibility, and failure isolation. That is the kind of reasoning CTOs should screen for.

10. What are Streams and the Functional Programming features in Java 8+

Your team is reviewing a pull request from a remote candidate’s take-home exercise. The code is modern Java. It uses streams, lambdas, method references, and Optional everywhere. It also hides failure paths, makes debugging painful, and turns a simple scoring pipeline into something nobody wants to touch at 2 a.m. That is why this question belongs in a serious Java interview.

Streams and functional features in Java 8+ let engineers express data processing as transformations instead of manual loops and mutable state. A good candidate explains the mechanics clearly: streams process data through lazy intermediate operations such as map, filter, and sorted, then execute only when a terminal operation such as collect, reduce, or forEach runs. Lambdas and method references cut boilerplate. Functional interfaces such as Function, Predicate, Consumer, and Supplier make that style possible.

The hiring signal is not whether someone can write list.stream().filter(...).map(...).collect(...). Many candidates can. The useful signal is whether they know where this style improves code, where it hurts, and how that affects a production AI or ML system.

In real Java services that support model inference, feature generation, or offline scoring, streams are usually a good fit for transformation-heavy paths with clear, testable stages. Common examples include:

- converting raw events into typed domain objects

- filtering malformed inputs before they reach a model

- grouping or aggregating predictions for reporting

- mapping transport DTOs into internal request objects

- using

Optionalat API boundaries where absence is expected and explicit

Now push past syntax.

Ask the candidate when they would avoid streams. Strong engineers talk about cost, not style. They mention that long stream chains can hide business rules, stack traces are often less helpful than imperative code during incidents, and object creation in tight loops can matter in high-throughput paths. They should also be cautious with parallelStream() in request-serving applications, because it uses the common fork-join pool and can create hard-to-predict contention under load.

That trade-off matters in AI and ML teams. Batch pipelines, validation layers, and enrichment steps often benefit from declarative transformations. Latency-sensitive inference handlers often need simpler control flow, explicit error handling, and easier profiling.

A practical interview prompt works better than a definition question.

Give the candidate a case where a service receives 10,000 records, validates them, enriches each record with metadata, drops invalid entries, and emits aggregate quality metrics. If they reach for one dense stream pipeline with nested map, flatMap, groupingBy, and Optional chains, ask three follow-ups: how will they debug one bad record, how will they expose per-stage metrics, and how will they test each transformation in isolation? Their answers usually tell you whether they write maintainable production code or just compact code.

Optional deserves its own follow-up. Good candidates know it helps model absence in return values. They also know it is usually a poor choice for fields, DTO serialization, and every internal branch of a hot path. The same pattern applies to lambdas. They improve small, focused behaviors. They become harder to read when they carry branching business logic, side effects, and exception handling.

Interviewer tip: test for operational judgment

Ask for two implementations of the same task. One with streams, one with a loop.

Then ask which version they would ship in a model-serving API and which version they would ship in an offline feature-processing job. A strong candidate compares readability, allocation behavior, observability, exception handling, team familiarity, and performance profiling. That is the level of judgment that predicts success on a remote engineering team, where other developers have to review, run, and debug the code without sitting next to the author.

This question has direct business impact. Java engineers on AI and ML platforms spend a lot of time shaping data between storage, services, and models. The best hires do not treat streams as a marker of modern Java. They treat them as one tool among many, and they choose them only when the result is easier to test, easier to reason about, and safer to operate.

Top 10 Java Interview Topics Comparison

| Concept | 🔄 Complexity | ⚡ Resource Needs | ⭐ Expected Outcomes | 📊 Ideal Use Cases | 💡 Key Advantages / Tips |

|---|---|---|---|---|---|

| What is the difference between JDK, JRE, and JVM? | Low, conceptual layering | Low–medium, dev vs runtime packages | Correct deployment and build/runtime separation | Choosing runtime for containers vs build environments | Use JRE for smaller images; JDK in build containers; consider GraalVM for native images |

| Generics in Java | Moderate, type-system concepts | Low, compile-time feature | Compile-time type safety and reusable APIs | Type-safe libraries, data pipelines handling heterogeneous types | Watch type erasure for reflection; use bounded wildcards for constraints |

| Collections & Framework hierarchy | Moderate, API knowledge + tradeoffs | Medium, memory/time tradeoffs per implementation | Efficient data structures tailored to use patterns | Dataset storage, feature lookup, queues for scheduling | Choose implementation by complexity; prefer ConcurrentHashMap for concurrent caches |

| Exception Handling (try-catch-finally-throw) | Low–Moderate, patterns & design | Low, runtime overhead except in hot paths | Resilient, maintainable error handling and guaranteed cleanup | Model serving, resource management, retry/fallback flows | Use try-with-resources; avoid broad catches and suppressing root causes |

| Multithreading & Concurrency | High, subtle semantics and pitfalls | High, CPU, threads, memory overhead | Improved throughput but higher risk of race/ deadlock | Parallel preprocessing, concurrent inference, thread pools | Prefer ExecutorService, concurrent collections; design for happens-before and deadlock avoidance |

| Inheritance, Polymorphism, Method Overriding | Low–Moderate, OOP patterns | Low, structural design costs | Extensible, testable architectures with polymorphic behavior | Plugin model types, transformer hierarchies, common interfaces | Favor composition over deep inheritance; ensure Liskov Substitution holds |

| SOLID principles | Moderate, design discipline | Low–medium, upfront design effort | Maintainable, extensible, testable systems | Large evolving ML platforms and team-scale codebases | Apply pragmatically; avoid over-engineering; use DI for swapping implementations |

| Java Memory Model & Garbage Collection | High, concurrency and GC internals | High, heap tuning, GC selection impacts | Predictable latency and throughput when tuned | Latency-sensitive inference, large-model training, long-running services | Monitor GC logs; pick G1/ZGC based on pause/throughput SLAs and profile memory usage |

| Abstract Classes vs Interfaces | Moderate, tradeoff decisions | Low, design-time choice | Balanced flexibility vs shared implementation/state | Defining contracts (interfaces) vs shared behavior (abstract classes) | Use interfaces for loose coupling and testing; abstract classes for shared state/behavior |

| Streams & Java 8+ Functional Features | Moderate, functional idioms + parallelism caveats | Medium, may increase CPU/memory with parallelization | Declarative, composable data pipelines; potential parallel speedups | Feature engineering, transform pipelines, bulk data processing | Use parallel streams carefully; avoid stateful operations and profile for small datasets |

Your Action Plan: Hire Your Next Java AI Engineer

A CTO approves a Java hire for an AI platform role. Thirty days later, the new engineer is still giving clean interview answers, but cannot explain a GC pause in a latency-sensitive inference service, cannot reason clearly about thread safety in a feature pipeline, and turns design reviews into theory debates. That hiring miss usually starts in the interview loop.

Use this section as an operating plan, not a recap of Java basics. The goal is to decide whether a candidate can succeed in a modern remote AI and ML team where Java sits inside real production systems, long-lived services, data pipelines, and cross-functional architecture decisions.

Strong hires show four things in combination. They understand Java fundamentals well enough to avoid expensive mistakes. They can connect those fundamentals to production behavior under load. They explain trade-offs in writing and in conversation. They make design choices that keep an AI platform easier to change as model workflows, infra constraints, and team ownership shift.

That changes how these java interview questions and answers should be used. Each question in this guide should help you test one prediction: will this engineer ship reliable systems with platform, product, and ML peers, or will they need constant correction once the system gets messy?

A senior interview loop usually works best in three layers.

Start with core Java questions to confirm baseline depth. A candidate for a senior AI-facing backend role should be comfortable discussing runtime boundaries, collections choices, exception strategy, memory behavior, concurrency risks, and interface design without drifting into memorized definitions. If they cannot connect JDK, JVM, and GC behavior to operational consequences, they are unlikely to handle production ownership well.

Then move into scenario testing. Ask them to diagnose stale reads in a model scoring service. Ask where they would place retries around a flaky feature store client and where they would not. Ask how they would design pluggable model backends, support new providers, and keep the code testable without building an inheritance tree nobody wants to maintain. Architectural judgment becomes visible, strengthening the hiring signal.

Use a written scorecard. Without one, interviewers often reward confidence, familiarity, or style instead of evidence.

Score against a small set of dimensions:

- Java depth tied to production use

- debugging and incident reasoning

- system design judgment

- trade-off clarity

- remote communication quality

- ownership mindset around reliability, latency, and changeability

For AI and ML roles, add one more lens: business impact. A weak answer on synchronization is not just a language gap. It can translate into corrupted shared state, inconsistent inference results, or painful tail-latency spikes. A shallow answer on collections can turn into memory waste in data-heavy services. A poor answer on SOLID or interfaces often predicts code that slows model iteration because every new backend or pipeline change forces risky rewrites.

Calibration matters too. Junior screening and senior evaluation should not look alike. For senior hires, I look for candidates who can move from language mechanics to operational consequences to design decisions in a single answer. That pattern is a much better predictor of success than trivia speed.

Here are the next three moves that improve hiring quality fast.

Download the Java Interview Scorecard PDF

Use one rubric across interviewers. Grade runtime knowledge, concurrency reasoning, design maturity, incident handling, and communication quality against the same standard.See Sample Profiles

Review vetted senior Java and AI engineering profiles before interviews begin. That helps the team calibrate what production-ready judgment looks like in actual candidate backgrounds.Start a Pilot

Run a short paid project before a full hire when the role is high risk or highly cross-functional. Give the candidate real constraints: a code review, a design memo, a debugging task, and async collaboration with your team. That exposes execution quality far better than polished interview answers.

If your local market is thin, broaden sourcing through curated remote jobs communities.

The teams that hire well do not optimize for the flashiest answer. They hire engineers who keep services stable, reduce architecture drag, write decisions clearly, and make the rest of the team faster with less operational risk.

If you're hiring Java engineers who can operate in real AI and ML environments, ThirstySprout can help you meet vetted senior talent fast. We work with companies that need builders who understand production systems, remote collaboration, and the practicalities of shipping AI products, not just passing interviews. Start a Pilot, or see sample profiles to benchmark the level you're aiming for.

Hire from the Top 1% Talent Network

Ready to accelerate your hiring or scale your company with our top-tier technical talent? Let's chat.