You have 45 minutes with a candidate whose résumé says all the right things. PyTorch. AWS. Kubernetes. “Built scalable ML systems.” Two answers in, you can usually tell whether that person has operated a production system or stayed close to notebooks and demos.

The gap shows up in the details. Strong candidates explain why they chose batch inference instead of real-time serving, what failed after launch, how they monitored drift, and which trade-offs they accepted to hit a business deadline. Weaker candidates stay at the level of tools and model names. Hiring managers need questions that expose judgment, ownership, and execution under constraint.

That matters because AI hiring is expensive in both time and focus. Senior candidates notice weak interview design fast. They also notice when the interview mirrors the job. A hiring manager’s interview questions should test how someone ships, debugs, communicates, and prioritizes. Those are the habits that show up after the offer is signed.

This is the interview kit we use at ThirstySprout for AI and data hires. It is built for teams that care about production outcomes, not résumé keyword matches. Each question includes the reason it works, what strong answers sound like, red flags, follow-up prompts, and role-specific variants for LLM, MLOps, and data engineering work. There is also a scorecard you can use to keep interviewers calibrated.

The goal is simple. Reduce false positives, give strong candidates a fair signal of how your team works, and make hiring decisions you can defend six months later.

If your team is still tightening its process, these MLOps best practices for production teams help define the bar before you start interviewing. For a broader companion set of practical AI interview strategies, use this guide alongside your hiring loop.

1. Tell me about your experience shipping production AI/ML systems

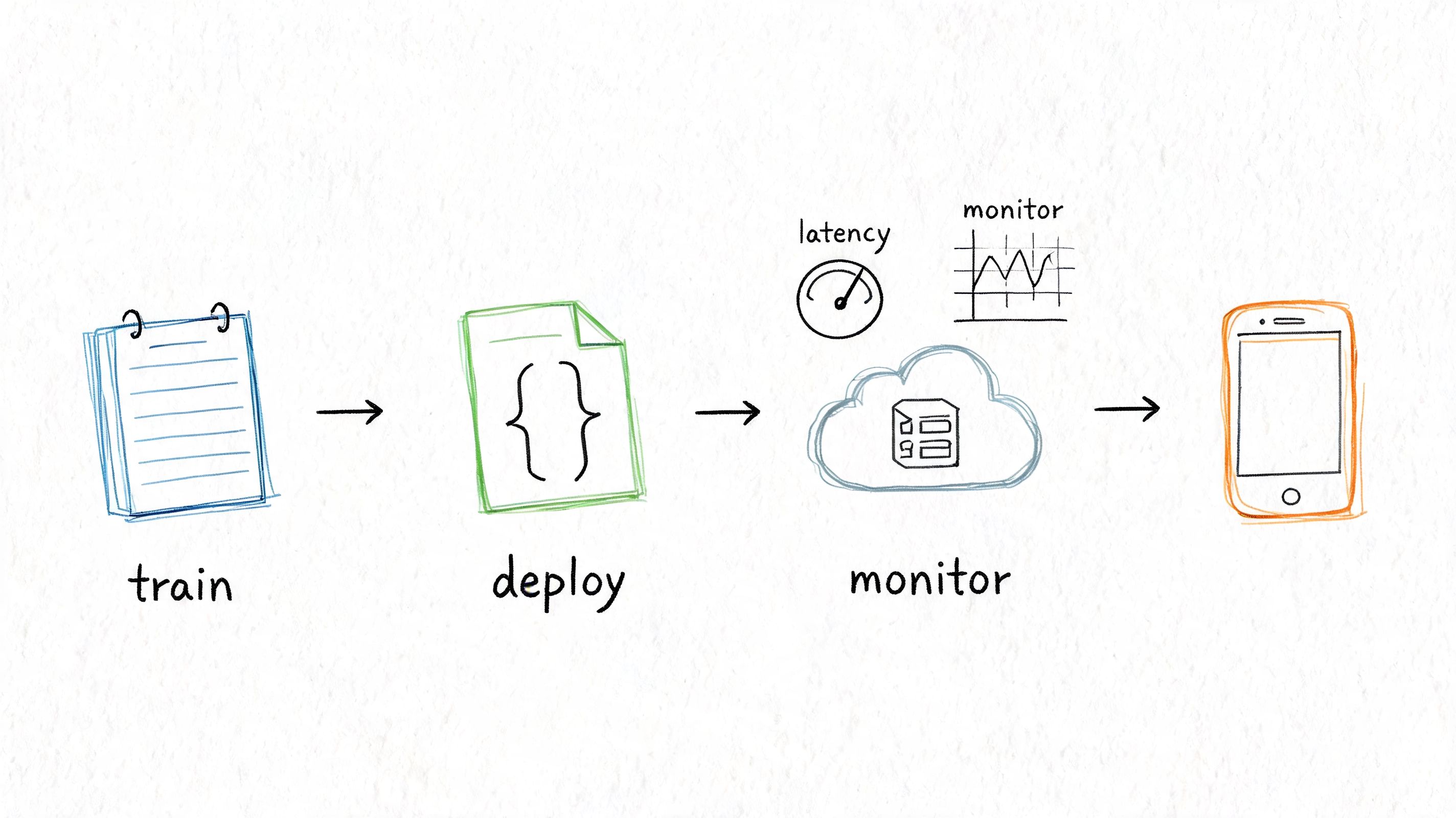

Start here because it cuts through résumé inflation quickly. Candidates who’ve shipped can usually tell a coherent story from problem definition to deployment to monitoring. Candidates who haven’t often stall at model training.

A strong answer sounds operational. You want to hear about the business problem, the architecture, the launch path, the constraints, and what happened after release. Good candidates talk about model serving, observability, rollback plans, feature pipelines, data freshness, and failure handling without needing prompts.

If I only had one opening question for a senior AI hire, this would be it. It reveals whether the person has lived through the ugly parts, not just the demo.

What good answers include

- Clear ownership: They can name what they personally designed, implemented, or drove across teams.

- Production specifics: They mention serving patterns, CI/CD, deployment strategy, monitoring, retraining triggers, or incident response.

- Trade-off awareness: They explain why they chose one approach over another.

- Business grounding: They connect the system to a customer or revenue problem, not just model quality.

For MLOps-heavy roles, I like to compare their answer against operational themes in these MLOps best practices. If their story skips reproducibility, deployment discipline, or monitoring, that’s usually a sign they were adjacent to production rather than accountable for it.

Practical rule: Ask, “What failed after launch?” If they can’t answer, they probably weren’t close enough to production.

A mini-case. Candidate A says, “I built a recommendation model using PyTorch and deployed it to AWS.” Candidate B says, “We launched a ranking service behind an API, discovered stale features during weekend traffic shifts, added feature freshness checks, and changed retraining cadence.” Candidate B has given you something testable.

As with other practical AI interview strategies, this question works best when you keep drilling past the polished summary.

Red flags and follow-ups

Red flags are usually obvious once you listen for sequence and ownership.

- Vague deployment language: “We pushed it live” with no mention of how.

- Research-only framing: Lots on architecture, almost nothing on reliability.

- No post-launch learning: Production always teaches something.

Follow with these:

- “What was the hardest production constraint?”

- “How did you monitor model health after release?”

- “What would you redesign if you inherited that system today?”

Role variants:

- LLM. Ask about evaluation, prompt/version management, and hallucination handling.

- MLOps. Ask about pipeline reproducibility, model registry, deployment automation.

- Data engineering. Ask about upstream data contracts and inference data quality.

2. Describe a time you had to optimize a model or system for production constraints

This question gets past “smart” and into “useful.” In real teams, very few systems fail because nobody knew the latest paper. They fail because latency is too high, inference costs spike, memory use is unstable, or the model can’t survive ordinary traffic.

The best candidates tell a story that starts with measurement. They don’t say, “We optimized it.” They say what was constrained, how they measured it, what they changed, and what they gave up.

Listen for prioritization under pressure

A strong answer often includes two or three rejected options. That matters. Senior engineers rarely get credit for finding a solution. They get credit for picking the right compromise.

For example, an LLM candidate might describe reducing prompt size, caching intermediate outputs, or moving a workflow from synchronous generation to asynchronous processing. A platform candidate might describe splitting a monolithic inference path into a lighter, separately deployed service or introducing batching where user experience allowed it.

Good engineers optimize the whole path, not just the model. Sometimes the fix is in serialization, feature retrieval, or request fan-out.

Here’s a simple interview scenario I use. “A support copilot is useful, but responses are too slow and too expensive at peak hours. Walk me through your approach.” Strong candidates usually start with instrumentation, then break the problem into model, retrieval, network, and product constraints.
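If you want a concrete picture of what “start with instrumentation” can look like, here is a minimal sketch of a per-stage timer. It is illustrative only: `StageTimer` and the stage names are hypothetical, and a real team would more likely rely on its existing tracing stack. The point is that the candidate should want a breakdown like this before touching the model.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class StageTimer:
    """Accumulates wall-clock time per named stage of a request (hypothetical helper)."""

    def __init__(self, clock=time.perf_counter):
        # The clock is injectable so the timer can be tested without sleeping.
        self._clock = clock
        self.totals = defaultdict(float)

    @contextmanager
    def stage(self, name):
        start = self._clock()
        try:
            yield
        finally:
            # Record elapsed time even if the stage raised an exception.
            self.totals[name] += self._clock() - start

    def breakdown(self):
        """Return (stage, share of total measured time) pairs, largest first."""
        total = sum(self.totals.values())
        if total == 0:
            return []
        shares = [(name, secs / total) for name, secs in self.totals.items()]
        return sorted(shares, key=lambda item: item[1], reverse=True)
```

In use, each step of the request path is wrapped in `with timer.stage("retrieval"):` or `with timer.stage("generation"):`, and `timer.breakdown()` shows where the time goes. A candidate who asks for this view first is usually the one who avoids optimizing the wrong layer.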

Rubric and warning signs

Use this quick rubric in your scorecard:

- Strong: Measures before changing anything, names multiple levers, explains user impact, and can defend the final trade-off.

- Mixed: Focuses only on one layer, usually the model, and misses system effects.

- Weak: Talks in generic tuning terms without describing a decision process.

Red flags:

- They optimize for benchmark elegance instead of operating reality.

- They can’t explain what they chose not to optimize.

- They ignore cost until prompted.

Role variants help sharpen this:

- LLM. Ask about context window management, retrieval payload size, fallback models, or prompt compression.

- MLOps. Ask how they validated a change safely in production.

- Data engineering. Ask where pipeline bottlenecks appeared and how they isolated them.

The point isn’t whether they chose quantization, caching, batching, or a simpler architecture. The point is whether they can make a hard trade-off without hiding behind abstraction.

3. Walk me through your approach to debugging a model that’s underperforming in production

It is 2 a.m. Metrics look fine at the infrastructure layer, but support tickets are piling up and a model that performed well before launch is now making bad calls. This question tells you who can handle that moment with discipline.

The best candidates do not start with retraining. They start by defining the failure precisely. “Underperforming” can mean lower precision, worse ranking, rising latency, poor calibration, lower coverage, or a drop in a business metric because the product around the model changed. If they cannot name the failure mode, they will waste time fixing the wrong layer.

A strong answer follows an ordered investigation. First, confirm the symptom with production evidence. Next, segment the blast radius by user cohort, traffic source, model version, geography, or feature set. Then inspect the path end to end: inputs, feature generation, model outputs, thresholds, serving behavior, and downstream application logic. I want to hear a candidate explain why that order matters. It reduces noise, protects users, and avoids expensive guesswork.
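The segmentation step is easier to evaluate if you have a picture of it. Below is a minimal sketch, assuming prediction logs are available as dictionaries with `prediction` and `label` fields plus whatever segment key you care about. Those field names and the function `error_rate_by_segment` are assumptions made for illustration, not a reference to any specific logging schema.

```python
from collections import defaultdict


def error_rate_by_segment(records, segment_key):
    """Group logged predictions by a segment and rank segments by error rate.

    records: iterable of dicts containing segment_key, 'prediction', and 'label'
    (hypothetical field names). Returns (segment, error_rate) pairs, worst first.
    """
    counts = defaultdict(lambda: [0, 0])  # segment -> [errors, total]
    for rec in records:
        bucket = counts[rec[segment_key]]
        bucket[0] += int(rec["prediction"] != rec["label"])
        bucket[1] += 1
    rates = {seg: errors / total for seg, (errors, total) in counts.items()}
    return sorted(rates.items(), key=lambda item: item[1], reverse=True)
```

Running the same function over `model_version`, `geography`, or `traffic_source` is a quick way to see whether the failure is concentrated or global. Small segments produce noisy rates, so a strong candidate will also ask how many examples sit behind each number.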

A useful scenario is a fraud model that still looks healthy offline but starts missing obvious cases in production. Strong candidates ask whether feature definitions changed, whether a join broke, whether labels are delayed, whether the score threshold no longer matches the current operating point, and whether monitoring captured model quality or only API uptime. That is the right instinct. Production failures often come from interfaces between systems, not from the model weights alone.

What you want to hear

Use this in your scorecard:

- Strong: Defines the failure clearly, checks instrumentation first, segments the issue, compares training and production behavior, examines real examples end to end, proposes a safe mitigation, and explains what evidence would confirm the root cause.

- Mixed: Mentions drift, bad data, or retraining, but gives no sequence for narrowing the search space.

- Weak: Jumps straight to tuning or swapping models, cannot name the monitoring they expect, and treats production debugging like an offline notebook exercise.

Why this predicts job performance

This question surfaces judgment under ambiguity. On the job, the work is rarely “find the bug” in isolation. It is “find the bug without making things worse, explain the impact in plain language, and decide whether to roll back, patch, or contain.” Candidates who debug well usually communicate well because both skills depend on clear problem framing.

I also look for trade-off awareness. The fastest mitigation is not always the cleanest fix. Sometimes the right move is to disable a feature, tighten a threshold, route traffic to a simpler fallback, or stop a bad pipeline before a full root-cause analysis is complete. Senior people know how to stabilize first and optimize second.

Follow-up prompts

Use follow-ups to test whether the candidate has done this work:

- Instrumentation: “What signals should already exist before this incident happens?”

- Root cause: “How would you distinguish data drift from a feature bug or a bad deploy?”

- Mitigation: “Users are being harmed right now. What is the fastest safe action?”

- Validation: “How would you prove the fix worked and did not just move the failure elsewhere?”

Strong candidates narrow the search space, protect the user, and make each diagnostic step earn its cost.

Red flags

- They have no debugging order.

- They default to retraining without checking inputs and interfaces.

- They cannot talk about slices, cohorts, or failing examples.

- They describe monitoring in generic terms and never mention model-specific signals.

- They ignore rollback, containment, or fallback options.

Role-specific variants

- LLM: Ask how they would separate retrieval failure, prompt failure, generation failure, safety filtering, and application orchestration. Strong answers usually include trace inspection, sampled conversations, prompt and retrieval diffs, and evaluation on known bad cases.

- MLOps: Ask how they would compare model versions, feature pipelines, and deployment artifacts, then validate a fix safely with shadow traffic, canaries, or rollback criteria.

- Data engineering: Ask where schema changes, late data, broken joins, or backfills could corrupt model inputs, and how they would isolate the fault before downstream teams start retraining a healthy model.

The goal is not to hear a perfect taxonomy of failure modes. The goal is to find out whether the candidate can run a calm, evidence-based incident process in a messy production system. That is the person you trust with real users and real revenue.

4. How do you stay current with AI/ML advancements, and what recent developments have influenced your work?

This isn’t a hobby question. It’s a judgment question.

AI moves fast, but raw consumption isn’t the same as useful learning. The best candidates don’t just follow papers, model releases, and repo trends. They filter them. They can explain what changed their practice and what they ignored.

What you want to hear

A strong candidate can name one or two recent developments, explain them in plain English, and connect them to a practical engineering decision. Maybe they changed how they think about retrieval-augmented generation, evaluation design, model compression, observability, or deployment safety. What matters is the chain from information to action.

Weak answers sound like a social media feed. “I keep up with arXiv, X, and podcasts.” Fine, but what did that change in your work? If the answer is nothing specific, the learning loop is shallow.

One useful prompt is, “Tell me about a recent idea you didn’t adopt.” That often reveals maturity better than asking what excited them. Senior people know when not to chase novelty.

Personal synthesis beats trend-chasing

I’d rather hire the engineer who thoroughly understood one production-relevant idea than the candidate who can name every foundation model release from memory.

Try this mini-case. Ask an LLM candidate, “What recent development changed how you think about RAG versus fine-tuning?” Strong answers often discuss operational complexity, evaluation burden, knowledge freshness, or domain adaptation. Weak answers collapse into vendor slogans.

Another useful angle is ethics and judgment. More teams now ask candidates how they personally think about AI use, reliability, and risk. That’s not fluff. In remote teams, where autonomy is high, personal judgment shows up in product decisions long before a manager catches it.

A practical follow-up:

- “What limitation did you notice in that approach?”

- “How did you validate it in your own work?”

- “What would make you reverse your opinion?”

Red flags:

- They confuse awareness with competence.

- They repeat benchmark headlines without caveats.

- They can’t separate research progress from deployable progress.

This question works because it reveals learning velocity and skepticism at the same time. Both matter more than ever in AI hiring.

5. Tell me about a time you had to work with imperfect or insufficient data. How did you handle it?

A candidate’s answer to this question tells you whether they can build under real operating conditions. Early data is often incomplete, labels are inconsistent, upstream systems change without notice, and the business still needs a decision.

The signal here is judgment. Strong candidates know how to make progress without treating weak data as a solved problem. They can separate what is usable now from what must be fixed before the system deserves more trust.

I usually want a story with two threads. One is short-term execution: how they established a baseline, constrained the problem, or used partial labels carefully enough to learn something useful. The other is system repair: how they improved collection, labeling, schema discipline, or review processes so the next version had a better foundation.

That trade-off matters. Teams get into trouble when a candidate only knows one mode. Some engineers keep experimenting forever because the data is messy. Others ship anyway and hand the business a model with confidence levels nobody should trust.

A strong answer sounds concrete. For example, a team wants a support-ticket classifier, but historical tags were applied inconsistently across shifts and regions. Good candidates usually start by auditing label quality, measuring disagreement, reducing the taxonomy if needed, and creating a trusted evaluation set before tuning models. If they mention heuristics, weak supervision, or human review, they should also explain where those methods break and how they checked for false confidence.
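“Measuring disagreement” can be as simple as having two people tag the same sample of tickets and computing Cohen’s kappa, which corrects raw agreement for the agreement expected by chance. The sketch below is one common way to do that, not necessarily what any given candidate used; the function name and the example tags are illustrative.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    # Observed agreement: fraction of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: derived from each annotator's own label frequencies.
    freq_a = Counter(labels_a)
    freq_b = Counter(labels_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label for every item
    return (observed - expected) / (1 - expected)


# Illustrative tags from two shifts labeling the same four tickets.
shift_a = ["billing", "billing", "bug", "bug"]
shift_b = ["billing", "bug", "bug", "bug"]
kappa = cohens_kappa(shift_a, shift_b)  # 0.5 for this sample
```

A low kappa on a category is a signal to merge or redefine that category before tuning any model on it, which is the “reduce the taxonomy if needed” step in practice.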

What this question is really testing

This is less about data cleaning skill than operating judgment.

Use the question to evaluate:

- Problem framing: Did they redefine the task to fit the available signal?

- Risk management: Did they state what the model should and should not be used for?

- Iteration quality: Did they improve the dataset, not just the model?

- Communication: Did they explain uncertainty in terms stakeholders could act on?

If you want a stronger behavioral read, borrow follow-up patterns from these behavioral interview questions for software engineers. The best prompts force candidates to describe decisions, trade-offs, and consequences, not just tools.

Rubric for strong answers

Look for answers that include:

- A clear description of what was wrong with the data. Missingness, label noise, sampling bias, stale records, schema drift, or weak ground truth.

- A practical first step. Baseline model, rule-based benchmark, manual audit, trusted subset, or narrowed use case.

- Explicit quality checks. Error analysis, inter-annotator disagreement, slice-based evaluation, backtesting, or data validation rules.

- A plan to improve the source. Better instrumentation, annotation guidelines, contracts between systems, or workflow changes.

- Honest communication about limits. What they could ship safely, what they postponed, and what failure modes remained.

Red flags

- They say they “cleaned the data” but cannot explain what was wrong.

- They jump straight to model architecture before validating labels or coverage.

- They treat more data as the answer without discussing whether the new data would be any better.

- They never mention a holdout set, trusted subset, or human review loop.

- They shipped despite known uncertainty and cannot describe guardrails.

One sentence I listen for is some version of: “We reduced scope so the model only handled cases where the signal was good enough.” That usually comes from someone who has learned the cost of pretending coverage and quality are the same thing.

Follow-up questions that separate strong candidates

Ask:

- “How did you decide the data was good enough for the first release?”

- “What did you do when stakeholders wanted a broader use case than the data supported?”

- “How did you measure whether label quality or model quality was the bigger problem?”

- “What changed in the pipeline or workflow after that project?”

- “If you had another month, would you improve the model or the data first? Why?”

Role-specific variants

- LLM: Ask about low-quality corpora, retrieval over conflicting documents, synthetic data use, or annotation drift in preference data.

- MLOps: Ask how they detected schema changes, enforced contracts, monitored data quality, and prevented silent training-serving mismatch.

- Data engineering: Ask how they traced source reliability, handled missing fields at ingestion, and designed pipelines that exposed bad inputs early.
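For the MLOps and data engineering variants, it helps to know what a basic data contract check looks like so you can tell a real answer from a vague one. This is a deliberately small sketch under stated assumptions: the contract format (field name mapped to an expected type and a required flag) and the function `check_contract` are hypothetical, and production teams often use a dedicated validation library instead.

```python
def check_contract(record, contract):
    """Return a list of contract violations for one ingested record.

    contract: dict mapping field name -> (expected_type, required), a
    hypothetical format chosen for this example.
    """
    problems = []
    for field, (expected_type, required) in contract.items():
        value = record.get(field)
        if value is None:
            # Missing and explicit-null values are treated the same way here.
            if required:
                problems.append(f"missing required field: {field}")
            continue
        if not isinstance(value, expected_type):
            problems.append(
                f"{field}: expected {expected_type.__name__}, got {type(value).__name__}"
            )
    # Fields the contract does not know about often signal an upstream schema change.
    for field in sorted(record.keys() - contract.keys()):
        problems.append(f"unexpected field: {field}")
    return problems
```

Strong candidates describe something equivalent running at ingestion, with violations surfaced as alerts or rejected batches, so a renamed or retyped upstream field fails loudly instead of silently degrading the model.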

This question works because bad data creates second-order problems. It does not just reduce model accuracy. It distorts prioritization, hides failure modes, and gives leadership a false sense of readiness. The best candidates have seen that happen, and they can explain exactly how they kept it from getting worse.

6. Describe your experience collaborating with non-technical stakeholders on ML projects

The strongest AI engineers don’t just build systems. They align systems with product goals, legal constraints, customer workflows, and operational reality. If a candidate can’t work across functions, their technical skill won’t travel very far.

This question matters even more in remote teams. Async communication magnifies misunderstandings. Product hears “possible,” leadership hears “committed,” and engineering inherits a deadline nobody scoped.

Ask for a story where they had to push back

The best version of this question is not “Have you worked with product?” Almost everyone says yes. Ask when they had to say no, narrow scope, or reframe success.

Strong candidates can describe how they translated uncertainty into business language. They explain what they promised, what they refused to promise, and how they kept trust while doing it.

For a useful behavioral pattern, this set of behavioral interview questions for software engineers is close to how strong hiring managers structure evidence-based conversations.

Rubric for collaboration

- Strong: Aligns technical decisions to customer or business outcomes, manages expectations clearly, and handles disagreement without drama.

- Mixed: Participates in meetings but doesn’t influence direction.

- Weak: Treats non-technical stakeholders as blockers or “the business.”

A mini-case. Product wants a generative AI feature that answers customer questions from internal docs. The candidate should be able to explain why source quality, retrieval design, and fallback behavior matter to user trust. If they can only discuss embeddings and prompts, they’re not really collaborating. They’re implementing in isolation.

The best cross-functional engineers don’t simplify the truth. They translate it.

A related hiring reality is that 72% of organizations now use AI-driven tools in recruiting, while 66% of hiring managers believe AI can mitigate bias and 54% still have concerns about implementation. That tension makes human judgment in live interviews more important, not less. Collaboration questions help you see judgment in a way automated screening can’t.

Red flags:

- They frame disagreements as personal rather than structural.

- They never mention documentation, written decisions, or expectation setting.

- They say yes too easily.

Role variants:

- LLM. Ask how they explained hallucination risk or quality limits.

- MLOps. Ask how they negotiated platform work versus feature deadlines.

- Lead roles. Ask how they handled disagreement between product urgency and engineering risk.

7. Walk me through how you’d approach building or evaluating a domain-specific system

A candidate can sound impressive for 30 minutes and still fail this question.

Give them a system that looks like your actual work. A support assistant grounded in internal docs. A recommender for product discovery. A forecasting pipeline with sparse history. A fraud model with delayed labels. You are not testing whether they can recite a fashionable architecture. You are testing whether they can make sound decisions under real constraints.

The first minute matters. Strong candidates start by narrowing the problem. They ask who the user is, what decision the system supports, what failure costs look like, and what constraints are fixed. That tells you whether they build from outcomes backward or from tools forward.

I score this question across five areas:

- Problem framing: Do they define the user, task, decision point, and business objective?

- Data and feedback loops: Do they ask what signals exist, how labels are created, and where bias or drift can enter?

- System design: Can they justify the architecture they choose, including what they are deliberately not building yet?

- Evaluation: Do they separate offline metrics, online behavior, and operational health?

- Failure handling: Do they plan for bad retrieval, stale features, latency spikes, low-confidence outputs, and rollback?

For LLM roles, this question exposes whether the candidate understands trade-offs or just vocabulary. A good answer covers retrieval quality, chunking, citation strategy, prompt assembly, fallback behavior, and when fine-tuning is justified. This guide on fine-tuning LLMs for domain-specific behavior is useful background if you want to probe retrieval versus fine-tuning without turning the interview into a theory exercise.

What a strong answer actually sounds like

The best candidates make trade-offs explicit. They might say, “I’d launch a retrieval-based assistant first because it is easier to evaluate, cheaper to update as docs change, and easier to constrain. I would only fine-tune if retrieval quality plateaus or the task needs domain style and reasoning patterns that prompting cannot reliably produce.” That is the kind of answer that predicts good judgment on the job.

Weak candidates jump straight to a model name. Mixed candidates describe components but never connect them to user risk or business value. Strong candidates sequence the work, define how they would test it, and explain what they would postpone.

Use follow-ups to pressure-test depth:

- “What would you ship in the first six weeks?”

- “What metric would make you stop the launch?”

- “How would you evaluate this before you have strong labels?”

- “What changes if latency has to drop by half?”

- “What changes if legal requires traceability for every output?”

Rubric, red flags, and role variants

- Strong: Frames the problem well, chooses a design that fits constraints, defines offline and online evaluation, and names likely production failures.

- Mixed: Has solid technical instincts but skips instrumentation, rollout strategy, or trade-offs.

- Weak: Treats the problem as model selection, ignores data quality, and has no plan for monitoring or fallback behavior.

Red flags:

- They never ask clarifying questions.

- They equate a good demo with a good production system.

- They only mention accuracy, not calibration, latency, cost, or user trust.

- They cannot explain why one design is better than another for this specific case.

Role variants:

- LLM: Ask when they would use retrieval, fine-tuning, agents, or a plain workflow. Listen for evaluation discipline, not enthusiasm.

- MLOps: Ask how they would deploy safely, monitor drift, version data and prompts, and handle rollback.

- Data engineering: Ask how they would build the serving data path, freshness guarantees, lineage, and backfill strategy.

This question works well because it functions like a compact work sample. It comes with a rationale, an answer rubric, red flags, and follow-ups you can score in a repeatable way. If you use a hiring scorecard, this is one of the best prompts to include because strong answers tend to reflect real execution, not interview polish.
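If your scorecard lives in a spreadsheet or a small script, the calibration logic can stay very simple. The sketch below is one possible shape, not a prescribed format: it maps the Strong, Mixed, and Weak rubric levels to numbers and flags questions where interviewers disagree by more than one level. The numeric mapping, the `max_spread` threshold, and the function name are all assumptions for illustration.

```python
from statistics import mean

# Hypothetical numeric mapping for the Strong / Mixed / Weak rubric levels.
LEVELS = {"weak": 1, "mixed": 2, "strong": 3}


def summarize_question(ratings, max_spread=1):
    """Summarize one question's ratings across interviewers.

    ratings: dict mapping interviewer name -> 'weak', 'mixed', or 'strong'.
    Flags the question for a calibration discussion when the gap between the
    highest and lowest rating exceeds max_spread.
    """
    if not ratings:
        raise ValueError("at least one rating is required")
    scores = [LEVELS[level.lower()] for level in ratings.values()]
    spread = max(scores) - min(scores)
    return {
        "mean": mean(scores),
        "spread": spread,
        "needs_calibration": spread > max_spread,
    }
```

A question rated Strong by one interviewer and Weak by another is worth a short debrief before anyone votes. The disagreement usually means the rubric was read differently, not that one interviewer was wrong.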

8. Tell me about a technical debt or legacy system you refactored

Many senior candidates love greenfield stories because they’re flattering. I care just as much about what they do when they inherit something brittle, undocumented, and politically inconvenient.

Legacy work shows judgment. It forces prioritization, risk management, influence, and restraint. Those are the same muscles your next hire will need in a real company.

Strong answers don’t romanticize refactoring

A good candidate explains why the debt mattered. Maybe releases were risky, experimentation was slow, onboarding was painful, or reliability was suffering. They should also explain why they didn’t rewrite everything.

The best answers show staged migration thinking. For example, a candidate might describe carving a model-serving path out of a larger service, adding contract tests around feature generation, or introducing a parallel pipeline before switching traffic. I like hearing exactly how they reduced blast radius.

Here’s a useful mini-case. An inherited training pipeline depends on manual steps, one person knows the order of operations, and experiments can’t be reproduced. Strong candidates usually start by making behavior visible and repeatable before trying to modernize every component. Weak candidates jump to “we rebuilt it.”

What separates judgment from enthusiasm

- Strong: Improves reliability or developer velocity while controlling migration risk.

- Mixed: Knows what’s wrong but can’t explain sequencing.

- Weak: Frames refactoring as virtuous for its own sake, without business context.

One reason this question matters in AI teams is that reproducibility and scale become painful fast. Good MLOps interview guidance emphasizes capturing all data, code, and model artifacts per iteration, along with monitoring and business understanding through deployment. That focus is central to evaluating whether someone can stabilize a legacy ML stack rather than just criticize it.

A sharp follow-up is, “What new feature work did you delay, and why was that worth it?” If they can’t answer, they may not have owned the prioritization.

Red flags:

- They talk about elegance more than outcomes.

- They never mention rollback or migration safety.

- They describe debt as somebody else’s mistake instead of a current business constraint.

This question is underrated because it surfaces the kind of engineering judgment that usually only appears after the hire.

9. How do you approach learning and using a new tool, framework, or technology

This sounds simple, but it’s one of the better questions a hiring manager can ask when the team works in a fast-moving stack. AI tooling changes quickly. Cloud patterns change. Data systems change. Your new hire won’t know everything on day one. They need a repeatable way to get productive without causing chaos.

Look for a learning system, not just curiosity

Strong candidates describe a method. They usually start with the problem to solve, not the tool itself. Then they narrow the surface area, read official docs, build a small proof of concept, compare alternatives, and only then integrate into production work.

That sequence matters. It filters out people who chase tools for status or novelty.

A good mini-case is to ask, “Tell me about the last framework or platform you had to learn under delivery pressure.” Maybe they moved from one orchestrator to another, adopted a vector database, switched cloud services, or learned a new model serving framework. Listen for how they de-risked learning while still shipping.

What strong answers usually include

- Problem-first evaluation: They define what the tool must do before testing it.

- Fast experiments: They create a narrow prototype before broad adoption.

- Transferable reasoning: They explain what concepts carried over from older tools.

- Adoption judgment: They know when not to roll out a shiny new thing.

This question has become more important as AI systems spread through engineering organizations. Mature MLOps guidance increasingly emphasizes balanced ML and infrastructure skills, reproducibility, security, privacy, and troubleshooting across cloud and deployment layers. Candidates who learn well across those boundaries tend to perform better than those who only know one favorite stack.

One practical follow-up is, “What made you trust the tool enough for production use?” Another is, “When has your usual learning approach failed you?” That second one often reveals humility.

Red flags:

- They rely on tutorials but don’t validate assumptions.

- They can’t compare tools on operational criteria.

- They overvalue framework familiarity over underlying concepts.

The best engineers don’t just learn fast. They learn in a way that lowers risk for the team.

10. Tell me about your experience working in a remote or distributed team

A distributed AI team can lose a full day on one bad handoff. The candidate who performs well here is the one who keeps work moving across time zones, writes decisions down clearly, and surfaces risk before it turns into drift, rework, or a production mistake.

This question predicts execution quality more than preference. Plenty of candidates like remote work. Far fewer can show a repeatable operating style that keeps model, data, and platform work aligned without constant meetings.

Ask for a specific example. Good prompts are: “Tell me about a project where key teammates were in different time zones,” or “Describe a handoff that went well, and one that failed.” The goal is to hear how they handled ambiguity, ownership, documentation, and escalation.

Why this question matters

Remote work exposes habits that office environments can hide. Weak engineers rely on ad hoc conversations, implicit context, and fast replies from nearby teammates. Strong engineers leave a clear trail. They write design choices down, define next actions, record assumptions, and make it easy for someone else to pick up the work six or twelve hours later.

For AI teams, that matters even more. A distributed project often spans data engineering, model training, evaluation, infrastructure, and product stakeholders. If the candidate cannot create shared context in writing, the team pays for it in slower reviews, duplicated experiments, and preventable incidents.

What strong answers should include

Use this as a scoring lens:

- Clear async habits: They describe decision docs, status updates, issue templates, or handoff notes they used.

- Ownership and escalation: They know when to unblock themselves and when to escalate a dependency or risk.

- Time zone judgment: They reserve meetings for decisions that benefit from live discussion, not routine coordination.

- Context quality: Their examples include assumptions, trade-offs, owners, and a proposed next step, not just “I sent a message.”

- Team impact: Senior candidates explain how they improved the team’s operating cadence, not only their own responsiveness.

The best answers sound operational. For example: “I posted a short update at end of day with the experiment result, failure mode, logs, and two options for the teammate in Europe to choose from. That let them continue before I was back online.” That is the behavior you want.

Red flags

- They equate remote effectiveness with being online all the time.

- They talk about flexibility and autonomy but not documentation.

- They wait for the next meeting instead of creating a useful handoff.

- They blame time zones for coordination problems they could have reduced with better written context.

- They cannot explain how they kept stakeholders informed when work changed course.

One follow-up I like is: “A blocker appears at the end of your day, and the owner is six time zones away. What do you do?” Strong candidates prepare a handoff with the current state, evidence collected, likely options, and a recommendation. Weak candidates say they would wait.

Another useful follow-up is role-specific. For an LLM engineer, ask how they documented prompt, eval, or retrieval changes so another engineer could reproduce results. For an MLOps engineer, ask about incident handoffs, runbooks, and on-call coordination. For a data engineer, ask how they communicated schema changes, pipeline failures, or backfill plans across regions.

There is also an integrity angle in distributed hiring. As noted earlier, background checks and policy guardrails help, but they do not replace direct evidence of how someone works. This question gives you that evidence. It shows whether the candidate creates clarity when no manager is sitting nearby and no meeting is available to rescue a vague handoff.

10 Hiring Manager Interview Questions Comparison

| Question / Focus | 🔄 Implementation complexity | ⚡ Resource requirements | 📊 Expected outcomes | 💡 Ideal use cases | ⭐ Key advantages |
|---|---|---|---|---|---|
| Tell me about your experience shipping production AI/ML systems | High, covers deployment, monitoring, and scaling trade-offs | Moderate–High, MLOps infra, cross‑team coordination | Validate production readiness and operational competence | Hiring senior ML engineers for production systems | Filters for hands‑on production experience and accountability |
| Describe a time you had to optimize a model or system for production constraints (latency, cost, memory) | Medium–High, requires system profiling and model changes | Low–Moderate, profiling tools, compute for experiments | Demonstrates pragmatic trade‑offs and measurable improvements | Startups/scaleups with strict latency or cost targets | Predicts success under resource constraints and cost sensitivity |
| Walk me through your approach to debugging a model that's underperforming in production | High, needs systematic hypotheses and isolation steps | Moderate, observability, logs, data validation, A/B tests | Shows incident response, root‑cause analysis, and fixes | Mission‑critical ML systems (Series B+) | Predicts ability to resolve production incidents quickly |
| How do you stay current with AI/ML advancements, and what recent developments have influenced your work? | Low, behavioral assessment of habits and judgment | Low, time for reading, conferences, communities | Gauges learning velocity and ability to apply new ideas | Leadership/strategy roles and rapidly evolving stacks | Identifies adaptable engineers who guide technical direction |
| Tell me about a time you had to work with imperfect or insufficient data. How did you handle it? | Medium, requires creative data strategies and validation | Low–Moderate, augmentation, labeling, transfer learning tools | Reveals data‑efficiency techniques and pragmatic solutions | Early‑stage startups or scarce‑data domains | Predicts resourcefulness and practical shipping mindset |
| Describe your experience collaborating with non‑technical stakeholders (product, leadership, customer success) | Low–Medium, communication and translation skills | Low, documentation, stakeholder time, alignment practices | Assesses clarity in explaining trade‑offs and managing expectations | Cross‑functional teams and product‑led startups | Improves product alignment and reduces miscommunication |
| Walk me through how you'd approach building/evaluating a recommender system (or LLM/forecasting model) | High, architecture, evaluation, and operational trade‑offs | High, data pipelines, evaluation infra, compute | Shows systems thinking, metrics, and iteration plans | Specialized domain hires (recommendation, LLMs, forecasting) | Differentiates senior architects and domain experts |
| Tell me about a technical debt or legacy system you refactored. What was your approach? | Medium–High, planning, incremental risk mitigation | Moderate, engineering time, tests, rollout strategy | Demonstrates prioritization and measurable improvement | Scaling companies with legacy platforms (Series B+) | Reveals long‑term thinking and roadmap influence |
| How do you approach learning and using a new tool, framework, or technology? | Low–Medium, structured learning and quick prototyping | Low, docs, examples, small projects, community help | Measures ramp‑up speed and pragmatic depth of knowledge | Roles requiring rapid onboarding or diverse stacks | Predicts fast productivity and independent learning |
| Tell me about your experience working in a remote or distributed team. How do you handle async communication and time zones? | Low–Medium, processes for async coordination | Low, documentation tools, handoffs, overlap planning | Assesses async discipline, documentation, and scheduling | Remote‑first companies and distributed teams | Ensures fit for async workflows and independent delivery |

Your Next Hire: From Interview to Impact

You finish a full interview loop for a senior ML candidate. Everyone feels good. The résumé was strong, the technical answers sounded credible, and the conversation stayed pleasant. Six months later, the model is late, the handoffs are weak, and nobody can explain whether the candidate ever owned production outcomes in the first place.

That failure usually starts in the interview design, not in the offer decision.

A strong hiring loop tests for the work the person will actually do. For AI roles, that means evidence of shipping, debugging, prioritizing under constraints, explaining trade-offs, and working with messy data and messy organizations. This toolkit is built for that level of signal. The questions are only one part. The value comes from the full package around them: why each question matters, what strong answers look like, what should worry you, which follow-ups expose real ownership, and how to adjust the prompt for LLM, MLOps, and data engineering candidates.

Use a scorecard. Keep it tight. I recommend five dimensions across every interview: ownership, technical judgment, trade-off quality, business alignment, and communication. That structure matters because interviewers are bad at remembering details and even worse at comparing candidates when each interviewer applies different criteria.

A practical scorecard also keeps the loop fair. Candidates can tell when a team is testing for real work versus improvising. Clear prompts, consistent rubrics, and role-specific follow-ups create a better interview on both sides. They also make debriefs faster, because the discussion shifts from vague impressions to evidence.
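
If your team tracks scores in a script or notebook rather than a spreadsheet, the five dimensions above could be captured in a structure like the one below. This is only a sketch under stated assumptions: the 1 to 4 scale, the `Scorecard` class, and the `summarize` helper are illustrative names and choices, not part of any ThirstySprout tooling. It records one score per dimension per interviewer, with optional written evidence, and reports the mean and the spread so the debrief can start with the dimensions where interviewers disagree.

```python
from dataclasses import dataclass, field
from statistics import mean

# The five dimensions named in the article.
DIMENSIONS = (
    "ownership",
    "technical_judgment",
    "trade_off_quality",
    "business_alignment",
    "communication",
)

# Assumption: a 1-4 scale (no neutral midpoint). Use whatever scale your team prefers.
MIN_SCORE, MAX_SCORE = 1, 4


@dataclass(frozen=True)
class Scorecard:
    """One interviewer's scores for one candidate (hypothetical structure)."""
    interviewer: str
    scores: dict[str, int]
    evidence: dict[str, str] = field(default_factory=dict)

    def __post_init__(self) -> None:
        # Every dimension must be scored so candidates stay comparable.
        missing = set(DIMENSIONS) - set(self.scores)
        if missing:
            raise ValueError(f"missing scores for: {sorted(missing)}")
        for dim, score in self.scores.items():
            if not MIN_SCORE <= score <= MAX_SCORE:
                raise ValueError(f"{dim} score {score} is outside the scale")


def summarize(cards: list[Scorecard]) -> dict[str, dict[str, float]]:
    """Per-dimension mean and spread (max minus min) across interviewers."""
    summary = {}
    for dim in DIMENSIONS:
        values = [card.scores[dim] for card in cards]
        summary[dim] = {"mean": mean(values), "spread": max(values) - min(values)}
    return summary


if __name__ == "__main__":
    baseline = {dim: 3 for dim in DIMENSIONS}
    cards = [
        Scorecard("interviewer_a", baseline),
        Scorecard("interviewer_b", {**baseline, "ownership": 1},
                  evidence={"ownership": "Could not say what they decided."}),
    ]
    for dim, stats in summarize(cards).items():
        print(dim, stats)
```

A large spread on one dimension is a prompt to compare the written evidence, not a reason to average the disagreement away.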

A few operating rules improve the hit rate:

- Stay on one example until it is clear. Ask for a specific project, then trace scope, constraints, decisions, and results.

- Separate participation from ownership. Ask what the candidate decided, what they influenced, and what they would change now.

- Probe the hard parts. Production ML work includes incidents, bad data, broken assumptions, and stakeholder conflict. Good candidates can explain those moments plainly.

- Score substance over polish. Some senior engineers are concise to the point of sounding understated. That is fine if the answer shows judgment.

- Change the variant by role. An LLM engineer should discuss evaluation and guardrails. An MLOps lead should discuss reliability, rollout, and observability. A data engineer should discuss lineage, quality, and pipeline durability.

If you change one part of the loop, change the opening 15 minutes. Replace broad icebreakers with one real, role-relevant question from this set. You will get better signal early, and candidates usually respond with more specifics because the conversation has a clear purpose.

I also weight applied judgment more heavily than novelty. The engineer who can ship a useful system, explain trade-offs, and work through ambiguity is often a better hire than the candidate who can summarize every recent paper but has not owned delivery. That difference shows up quickly when you use structured follow-ups and a shared rubric instead of informal impressions.

For teams turning this into an operating process, a downloadable scorecard helps. So does starting with candidates who already have production depth. ThirstySprout is one option for teams that want vetted senior AI talent and a more structured hiring process. The goal is not to outsource judgment. The goal is to spend your interview time verifying the right things.

Your next strong hire does not need perfect answers across all ten questions. They need a pattern of sound judgment, credible ownership, and the ability to turn technical work into business progress. That is the person you want when the model drifts, the launch date slips, or the roadmap changes mid-quarter.

If you’re hiring senior AI engineers, MLOps talent, or a remote ML team, ThirstySprout can help you start with vetted candidates who’ve shipped production systems. You can review sample profiles, tighten your interview scorecard, and move from screening to serious evaluation faster.
