TL;DR: How to Run a Better Phone Screen

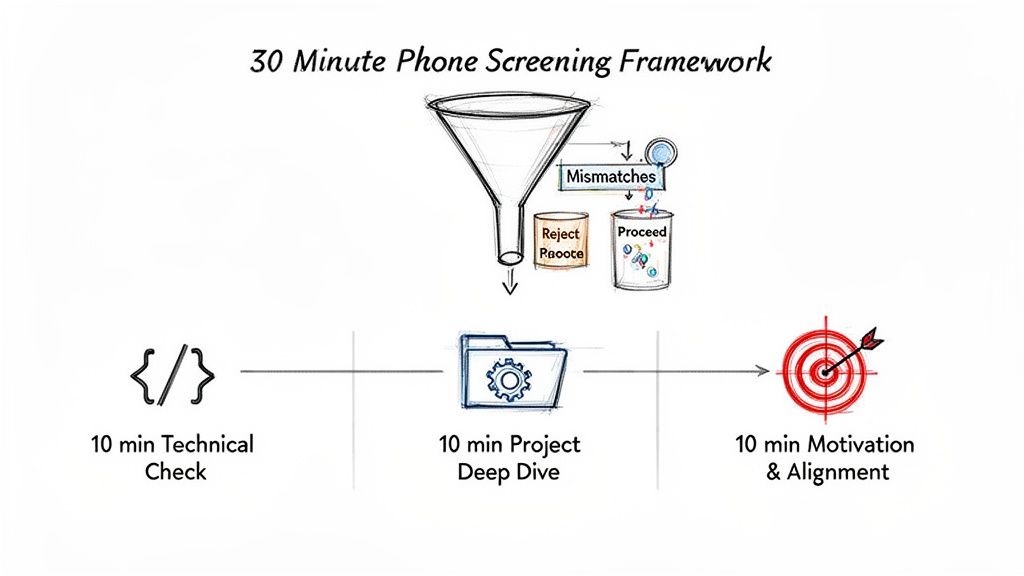

- Structure is key: Use a 30-minute, three-part framework: 10 minutes for technical fundamentals, 10 for a project deep-dive, and 10 for motivation.

- Ask practical questions: Ditch brainteasers. Ask role-specific questions that test real-world problem-solving, like how they would debug a RAG (Retrieval-Augmented Generation) system or handle model drift.

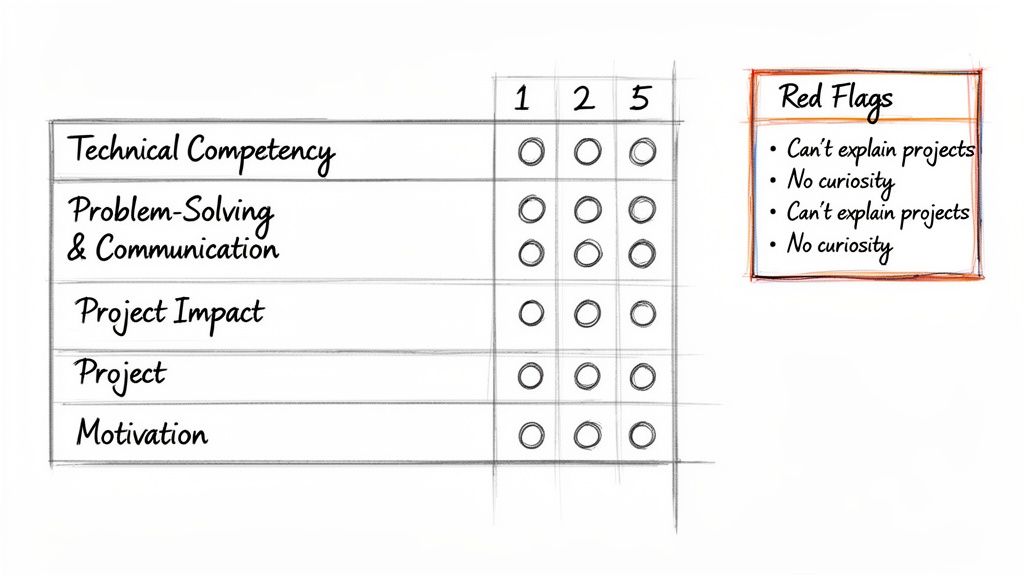

- Use a scorecard: Replace "gut feel" with a simple 1–5 rating system across four pillars: Technical Competency, Problem-Solving, Project Impact, and Motivation. Scoring every candidate against the same criteria makes decisions fairer and easier to compare.

- Focus on business impact: A strong candidate can connect their technical work to a business outcome, such as reducing costs or improving user engagement. If they can't, it's a red flag.

- Recommended Action: Implement the scorecard and question templates from this guide in your next hiring round to reduce senior engineer interview time by up to 50% and hire faster.

Who This Guide Is For

This guide is for technical leaders who need to hire AI talent effectively and quickly.

- CTO / Head of Engineering: You need to protect your senior engineers' time and ensure only high-quality candidates reach the final rounds.

- Hiring Manager / Team Lead: You are on the front lines, responsible for running the screen and making the critical "go/no-go" decision.

- Talent Ops / Recruiter: You partner with engineering to build a repeatable, fair, and efficient hiring process that attracts top AI experts.

It's designed for operators who need a practical system they can implement today to improve hiring outcomes within weeks.

The 30-Minute Framework for an Effective AI Phone Screen

A bad phone screen is a rambling, 30-minute chat that leaves you with a vague "gut feeling." A great one is a sharp diagnostic tool that saves your team from wasting dozens of hours on unqualified candidates. For roles like AI Engineer or MLOps specialist, a structured phone screen is the most effective gate in your hiring process.

This framework moves beyond generic questions to systematically evaluate the three things that matter at this stage: core technical knowledge, real-world project experience, and professional motivation. A standardized screen can reduce the time senior engineers spend in interviews by up to 50%, freeing them to focus on product development and accelerating your time-to-hire.

Part 1: The Foundational Technical Check (10 Minutes)

The goal of the first 10 minutes is to verify core, role-specific knowledge. This isn't about abstract algorithm puzzles; it's a quick reality check to confirm the candidate's resume isn't just a list of keywords.

A strong answer is quick, accurate, and confident. A weak answer fumbles basic terms or recites a textbook definition without showing how the concept applies in practice.

Part 2: The Practical Project Deep-Dive (10 Minutes)

Here, you move past resume bullet points to dig into their real-world problem-solving ability. Ask the candidate to pick one challenging project from their resume and walk you through it.

Guide the conversation with specific follow-up questions:

- "What was the business problem you were solving?"

- "What was a key technical trade-off you had to make and why?"

- "What was your specific contribution versus the rest of the team's?"

- "How did you measure success? What were the results?"

Strong candidates can clearly explain the "why" behind their technical decisions and connect their work to business outcomes. A candidate who cannot describe their own contribution in detail is a major red flag.

Part 3: The Motivation and Alignment Check (10 Minutes)

The final 10 minutes are for gauging motivation and cultural alignment. Top AI talent has options; you need to find out if they want this job, not just any job.

Ask direct questions like, "What about this role specifically caught your eye?" or "Looking at the job description, what seems most exciting to you?" Their answer reveals whether they've done their homework and can connect their career goals to your team's mission. A structured call works like any well-designed system; to see similar principles applied to automated communication, it's worth understanding how AI phone answering works.

Practical Example 1: Phone Screen Questions for Key AI Roles

A phone screen isn't about stumping a candidate. It’s about asking targeted questions that reveal whether they have actually done the work they claim. Generic questions waste time; role-specific questions that probe for real-world experience are the fastest way to distinguish between a theorist and a practitioner.

A well-structured screen should touch on a few key areas to give you a complete picture of the candidate.

Questions for an LLM Engineer

Your goal is to test their practical experience with common challenges like Retrieval-Augmented Generation (RAG) and model optimization.

Question: "A RAG system you built for customer support is starting to hallucinate answers. What are the first three things you investigate to fix it?"

- What to Look For: A weak answer is just "fine-tune the model." A strong answer addresses the entire system. They should mention practical steps like improving the retrieval component (e.g., better chunking, hybrid search), enhancing the quality of source documents, or adding a re-ranking layer.
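To make "hybrid search" concrete for interviewers, here is a minimal sketch of one common way to merge a keyword ranking with a vector ranking: reciprocal rank fusion. The function name, the document IDs, and the two example rankings are hypothetical, and `k=60` is only the conventional default for this technique. A candidate may reasonably describe a different fusion or re-ranking method.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists of document IDs into one ranking.

    Each document earns 1 / (k + rank) from every list it appears in,
    so documents ranked well by several retrievers rise to the top.
    """
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Sort by descending score; break ties alphabetically for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results for one support query from two retrievers.
keyword_hits = ["doc_refunds", "doc_shipping", "doc_returns"]
vector_hits = ["doc_returns", "doc_refunds", "doc_warranty"]

fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
print(fused)
```

In this toy example, the two documents that both retrievers found end up ahead of the documents that only one retriever found, which is the behavior a strong answer should be able to explain.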

Question: "How would you decide between two different embedding models for a new semantic search feature?"

- What to Look For: A focus on task-specific metrics. A strong candidate will talk about building a "golden set" of queries and expected results for benchmarking and evaluating models using metrics like Mean Reciprocal Rank (MRR) or nDCG (normalized Discounted Cumulative Gain).
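As a reference point for what a strong answer describes, the sketch below scores two embedding models against a tiny golden set using MRR and nDCG. The queries, document IDs, relevance grades, and model outputs are all invented for illustration, and the DCG here uses linear gains, which is one of two common variants.

```python
import math


def reciprocal_rank(ranked_ids, relevance):
    """1/rank of the first result with relevance > 0, or 0.0 if none appears."""
    for rank, doc_id in enumerate(ranked_ids, start=1):
        if relevance.get(doc_id, 0) > 0:
            return 1.0 / rank
    return 0.0


def dcg(gains):
    """Discounted cumulative gain: linear gains with a log2 position discount."""
    return sum(gain / math.log2(pos + 2) for pos, gain in enumerate(gains))


def ndcg_at_k(ranked_ids, relevance, k):
    """DCG of the top-k results divided by the DCG of an ideal ordering."""
    actual = dcg([relevance.get(doc_id, 0) for doc_id in ranked_ids[:k]])
    ideal = dcg(sorted(relevance.values(), reverse=True)[:k])
    return actual / ideal if ideal > 0 else 0.0


def evaluate(results, golden_set, k=3):
    """Average MRR and nDCG@k over every query in the golden set."""
    rr = [reciprocal_rank(results[q], rel) for q, rel in golden_set.items()]
    nd = [ndcg_at_k(results[q], rel, k) for q, rel in golden_set.items()]
    return {"mrr": sum(rr) / len(rr), "ndcg": sum(nd) / len(nd)}


# Hypothetical golden set: query -> {doc_id: graded relevance}.
golden_set = {
    "reset password": {"doc_7": 3, "doc_2": 1},
    "refund policy": {"doc_4": 2},
}

# Hypothetical top-3 results returned by each embedding model.
model_a = {
    "reset password": ["doc_7", "doc_9", "doc_2"],
    "refund policy": ["doc_1", "doc_4", "doc_3"],
}
model_b = {
    "reset password": ["doc_2", "doc_7", "doc_9"],
    "refund policy": ["doc_4", "doc_1", "doc_3"],
}

scores_a = evaluate(model_a, golden_set, k=3)
scores_b = evaluate(model_b, golden_set, k=3)
print("model A:", scores_a)  # MRR is 0.75
print("model B:", scores_b)  # MRR is 1.0
```

Model B wins on both metrics here, even though Model A ranks the single best document first for one query. A strong candidate should be able to explain that kind of trade-off rather than quote one number.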

Question: "Walk me through setting up a CI/CD pipeline for a new machine learning model. What are the key stages and your preferred tools?"

- What to Look For: They should break it down into stages: code linting/testing (CI), model training/validation, packaging (e.g., Docker), and deployment (CD). They must name specific tools like Jenkins, GitHub Actions, DVC for data versioning, or MLflow for experiment tracking, and justify their choices.

Question: "A deployed model's performance is degrading over time. How do you diagnose and handle this concept drift?"

- What to Look For: The best answers focus on monitoring. They'll talk about setting up alerts on data distribution shifts and using tools like Prometheus or Grafana to track performance metrics and trigger automated retraining pipelines.

Question: "You have a high-volume stream of raw JSON events from a Kafka topic. Describe the pipeline you'd build to process this data and load it into a structured table in Snowflake or BigQuery."

- What to Look For: A great answer will mention a stream processing framework like Spark Streaming or Flink. They should also cover critical details like handling schema evolution, routing malformed records to a dead-letter queue, and implementing data quality checks.

Question: "Explain the difference between a star and a snowflake schema. When would you use one over the other?"

- What to Look For: A truly experienced engineer will focus on trade-offs. They'll know a star schema is simpler and faster for most queries, while a snowflake schema saves storage space but increases join complexity.

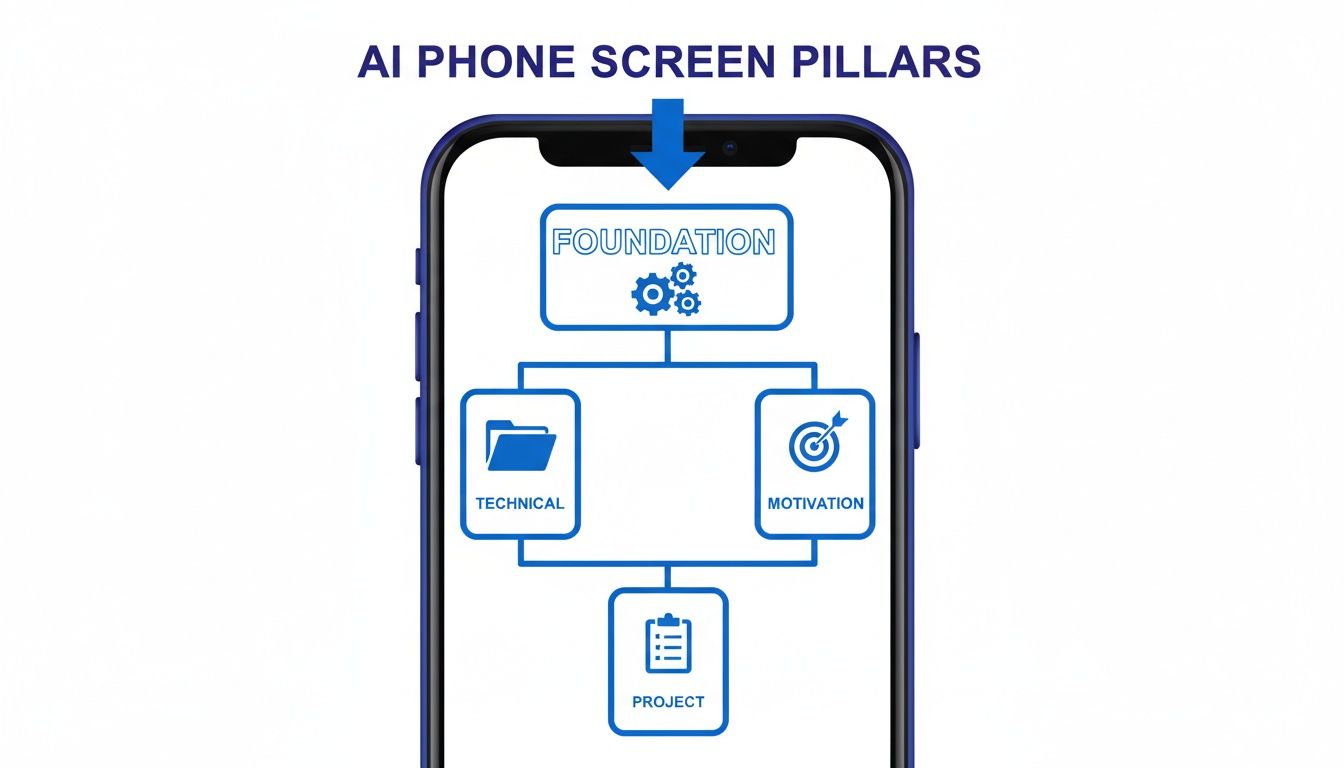

- Technical Competency: Do they have the core, non-negotiable skills for this role?

- Problem-Solving & Communication: Can they break down a complex problem and explain their thought process clearly?

- Project Impact: Can they connect their work to real business outcomes?

- Motivation & Alignment: Do they show genuine, specific interest in your company and this role?

- 1: Could not answer fundamental questions.

- 3: Solid, by-the-book understanding.

- 5: Exceptional depth, providing nuanced answers with trade-offs and concrete examples.

- ThirstySprout, "AI Engineer Interview Questions"

- ThirstySprout, "Behavioral Interview Questions for Software Engineers"

- Ntrvsta, "AI Phone Screening vs. Traditional Interviews: What the Data Says for 2026"

- RingDNA, "How Does AI Phone Answering Work?"

- Recepta, "Hiring an Inbound Sales Representative: The Full Guide"

Questions for an MLOps Engineer

An MLOps screen should feel like a tour of their toolbox. Test for hands-on experience with automation and the CI/CD (Continuous Integration/Continuous Deployment) lifecycle for models.

Questions for a Data Engineer

For Data Engineers, focus on their ability to build scalable, reliable data pipelines.

For more role-specific questions, see our guide to AI engineer interview questions.

Practical Example 2: The Phone Screen Scorecard

The biggest trap in phone screening is relying on "gut feeling." Subjective feedback like "they seemed smart" leads to inconsistent, biased hiring. To hire top AI talent repeatably, you need to replace impressions with data. A standardized scorecard is the best tool for this.

It forces every interviewer to assess candidates against the same criteria, providing a structured reason for every "yes" or "no."

Scorecard Template (Downloadable Checklist)

Our scorecards are built on four essential pillars, rated on a simple 1–5 scale.

Define what the ratings mean for your team. For example:

Phone Screen Scorecard Template

➡️ Download the Phone Screen Scorecard Template (Notion)

Using this rubric turns the debrief from "Did we like them?" to "Where did they score highly, and where are the gaps?" It creates a clear, objective record that helps you refine your candidate vetting engine over time.

Deep Dive: Common Pitfalls and How to Avoid Them

Even with a great framework, poor execution can derail the process. The most common mistakes are human errors that introduce bias, waste time, and create a negative candidate experience.

Pitfall 1: The Interviewer Monologue

The Problem: The interviewer dominates the conversation, spending 20 minutes pitching the company and leaving the candidate little time to speak.

The Fix: Apply the 80/20 rule: the candidate should talk for at least 80% of the time. Give a one-sentence overview, then ask, "From the job description, what are you most curious about?" This turns your pitch into a listening opportunity.

Pitfall 2: Asking Generic Questions

The Problem: Using tired questions like, "What's your greatest strength?" invites rehearsed answers that reveal nothing about a candidate's ability to solve real problems.

The Fix: Use behavioral questions that require specific examples. Instead of "What are your weaknesses?", ask "Tell me about a time a project failed. What was your role in the failure, and what did you learn?" For more examples, see our guide on behavioral interview questions for engineers.

Pitfall 3: Losing Control of the Clock

The Problem: A 30-minute screen that drags on to 45 minutes disrespects the candidate's time and signals disorganization.

The Fix: Politely interject to keep the conversation on track. Use a simple phrase like, "That's a great overview. In the interest of time, let's jump to the technical trade-offs you made." This is critical for efficiency; recent data on AI versus traditional phone screening shows a strong industry push toward shorter, more focused interviews.

Pitfall 4: Introducing Unconscious Bias

The Problem: Favoring candidates who feel familiar ("gut feel") or come from a similar background sabotages objective hiring.

The Fix: Rely on structure. Stick to the scorecard, ask every candidate the same core questions, and base your decision entirely on the evidence you gathered. The only question that matters is: "Did the candidate provide concrete proof of the skills we need?"

What to Do Next: Your 3-Step Action Plan

Reading about a better process is easy. Putting it into practice is what separates great hiring teams from the rest.

Step 1: Standardize Your Process (Today)

Download and adapt our phone screen scorecard template. Ensure every interviewer for a given role uses the exact same rubric. This is the foundation for a fair, data-driven process.

Step 2: Train Your Team (This Week)

Schedule a 30-minute alignment session with all hiring managers and phone screeners. Walk through this guide, review the scorecard, and calibrate on what a "3" vs. a "5" rating looks like. This is non-negotiable for consistency.

Step 3: Implement and Measure (Next Hire)

Use the new structured process for your next open role. After 5–10 screens, review the data: What's your pass-through rate? Is time-to-hire improving? Use these metrics to refine your questions and continuously improve your hiring engine. The principles apply broadly—this guide on Hiring an Inbound Sales Representative shows how a structured process benefits all roles.

Ready to skip the screening headaches? Start a Pilot with ThirstySprout and interview vetted AI experts in days, not months. We handle the screening so you can focus on building.

References and Further Reading
