You're hiring for a remote AI team. Model training runs are expensive, data issues hide until late, and one bad coordination layer can burn a sprint without anyone noticing until review. In that environment, Scrum Master interview questions shouldn't be generic. They should expose whether the candidate can remove friction, tighten feedback loops, and keep distributed specialists aligned without turning Scrum into theater.

That bar is higher now than it used to be. Modern Scrum hiring still rests on the framework's basics. The official Scrum Guide defines 3 accountabilities, 5 events, and 3 artifacts, so candidates still need to know the fundamentals cold. But hiring has shifted beyond terminology. Coursera's Scrum Master interview guide notes that interviews commonly mix universal, definitional, behavioral, and situational questions, and it recommends the STAR method because employers want theory plus judgment under pressure.

For a high-stakes remote AI team, that means your interview kit should do three things at once. Test framework fluency. Test coaching and conflict handling. Test whether the person can run async, cross-functional delivery where experiments fail, blockers surface late, and decisions must be documented well enough for people in other time zones to execute without confusion.

Use the questions below as a practical hiring kit. Each one includes what a strong answer sounds like, what a weak answer usually reveals, and how I'd score it in a real interview loop.

1. Tell me about a time you removed blockers for your team. What was the situation and how did you handle it?

This is the first question I'd ask a Scrum Master candidate for an AI team. If they can't explain blocker removal clearly, they're probably operating as a meeting facilitator, not a delivery enabler.

A strong answer starts with the blocker in concrete terms, not vague language like “team inefficiency.” I want to hear what was stuck, who was affected, what the Scrum Master changed, and how they followed through. Scrum.org's guidance on Scrum Master interview questions puts real emphasis on answers that show coaching impact, trust-building, and specific actions with outcomes. That's exactly right.

What good sounds like

A solid candidate might describe a GPU allocation bottleneck that repeatedly stalled model training. They didn't just escalate a complaint. They mapped the queue, involved the MLOps engineer, created a fair-share request process, and made blocked work visible in the sprint so the team could switch to data prep or evaluation tasks instead of waiting blindly.

Another good answer is less technical but equally strong. For example, a team keeps missing deployments because model artifacts and registry versioning are inconsistent. The Scrum Master partners with engineering to define a lightweight release checklist, gets agreement on naming conventions, and makes handoff issues visible during review and retro.

Practical rule: Strong candidates remove the immediate blocker and reduce the chance of recurrence.

Red flags and scorecard

Weak answers sound heroic but shallow. “I jumped in and fixed it” often means they solved someone else's problem for them. “I escalated to management” can be valid, but only if they explain why escalation was necessary and what system changed afterward.

Use this scorecard in the interview:

- 1 out of 5, reactive only: Solves symptoms, no root cause, little team involvement.

- 3 out of 5, competent: Identifies cause, coordinates across people, follows through.

- 5 out of 5, force multiplier: Removes blocker, improves workflow, teaches the team how to surface similar issues earlier.

Ask two follow-ups. What would you do differently now? How did the team respond after the change? Those questions usually separate process talk from real operational judgment.

2. How do you handle a situation where a team member disagrees with a decision you made as Scrum Master?

Senior engineers, especially in AI and infrastructure work, will challenge process decisions. That's healthy. A Scrum Master who treats disagreement as insubordination is a bad fit for a strong technical team.

The right answer should show facilitation, not command. I want to hear that the candidate first made the disagreement explicit, then examined whether the decision itself was wrong, poorly timed, or poorly communicated. Good Scrum Masters don't defend their authority. They protect the team's ability to make better decisions together.

A strong example

Suppose a senior machine learning engineer pushes back on short sprint cycles because model training and evaluation need longer feedback loops. A good candidate doesn't reply with “Scrum says two weeks.” They ask what work is becoming distorted, what uncertainty is being hidden, and whether the team is mixing research and implementation inside the same commitment.

Then they test an adjustment. Maybe they keep the sprint length but split exploratory work into clear spikes and define a tighter “done” standard for engineering tasks. Or they move some status exchange async and reserve live time for decision-making.

What to listen for

- Ownership language: “I realized I hadn't brought the team into the trade-off” is stronger than blame.

- Decision hygiene: In remote teams, good candidates document the decision and rationale.

- Comfort with being wrong: If they never changed their mind, they're probably managing optics.

If a candidate says, “I explained my reasoning and they accepted it,” keep digging. The real question is whether the team got to a better outcome.

A weak answer usually reveals one of two failure modes. Either they become overly passive and let the loudest engineer override team norms, or they become rigid and hide behind the framework. Neither works in a distributed team of specialists. You need someone who can absorb challenge, improve the process, and keep trust intact.

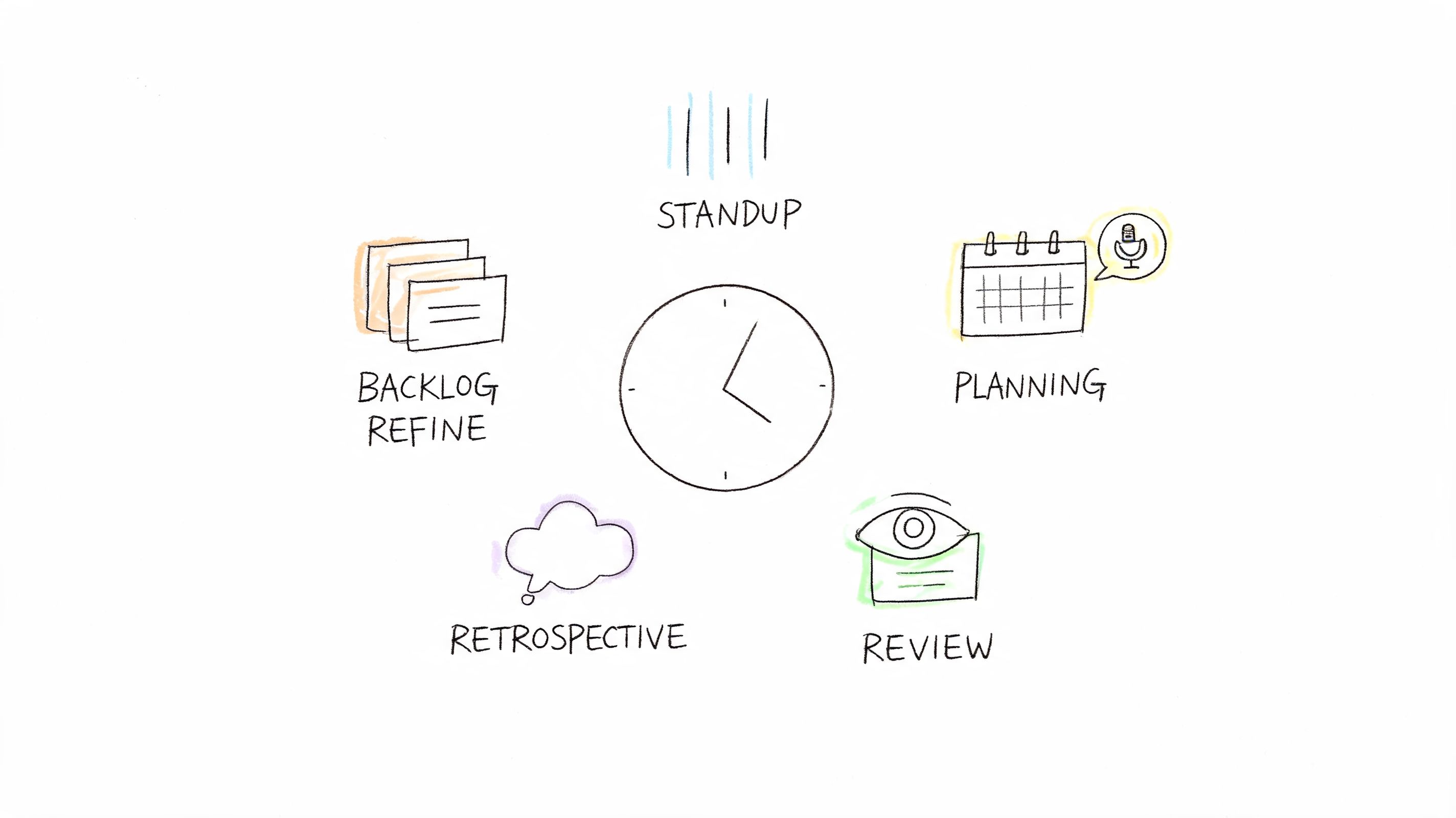

3. Describe your experience with Agile ceremonies. Which ceremonies do you find most valuable and which would you change?

This question looks basic, but it's one of the best tests for dogmatism. If the candidate ranks ceremonies as if one “matters most” in all contexts, they're usually too rigid. A better answer shows that each event serves a different purpose and that the format should adapt to the team's work.

One useful benchmark comes from a modern Scrum interview guide from Turing, which notes that current interviews often include velocity, estimation, risk management, scaling, and the standard Daily Scrum prompts about what was done yesterday, what will be done today, and what blockers exist. That reflects the reality I see in hiring. Teams want operational thinking, not ceremony trivia.

What good adaptation looks like

For a remote AI team, a candidate might say Daily Scrum is useful only if it exposes dependencies and blockers early. If it becomes rote status reporting, they'd move updates into Slack or Notion and use live time for coordination. That's a strong answer.

Another strong answer involves Sprint Review. Instead of forcing everyone into a long live demo, they might use recorded walkthroughs for model results, pipeline changes, or user-facing impact, then collect async comments before a short discussion. If you want a broader operating context, this scrum methodology guide for software engineering leaders is a helpful framing resource.

Strong versus weak answer

- Strong: Names the purpose of each event, then explains when and how to adapt it.

- Weak: Talks about “keeping ceremonies on schedule” as the primary success condition.

- Very weak: Removes events casually without replacing their underlying function.

I also ask one extra question here. Tell me about a ceremony you eliminated or redesigned. Why? If they can't answer that, they may know Scrum vocabulary but not how to make it work in an expensive, meeting-sensitive engineering environment.

4. Walk me through how you would estimate a sprint with a team building an ML model training pipeline. What challenges would you anticipate?

If they estimate machine learning work exactly like CRUD feature work, that's a problem.

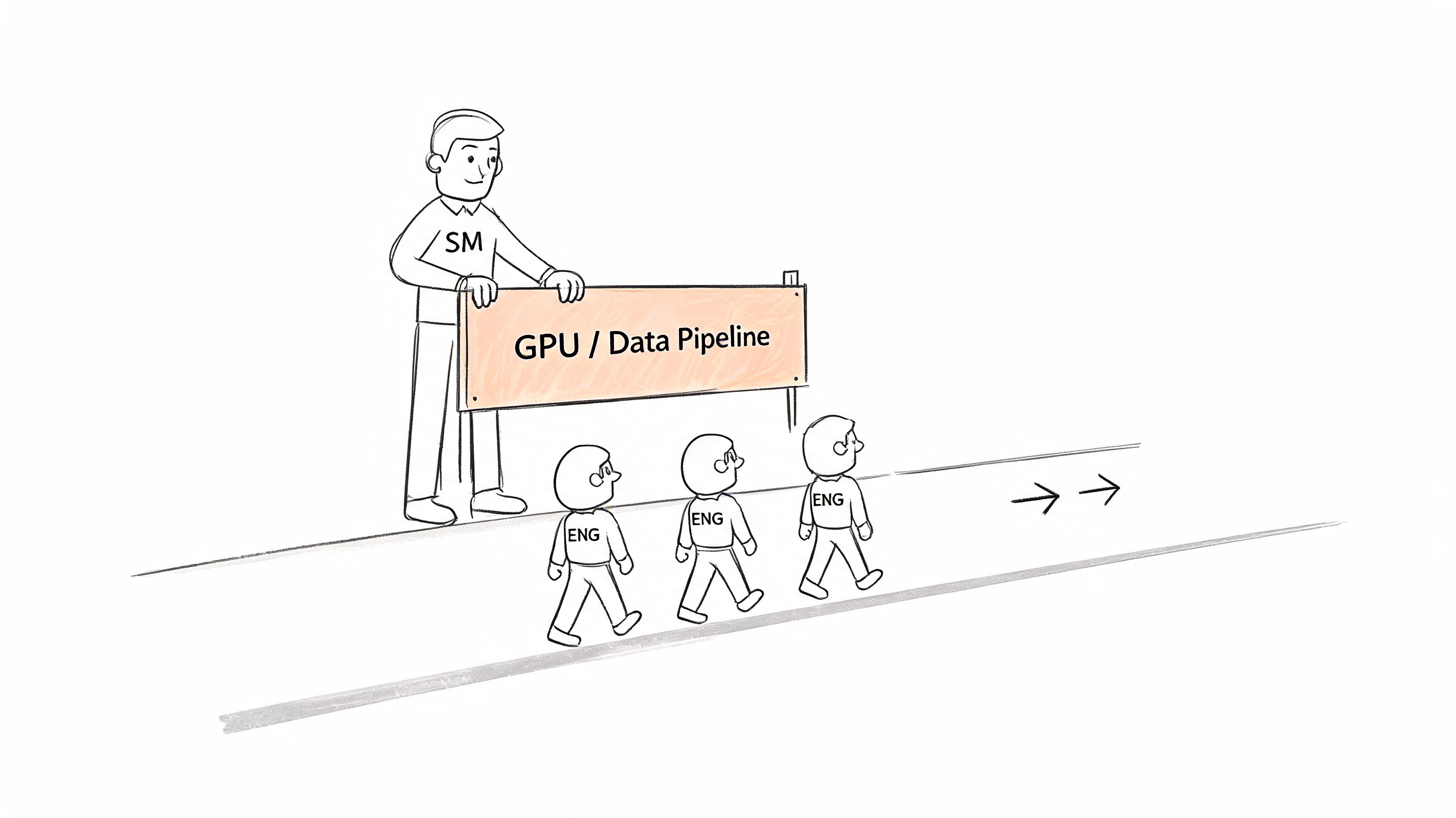

ML teams deal with uncertainty from data quality, experiment design, compute availability, and evaluation criteria. I'm not looking for the Scrum Master to be an ML engineer. I am looking for evidence that they understand where predictability breaks down and how to structure planning without pretending uncertainty doesn't exist.

A practical answer pattern

The best candidates break the sprint into work types. Research spikes. Data cleanup. Pipeline hardening. Model evaluation. Production integration. They'll usually say that exploratory work needs explicit boundaries and learning goals, while implementation work should have tighter acceptance criteria.

A good mini-case sounds like this. The team plans a training pipeline improvement, but GPU access becomes constrained mid-sprint. Instead of letting the sprint collapse, the Scrum Master helps the team reorder work toward CPU-bound preprocessing, observability, or test automation while making the infrastructure dependency visible to stakeholders.

What to probe

Ask these follow-ups:

- Definition of done: How would you define done for a model-related story?

- Failed experiments: What happens if the experiment doesn't produce the hoped-for result?

- Reforecasting: When do you adjust the sprint plan versus hold the commitment?

Good Scrum Masters treat failed experiments as information, not automatic team failure.

Weak answers usually overpromise certainty. They talk as if every task can be estimated cleanly upfront. Strong answers acknowledge uncertainty, isolate it, and stop it from contaminating everything else in the sprint. That's the difference between planning and fantasy.

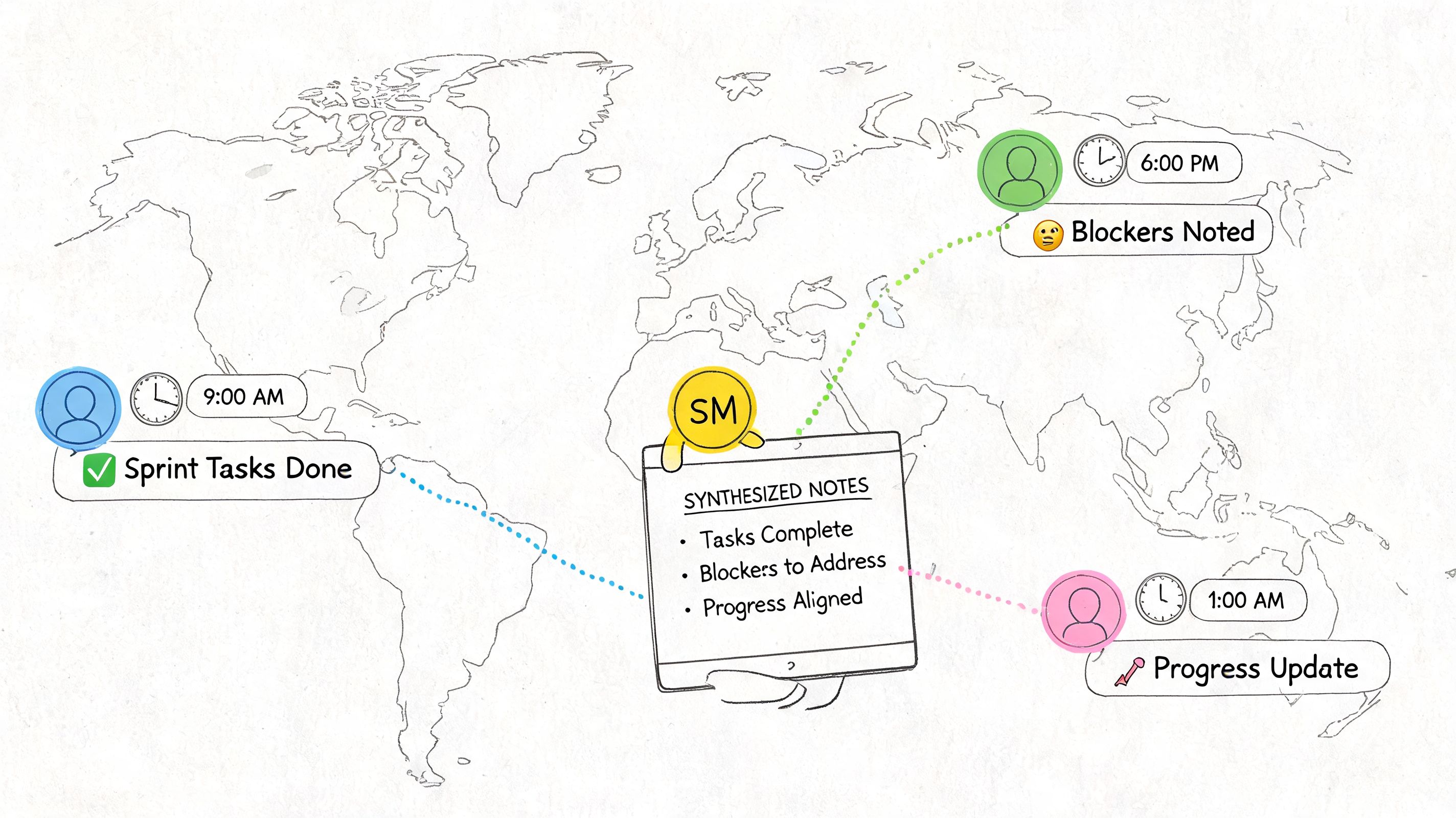

5. Tell me about your experience with distributed or remote teams. What practices have you found effective for async communication?

This question has become much more important than many interview loops admit. Remote and hybrid work isn't a side condition anymore. Atlassian's Teamwork Lab coverage in this Scrum interview article highlights that 62% of respondents work in distributed teams at least some of the time, and 48% say they are more productive in distributed settings than in office-only settings. If your Scrum Master can't operate async, they'll create invisible drag.

What a credible remote answer includes

I want specifics. Slack, Notion, Linear, Jira, Loom, Zoom transcripts, recorded demos, documented decisions, and response windows. If they say “communication is key,” that tells you nothing.

A strong candidate might describe async standups with end-of-day updates, then a short written synthesis that flags blockers, ownership, and requests for help. Another might explain how they use short recorded walkthroughs for sprint reviews, then collect feedback before a live discussion. For teams that rely on meeting recordings, practical tooling matters, and workflows like exporting Zoom transcripts can support searchable decisions and follow-up.

What weak looks like

- Meeting-heavy remote process: They recreate office habits on video.

- No written decisions: Important context lives only in someone's head.

- Async without closure: Threads stay open and no one knows who decided what.

The best remote Scrum Masters know that async communication needs structure. Decision owner. Deadline for input. Place of record. Next step. Without those four pieces, “async-first” usually becomes “ambiguous forever.”
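To make those four pieces concrete, here is a minimal Python sketch of a check a team could run against an open async thread. The `AsyncDecision` class, its field names, and the example values are all hypothetical, chosen only to mirror the four pieces named above; they do not come from any particular tool.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class AsyncDecision:
    """One async decision thread. All field names are illustrative assumptions."""
    topic: str
    decision_owner: Optional[str] = None    # who makes the final call
    input_deadline: Optional[date] = None   # last day to give input
    place_of_record: Optional[str] = None   # where the outcome is written down
    next_step: Optional[str] = None         # what happens once it is decided


def missing_pieces(decision: AsyncDecision) -> list[str]:
    """Return the names of the four required pieces that are still empty."""
    required = ("decision_owner", "input_deadline", "place_of_record", "next_step")
    return [name for name in required if not getattr(decision, name)]


thread = AsyncDecision(
    topic="Move daily status updates to written check-ins",
    decision_owner="scrum_master",
    input_deadline=date(2025, 1, 15),
)
print(missing_pieces(thread))  # ['place_of_record', 'next_step']
```

A candidate doesn't need to write code like this, but a good answer usually describes an equivalent habit: a thread isn't considered closed until none of the four pieces is missing.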

6. Describe a time when you had to influence a decision you disagreed with at the organizational level. How did you handle it?

This separates team coordinators from real operators.

In many companies, the Scrum Master has little formal authority. They still have to protect the team from harmful commitments, bad sequencing, or pressure that turns planning into fiction. The right answer here isn't rebellion. It's disciplined influence.

A good answer has tension in it

Maybe leadership wanted an aggressive rollout date for a new AI feature while the training and monitoring path was still unstable. A strong candidate explains how they framed the risk in terms leadership understands. Delivery confidence, support burden, rollback complexity, or exposure to customer-visible failures. Then they show what compromise they negotiated. A phased release, narrower initial scope, additional observability, or a clearer dependency map.

Another strong example is budget pressure. If cloud spending threatens experimental work, a useful Scrum Master doesn't just complain about cost cuts. They help surface waste, sharpen priority decisions, and preserve space for the highest-value work.

Score the method, not just the result

- Strong: Builds a case, involves the right people, seeks a workable path.

- Mixed: Disagrees but mostly vents.

- Weak: Either caves immediately or turns the conflict into a personal fight.

Ask whether they were ultimately right. Good candidates can answer that objectively. Sometimes leadership made the better call. What matters is whether the candidate improved decision quality and protected team trust while the disagreement played out.

7. How do you measure and improve sprint velocity or team productivity? What metrics do you track?

This is one of the most dangerous Scrum Master interview questions because it invites bad management habits. If the candidate treats velocity as a productivity target, that's a red flag.

The healthier answer is that velocity is mainly a planning signal. It helps a team forecast based on what they've historically completed. That matches the Turing guide mentioned earlier, which describes velocity as using previously completed work to estimate what a team can finish in future sprints. Strong candidates keep that distinction clear.
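As a rough illustration of velocity used as a forecast rather than a target, the sketch below turns recent sprint history into a planning range. The function name, the three-sprint window, and the sample numbers are assumptions made for this example, not a standard formula.

```python
from statistics import mean


def forecast_range(completed_points: list[int], window: int = 3) -> tuple[int, float, int]:
    """Return (low, average, high) over the most recent `window` sprints."""
    if not completed_points:
        raise ValueError("need at least one completed sprint")
    recent = completed_points[-window:]
    return min(recent), mean(recent), max(recent)


history = [21, 18, 25, 19, 23]  # made-up completed story points per sprint
low, avg, high = forecast_range(history)
print(f"Plan for roughly {low}-{high} points (recent average {avg:.1f})")
```

Reporting a range instead of a single number keeps the conversation on planning confidence. Nothing in it ranks people or rewards a higher figure, which is the distinction a strong candidate should articulate.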

What a balanced answer sounds like

A capable Scrum Master might track velocity trends, cycle time, blocked work, carryover, defect patterns, and whether retro actions get completed. For an AI team, they may also separate exploratory work from implementation work so the team doesn't punish learning-heavy tasks.

That distinction matters. A negative experiment can still be a successful sprint outcome if it invalidates a bad path early. The wrong metric system teaches the team to hide uncertainty.

Metrics should improve planning and learning. They shouldn't become a scoreboard that pressures the team into gaming estimates.

Red flags to watch

- Velocity as performance ranking

- No mention of quality

- No discussion of metric limits

- No differentiation between research work and delivery work

One useful follow-up is simple. What metrics do you avoid and why? The best candidates usually have a mature answer. They know some numbers are easy to collect and still harmful to optimize against.

8. Tell me about a time when your team failed to meet a sprint commitment. How did you handle it and what did you learn?

You want the candidate who gets calmer when a sprint fails.

Missed commitments happen for good reasons and bad reasons. In AI work, uncertainty is part of the job. The true test is whether the Scrum Master can turn a miss into learning without normalizing sloppy planning or blaming individuals.

What a strong answer includes

The best answers identify the miss precisely. Maybe data validation took longer than expected. Maybe a hidden dependency in the deployment path surfaced late. Maybe the team stuffed exploratory and execution work into one commitment and lost clarity.

Then the candidate explains what changed. They tightened backlog refinement, added a clearer entry criterion, separated research spikes from delivery stories, or improved stakeholder communication when risk rose mid-sprint.

A mini-case worth hearing

A team misses a model deployment because training succeeds but evaluation data quality collapses late. The Scrum Master leads a retrospective that doesn't turn into finger-pointing. The team decides to make data checks visible earlier, pull uncertainty forward in planning, and communicate revised expectations before the end of the sprint rather than during review.

What I don't want to hear is “we worked harder next sprint.” That's not process improvement. That's debt with a motivational speech attached.

Also listen for whether the candidate follows up later. If a fix only appears in one retro and never shows up again, it wasn't really adopted.

9. How do you handle a talented engineer who doesn't fit the team culture or is difficult to work with?

Many otherwise capable Scrum Masters fail at this point. They either protect the high performer at the team's expense or overcorrect and make the issue moral rather than operational.

A useful answer starts with direct observation. What behavior is causing damage? Interrupting others in planning. Refusing to document handoffs. Dominating architecture choices. Withholding context that others need. “Bad culture fit” is too vague to coach against.

What strong handling looks like

A strong Scrum Master first has a direct conversation. Not vague feedback. Specific examples, impact on the team, and a clear expectation for change. Then they look for root cause. Some difficult behavior comes from stress, role confusion, or feeling unheard. Some of it is just poor collaboration discipline.

A realistic AI-team case is the brilliant ML engineer who dismisses product constraints and won't document assumptions. The Scrum Master might involve them earlier in technical trade-off discussions while also setting firm expectations around documentation and respectful participation.

What not to tolerate

- Knowledge hoarding

- Contempt for less specialized teammates

- Repeated refusal to align on working agreements

If the candidate never escalates or never sets firm boundaries, that's a problem. Support comes first. Clarity comes with it. If behavior still harms the team, a good Scrum Master knows when to involve management or recommend ending the arrangement.

10. What is your philosophy on technical debt and how do you facilitate discussions about it with non-technical stakeholders?

In AI systems, technical debt isn't just messy code. It can be stale data pipelines, brittle model handoffs, weak monitoring, poor prompt versioning, or undocumented evaluation criteria. If the Scrum Master can't translate that into business language, debt work will keep losing to feature pressure.

The answer should be business-facing

A strong candidate explains debt in terms leaders can prioritize. Delivery risk. Incident likelihood. Slower experimentation. Delayed releases. Higher support burden. They don't dump jargon on a product leader and hope it lands.

The practical pattern I trust most is continuous paydown tied to visible consequences. Not a dramatic “debt sprint” every time the system gets painful. Instead, regular prioritization with clear alternatives. If we delay this cleanup, what becomes slower or riskier? If we address it now, what gets easier next quarter?

Strong and weak examples

A strong answer might describe a discussion where model staleness and data pipeline fragility were framed as reliability and time-to-market issues, not “infra concerns.” Another might involve helping product and engineering compare a near-term feature against cleanup needed to make future experiments faster and safer.

A weak answer usually sounds ideological. “We should always reserve debt capacity” is too abstract. I'd rather hear how they built agreement around a specific trade-off and what information they used to make that discussion concrete.

If they can facilitate this conversation well, they're not just running Scrum. They're helping the company make better bets.

Top 10 Scrum Master Interview Questions Comparison

| Question / Topic | 🔄 Implementation complexity | ⚡ Resource requirements | ⭐ Expected outcomes | 📊 Ideal use cases | 💡 Key advantages |
|---|---|---|---|---|---|
| Tell me about a time you removed blockers for your team | Medium, requires technical and stakeholder work | Medium‑High, infra access, stakeholder time | High (⭐⭐⭐⭐), increased velocity, fewer repeat blocks | Distributed AI teams facing GPU, data, or deployment bottlenecks | Creates systemic fixes; improves throughput; demonstrates advocacy |
| How do you handle a team member who disagrees with your decision? | Low‑Medium, facilitation and coaching | Low, 1:1s, documentation, time to revisit decision | High (⭐⭐⭐⭐), stronger psychological safety and buy‑in | Remote teams with senior engineers and peer decision making | Preserves cohesion; invites feedback; models humility |
| Describe your experience with Agile ceremonies | Medium, tailoring rituals to context | Medium, meeting time, async tooling, facilitation | High (⭐⭐⭐⭐), better meeting ROI and focus | Remote/async teams needing efficient rituals (standup, retro) | Saves engineer time; enables continuous improvement; context‑aware |
| Estimate a sprint for an ML training pipeline | High, uncertainty, research vs engineering | Medium‑High, compute, experimentation time, spikes | Moderate‑High (⭐⭐⭐), clearer planning, fewer surprises | ML model training, research‑heavy sprints | Acknowledges uncertainty; sets realistic 'done' criteria |
| Experience with distributed / remote teams | Medium, timezone coordination and async design | Low‑Medium, tools (Slack, Loom, Notion), documentation | High (⭐⭐⭐⭐), improved async collaboration and coverage | Global, timezone‑spread AI teams | Reduces blockers via async norms; improves onboarding; keeps records |
| Influence a decision you disagreed with at org level | High, politics, evidence gathering, negotiation | Medium, data collection, stakeholder meetings | Moderate (⭐⭐⭐), mitigations or policy change possible | Fractional/part‑time roles requiring influence without authority | Protects team capacity; negotiates phased or mitigated outcomes |
| Measure & improve sprint velocity / productivity | Medium, select and interpret meaningful metrics | Medium, tracking tools, analysis time, transparency | High (⭐⭐⭐⭐), better forecasting and value focus | Teams wanting data‑driven planning, especially AI projects | Informs tradeoffs; avoids vanity metrics; highlights quality issues |
| Team failed to meet a sprint commitment | Low‑Medium, root‑cause analysis and remediation | Low, retros, minor process changes, stakeholder comms | Moderate (⭐⭐⭐), learning leads to more reliable delivery | High‑uncertainty work (experiments, ML) where misses happen | Encourages learning; fixes systemic causes; maintains trust |
| Talented engineer who doesn't fit team culture | High, behavior change and possible escalation | Medium, coaching, mediation, documentation | Moderate (⭐⭐⭐), either improved fit or controlled separation | Senior/autonomous AI engineers in small collaborative teams | Balances talent and team health; clarifies expectations; reduces siloing |
| Philosophy on technical debt & stakeholder discussions | Medium‑High, translate tech risk to business impact | Medium, metrics, ongoing paydown effort, communication | High (⭐⭐⭐⭐), reduced incidents, faster time‑to‑market | Organizations balancing feature delivery and long‑term maintainability | Aligns technical work with business value; enables continuous debt paydown |

Your Next Steps: From Questions to a Hire

If you use these Scrum Master interview questions one by one without a system, you'll still get inconsistent hiring decisions. The fix is simple. Standardize how you evaluate answers.

Start by turning these questions into a scorecard with four dimensions for every interview. Framework fluency. Situational judgment. Remote operating ability. Evidence of behavioral impact. Keep the scale simple so different interviewers can use it consistently. What matters is calibration, not complexity.
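One way to make that calibration visible is a small script that averages each dimension across interviewers and flags the ones where scores diverge. This is a sketch under stated assumptions: the 1 to 5 scale, the dimension keys, the `max_spread` threshold, and the sample panel are all illustrative choices, not a prescribed rubric.

```python
from statistics import mean

# The four dimensions named above, as hypothetical dictionary keys.
DIMENSIONS = (
    "framework_fluency",
    "situational_judgment",
    "remote_operating_ability",
    "behavioral_impact",
)


def summarize(scores_by_interviewer: dict[str, dict[str, int]], max_spread: int = 1):
    """Average each dimension and flag ones where interviewers disagree widely."""
    summary = {}
    for dim in DIMENSIONS:
        values = [scores[dim] for scores in scores_by_interviewer.values()]
        summary[dim] = {
            "average": round(mean(values), 2),
            # A spread above the threshold means the panel should talk before deciding.
            "needs_calibration": max(values) - min(values) > max_spread,
        }
    return summary


panel = {  # made-up scores on an assumed 1-5 scale
    "interviewer_a": {"framework_fluency": 4, "situational_judgment": 3,
                      "remote_operating_ability": 5, "behavioral_impact": 3},
    "interviewer_b": {"framework_fluency": 4, "situational_judgment": 5,
                      "remote_operating_ability": 4, "behavioral_impact": 3},
}
for dim, result in summarize(panel).items():
    print(dim, result)
```

In this made-up panel, only situational judgment gets flagged, because the two interviewers are two points apart. That is the dimension to discuss in the debrief before anyone argues about the overall hire decision.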

Next, make candidates prove they can operate in your environment. For a remote AI team, I'd add a practical exercise after the interview. Give them a short scenario. A delayed model release, a cross-time-zone blocker, a disagreement between product and engineering, or a sprint overloaded with research tasks. Ask for a written response and a short facilitation plan. That reveals far more than another round of generic conversation.

Then close the loop on evidence. Coursera's interview guidance, referenced earlier, is right to push candidates toward structured examples. During debrief, reject vague impressions like “seemed collaborative.” Replace them with observable signals. Did they identify root causes? Did they adapt Scrum to context without breaking its purpose? Did they show how they'd maintain trust and clarity in async work? Did they talk about outcomes, not just ceremonies?

I'd also keep one hiring principle front and center. Don't hire a Scrum Master just because they know the framework's vocabulary. The Scrum Guide structure still matters. Candidates should know the accountabilities, events, and artifacts. But in a high-stakes environment, baseline knowledge is only table stakes. You're hiring for judgment under ambiguity.

If you want to tighten your broader hiring loop, this guide to AI interview preparation for managers is a useful companion resource for structuring evidence-based interviews across technical roles.

If your team needs more than a question list, package this into a repeatable process: a scorecard, one practical async exercise, and a debrief rubric that rewards evidence over charisma. That's the difference between filling the role and improving delivery.

ThirstySprout can be one option if you're building remote AI teams and want hiring support around structured evaluation. The useful part isn't just access to candidates. It's reducing randomness in how you assess whether someone can help a distributed, experimental engineering team ship reliably.

If you're hiring a Scrum Master for a remote AI team, ThirstySprout can help you scope the role, standardize the interview loop, and connect with vetted AI talent that can work effectively across time zones. Start a pilot or see sample profiles if you want to move from interview questions to an actual hiring process.
